Running DeepSeek locally on Windows is possible, but choosing the right setup can mean the difference between a smooth experience and constant headaches. This independent guide (unofficial) answers whether you can run DeepSeek on Windows and outlines the most reliable paths. Keep in mind that results will vary based on your hardware (CPU/GPU) and drivers. Below, we cover everything from WSL2 vs native Windows trade-offs to using Ollama or llama.cpp for simplicity, plus GPU support differences, troubleshooting tips, and security notes.

Scope note:

Most practical “DeepSeek on Windows” setups use distilled checkpoints or quantized variants, rather than the full DeepSeek-R1 model. The original DeepSeek-R1 is an extremely large mixture-of-experts model designed primarily for large-scale infrastructure. For local Windows environments, users typically run distilled models (such as the DeepSeek-R1 distilled checkpoints) or quantized formats like GGUF through tools such as Ollama, llama.cpp, or Hugging Face Transformers. This guide focuses on those practical local deployment paths that are realistic on consumer hardware.

What this guide covers:

- WSL2 vs native Windows – which is the better environment for running DeepSeek

- When to use easy alternatives like Ollama or llama.cpp on Windows

- NVIDIA vs AMD GPU realities on Windows (CUDA and ROCm support)

- A troubleshooting cheat-sheet for common DeepSeek-on-Windows issues

- Privacy and security practices for running DeepSeek locally

Quick Answer: Can You Run DeepSeek on Windows?

Yes, you can run DeepSeek on Windows through several methods. In practice, there are three typical setup paths, each with its pros and cons:

- CPU-only (no WSL2): DeepSeek can run on pure CPU inside Windows without Linux at all. This works on any modern Windows PC, but it’s slow for large models. CPU-only mode is fine for smaller DeepSeek models or testing, but expect low token generation rates. Tools like LM Studio or simple CLI runners can load DeepSeek on Windows CPU directly. No special install is needed beyond the model and a runtime, making this the most compatible but slowest option.

- NVIDIA GPU (commonly used with WSL2): If you have an NVIDIA GPU, one of the most common ways to run DeepSeek on Windows is by using WSL2 (Windows Subsystem for Linux) with CUDA support. NVIDIA provides official support for CUDA on WSL, allowing Linux-based applications inside WSL2 to access the GPU using the same CUDA libraries used on native Linux systems. This makes it possible to run DeepSeek through frameworks such as PyTorch, Transformers, or optimized inference engines like vLLM within a Linux environment while still using a Windows host system. Because many AI and LLM tools are developed and tested primarily for Linux, WSL2 often provides better compatibility for these workflows. The setup requires enabling WSL2, installing a Linux distribution (such as Ubuntu), and installing the latest NVIDIA drivers that support CUDA in WSL. Once configured, GPU acceleration inside WSL2 can deliver performance close to native Linux for many machine learning workloads.

- AMD GPU (more variable support): Running DeepSeek with an AMD GPU on Windows is possible, but support is less predictable than with NVIDIA. AMD now provides official Windows support for selected hardware through its HIP SDK and PyTorch-on-Windows paths, but compatibility still depends heavily on the exact GPU, driver, and runtime. In practice, some users can run DeepSeek natively on Windows or through WSL2, while others may get more reliable results with CPU inference, quantized models, or a Linux-based setup.

Bottom line: You can run DeepSeek on Windows via CPU or GPU. CPU-only works universally (with patience), NVIDIA GPUs are typically the smoothest for acceleration (often under WSL2 for full compatibility), and AMD GPUs can work on Windows in supported configurations, but the path remains more variable and runtime-dependent than NVIDIA. Choose the approach that fits your hardware and comfort level – and read on for a decision tree and detailed guidance.

Windows Decision Tree — Choose Your Path

Not sure how to start? Use this mini decision tree to pick the best way to run DeepSeek on Windows for your needs:

“I want the fastest, easiest setup (no dev ops)”: Use a pre-packaged local LLM application. Tools such as Ollama or LM Studio provide streamlined installation and include most of the runtime components you need. For example, Ollama allows you to download and run supported models with a single command. These applications handle much of the configuration and dependency management automatically, making them ideal if you simply want to interact with a model without managing a full development environment. Depending on your hardware and the tool’s capabilities, they may run on CPU or take advantage of available GPU acceleration. The trade-off is reduced control over the underlying environment and fewer options for advanced customization compared with setting up a full Linux-based runtime or development stack.

“I need more control or plan to develop around DeepSeek”: Set up DeepSeek in a Linux environment via WSL2. If you’re comfortable with a Linux command line or need to integrate DeepSeek into custom workflows, WSL2 provides a full Ubuntu (or other distro) environment on Windows. This path is closer to what DeepSeek’s official instructions expect (since DeepSeek models are primarily designed for Linux NVIDIA GPU environments). It requires installing WSL2 and possibly Docker or Python packages, but gives you the flexibility of a Linux toolchain on a Windows machine. Choose this if you need to tinker, use advanced features, or run DeepSeek as an API/server with more customization.

“I have a GPU and want speed”: Your GPU type determines the best path. For NVIDIA GPUs, leveraging CUDA is key – which you can do either by installing Windows-native CUDA libraries or (more seamlessly) via WSL2 where Linux CUDA is supported out-of-the-box. In many cases, WSL2 with NVIDIA is the least problematic route for GPU acceleration. For AMD GPUs, evaluate if your card is supported under ROCm on WSL2. If yes, you could attempt the WSL2 route with AMD’s guides; if not, you’re likely better off using CPU (or considering an NVIDIA card) because Windows-native AI support for AMD is still catching up.

“I want to avoid troubleshooting as much as possible”: Pick the most established setup for your scenario. If you have an NVIDIA GPU, the community consensus is to use WSL2 + Linux-based tools (this combination is well-tested, and even NVIDIA promotes WSL2 for AI on Windows RTX PCs). If you don’t have a compatible GPU, use a simpler route like Ollama or a web UI with a quantized model – something known to work on Windows with minimal tweaks. In short, follow the path that most users with your hardware have succeeded with, as it likely has the fewest roadblocks.
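The decision tree above can be condensed into a small function. This is only an illustrative sketch: the `Setup` fields, the `choose_path` name, and the returned labels are hypothetical, and the branching is a simplification of the guidance in this section rather than an official recommendation.

```python
from dataclasses import dataclass


@dataclass
class Setup:
    gpu_vendor: str = "none"            # "nvidia", "amd", or "none"
    wants_dev_control: bool = False     # needs a Linux toolchain or custom serving
    amd_supported_on_wsl: bool = False  # assumption: you checked AMD's support list yourself


def choose_path(s: Setup) -> str:
    """Return a suggested starting point, mirroring the decision tree above."""
    vendor = s.gpu_vendor.lower()
    if vendor == "nvidia":
        if s.wants_dev_control:
            return "WSL2 + CUDA (PyTorch / Transformers / vLLM)"
        return "Ollama or LM Studio (GPU-accelerated where supported)"
    if vendor == "amd":
        if s.wants_dev_control and s.amd_supported_on_wsl:
            return "WSL2 + ROCm (follow AMD's guides)"
        return "Ollama or LM Studio with a quantized model (CPU fallback)"
    if s.wants_dev_control:
        return "WSL2, CPU-only, with a small or quantized model"
    return "Ollama or LM Studio, CPU-only, with a quantized model"


if __name__ == "__main__":
    print(choose_path(Setup(gpu_vendor="nvidia", wants_dev_control=True)))
```

Treat the output as a starting point only; the later sections explain why each branch tends to be the least troublesome for that hardware.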

DeepSeek on WSL2 (Recommended Baseline for Many Windows Devs)

For many Windows developers, running DeepSeek on WSL2 is a solid choice. Why? WSL2 enables a full Linux environment on Windows, meaning you can use the same installation process and tools as on a native Linux machine. DeepSeek’s open-source model code and libraries often assume a Linux-like environment, so WSL2 spares you from fighting with Windows-specific issues. Here’s what this setup typically looks like and why it works well:

- Linux userland advantages: By enabling WSL2 and installing a Linux distribution (such as Ubuntu), you gain access to the standard Linux ecosystem including APT packages, Linux Python wheels, and Linux-first AI tooling commonly used for running large language models. Many developers find that things “just work” in WSL2 because it closely mirrors the environment most LLM frameworks are built and tested on. For example, installation instructions for tools commonly used with DeepSeek—such as PyTorch, Transformers, vLLM, or llama.cpp utilities—often assume a Linux environment. Running these steps inside WSL2 usually works without major modification, whereas native Windows setups can sometimes require additional configuration. The Linux filesystem layout and shell tools also make it easier to run supporting utilities such as model conversion scripts, quantization tools, or dataset preprocessing pipelines, which may be more cumbersome to execute in a purely Windows environment.

- GPU support via CUDA: If you have an NVIDIA GPU, WSL2 allows Linux applications to use it through NVIDIA’s CUDA on WSL support. Microsoft and NVIDIA collaborated so that CUDA is supported on WSL, meaning frameworks like PyTorch detect the GPU inside WSL2 when configured properly. Install the latest NVIDIA driver on Windows (it includes WSL support), and do not install a separate Linux display driver inside WSL, because the Windows driver is the one WSL exposes to Linux. Install the CUDA Toolkit inside WSL only if your tooling needs it; PyTorch’s pip wheels ship their own CUDA runtime. Once that’s done, running DeepSeek in WSL2 can utilize the GPU almost as if you were on a native Ubuntu system. This often yields significantly faster generation times compared to CPU. (Tip: inside the WSL2 terminal, run nvidia-smi to verify your GPU is recognized.)

- Minimal overhead: WSL2 is designed to provide high performance with minimal virtualization overhead. Microsoft and NVIDIA note that GPU workloads running through CUDA on WSL can achieve performance that is close to native Linux in many scenarios. Because WSL2 runs a real Linux kernel and integrates directly with Windows drivers, most machine learning frameworks can access GPU acceleration with only minor overhead. In practice, performance can vary depending on the workload, driver versions, and system configuration, but many users report near-native GPU throughput when running LLM inference inside WSL2. To avoid resource constraints, make sure WSL2 has access to sufficient system resources (such as memory and CPU cores), which can be configured using the .wslconfig file if needed. Overall, WSL2 provides a practical balance between performance and convenience, allowing developers to run Linux-based AI tooling on a Windows system without the need for a separate Linux installation.

- Setup checklist: To run DeepSeek in a WSL2 environment, you need Windows 11 (recommended) or Windows 10 version 2004+ with WSL2 support enabled, along with a Linux distribution such as Ubuntu installed through WSL. For GPU acceleration on Windows 10, Microsoft’s documentation lists version 21H2 or later. On modern Windows systems, WSL can typically be installed with the command wsl --install in PowerShell. After enabling WSL2, install the required Linux dependencies inside your distribution, such as Python, pip, and common development tools. If you plan to use GPU acceleration, install the latest NVIDIA drivers that support CUDA on WSL, and verify GPU access from inside WSL using tools like nvidia-smi. Once the environment is ready, download the DeepSeek model files or clone the relevant repositories into the WSL filesystem. You can then run the model using common inference frameworks such as PyTorch, Transformers, or optimized serving engines like vLLM, which are primarily developed for Linux environments and therefore work reliably inside WSL2. In practice, WSL2 provides a Linux-like environment on Windows that aligns well with the tooling used across the modern LLM ecosystem. This often reduces compatibility issues compared with running the same software stack directly on native Windows.
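As a concrete illustration of the .wslconfig resource settings mentioned above, the sketch below renders a minimal file body. The `[wsl2]` section with its `memory` and `processors` keys comes from Microsoft's WSL settings; the function names and the example values (16 GB, 8 cores) are assumptions chosen for illustration, so adjust them to your machine.

```python
from pathlib import Path


def render_wslconfig(memory_gb: int, processors: int) -> str:
    """Build the text of a minimal .wslconfig that caps WSL2 memory and CPU cores."""
    if memory_gb <= 0 or processors <= 0:
        raise ValueError("memory_gb and processors must be positive")
    lines = [
        "[wsl2]",
        f"memory={memory_gb}GB",       # upper bound on RAM given to the WSL2 VM
        f"processors={processors}",    # number of logical processors exposed to WSL2
    ]
    return "\n".join(lines) + "\n"


def default_wslconfig_path() -> Path:
    """The file lives in the Windows user profile folder (run this on the Windows side)."""
    return Path.home() / ".wslconfig"


if __name__ == "__main__":
    # Printed rather than written, so nothing on disk changes by accident.
    print(f"# would go in: {default_wslconfig_path()}")
    print(render_wslconfig(memory_gb=16, processors=8))
```

After saving the file, WSL has to be restarted (for example with wsl --shutdown from PowerShell) before the new limits apply.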

DeepSeek Native on Windows (What Usually Works, What Breaks)

Can you run DeepSeek without WSL2, directly on Windows? Yes – there are scenarios where native Windows execution is feasible, but there are also common points of failure to be aware of. Here’s what typically works well natively, and what tends to break:

What usually works natively:

Packaged apps and UIs: If you use a Windows application designed for local LLMs (for example, LM Studio, or another GUI that supports DeepSeek), these can often run out-of-the-box. Such apps typically bundle a CPU inference engine or use simpler backends that have Windows support. DeepSeek quantized models can be loaded in these environments for basic usage.

Ollama for Windows: Ollama provides a native Windows installer that allows you to run models such as DeepSeek directly from PowerShell or Command Prompt. It abstracts away most of the setup complexity, so you don’t need to manually manage Python environments, dependencies, or model runtimes. Depending on your hardware and configuration, Ollama can use CPU or GPU acceleration. On systems with compatible NVIDIA GPUs, Ollama can leverage CUDA for faster inference, while other setups may fall back to CPU execution. This makes Ollama a convenient option for quickly running DeepSeek models locally without setting up a full development environment. Because Ollama bundles the runtime and model management tools together, installation and execution are typically straightforward, making it one of the easiest ways to experiment with DeepSeek models on a native Windows system.

Text-generation Web UI (and similar) with CPU/backends: Tools like Oobabooga’s text-generation-webui can be set up on Windows, especially if running in CPU mode or using simple GPU offload via DirectML. If you install Python and the required packages on Windows, you can load a DeepSeek model in a web UI interface. This usually works for smaller models or quantized versions – the web UI’s Windows support has improved to handle basic cases.

Hugging Face Transformers on Windows: It is possible to load certain DeepSeek models through the Hugging Face Transformers library on Windows. PyTorch provides Windows binaries with CUDA support, which means an NVIDIA GPU can theoretically be used natively if the environment is configured correctly. For example, you could install the required libraries with pip install torch transformers and attempt to load a compatible DeepSeek model—such as one of the DeepSeek-R1 distilled checkpoints (for example deepseek-ai/DeepSeek-R1-Distill-Qwen-7B)—in a Python script. Basic experiments or CPU inference generally work without major issues. However, challenges tend to appear when working with larger models, advanced GPU optimizations, or specialized inference runtimes, which are often developed and tested primarily for Linux environments.
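A minimal sketch of the Transformers route described above, assuming `torch` and `transformers` are already installed and that the machine has enough memory for the checkpoint (a 7B model needs roughly 15 GB in half precision and about twice that in full precision). The helper names `pick_device` and `generate` are hypothetical; the model ID is the one named in the text, and the smaller deepseek-ai/DeepSeek-R1-Distill-Qwen-1.5B checkpoint may be a more realistic first test.

```python
MODEL_ID = "deepseek-ai/DeepSeek-R1-Distill-Qwen-7B"  # checkpoint named in the text above


def pick_device(cuda_available: bool) -> tuple[str, str]:
    """Return (device, dtype name): half precision on GPU, full precision on CPU."""
    return ("cuda", "float16") if cuda_available else ("cpu", "float32")


def generate(prompt: str, max_new_tokens: int = 256) -> str:
    """Load the model and answer one prompt. Downloads the weights on first use."""
    # Imported lazily so this file can be imported without torch installed.
    import torch
    from transformers import AutoModelForCausalLM, AutoTokenizer

    device, dtype_name = pick_device(torch.cuda.is_available())
    tokenizer = AutoTokenizer.from_pretrained(MODEL_ID)
    # torch_dtype is the long-standing keyword; recent releases may prefer `dtype`.
    model = AutoModelForCausalLM.from_pretrained(
        MODEL_ID, torch_dtype=getattr(torch, dtype_name)
    ).to(device)

    # Let the tokenizer apply the model's own chat template instead of hand-writing it.
    messages = [{"role": "user", "content": prompt}]
    inputs = tokenizer.apply_chat_template(
        messages, add_generation_prompt=True, return_tensors="pt", return_dict=True
    ).to(device)

    with torch.no_grad():
        output = model.generate(**inputs, max_new_tokens=max_new_tokens)
    # Drop the prompt tokens so only the newly generated text is returned.
    new_tokens = output[0][inputs["input_ids"].shape[-1]:]
    return tokenizer.decode(new_tokens, skip_special_tokens=True)
```

Calling `generate("Explain WSL2 in one sentence.")` would trigger the download and a single generation. Note that on Windows a plain pip install of torch usually gives the CPU-only build, so `pick_device` would fall back to CPU unless a CUDA-enabled wheel was installed.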

What often breaks or is tricky on native Windows:

GPU driver/toolchain mismatch: While PyTorch supports Windows (and TensorFlow dropped native-Windows GPU support after version 2.10), not all of the optimized libraries or drivers do. You might encounter issues like the GPU not being detected due to driver mismatches. PyTorch’s pip wheels bundle their own CUDA runtime, so the key checks are that you installed a CUDA-enabled build (not the CPU-only one) and that your NVIDIA driver is recent enough for that build’s CUDA version; a separately installed CUDA Toolkit matters mainly when you compile extensions. If your workflow relies on specific CUDA extensions (like custom kernels), those may not have official Windows versions. In contrast, under WSL2 or Linux, these pieces tend to align more easily.

Python package installation issues: Some Python packages used in the local LLM ecosystem still have uneven support on Windows or may require additional configuration. Although compatibility has improved in recent years, many libraries are developed and tested primarily on Linux environments first. For example, packages such as bitsandbytes historically lacked Windows support. While newer builds now exist for certain setups, compatibility can still depend on the backend, GPU stack, and runtime being used. In addition, most DeepSeek workflows rely on broader frameworks—such as PyTorch, Transformers, or specialized inference engines—rather than a single universal Python package. When installing these environments on Windows, pip may attempt to compile dependencies from source, which can require additional development tools such as Visual Studio Build Tools. Because of this, the “pip install everything” approach can sometimes lead to missing dependencies or build errors on Windows systems. In practice, many developers avoid these issues by using prebuilt environments, Conda distributions, packaged local-LLM tools like Ollama or LM Studio, or a Linux environment via WSL2, where compatibility with the broader LLM ecosystem is often more predictable.

Inconsistent GPU backend support: Many advanced LLM serving libraries (like vLLM, ExLlama, etc.) are developed on Linux and may not run on Windows. For example, vLLM – known for fast token generation – doesn’t officially support Windows at the time of writing. If DeepSeek’s best performance is achieved via such libraries, you’d be out of luck natively. Windows has its own GPU compute layer (DirectML); however, DeepSeek’s code isn’t likely to use it by default. Some community solutions exist to run models via DirectML or ONNX on Windows, but those are niche and often slower. In short, the full performance potential of DeepSeek is harder to unlock on native Windows due to gaps in library support.

Frequent environment quirks: Native Windows has case-insensitive file paths, different line endings, max path length issues, and other quirks that sometimes break scripts or model loaders expecting Linux conventions. For instance, a path like C:\Users\Name\My Documents\deepseek\model.bin with spaces might trip up poorly-configured code. Or file permission issues might arise when models are stored in protected directories. While these can be solved, they add to the “yak shaving” when running natively.

In summary, running DeepSeek natively on Windows can work for simpler use cases – especially CPU inference or via user-friendly apps – but it tends to break when you push into advanced territory (GPU acceleration, custom optimizations, large models). A common recommendation for Windows users with NVIDIA GPUs is to use WSL2 for a smoother ride. If you must stay native, be prepared to troubleshoot Python environments, install developer tools, and possibly accept some performance limitations.

When Ollama or llama.cpp Is the Easier Option (DeepSeek on Windows)

Sometimes the path of least resistance is to avoid complex setups altogether. This is where lightweight solutions like Ollama or llama.cpp come in. They offer a more straightforward way to run DeepSeek models on Windows, especially if you don’t need full-blown Linux environments or GPU acceleration. Here’s why these can be the easier option and what trade-offs to consider:

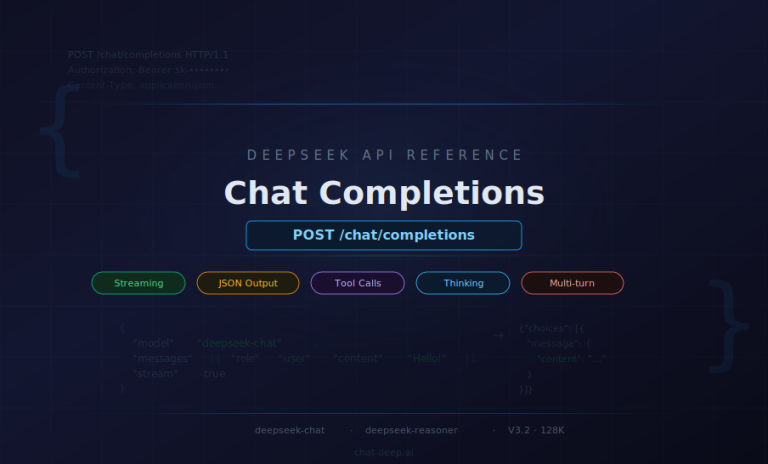

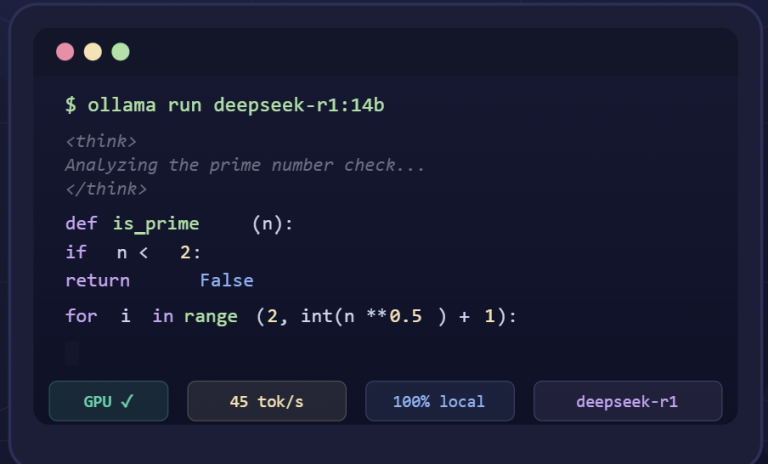

- Self-contained simplicity: Ollama and llama.cpp are designed to simplify local LLM deployment. With Ollama, for example, you install a single application and use commands like ollama pull to download models and ollama run to chat with them. In fact, obtaining a DeepSeek model can be as easy as one command (ollama pull deepseek-r1:32b) to fetch and prepare a 32B model. These tools handle all dependencies internally – no need to manage Python, CUDA, or compilers. This makes them very appealing for users who just want to use DeepSeek without becoming sysadmins.

- Built on llama.cpp (efficient CPU inference): Both Ollama and many community UIs leverage llama.cpp under the hood, which is a highly optimized C++ implementation for running LLMs on CPU, with optional GPU offload. llama.cpp is known for its low memory overhead and ability to run models in quantized form. What this means for DeepSeek: you can run surprisingly large models on a consumer PC by using 4-bit or 8-bit quantization (e.g., a 32B-parameter model quantized to 4 bits fits in roughly 20 GB of RAM). The performance is slower than on a capable GPU, but it’s often good enough for non-production use and avoids the complexity of GPU drivers. Essentially, these tools let you trade some speed for a drastically simpler setup.

- Fewer points of failure: Because Ollama/llama.cpp come pre-built, you skip many of the failure points that plague traditional setups. You won’t have to compile code or install CUDA toolkits. There’s a huge community around llama.cpp that continuously improves Windows support, so it’s quite stable. If something does go wrong, it’s often a single issue (e.g. a bad model file) rather than a full stack trace of Python errors. For a lot of users, this “it just works” factor is worth the reduction in raw performance or flexibility. One community guide even rated Ollama as a five-star easy setup for DeepSeek, highlighting its beginner-friendliness.

- What you give up: The simplicity of these tools does come with some trade-offs. Performance: Without a compatible GPU, generation speed will be significantly slower. Tools built on llama.cpp can use techniques such as quantization and partial GPU layer offloading (via CUDA, Vulkan, or other backends depending on the build), but they generally do not match the throughput of fully optimized GPU runtimes designed for large-scale inference. Advanced serving features: Lightweight tools are typically optimized for local or small-scale usage. While some of them can expose a local API or support basic integrations, they usually lack advanced serving features such as high-concurrency request handling, dynamic batching, or multi-GPU scaling, which are commonly found in specialized inference engines like vLLM. Ecosystem lag: Packaged runtimes may also take time to adopt support for new model architectures, inference optimizations, or experimental features introduced in the broader LLM ecosystem. Although llama.cpp supports modern quantized formats such as GGUF, cutting-edge capabilities sometimes appear first in Linux-focused frameworks like PyTorch-based pipelines or optimized serving engines before they are integrated into simplified local tools.

- Model format considerations: DeepSeek models can be large (tens of GB) in their original form. Tools like Ollama require a model to be in a compatible format, usually a quantized GGUF file (GGUF replaced the older GGML format, which current llama.cpp builds no longer load). Often, you’ll find that others have already converted popular DeepSeek releases into this format and shared them, or the tool’s built-in commands will handle the download. But if you have to convert yourself, it’s an extra step, typically done with the conversion scripts that ship with llama.cpp. The good news is that once you have a quantized model, it’s very efficient to run on Windows, and even modest hardware can handle the smaller variants.
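The quantization arithmetic behind the figures above can be worked through in a few lines. This is a back-of-the-envelope sketch: the function names and the 20% overhead allowance (for context cache and runtime buffers) are assumptions, and real quantized files use slightly more than their nominal bit width because they also store scaling data.

```python
def weight_size_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in decimal gigabytes.

    One billion parameters at 8 bits each is one gigabyte, so the size is
    simply (billions of parameters) x (bytes per parameter).
    """
    return params_billion * bits_per_weight / 8


def fits_in_memory(
    params_billion: float,
    bits_per_weight: float,
    available_gb: float,
    overhead_fraction: float = 0.2,  # assumed allowance, not a measured value
) -> bool:
    """Rough check: do the weights plus an overhead allowance fit in memory?"""
    needed = weight_size_gb(params_billion, bits_per_weight) * (1 + overhead_fraction)
    return needed <= available_gb


if __name__ == "__main__":
    for bits in (16, 8, 4):
        print(f"32B model at {bits}-bit: ~{weight_size_gb(32, bits):.0f} GB of weights")
```

For a 32B model this gives about 64 GB at 16-bit, 32 GB at 8-bit, and 16 GB at 4-bit, which is why the 4-bit variant lands near the 20 GB mark once overhead is included.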

In short, Ollama/llama.cpp routes are ideal for ease and accessibility. If your primary goal is to get DeepSeek running locally for personal use (and you’re okay with modest performance when no supported GPU is available), this is probably the quickest path. You avoid most of the “it’s not working” issues by sidestepping the complexity entirely. Just remember that with great simplicity comes some loss of power – and if you outgrow what these tools offer, you may need to transition to the more involved setups discussed earlier.
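Once a model has been pulled, Ollama also serves a local HTTP API, which is handy for scripting. The sketch below uses only the Python standard library and assumes Ollama is running on its default port (11434) with the deepseek-r1:32b tag from the earlier example already downloaded; the helper names `build_request` and `ask` are hypothetical.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default local endpoint


def build_request(model: str, prompt: str) -> urllib.request.Request:
    """Prepare a non-streaming generate request for the local Ollama server."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(model: str, prompt: str, timeout: float = 300.0) -> str:
    """Send the prompt and return the generated text (requires a running server)."""
    with urllib.request.urlopen(build_request(model, prompt), timeout=timeout) as resp:
        body = json.loads(resp.read().decode("utf-8"))
    return body["response"]
```

A call such as `ask("deepseek-r1:32b", "Summarize WSL2 in one sentence.")` returns the full reply in one piece; the generous timeout reflects how slow CPU-only generation can be.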

GPU Reality on Windows: CUDA vs ROCm (DeepSeek Context)

When it comes to GPUs and DeepSeek on Windows, the story splits into two camps: NVIDIA (CUDA) vs AMD (ROCm/DirectML). Understanding the current state of each can help you decide how to proceed with GPU acceleration:

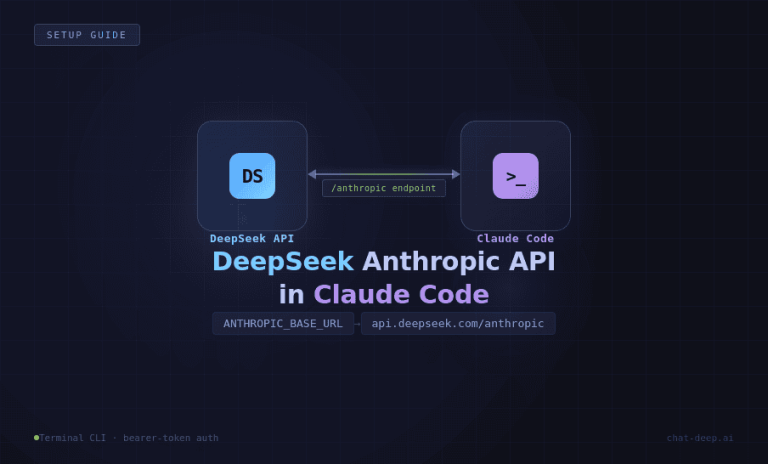

- NVIDIA and CUDA (the path of least resistance): NVIDIA’s ecosystem for machine learning is mature on Windows, largely thanks to CUDA and related libraries. You can install NVIDIA drivers and CUDA Toolkit on Windows and run many ML frameworks natively. In fact, NVIDIA has emphasized that RTX GPUs can run AI workloads on Windows via CUDA, including support for popular frameworks like PyTorch. For DeepSeek, this means if you have, say, an RTX 3080 or 4090, you could attempt to run the model using PyTorch in Windows (ensuring you have the correct CUDA-enabled PyTorch build). However, as noted earlier, some specialized inference tooling (like vLLM) doesn’t officially support Windows. Still, vanilla CUDA on Windows works, and WSL2 provides an even smoother Linux-layer option for CUDA. Most Windows-based DeepSeek users with NVIDIA cards either: (a) use WSL2 so they can run the Linux version of everything, or (b) use Docker Desktop with the WSL2 backend, which similarly leverages CUDA in Linux containers. Both approaches lean on NVIDIA’s solid CUDA on WSL integration. The takeaway: an NVIDIA GPU gives you a fairly clear path to accelerate DeepSeek on Windows, with strong official support and community experience to fall back on.

- AMD and ROCm / HIP on Windows (more variable than NVIDIA): AMD support for local LLMs on Windows is improving, but it remains less predictable than the NVIDIA CUDA path. AMD now provides official Windows support for selected hardware through its HIP SDK and PyTorch-on-Windows paths, and it also documents ROCm support in WSL2 for supported devices. However, compatibility across the broader local-LLM ecosystem is still uneven, and some optimized inference engines remain Linux-first or require additional setup. In practice, AMD on Windows can work in supported configurations, but results depend heavily on the exact GPU, driver version, and runtime. For many users, it is best approached as a viable but more setup-sensitive path rather than a plug-and-play alternative to NVIDIA.

- Making the choice: If you haven’t bought hardware yet and your goal is local LLMs like DeepSeek, NVIDIA is generally the safer bet for Windows users. The tooling, drivers, and community guides skew heavily towards CUDA solutions. If you already have an AMD GPU, evaluate whether your model size and speed needs might be met with CPU or quantization. You could also consider setting up a dedicated Linux system (or dual-boot) for AMD, where ROCm support is most mature, as an alternative. Keep an eye on AMD’s developments though – they are actively improving ROCm and expanding support, so Windows AMD GPU acceleration might become more viable in the future. But as of now, DeepSeek on Windows + AMD is a frontier that requires patience and technical know-how.

In summary, NVIDIA GPUs offer a well-trodden path for DeepSeek on Windows (especially via WSL2), whereas AMD GPUs work in supported configurations but remain more setup-sensitive and less consistently supported across the tooling. Plan accordingly: leverage CUDA if you can, and approach ROCm on Windows with tempered expectations.

Common DeepSeek-on-Windows Issues (Symptom → Likely Cause → First Fixes)

When running DeepSeek locally on Windows, you may encounter some recurring issues. Below is a list of common symptoms, their probable causes, and initial troubleshooting steps to get you unstuck:

Model won’t load: Likely cause: Insufficient memory (RAM/VRAM) to load the model, or the model file path is incorrect/corrupted. First fixes: Check that your system has enough RAM/VRAM for the model size – large models might simply not fit. Ensure the model files are present and the path is correct. If using a quantized format, verify that the runtime supports it. Try loading a smaller or quantized model to see if the issue persists (see the DeepSeek Quantization Guide for using smaller model files).

Out of memory error or crash: Likely cause: The model is too large for the available GPU memory or system RAM, causing allocation failure. This often happens with 30B+ models on GPUs with less than the required VRAM. First fixes: Use a lower precision or quantized model to reduce memory usage, or limit the context length. For GPU, make sure no other process is hogging VRAM. You can also run DeepSeek on CPU (it will be slower but confirms if memory was the issue). Ultimately, consider upgrading hardware or offloading some layers to CPU if the framework allows.

CUDA not detected (GPU not being used): Likely cause: The environment can’t find a compatible NVIDIA driver or a CUDA-enabled framework build. On Windows, it could mean the NVIDIA driver isn’t installed or the installed PyTorch is the CPU-only build. In WSL2, it might indicate that the Windows-side driver is missing or too old to support WSL. First fixes: On Windows, install the latest NVIDIA GPU driver (and the CUDA toolkit if your tooling needs it). Double-check that your PyTorch/TensorFlow is the CUDA-enabled version (for PyTorch, torch.cuda.is_available() should return True). In WSL2, run nvidia-smi – if it fails, install/update the NVIDIA drivers on Windows that support WSL2. Also confirm the distribution runs under WSL2 rather than WSL1 if you had WSL already installed (wsl -l -v in PowerShell shows the version of each distribution).
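The two checks above (nvidia-smi and torch.cuda.is_available()) can be combined into one small diagnostic script that works both on native Windows and inside WSL2. This is a sketch under stated assumptions: the function names are hypothetical, and PyTorch is treated as optional so the script still runs when it is not installed.

```python
import shutil
import subprocess
from typing import Optional


def parse_gpu_lines(csv_text: str) -> list[tuple[str, str]]:
    """Parse 'name, memory' rows as printed by nvidia-smi in csv,noheader mode."""
    gpus = []
    for line in csv_text.splitlines():
        if not line.strip():
            continue
        name, _, memory = line.partition(",")
        gpus.append((name.strip(), memory.strip()))
    return gpus


def query_nvidia_smi() -> list[tuple[str, str]]:
    """Return detected NVIDIA GPUs, or an empty list if nvidia-smi is missing or fails."""
    exe = shutil.which("nvidia-smi")
    if exe is None:
        return []
    try:
        result = subprocess.run(
            [exe, "--query-gpu=name,memory.total", "--format=csv,noheader"],
            capture_output=True, text=True, timeout=15,
        )
    except (OSError, subprocess.TimeoutExpired):
        return []
    return parse_gpu_lines(result.stdout) if result.returncode == 0 else []


def torch_sees_cuda() -> Optional[bool]:
    """True/False if PyTorch is installed, None if it is not."""
    try:
        import torch
    except ImportError:
        return None
    return torch.cuda.is_available()


if __name__ == "__main__":
    print("GPUs reported by the driver:", query_nvidia_smi() or "none")
    print("PyTorch CUDA available:", torch_sees_cuda())
```

If the driver reports a GPU but PyTorch returns False, the likely culprit is a CPU-only PyTorch build rather than the driver.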

Very slow generation speed: Likely cause: Running on CPU instead of GPU, or using an overly large model on borderline hardware. If you expected GPU usage but it’s slow, the model might not actually be using the GPU (see “CUDA not detected” above). If you are on CPU by design, large models will be slow. First fixes: Confirm whether GPU acceleration is active. Monitor your GPU usage via Task Manager or nvidia-smi – if it’s near 0% during generation, you’re on CPU. To speed up: switch to a quantized model (to reduce computation), or try enabling GPU offload (some tools allow moving part of the model to GPU to accelerate critical parts). Reducing the context length or complexity of prompts can also make generation faster on limited hardware.

Garbled or nonsensical output (formatting issues): Likely cause: Using the wrong prompt template or an incompatible model variant. DeepSeek (especially instruction-tuned models) might expect a certain prompt format (system/user roles, etc.). If you feed it raw text or use a mismatched template, the replies can be incoherent or include token artifacts. First fixes: Review the model documentation for the expected input format. For example, some models need a specific prefix like <|user|>: ... <|assistant|>: .... Use the template or starter prompt recommended for DeepSeek. If you’re quantizing or converting models, ensure the tokenizer is correct. Another cause could be a buggy build of the runtime – updating llama.cpp or your inference engine might resolve generation glitches.

Local server not reachable (web UI or API): Likely cause: A networking issue between Windows and the service. If you’re running a web UI in WSL2 or Docker, the service might be bound to localhost inside the VM, which isn’t always reachable from the Windows host, or the port might be blocked by Windows Firewall. First fixes: For WSL2, localhost:port on Windows normally forwards to localhost:port in WSL2; if it doesn’t, find the WSL address with hostname -I or ip addr (ifconfig is often not installed on current Ubuntu images) and try that. In Docker Desktop, ensure you published the port (e.g., -p 8080:8080 as in the DeepSeek Docker example). Add a Windows Firewall exception for that port (or disable the firewall briefly to test). Also verify that the service is actually running and listening on the expected interface. If you still can’t reach it, it might be listening on 127.0.0.1 inside WSL only; binding it to 0.0.0.0 inside WSL can help.
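Before changing firewall or binding settings, it helps to confirm whether the port is reachable at all. The following sketch does a plain TCP connection test using the standard library; the function name is hypothetical, and port 8080 in the usage note is simply the one from the Docker example above.

```python
import socket


def port_is_open(host: str, port: int, timeout: float = 2.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unresolvable host names.
        return False


if __name__ == "__main__":
    for host in ("localhost", "127.0.0.1"):
        state = "reachable" if port_is_open(host, 8080) else "not reachable"
        print(f"{host}:8080 is {state}")
```

Running it from Windows against localhost and then against the WSL address tells you whether the problem is forwarding between the two or the service itself not listening.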

Model file format mismatch (GGUF vs HF): Likely cause: Using a model file in a format not supported by your runtime. For instance, you try to load a deepseek.gguf (quantized llama.cpp format) into Transformers/PyTorch, which expects a PyTorch .bin or .safetensors file – or vice versa. First fixes: Make sure you have the right model file for the tool: use Hugging Face format models for PyTorch/Transformers/vLLM, and use GGUF for llama.cpp-based tools (GGML is the older format that current llama.cpp builds no longer load). The formats are not interchangeable without conversion. If you accidentally downloaded the wrong format, get the correct one (or use a conversion script if available). In some cases, even quantized models have multiple variants (for different versions of llama.cpp), so double-check compatibility notes from the model provider.
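When it is unclear which format a downloaded file actually is, the first few bytes usually settle it. The sketch below relies on three well-known signatures: GGUF files begin with the ASCII bytes GGUF, safetensors files begin with an 8-byte little-endian header length followed by a JSON object, and PyTorch checkpoints saved by recent versions are ZIP archives. The function name and return labels are hypothetical, and the check is a heuristic rather than full validation.

```python
import struct


def sniff_model_format(path: str) -> str:
    """Guess a model file's format from its leading bytes."""
    with open(path, "rb") as f:
        head = f.read(9)

    if head[:4] == b"GGUF":
        return "gguf"            # llama.cpp / Ollama style file
    if head[:4] == b"PK\x03\x04":
        return "pytorch-zip"     # torch.save output (ZIP container)
    if len(head) == 9:
        (header_len,) = struct.unpack("<Q", head[:8])
        # safetensors: u64 header size, then the JSON header starting with "{"
        if head[8:9] == b"{" and 0 < header_len < 100_000_000:
            return "safetensors"
    return "unknown"
```

For example, `sniff_model_format("deepseek.gguf")` should report gguf for a genuine llama.cpp file, while a renamed safetensors file would be reported as safetensors regardless of its extension.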

Dependency installation errors (especially on Windows): Likely cause: Missing build tools or incompatible library versions when running installation scripts. This often appears as a long error log when pip installing something like torch. Windows might lack a C++ compiler or the specific version needed. First fixes: Install the Visual Studio Build Tools if you see compilation errors on Windows. For Python, consider using pre-built binaries whenever possible (e.g., use pip install torch with a version that has wheels, avoid forcing a source build). If a particular package has no Windows support, search for a fork or alternative. Using Anaconda or Miniconda on Windows can sometimes resolve dependency hell by providing pre-compiled packages. As a last resort, pivot to using WSL2, where these packages are more likely to install smoothly.

Permissions or path issues: Likely cause: Trying to read or write files in a location that requires admin rights, or using path names that are not WSL-friendly. For example, if you point DeepSeek to a model path on a Windows drive (like C:\Models\DeepSeek) from inside WSL, you need to use the mounted path (/mnt/c/Models/DeepSeek). Or, if you installed something as Administrator but are running it as a normal user, you might get an access-denied error. First fixes: Always run with appropriate permissions – if a path requires admin, either run as admin or, better, choose a user-owned directory (like your Documents folder or a folder in your WSL home). Keep paths simple: avoid spaces and special characters in folder names for your models and code. In WSL, place files in the Linux file system where possible: Windows drives mounted under /mnt do not follow normal Linux permission semantics, and file access there is noticeably slower. Double-check file paths for typos and confirm the files exist.
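The drive-letter mapping described above is mechanical, so it can be sketched in a few lines. This assumes the default WSL mount point (/mnt/<drive letter>); the function name windows_to_wsl_path is hypothetical. Inside WSL itself, the built-in wslpath command performs the same translation and respects custom mount settings.

```python
from pathlib import PurePosixPath, PureWindowsPath


def windows_to_wsl_path(win_path: str) -> str:
    """Translate a drive-letter Windows path to its default WSL mount path."""
    p = PureWindowsPath(win_path)
    drive = p.drive  # e.g. "C:"
    if len(drive) != 2 or drive[1] != ":":
        raise ValueError(f"expected a drive-letter path, got {win_path!r}")
    # p.parts[0] is the drive anchor; the remaining parts are folder/file names.
    return str(PurePosixPath("/mnt", drive[0].lower(), *p.parts[1:]))
```

With this sketch, C:\Models\DeepSeek maps to /mnt/c/Models/DeepSeek, matching the example in the text.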

“429 Too Many Requests” or “503 Service Unavailable” from local API: Likely cause: Hitting the limits of a local DeepSeek server’s request handling. A 429 implies you’ve made more requests than the server can handle or it has rate-limiting (some local servers queue requests if a single GPU is busy). A 503 might indicate the server is overloaded or an internal error caused it to become unavailable. First fixes: If you’re sending automated rapid requests to a local DeepSeek API, add some delay or ensure you’re properly awaiting responses. Check if the server config has a max queue length or concurrency limit – for example, some web UIs only process one generation at a time. Restarting the server can clear stuck states. Also, ensure your system isn’t out of memory, as that can cause processes to start failing (check system resources). Essentially, treat your local server kindly: it’s not as robust as a cloud service, so pacing requests or upgrading hardware (more RAM/GPU) might be necessary for heavy usage.
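Pacing requests can be automated with a simple exponential backoff. The sketch below is transport-agnostic: send is a hypothetical callable you supply that performs one request and returns a (status_code, body) pair, and the retry count and delays are illustrative defaults, not values taken from any particular server.

```python
import time
from typing import Callable, Tuple

RETRYABLE = {429, 503}


def call_with_backoff(
    send: Callable[[], Tuple[int, str]],
    max_attempts: int = 5,
    base_delay: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> Tuple[int, str]:
    """Call send() until it returns a non-retryable status or attempts run out."""
    status, body = send()
    for attempt in range(1, max_attempts):
        if status not in RETRYABLE:
            break
        # Wait base_delay, then 2x, 4x, ... before each further attempt.
        sleep(base_delay * 2 ** (attempt - 1))
        status, body = send()
    return status, body
```

Passing sleep as a parameter keeps the function easy to test; in normal use the default time.sleep applies. Combined with sending one request at a time, this is often enough to stop a single-GPU server from returning 429 or 503.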

This covers a range of issues you might face. If you’re still stuck after these first fixes, you might need a more in-depth guide. See the DeepSeek Troubleshooting Guide for advanced troubleshooting and specific error scenarios. And remember, many issues can be resolved by simplifying your setup (e.g., using a smaller model or a more supported environment) – so don’t hesitate to try an alternate approach if you keep hitting a wall.

Windows Security & Privacy Notes for Local DeepSeek

Running DeepSeek locally on Windows means you’re in control of your data, which is a big plus for privacy. Unlike cloud AI services, nothing you input into DeepSeek needs to leave your machine. However, “offline” doesn’t automatically equate to perfectly secure or private. Here are some considerations to keep your setup safe and your data truly private:

Data never leaves your PC (by default): DeepSeek’s computations happen on your hardware, so chat logs and prompts aren’t sent to any server. This is great for confidentiality – you can ask DeepSeek about sensitive code or documents without worrying about cloud leakage. Just be aware of what apps you use: if you’re using a community web UI or extension, ensure it’s not configured to phone home. The DeepSeek Offline VS Code extension, for example, explicitly runs everything locally for privacy. When in doubt, use network monitoring or firewall rules to block the program from any internet access, confirming it’s truly local.

Local logs and cache: Many local LLM tools keep a log or history of your conversations (so you can scroll up or reuse them). These logs are stored on disk. If you’re security-conscious, locate these files (for instance, a web UI might save chat histories under its program folder or in C:\Users\<Name>\AppData\...). You can periodically clear them or disable logging if the option exists. Similarly, model caches (e.g., the Hugging Face cache) store downloaded model files such as weights, configs, and tokenizers on disk. These are not a privacy risk per se (they contain model data, not your prompts), but they consume space and reveal which models you have used. Treat them like browser caches – mostly benign, but clearable if you desire.

Restrict network access: DeepSeek servers or UIs typically bind to localhost by default, meaning only you can access them from your machine. It’s wise to keep it that way. Do not expose the DeepSeek interface to the broader network unless you secure it. If you must access it from another device, consider setting up SSH port forwarding or a VPN rather than opening a port to all. If you open a firewall port for some reason, use Windows Firewall advanced settings to scope it to your IP or LAN as needed. An openly exposed AI service on your PC could be misused by others on your network or, worst-case, over the internet.

Authentication if serving beyond localhost: Some may experiment with accessing their local DeepSeek from a phone or another PC on the LAN. If you do this, see if the software supports a basic auth token or password. For example, certain web UIs allow an optional admin token. Lacking that, you could put a reverse proxy like Nginx or Caddy in front to require a password. While this is probably overkill for most, it’s worth mentioning: don’t assume others can’t connect just because it’s on your PC – with the wrong settings, they might. Always verify what IP/port your DeepSeek service is listening on (127.0.0.1 vs 0.0.0.0) and test from another machine if you’re unsure.
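The difference between 127.0.0.1 and 0.0.0.0 can be checked programmatically when you review a server's configuration. The following sketch uses Python's standard ipaddress module; the function name bind_exposure and its three labels are illustrative, and it only classifies the configured address, so it does not account for firewalls or port forwarding.

```python
import ipaddress


def bind_exposure(bind_host: str) -> str:
    """Classify a listen address as 'loopback', 'all-interfaces', or 'specific'."""
    if bind_host == "localhost":
        return "loopback"
    addr = ipaddress.ip_address(bind_host)
    if addr.is_loopback:
        return "loopback"  # 127.0.0.0/8 or ::1: local machine only
    if addr.is_unspecified:
        return "all-interfaces"  # 0.0.0.0 or :: listens on every interface
    return "specific"  # one particular interface, e.g. a LAN address
```

Anything other than "loopback" means other devices may be able to connect, which is the case where a token, password, or reverse proxy is worth the effort.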

Malware and model downloads: This isn’t specific to Windows, but since Windows is a common malware target, be careful when downloading models or tools. Only download DeepSeek models from trusted sources (e.g., the official Hugging Face repository or links from the DeepSeek homepage). There have been cases of poisoned model files or fake downloads laden with malware. Where both are offered, prefer .safetensors files over pickle-based .bin or .pt checkpoints, because pickle files can execute arbitrary code when loaded. Running AI locally doesn’t inherently increase your security risk, but downloading large unknown files from random links does.
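When the publisher lists a SHA-256 checksum for a model file, comparing it against your download is a cheap integrity check. This is a minimal sketch using Python's hashlib; the function names are hypothetical, and a matching hash only shows the file is the one the publisher listed, not that the publisher is trustworthy.

```python
import hashlib


def sha256_of_file(path: str, chunk_size: int = 1024 * 1024) -> str:
    """Hash a file in chunks so multi-gigabyte models do not fill RAM."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_checksum(path: str, expected_hex: str) -> bool:
    """Compare the file's SHA-256 with a published hex digest."""
    return sha256_of_file(path) == expected_hex.strip().lower()
```

Reading in fixed-size chunks matters here because model files are often larger than available memory.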

In summary, local DeepSeek is as private as you make it. Keep everything on localhost, be mindful of logs, and practice standard security hygiene (updates, trusted sources). This way, you reap the privacy benefits of offline AI without introducing new vulnerabilities.

What Works / What Breaks (Summary Table)

To wrap up, here’s a quick-reference table summarizing different Windows setup options for DeepSeek, highlighting what each path works well for, where it often breaks, and the best next step if you hit a dead end:

| Environment | Works Well For | Common Breakpoints | Best Next Move if Issues Arise |
|---|---|---|---|
| WSL2 + NVIDIA GPU | Using NVIDIA CUDA with performance close to native Linux; running Linux-first DeepSeek tooling (vLLM, PyTorch, Transformers); developers comfortable with Linux environments | Requires WSL2 setup and compatible NVIDIA drivers; some overhead compared with native Linux depending on workload; GUI applications may require display forwarding | Update Windows, the WSL kernel, and NVIDIA drivers; allocate more memory/CPU to WSL2 if needed; for critical workloads, consider running directly on Linux |
| Native Windows + NVIDIA GPU | Basic usage with Windows-compatible libraries; situations where WSL2 is restricted (corporate environments); GPU acceleration with frameworks like PyTorch | Dependency mismatches (CUDA, drivers, Python libraries); some optimized runtimes unavailable on Windows; performance tuning can be harder than on Linux | Use Conda or prebuilt environments to reduce dependency issues; consider Docker Desktop with the WSL2 backend; move to WSL2 if compatibility problems persist |
| CPU-only Windows | Maximum compatibility on any modern PC; small models or quick testing; situations without GPU access | Very slow inference for larger models (13B+); high CPU and RAM usage; large models may fail to load if memory is insufficient | Use quantized models (4-bit / 8-bit); reduce context length; upgrade to a GPU or use a more powerful machine |
| AMD GPU on Windows | Possible with supported AMD hardware and drivers; some workflows using PyTorch or DirectML; enthusiasts experimenting with AMD acceleration | Support varies widely depending on GPU generation and runtime; some optimized inference engines are Linux-focused; configuration can be more complex than with NVIDIA | Check AMD documentation for supported GPUs and drivers; consider WSL2 for better compatibility with some runtimes; if issues persist, use CPU inference or a Linux setup |
| Windows + Ollama (or similar tools) | Easiest local deployment; quick experimentation with DeepSeek models; users who prefer simple installation without manual setup | Performance depends on available hardware; limited control over advanced runtime configuration; large models still require significant RAM even when quantized | Use smaller model variants for responsiveness; enable GPU acceleration if supported by your hardware; move to WSL2 or a custom runtime if you need more control |
| Windows + llama.cpp CLI | Lightweight local inference; running quantized models efficiently; maximum control with minimal dependencies | Command-line interface only; model format conversion may be required (GGUF); limited advanced serving features | Use community frontends or GUIs built on llama.cpp; enable GPU layer offloading if supported (CUDA/Vulkan builds); move to WSL2 with frameworks like PyTorch or vLLM for larger deployments |

Note: Choose the path that aligns with your expertise and hardware. Many users start with an easy route (like Ollama or WSL2 with a small model) and then iterate.

Wrap-up

Running DeepSeek on Windows is absolutely achievable – just tailor the approach to your hardware and goals. If you have a capable NVIDIA GPU, start with the most supported path (often WSL2 + Linux tooling) to minimize friction. If you’re on CPU or just exploring, a simpler app-based method might serve you well initially. In all cases, proceed incrementally: get a basic setup working, test with a small model, then scale up. This reduces the scope of troubleshooting at each step and keeps your progress testable and manageable.

FAQ

Can I run DeepSeek on Windows without WSL2?

Yes, you can run DeepSeek on Windows natively (without WSL2). This typically means using a Windows-native tool or a CPU-only approach. For instance, you could use a native app like LM Studio or the Windows version of Ollama to load a DeepSeek model; both can use a supported GPU where one is available. You can also install Python and try running DeepSeek via Hugging Face Transformers on Windows. However, keep in mind that without WSL2 you lose access to Linux-first runtimes such as vLLM and may encounter more dependency issues. WSL2 is recommended for complex setups, but it’s not strictly required for basic usage – especially if you’re okay with CPU performance.

Is DeepSeek faster on WSL2 than on native Windows?

It depends on the runtime and hardware configuration. In many situations, WSL2 provides better compatibility for DeepSeek workloads because it allows you to run Linux-first tooling such as CUDA-based libraries, Docker containers, or runtimes like vLLM that are primarily developed for Linux environments. For GPU-accelerated workflows, WSL2 is often the more reliable option on Windows because it enables access to the same CUDA ecosystem used on Linux. In these cases, performance can be close to native Linux when drivers and configuration are correct. For CPU-only workloads, performance between native Windows and WSL2 is generally similar. WSL2 introduces a small virtualization layer, so results may vary depending on the workload, runtime, and system configuration. In practice, WSL2 is usually recommended not necessarily because it is always faster, but because it offers better compatibility with the Linux-centric tooling commonly used to run large language models such as DeepSeek.

How do I run DeepSeek on Windows with an NVIDIA GPU?

To run DeepSeek with an NVIDIA GPU on Windows, the recommended approach is to use WSL2 or Docker (which uses WSL2 under the hood) so you can leverage Linux CUDA support. Here’s a high-level plan: (1) Enable WSL2 and install Ubuntu (or your preferred distro). (2) Install the latest NVIDIA driver on Windows; it provides CUDA support to WSL2, so do not install a separate Linux NVIDIA driver inside WSL2. (3) Inside WSL2, install Python and your chosen inference library (such as vLLM, or Transformers with a CUDA-enabled PyTorch build); the CUDA toolkit is typically needed only if you compile CUDA code yourself. (4) Load the DeepSeek model within WSL2 – the runtime should detect the NVIDIA GPU and use it for inference. Alternatively, you can use Docker Desktop: enable the WSL2 backend and run a DeepSeek Docker image that comes pre-configured for CUDA. If you prefer not to use WSL2 at all, you can try running DeepSeek with PyTorch on native Windows – just make sure to install the CUDA-enabled PyTorch build and have compatible drivers. This works, but some Linux-first runtimes and optimizations may not be available. In all cases, verify the GPU is actually being utilized (monitor GPU usage, for example with nvidia-smi) and that you’re not inadvertently falling back to CPU.
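For PyTorch-based setups, the final verification step can be scripted. The sketch below assumes PyTorch is the runtime in use and relies on torch.cuda.is_available() and torch.cuda.get_device_name(); the function name describe_gpu_backend is hypothetical. It works the same way inside WSL2 and on native Windows.

```python
import importlib.util


def describe_gpu_backend() -> str:
    """Report whether PyTorch is installed and can see a CUDA device."""
    if importlib.util.find_spec("torch") is None:
        return "PyTorch is not installed in this environment"
    import torch  # imported lazily so the script still runs without it

    if not torch.cuda.is_available():
        return "PyTorch found, but no CUDA device is visible (CPU fallback)"
    return f"CUDA device 0: {torch.cuda.get_device_name(0)}"


print(describe_gpu_backend())
```

If this reports a CPU fallback even though a GPU is present, a CPU-only PyTorch build or an outdated Windows driver is a likely culprit.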

Can I run DeepSeek on Windows with an AMD GPU?

Running DeepSeek with an AMD GPU on Windows is possible, but support is less consistent than with NVIDIA GPUs. AMD now provides some Windows support through its HIP SDK and PyTorch-on-Windows paths for selected Radeon hardware, but compatibility across the broader local-LLM ecosystem is still uneven. In practice, some workflows may run natively on Windows using frameworks such as PyTorch or DirectML-based backends, while other tools and optimized inference engines are still primarily designed for Linux environments. Because of this, many users with AMD GPUs choose to run DeepSeek through WSL2 with a Linux-based stack, or rely on CPU inference or quantized models when GPU acceleration is not fully supported. In short, running DeepSeek on Windows with AMD hardware is possible in some configurations, but it remains more variable and setup-dependent than the NVIDIA CUDA path.

What DeepSeek model formats work best on Windows?

It depends on your setup. If you’re using WSL2 or native Python with a GPU, the original Hugging Face Transformers format (usually .safetensors or .bin files with 16-bit or 32-bit weights) is suitable – that’s what the official code expects. On the other hand, if you are using llama.cpp, Ollama, or another llama.cpp-based tool, you’ll want a quantized GGUF file (GGML is the older, now-legacy format). Quantized variants (such as 4-bit or 8-bit) use much less memory, which makes them practical on Windows machines without large amounts of RAM or VRAM, and they can run on CPU or with partial GPU offload. They come with some loss of model accuracy but are far more practical. In summary: use the standard model files for full-featured GPU setups, and use quantized GGUF files for lightweight or CPU setups. Always ensure the format matches the tool – a GGUF file won’t load in a standard PyTorch pipeline, and a raw PyTorch model won’t load in llama.cpp without conversion.

Why does DeepSeek crash on Windows during model load?

The most common reason is running out of memory (RAM or VRAM). When DeepSeek tries to allocate a large array for the model and the system can’t provide it, it may crash or throw an exception. This often looks like a crash during the initial load or the first query. Other causes include incompatible libraries (e.g., a DLL issue with CUDA on Windows, or a missing dependency causing a hard fault). First steps: check your RAM and VRAM usage – if they spike to 100% before the crash, you’ve hit a limit. In that case, try a smaller model or a quantized version. If memory isn’t the issue, run the program in a console to see any error output. It might point to a missing library (“ImportError” or similar), in which case reinstalling or fixing your environment could help. If using WSL2, check how much memory the Linux VM is allowed to use (by default WSL2 can use only part of your host RAM, typically about half, and a custom .wslconfig can lower or raise that cap). Essentially, a crash on load points to a resource or environment problem – double-check all prerequisites and consider the model size relative to your system.
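A back-of-the-envelope estimate helps decide whether a model can fit before you try to load it: the weights alone need roughly (number of parameters × bits per weight ÷ 8) bytes. The sketch below applies that arithmetic; the function names and the 1.2 overhead factor (a rough allowance for runtime buffers and context cache) are assumptions for illustration, and real quantized files often use slightly more bits per weight than their nominal label.

```python
def estimate_weight_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights alone, in decimal gigabytes."""
    return num_params * bits_per_weight / 8 / 1e9


def fits_in_memory(
    num_params: float,
    bits_per_weight: float,
    available_gb: float,
    overhead: float = 1.2,  # assumed safety margin, not a measured value
) -> bool:
    """Rough check: do the weights plus a margin fit in available memory?"""
    return estimate_weight_gb(num_params, bits_per_weight) * overhead <= available_gb
```

For a 7-billion-parameter model this gives about 14 GB at 16 bits and about 3.5 GB at 4 bits, which is why a quantized variant may load on an 8 GB GPU when the 16-bit original does not.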

Do I need Docker to run DeepSeek on Windows?

No, Docker is not required. It is simply an optional way to package and run the environment more cleanly. On Windows, Docker Desktop typically works with WSL2, and GPU support in Docker Desktop for Windows is available through the WSL2 backend. You can still run DeepSeek without Docker by using WSL2 directly or native Windows tools such as Ollama, LM Studio, llama.cpp, or Transformers. In short, Docker can simplify setup, but it is not mandatory.

Does DeepSeek require Windows 11, or will it run on Windows 10?

You can run DeepSeek on both Windows 10 and Windows 11, but Windows 11 is the recommended baseline for new setups. The key requirement for many advanced workflows is WSL2, which is available on Windows 10 version 2004 and later (build 19041+) as well as Windows 11. Windows 11 generally provides a smoother experience because WSL2, GPU integration, and driver support are more actively maintained. It is also important to note that Microsoft ended official support for Windows 10 on October 14, 2025, which means new AI tooling and driver updates will increasingly target Windows 11. For this reason, most current DeepSeek guides assume a Windows 11 environment. That said, an up-to-date Windows 10 system can still run many local DeepSeek workflows, especially CPU-based setups or simpler runtimes such as Ollama, llama.cpp, or Python inference environments. If you plan to use GPU acceleration through WSL2, ensure your system is fully updated (on Windows 10 this means version 21H2 or later) and that you install the latest compatible NVIDIA or AMD drivers. In short, Windows 11 is strongly recommended, but Windows 10 may still work for certain setups if properly updated.

How can I improve DeepSeek performance on my Windows PC?

There are several ways to improve DeepSeek performance on a Windows PC.

(1) Use a GPU if possible: If you are currently running on CPU, moving to a compatible GPU setup (especially an NVIDIA GPU using CUDA through WSL2 or a supported native Windows runtime) can provide a major speed increase.

(2) Use quantized models: Lower-bit versions of a model (for example, 4-bit or 8-bit quantized variants) use less memory and usually run faster, though they may involve some loss in accuracy or output quality.

(3) Optimize your runtime: If you are using llama.cpp, make sure you are using an appropriate build and enable available BLAS or GPU offload options where supported. If you are using PyTorch, ensure that you have the correct optimized math libraries and, if applicable, the proper GPU-enabled build installed.

(4) Reduce context length or output size: Longer prompts and longer generated responses increase compute cost. Keeping both within reasonable limits can noticeably improve speed, especially on modest hardware.

(5) Free up system resources: Close unnecessary applications so DeepSeek has more access to CPU, RAM, and GPU resources. On Windows, using a High Performance power plan can also help reduce throttling on some systems.

(6) Tune WSL2 resources if needed: If you are running DeepSeek inside WSL2, you can allocate more memory or CPU resources through the .wslconfig file if the default configuration is limiting performance.

Each of these steps can help, but hardware still sets the ceiling. A mid-range laptop will not perform like a high-end desktop, even with careful optimization, so it is important to set realistic expectations.