Last updated: May 14, 2026

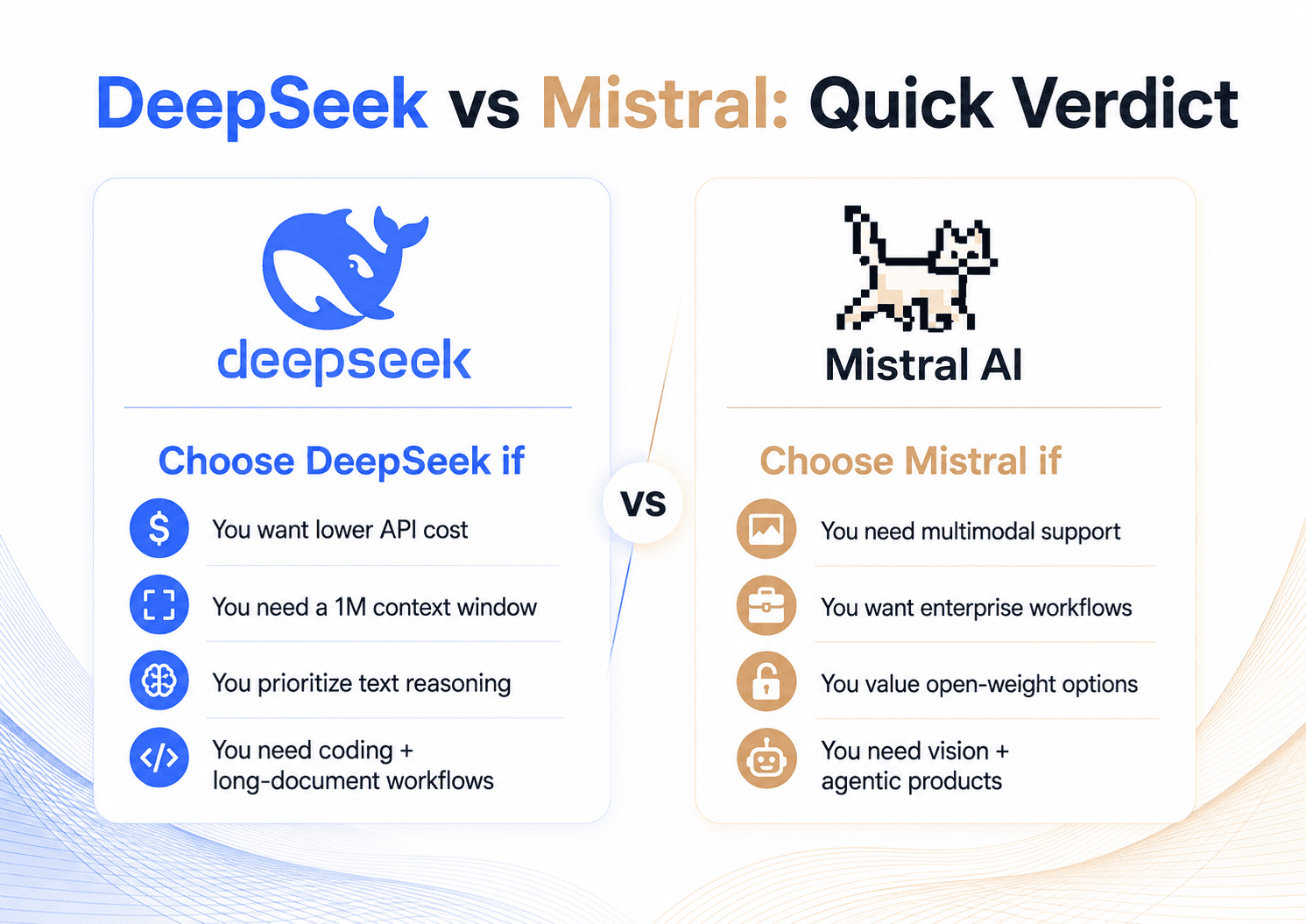

Choose DeepSeek if you want a low-cost API, a massive 1M-token context window, strong reasoning and coding value, and an OpenAI/Anthropic-compatible developer experience. DeepSeek V4-Pro and V4-Flash are especially attractive for long-context text work, codebase analysis, experimentation, and workloads where token cost matters heavily. DeepSeek’s current API pricing also includes very low cache-hit input pricing and a temporary discount on V4-Pro as of May 14, 2026.

Choose Mistral if you need multimodal capability, strong open-weight options, enterprise deployment flexibility, polished products such as Le Chat and Vibe, or European vendor positioning. Mistral Medium 3.5 is built for agentic coding and multimodal work, while Mistral Large 3 and Mistral Small 4 offer Apache 2.0 open-weight alternatives for teams that care about licensing and self-hosting.

The best choice is not “DeepSeek or Mistral” in the abstract. It depends on which model you compare and what you are building.

DeepSeek vs Mistral: Quick Comparison Table

| Category | DeepSeek | Mistral AI |

|---|---|---|

| Best for | Low-cost text API, long-context reasoning, coding-heavy workflows, high-volume experimentation | Multimodal apps, agentic products, enterprise workflows, self-hosted open-weight deployments |

| Latest relevant models | DeepSeek V4-Pro, DeepSeek V4-Flash; legacy deepseek-chat and deepseek-reasoner now map to V4-Flash modes | Mistral Medium 3.5, Mistral Large 3, Mistral Small 4, Devstral 2, Ministral 3 |

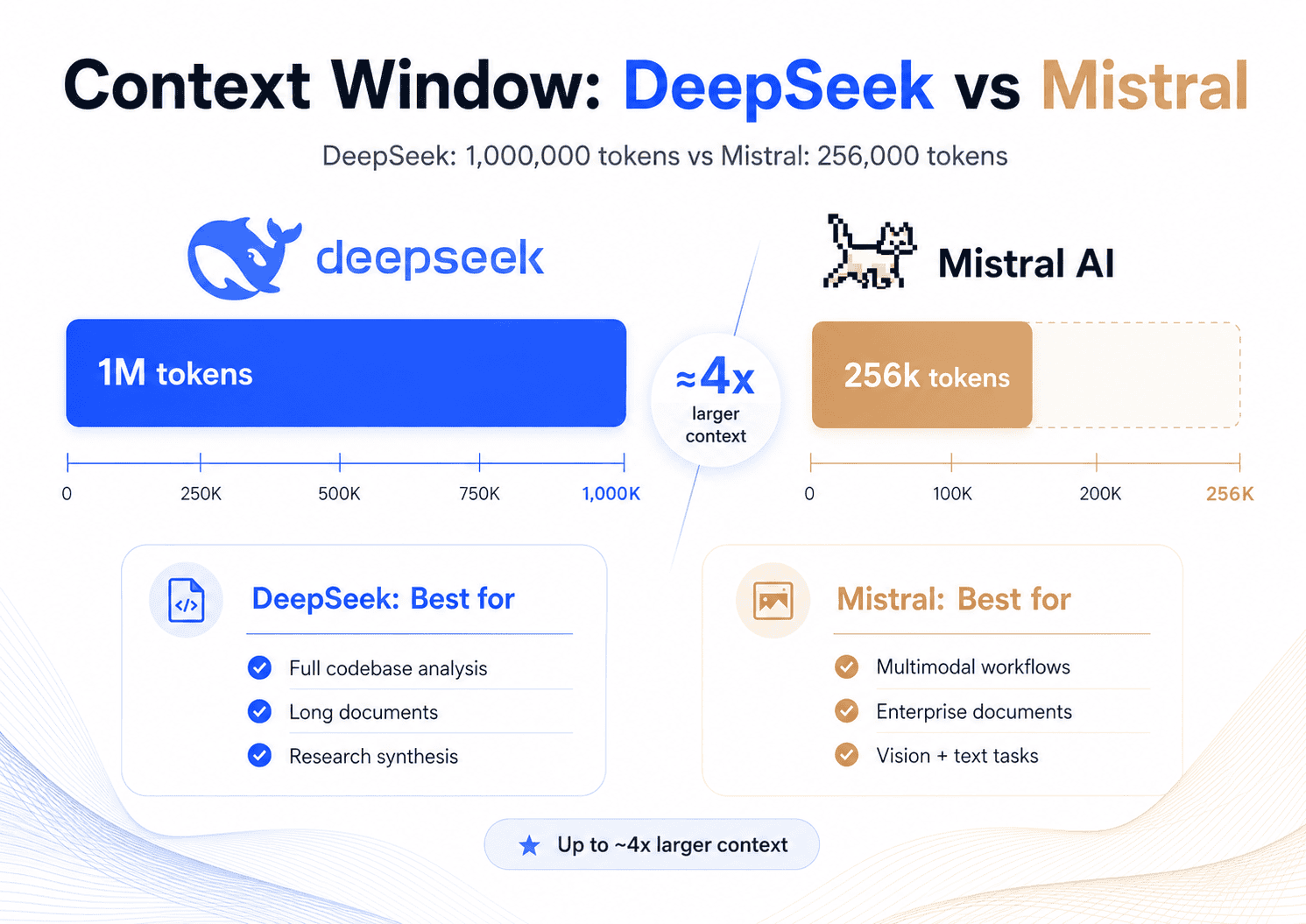

| Context window | 1M tokens on V4-Pro and V4-Flash | 256k tokens on Medium 3.5, Large 3, Small 4, Devstral 2 |

| Pricing | Very low Flash pricing; V4-Pro currently discounted until May 31, 2026 | Higher for Medium 3.5; competitive for Small 4 and Large 3 |

| Coding | Strong, especially V4-Pro reasoning modes and V4-Flash for cost-effective coding | Strong, especially Medium 3.5 and Devstral/Vibe workflows |

| Reasoning | Strong text reasoning with non-think, think, and max-thinking modes | Configurable reasoning in Medium 3.5 and Small 4 |

| Multimodal / vision | Official DeepSeek API is primarily text-focused; Anthropic compatibility docs list image input as not supported | Clear advantage: Medium 3.5, Large 3, and Small 4 support multimodal/vision workflows |

| Open weights / license | DeepSeek V4-Pro is listed on Hugging Face under MIT | Large 3 and Small 4 are Apache 2.0; Medium 3.5 uses a Modified MIT license with revenue restrictions |

| API ecosystem | OpenAI-compatible and Anthropic-compatible API endpoints | Native Mistral SDK plus OpenAI-compatible API option |

| Self-hosting | Possible via Hugging Face weights, vLLM, SGLang, Docker, but hardware needs are large | Strong self-hosting story, especially with Mistral Large 3, Small 4, Ministral, and Medium 3.5 |

| Enterprise fit | Strong for cost and long-context API use; evaluate governance and deployment needs | Strong for enterprise deployment, EU vendor preference, agents, Le Chat, Vibe, and custom deployments |

| Main weakness | Less mature multimodal/vision story through the official API | Higher API cost for Medium 3.5 and more complex licensing for that model |

DeepSeek’s official docs list V4-Pro and V4-Flash with 1M context, JSON output, tool calls, Anthropic/OpenAI-compatible endpoints, and current discounted V4-Pro pricing. Mistral’s docs list Medium 3.5, Small 4, Large 3, Devstral 2, and Ministral models with 256k-context multimodal capabilities across the latest lineup.

What Is DeepSeek?

DeepSeek is an AI lab known for cost-efficient large language models with strong reasoning, coding, and long-context capabilities. As of May 14, 2026, the key models for this comparison are DeepSeek V4-Pro and DeepSeek V4-Flash. DeepSeek describes V4-Pro as a 1.6T-parameter Mixture-of-Experts model with 49B active parameters, while V4-Flash is a lighter 284B-parameter MoE model with 13B active parameters. Both support a 1M-token context window.

DeepSeek’s biggest practical advantage is cost-to-context ratio. V4-Flash is extremely cheap for large text workloads, and V4-Pro is currently discounted on the official API until May 31, 2026. The API also supports JSON output, tool calls, OpenAI-format calls, and Anthropic-format calls, which makes it easier to plug into existing developer workflows.

A note on DeepSeek R1: if you are searching for “DeepSeek R1 vs Mistral,” the comparison is now partly historical. DeepSeek’s older deepseek-reasoner model name corresponds to the thinking mode of deepseek-v4-flash and is scheduled for deprecation on July 24, 2026. For current API users, the more relevant comparison is DeepSeek V4-Flash or V4-Pro thinking mode versus Mistral Medium 3.5 or Small 4 reasoning mode.
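For teams that still have legacy model names in configuration, a small lookup can make the migration explicit. The sketch below is illustrative only: the `deepseek-reasoner` mapping and the July 24, 2026 date come from the note above, while the `deepseek-chat` entry (mapped here to a non-thinking mode) and the helper names `resolve_model` and `days_until_sunset` are assumptions, not part of DeepSeek's API.

```python
from datetime import date
from typing import Optional, Tuple

# Deprecation date for deepseek-reasoner, as stated in the note above.
REASONER_SUNSET = date(2026, 7, 24)

# Legacy name -> (current model id, mode).
LEGACY_NAMES = {
    "deepseek-reasoner": ("deepseek-v4-flash", "thinking"),
    # Assumption: deepseek-chat corresponds to the non-thinking mode.
    "deepseek-chat": ("deepseek-v4-flash", "non-thinking"),
}


def resolve_model(name: str) -> Tuple[str, Optional[str]]:
    """Return (model id, mode) for a legacy name; pass other names through."""
    return LEGACY_NAMES.get(name, (name, None))


def days_until_sunset(today: date) -> int:
    """Days remaining before the deepseek-reasoner deprecation date."""
    return (REASONER_SUNSET - today).days


model, mode = resolve_model("deepseek-reasoner")
remaining = days_until_sunset(date(2026, 5, 14))
```

Verify the mapping against DeepSeek's current documentation before relying on it in production configuration.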

What Is Mistral AI?

Mistral AI is a European AI company focused on open-weight models, enterprise AI deployment, developer tooling, and integrated products such as Le Chat, Mistral Studio, and Mistral Vibe. Its current lineup is broader than a single chatbot model. The most relevant models for a DeepSeek vs Mistral comparison are Mistral Medium 3.5, Mistral Large 3, Mistral Small 4, Devstral 2, and Ministral 3.

Mistral Medium 3.5 is a dense 128B model with a 256k context window, multimodal capabilities, configurable reasoning effort, and a focus on agentic coding and productivity workflows. Mistral says it powers Le Chat, Vibe remote agents, and Work mode in Le Chat.

Mistral Large 3 is a 675B total / 41B active-parameter open-weight multimodal MoE model released under Apache 2.0, while Mistral Small 4 is a 119B total-parameter model with 6B active parameters, 256k context, image input support, and Apache 2.0 licensing.

DeepSeek vs Mistral: Model Lineup Comparison

| DeepSeek model | Closest Mistral comparison | Best comparison angle |
|---|---|---|
| DeepSeek V4-Pro | Mistral Medium 3.5 | High-end reasoning, coding, agentic work, API quality, cost |
| DeepSeek V4-Flash | Mistral Small 4 / Mistral Large 3 | Cost-efficient general work, long-context text, coding, self-hosting trade-offs |
| DeepSeek V4-Pro Max / thinking mode | Mistral Medium 3.5 reasoning mode | Hard reasoning, math, software engineering, multi-step analysis |
| Legacy DeepSeek R1 / deepseek-reasoner | Mistral Medium 3.5 / Small 4 reasoning | Historical reasoning comparison; current DeepSeek API users should test V4 thinking modes |
| DeepSeek open-weight V4 | Mistral Large 3 / Small 4 / Medium 3.5 open weights | Licensing, self-hosting, model control, enterprise deployment |

Model-to-model matching matters because comparing “DeepSeek” to “Mistral” can be misleading. DeepSeek V4-Pro is not the same product category as Mistral Small 4, and Mistral Medium 3.5 is not the same deployment choice as Mistral Large 3. DeepSeek is strongest when judged by long-context text, current official API pricing, and reasoning/coding cost efficiency. Mistral is strongest when judged by multimodality, enterprise workflows, open-weight variety, and integrated tools.

Performance and Benchmarks

For coding and reasoning, both companies publish strong benchmark claims, but you should separate vendor-reported results from independent benchmark aggregators.

DeepSeek’s Hugging Face model card reports V4-Pro-Max results including 93.5 on LiveCodeBench, 90.1 on GPQA Diamond, 80.6 on SWE Verified, and 83.5 on MRCR 1M. It also lists V4-Pro and V4-Flash results across reasoning modes, including non-think, high-thinking, and max-thinking configurations. These are useful signals, but they are vendor-provided and should be validated on your own workload.

Mistral’s Medium 3.5 model card reports 77.6% on SWE-Bench Verified and 91.4% on τ³-Telecom, and Mistral says the model supersedes its previous coding models, including Devstral, across its benchmarks. Like DeepSeek’s published results, these are useful but should be treated as vendor-reported unless independently reproduced.

Independent benchmark pages add another view. Artificial Analysis lists DeepSeek V4 Pro Max with an Intelligence Index of 52, a 1M context window, and 30.1 output tokens per second. In the non-max section of its leaderboard, DeepSeek V4 Pro and Mistral Medium 3.5 both sit at an Intelligence Index of 39, with Mistral Medium 3.5 showing much higher output speed.

Practical takeaway: DeepSeek V4-Pro looks stronger for maximum reasoning, very long context, and cost-sensitive coding. Mistral Medium 3.5 looks stronger for multimodal agentic workflows and faster interactive use. For production, run a private benchmark using your own prompts, repositories, documents, tool calls, latency targets, and failure cases.
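A private benchmark of this kind can start very small. The harness below is a minimal sketch under stated assumptions, not a vendor tool: each entry in `models` is a hypothetical callable you would write to wrap a provider's API, and the `Case` structure and substring-based pass check are illustrative stand-ins for your own grading logic.

```python
import time
from dataclasses import dataclass
from statistics import mean
from typing import Callable, Dict, List


@dataclass
class Case:
    """One private test case: a prompt plus a substring the answer must contain."""
    prompt: str
    must_contain: str


def run_benchmark(
    models: Dict[str, Callable[[str], str]],
    cases: List[Case],
) -> Dict[str, Dict[str, float]]:
    """Run every case against every model; report pass rate and mean latency."""
    if not cases:
        raise ValueError("at least one test case is required")
    report = {}
    for name, call_model in models.items():
        passed, latencies = 0, []
        for case in cases:
            start = time.perf_counter()
            try:
                answer = call_model(case.prompt)
            except Exception:
                answer = ""  # provider errors count as failed cases
            latencies.append(time.perf_counter() - start)
            if case.must_contain.lower() in answer.lower():
                passed += 1
        report[name] = {
            "pass_rate": passed / len(cases),
            "mean_latency_s": mean(latencies),
        }
    return report


# Offline stand-ins so the sketch runs as-is; replace with real API wrappers.
def fake_model_a(prompt: str) -> str:
    return "The answer is 4."


def fake_model_b(prompt: str) -> str:
    raise TimeoutError("simulated provider timeout")


cases = [Case("What is 2 + 2?", "4"), Case("Name the capital of France.", "Paris")]
results = run_benchmark({"model-a": fake_model_a, "model-b": fake_model_b}, cases)
```

Counting provider errors as failures keeps timeouts and rate limits visible in the pass rate, which matters when comparing production readiness rather than raw model quality.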

Pricing and Cost Comparison

All prices below are as of May 14, 2026 and may change. DeepSeek’s V4-Pro price is currently discounted until May 31, 2026, according to its official pricing page. The same page says product prices may vary and recommends checking the pricing page regularly.

| Model | Input price / 1M tokens | Cached input / 1M tokens | Output price / 1M tokens | Context |
|---|---|---|---|---|
| DeepSeek V4-Flash | $0.14 | $0.0028 | $0.28 | 1M |
| DeepSeek V4-Pro, discounted | $0.435 | $0.003625 | $0.87 | 1M |
| DeepSeek V4-Pro, list price shown crossed out by DeepSeek | $1.74 | $0.0145 | $3.48 | 1M |
| Mistral Medium 3.5 | $1.50 | Not listed | $7.50 | 256k |
| Mistral Large 3 | $0.50 | Not listed | $1.50 | 256k |
| Mistral Small 4 | $0.15 | Not listed | $0.60 | 256k |
| Devstral 2 | $0.40 | Not listed | $2.00 | 256k |

Mistral Medium 3.5’s API pricing is stated by Mistral as $1.50 per million input tokens and $7.50 per million output tokens. Mistral Large 3, Small 4, and Devstral 2 model cards show their respective prices and 256k context windows.

Example Cost Scenarios

| Scenario | DeepSeek V4-Flash | DeepSeek V4-Pro discounted | Mistral Medium 3.5 | Mistral Large 3 | Mistral Small 4 |
|---|---|---|---|---|---|
| 10M input + 2M output, no cache | $1.96 | $6.09 | $30.00 | $8.00 | $2.70 |
| Coding assistant workload: 50M input + 10M output, no cache | $9.80 | $30.45 | $150.00 | $40.00 | $13.50 |
| Long-document workflow: 100M input + 5M output, assuming 70% DeepSeek cache hits | $5.80 | $17.65 | $187.50 | $57.50 | $18.00 |

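These examples assume first-party listed API prices and simple token math: (input tokens / 1M × input price) + (output tokens / 1M × output price). In the long-document scenario, 70% of DeepSeek input tokens are billed at the cached-input rate and the remaining 30% at the standard input rate; the Mistral columns use the standard input rate throughout because no cached price is listed. That scenario assumes repeated prefixes for DeepSeek cache hits; if your prompts are always unique, DeepSeek’s cache advantage will be smaller.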
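The token math behind these scenarios can be reproduced in a few lines of Python. This is a sketch for checking your own volumes: the prices are copied from the pricing table above, while the `Price` class, the `cost_usd` helper, and the choice to bill cache hits at the standard input rate when no cached price is listed are illustrative assumptions.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Price:
    """USD per 1M tokens, copied from the pricing table above."""
    input: float
    output: float
    cached_input: Optional[float] = None  # None when no cached price is listed


PRICES = {
    "DeepSeek V4-Flash": Price(0.14, 0.28, 0.0028),
    "DeepSeek V4-Pro (discounted)": Price(0.435, 0.87, 0.003625),
    "Mistral Medium 3.5": Price(1.50, 7.50),
    "Mistral Large 3": Price(0.50, 1.50),
    "Mistral Small 4": Price(0.15, 0.60),
}


def cost_usd(price: Price, input_m: float, output_m: float,
             cache_hit_rate: float = 0.0) -> float:
    """Cost in USD for volumes given in millions of tokens."""
    if not 0.0 <= cache_hit_rate <= 1.0:
        raise ValueError("cache_hit_rate must be between 0 and 1")
    # Assumption: without a listed cached price, cache hits cost the normal rate.
    cached_price = price.input if price.cached_input is None else price.cached_input
    cached_m = input_m * cache_hit_rate
    uncached_m = input_m - cached_m
    return cached_m * cached_price + uncached_m * price.input + output_m * price.output


# Long-document scenario: 100M input, 5M output, 70% cache hits.
long_doc = {name: round(cost_usd(p, 100, 5, cache_hit_rate=0.7), 2)
            for name, p in PRICES.items()}
```

Swapping in your own monthly token volumes and an observed cache hit rate gives a quick first estimate before running a real pilot.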

Pricing verdict: DeepSeek V4-Flash is the cheapest choice in most text-only scenarios. DeepSeek V4-Pro is also highly competitive while discounted. Mistral Small 4 is price-competitive against DeepSeek Flash for many general workloads, but Mistral Medium 3.5 is much more expensive and should be chosen when its multimodal, agentic, or enterprise advantages justify the cost.

Coding and Developer Workflows

DeepSeek is very strong for developers who want low-cost API access, large context windows, and compatibility with existing tools. The official API supports OpenAI-format calls, Anthropic-format calls, JSON output, tool calls, and agent/coding assistant integrations. DeepSeek’s docs also say the API can be used with popular AI agent and coding assistant tools.

Mistral is stronger when you want a more complete coding product ecosystem. Mistral Medium 3.5 powers Vibe remote agents, Le Chat Work mode, and cloud coding sessions that can run in parallel, inspect file diffs, use tools, and open pull requests. Mistral also has Devstral 2, a dedicated code-agent model for exploring codebases, editing files, and powering software engineering agents.

For raw code generation and repository analysis, DeepSeek V4-Pro and V4-Flash are compelling because of the 1M-token context and low input cost. For agentic coding where the model interacts with GitHub, issue trackers, cloud sandboxes, and human approval flows, Mistral’s Vibe integration may be more production-ready out of the box.

Developer verdict: choose DeepSeek for cost-efficient coding and long-context repository work. Choose Mistral for integrated agentic coding workflows, multimodal coding tasks, and enterprise coding products.

Reasoning, Math, and Analysis

DeepSeek V4-Pro and V4-Flash support multiple reasoning effort modes: non-think, think, and max-thinking. In practice, this lets you trade latency and cost for better reasoning on hard tasks. DeepSeek’s model card reports major gains when moving from non-thinking to high or max-thinking modes on GPQA Diamond, LiveCodeBench, SWE Verified, MRCR 1M, and other reasoning-heavy benchmarks.

Mistral Medium 3.5 and Small 4 also support configurable reasoning. Medium 3.5 is designed to merge instruction-following, reasoning, and coding into a single dense 128B model, while Small 4 unifies instruct, reasoning, coding, and multimodal capabilities in one efficient model.

Reasoning verdict: DeepSeek has the edge for text-only reasoning at very long context and lower cost. Mistral is a better fit when reasoning must happen alongside images, tools, and enterprise workflow integrations.

Context Window and Long-Document Work

Context window is one of the clearest DeepSeek advantages. DeepSeek V4-Pro and V4-Flash support a 1M-token context window. Mistral Medium 3.5, Large 3, Small 4, and Devstral 2 support 256k context windows.

A 1M context window is useful for:

- Full-codebase review

- Large legal or financial documents

- Research synthesis across many papers

- Large internal policy manuals

- Multi-document comparison

- Long-running text-only agent sessions

That said, bigger context is not always better. Long-context prompting can become expensive, slow, and noisy. For recurring knowledge tasks, retrieval-augmented generation may still be better than dumping everything into the prompt. DeepSeek’s low cached-input pricing makes repeated long-prefix workflows unusually attractive, but you should still test recall, citation quality, latency, and cost.
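Before choosing between a single long prompt and retrieval, it helps to estimate whether a document set fits at all. The helper below is a rough planning sketch: the four-characters-per-token ratio is a common heuristic rather than either vendor's tokenizer, the 8,000-token output reserve is arbitrary, and treating 256k as 256,000 tokens is an assumption.

```python
from typing import Dict, List

# Context limits from the comparison above; 256k is assumed to mean 256,000.
CONTEXT_LIMITS = {
    "DeepSeek V4 (1M)": 1_000_000,
    "Mistral current lineup (256k)": 256_000,
}

CHARS_PER_TOKEN = 4  # heuristic; real tokenizers vary by language and content


def estimate_tokens(text: str) -> int:
    """Rough token estimate using ceiling division on character count."""
    return -(-len(text) // CHARS_PER_TOKEN)


def fits(documents: List[str], context_limit: int,
         reserved_output_tokens: int = 8_000) -> bool:
    """True if all documents plus an output reserve fit in one prompt."""
    needed = sum(estimate_tokens(d) for d in documents) + reserved_output_tokens
    return needed <= context_limit


def plan(documents: List[str]) -> Dict[str, str]:
    """Suggest a prompting strategy per context limit."""
    return {
        name: "single prompt" if fits(documents, limit) else "chunk or retrieve"
        for name, limit in CONTEXT_LIMITS.items()
    }


# Two synthetic documents of roughly 300k and 100k estimated tokens.
strategy = plan(["x" * 1_200_000, "y" * 400_000])
```

For a real decision, replace the heuristic with counts from the provider's own tokenizer, since code and non-English text often tokenize less efficiently.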

Long-context verdict: DeepSeek is the better choice for very large text-only context. Mistral is still sufficient for many enterprise document workflows at 256k, especially when multimodal or built-in document features matter.

Multimodal and Vision

Mistral has the clear advantage for multimodal work. Mistral Medium 3.5 supports image analysis, multilingual text, function calling, JSON output, and 256k context. Mistral Small 4 also supports both text and image inputs, and Mistral Large 3 is described as a general-purpose multimodal open-weight model.

DeepSeek V4 is much stronger as a text model than as a multimodal platform. DeepSeek’s Anthropic compatibility documentation explicitly lists image message content as not supported. Artificial Analysis also describes DeepSeek V4-Pro Max as supporting text input and text output, not image input.

Multimodal verdict: choose Mistral for image understanding, visual document analysis, OCR-adjacent workflows, and multimodal assistants. Choose DeepSeek when your workload is text-only and context/cost matter more.

Open Weights, Licensing, and Self-Hosting

DeepSeek V4-Pro is listed on Hugging Face under an MIT license, and the license text allows use, copying, modification, merging, publishing, distribution, sublicensing, and selling copies, subject to the license notice and warranty disclaimer.

Mistral’s licensing is more varied. The Mistral 3 announcement says Large 3 and the Ministral 3 family were released under Apache 2.0, and Mistral Small 4 was also announced under Apache 2.0.

Mistral Medium 3.5 is different. It is open weights under a Modified MIT License, but the Hugging Face license text says users are not authorized under that license if their company or employer exceeded $20 million in global consolidated monthly revenue during the preceding month. Large organizations should review the license carefully or contact Mistral for commercial terms.

Licensing verdict: DeepSeek V4-Pro and Mistral Large 3/Small 4 are the simpler choices for permissive open-weight use. Mistral Medium 3.5 may be excellent technically, but its Modified MIT terms require more careful review for larger companies. This is not legal advice; always review the current license before commercial deployment.

API and Developer Experience

DeepSeek’s API is attractive because it supports both OpenAI-compatible and Anthropic-compatible formats. Developers can point existing OpenAI SDK usage to https://api.deepseek.com, or use the Anthropic-compatible endpoint at https://api.deepseek.com/anthropic. DeepSeek also supports tool calls, JSON output, and reasoning configuration.
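As a rough illustration of an OpenAI-format call, the sketch below builds (but does not send) a chat request using only the Python standard library. The base URL comes from the paragraph above; the `/chat/completions` path, the `deepseek-v4-flash` model identifier, and the `DEEPSEEK_API_KEY` environment variable name are assumptions for illustration, so check DeepSeek's API reference for current values.

```python
import json
import os
import urllib.request
from typing import Optional

BASE_URL = "https://api.deepseek.com"  # from the text above


def build_chat_request(prompt: str, model: str = "deepseek-v4-flash",
                       api_key: Optional[str] = None) -> urllib.request.Request:
    """Build an OpenAI-style chat completion request without sending it."""
    # Assumed environment variable name; use whatever your secrets setup provides.
    key = api_key or os.environ.get("DEEPSEEK_API_KEY", "")
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{BASE_URL}/chat/completions",  # assumed OpenAI-compatible path
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {key}",
        },
        method="POST",
    )


request = build_chat_request("Summarize this repository's README.",
                             api_key="sk-example")
# To send it with a real key: urllib.request.urlopen(request)
```

In practice most teams would keep their existing OpenAI SDK code and change only the base URL and key; building the request by hand simply makes the wire format visible.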

Mistral’s API follows a Chat Completions structure similar to OpenAI’s, which eases migration. Mistral also provides a native SDK, an OpenAI-compatible base URL option, function calling, structured outputs, and Agents and Conversations APIs.

API verdict: DeepSeek is easier if you want to swap into existing OpenAI or Anthropic-style tooling at low cost. Mistral is stronger if you want a broader managed ecosystem with agents, conversations, built-in workflows, and enterprise-facing products.

Enterprise, Privacy, and Compliance

Mistral has a strong enterprise story because it offers open-weight models, self-hosting paths, integrated products, and enterprise deployment options. Mistral Medium 3.5 is positioned for agentic workflows, Vibe remote coding agents, and Le Chat Work mode, while Mistral Large 3 and Small 4 give organizations Apache 2.0 open-weight options.

DeepSeek is compelling for enterprise teams that prioritize low token cost, long-context API workflows, and text reasoning. However, teams with strict governance, regional hosting, auditability, support, or on-prem requirements should evaluate vendor risk, deployment model, data handling, and support terms before standardizing on either provider.

Enterprise verdict: Mistral is usually the safer first test for multimodal, self-hosted, and European enterprise deployment needs. DeepSeek is usually the better first test for low-cost long-context text reasoning and high-volume coding workloads.

DeepSeek Pros and Cons

DeepSeek Pros

- 1M-token context window on V4-Pro and V4-Flash

- Very low V4-Flash pricing

- Current V4-Pro discount makes it highly cost-effective as of May 14, 2026

- Strong reasoning and coding benchmarks, especially in thinking modes

- OpenAI-compatible and Anthropic-compatible API options

- JSON output, tool calls, and agent integrations

- MIT-licensed V4-Pro weights on Hugging Face

DeepSeek Cons

- Weaker official multimodal/vision support

- V4-Pro discount is temporary and may change after May 31, 2026

- Large models can be difficult and expensive to self-host

- Some benchmark claims are vendor-reported

- Legacy deepseek-chat and deepseek-reasoner names are being deprecated

Mistral Pros and Cons

Mistral Pros

- Strong multimodal support across current models

- Mistral Medium 3.5 is optimized for agentic coding and productivity

- Large 3 and Small 4 are Apache 2.0 open-weight options

- Strong enterprise positioning and European vendor profile

- Mature product ecosystem: Le Chat, Studio, Vibe, Agents, Conversations

- Good self-hosting and deployment story across model sizes

- Small 4 is competitively priced for a multimodal reasoning model

Mistral Cons

- Mistral Medium 3.5 is more expensive than DeepSeek for API usage

- Medium 3.5’s Modified MIT license has revenue restrictions

- 256k context is smaller than DeepSeek’s 1M context

- The model lineup can be confusing because the best model depends heavily on use case

- Some coding and agentic benchmark claims are vendor-reported

Use-Case Recommendation Table

| Use case | Better choice | Why |
|---|---|---|
| Cheapest API chatbot | DeepSeek V4-Flash | Very low input/output pricing and 1M context |
| Coding assistant | DeepSeek V4-Pro or Mistral Medium 3.5 | DeepSeek for cost/context; Mistral for integrated agentic coding |
| Agentic coding | Mistral Medium 3.5 / Vibe | Strong product integration, remote agents, PR-oriented workflows |
| Enterprise deployment | Mistral | Stronger enterprise product stack and open-weight deployment options |
| Multimodal assistant | Mistral | Medium 3.5, Large 3, and Small 4 support vision/multimodal workflows |
| Long-context document analysis | DeepSeek | 1M context and cheap cached input pricing |
| Local/self-hosted use | Mistral Large 3 / Small 4 or DeepSeek V4 | Mistral has more size/licensing variety; DeepSeek is strong but heavier |
| Multilingual app | Mistral Medium 3.5 or Large 3 | Mistral emphasizes multilingual and multimodal support |
| Startup prototyping | DeepSeek V4-Flash | Lowest cost for fast experimentation |
| Regulated enterprise use | Mistral, with license review | Better enterprise deployment story, but review model license and hosting terms |

Final Verdict: DeepSeek vs Mistral

DeepSeek is the better first choice for cost-efficient reasoning, long-context document work, text-only coding workflows, and high-volume experimentation. Its 1M context window, low V4-Flash pricing, current V4-Pro discount, OpenAI/Anthropic API compatibility, and strong reasoning modes make it one of the most compelling text-model options in 2026.

Mistral is the better first choice for multimodal assistants, enterprise deployment, self-hosting flexibility, agentic coding products, and polished workflows through Le Chat, Vibe, Studio, Agents, and Conversations. Mistral Medium 3.5 is especially relevant if you need one model for reasoning, coding, tool use, and image understanding; Mistral Large 3 and Small 4 are better when permissive Apache 2.0 open weights matter.

The most practical answer: test both. Run DeepSeek V4-Pro or V4-Flash against Mistral Medium 3.5, Large 3, or Small 4 on your own prompts, latency targets, token budgets, tool calls, code repositories, and failure cases before committing.

FAQs

Is DeepSeek better than Mistral?

DeepSeek is better for low-cost text API usage, 1M-token context, and cost-efficient reasoning or coding. Mistral is better for multimodal work, enterprise deployment, open-weight variety, and integrated products such as Le Chat and Vibe.

Is Mistral better than DeepSeek for coding?

Not always. DeepSeek V4-Pro is strong for coding and long-context repository work, while Mistral Medium 3.5 is strong for agentic coding workflows and powers Mistral Vibe. Choose DeepSeek for cost/context and Mistral for integrated coding agents.

Which is cheaper, DeepSeek or Mistral?

DeepSeek is generally cheaper, especially V4-Flash. As of May 14, 2026, DeepSeek V4-Flash costs $0.14 per 1M input tokens and $0.28 per 1M output tokens, while Mistral Medium 3.5 costs $1.50 input and $7.50 output per 1M tokens.

Which has a larger context window?

DeepSeek has the larger context window. DeepSeek V4-Pro and V4-Flash support 1M tokens, while Mistral Medium 3.5, Large 3, Small 4, and Devstral 2 are listed at 256k tokens.

Is DeepSeek open source?

DeepSeek V4-Pro is available as open weights on Hugging Face and is listed under the MIT license. For production use, check the current model card and license text directly before deployment.

Is Mistral open source?

Some Mistral models are open weights under permissive licenses. Mistral Large 3 and Small 4 are Apache 2.0, while Mistral Medium 3.5 uses a Modified MIT license with a revenue restriction.

Which is better for enterprise?

Mistral is usually better for enterprise deployment, especially when multimodal support, European vendor positioning, self-hosting, and integrated products matter. DeepSeek may be better for enterprises focused on low-cost, long-context text processing.

Which is better for multimodal or image tasks?

Mistral is better for multimodal and image tasks. Mistral Medium 3.5 supports vision, and Mistral Small 4 supports both text and image inputs. DeepSeek’s current API documentation lists image input as not supported in its Anthropic compatibility layer.

DeepSeek R1 vs Mistral: which should I use?

For current API use, compare Mistral Medium 3.5 or Small 4 against DeepSeek V4 thinking modes rather than old DeepSeek R1 branding. DeepSeek’s deepseek-reasoner currently maps to V4-Flash thinking mode and is scheduled for deprecation on July 24, 2026.

DeepSeek V4 vs Mistral Medium 3.5: what is the difference?

DeepSeek V4 focuses on text reasoning, long context, low cost, and OpenAI/Anthropic-compatible API access. Mistral Medium 3.5 is a multimodal 128B dense model focused on agentic coding, reasoning, image understanding, Le Chat, and Vibe workflows.