Setting up DeepSeek on Janitor AI is mostly about entering three things correctly: the API key, the URL, and the model name. Most setup errors happen when users mix a DeepSeek API key with an OpenRouter endpoint, paste a base URL into a full proxy URL field, or use an old model name from an outdated tutorial.

This guide walks through the current (2026) setup for DeepSeek on Janitor AI in plain English, including direct DeepSeek API settings, OpenRouter/provider proxy differences, recommended models, roleplay settings, and fixes for common errors.

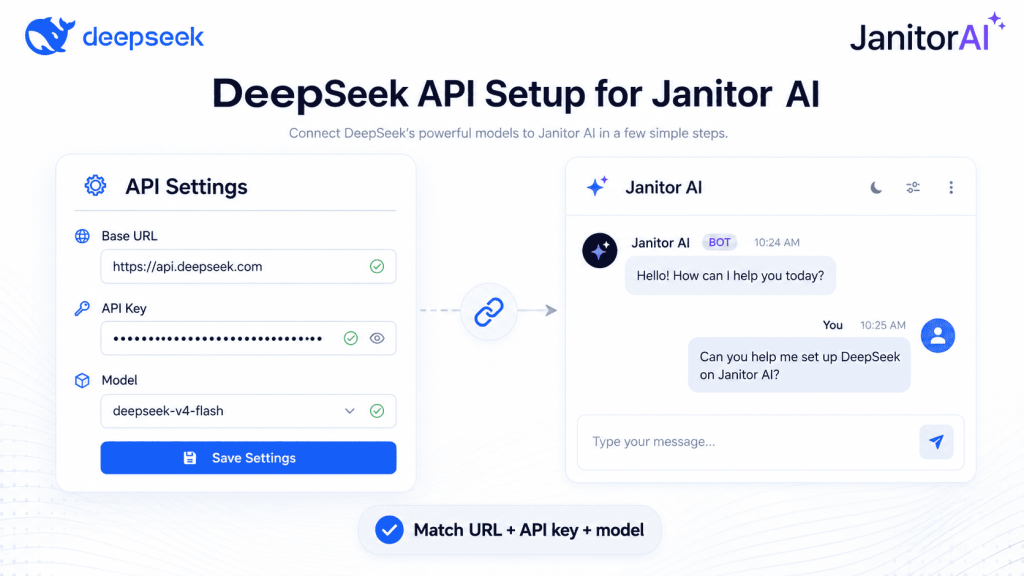

Quick answer: To set up DeepSeek on Janitor AI, create a DeepSeek API key, open Janitor AI’s API or Proxy Settings, choose a Custom/OpenAI-compatible API route, enter the correct DeepSeek base URL or full chat-completions endpoint, paste your API key, enter a current DeepSeek model ID, save the settings, refresh the chat, and send a short test message. For direct DeepSeek, use https://api.deepseek.com as the base URL and a current model such as deepseek-v4-flash or deepseek-v4-pro. DeepSeek’s official documentation lists those model IDs and confirms the OpenAI-compatible API format.

Quick Setup: DeepSeek on Janitor AI

| Route | URL field | API key source | Model name | Best for |
|---|---|---|---|---|
| Direct DeepSeek API | Base URL: https://api.deepseek.com / full endpoint: https://api.deepseek.com/chat/completions | DeepSeek Platform | deepseek-v4-flash or deepseek-v4-pro | Users who want fewer middle layers and direct DeepSeek billing |
| OpenRouter/provider proxy | Full endpoint: https://openrouter.ai/api/v1/chat/completions | OpenRouter or selected provider | Exact provider model slug from the dashboard | Users who want one key for many models |
| Old tutorial values | Verify before using | Depends on route | deepseek-chat, deepseek-reasoner, or old provider slugs | Only for troubleshooting old guides, not the safest new setup |

The key rule is simple: URL + API key + model name must all belong to the same route. A DeepSeek key should go with the DeepSeek endpoint and a DeepSeek model ID. An OpenRouter key should go with the OpenRouter endpoint and an OpenRouter model slug. OpenRouter’s documentation shows requests going to /api/v1/chat/completions and says the model value should be selected from its supported models, usually with the provider prefix.
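The same-route rule can be written down as a small check. The sketch below is illustrative only: the `RouteConfig` class, the route labels, and the `mismatches` helper are names invented here, and only the two hostnames come from the table above.

```python
from dataclasses import dataclass
from typing import List
from urllib.parse import urlparse

# Hostnames from the quick-setup table; the route labels are made up for this sketch.
ROUTE_HOSTS = {
    "deepseek": "api.deepseek.com",
    "openrouter": "openrouter.ai",
}


@dataclass(frozen=True)
class RouteConfig:
    route: str       # "deepseek" or "openrouter" (hypothetical labels)
    url: str         # value typed into the URL field
    key_source: str  # provider where the API key was created


def mismatches(cfg: RouteConfig) -> List[str]:
    """Return human-readable problems; an empty list means URL and key agree."""
    expected_host = ROUTE_HOSTS.get(cfg.route)
    if expected_host is None:
        return [f"unknown route: {cfg.route}"]
    problems = []
    host = urlparse(cfg.url).hostname
    if host != expected_host:
        problems.append(f"URL host {host} does not belong to route {cfg.route}")
    if cfg.key_source != cfg.route:
        problems.append(f"API key comes from {cfg.key_source}, not {cfg.route}")
    return problems
```

An empty result means the URL and key belong together; the model name still has to be checked against the same provider's model list.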

Before You Start

Before changing Janitor AI settings, prepare these items:

- A Janitor AI account.

- A DeepSeek Platform account.

- A DeepSeek API key, created on DeepSeek Platform, to use in Janitor AI.

- Enough balance or credits if your selected route requires payment.

- A stable browser session.

- One character or chat to test with.

- The exact model name for the route you are using.

Janitor AI is commonly used as a character chat platform, so the setup should be tested inside a chat rather than only inside an account dashboard. Current integration tutorials for Janitor-style proxy setups commonly use the flow of opening a character chat, going to API Settings, choosing Proxy or Custom API, adding a proxy configuration, saving, and refreshing the page.

How to Set Up DeepSeek on Janitor AI Step by Step

1. Create or sign in to DeepSeek Platform

Go to DeepSeek Platform and sign in. Create an API key from the platform dashboard. Keep the key private because anyone who has it may be able to use your account balance.

2. Copy your API key

Copy the DeepSeek API key once and store it safely. Do not paste it into a public prompt, character card, comment, screenshot, or shared preset.

3. Open Janitor AI and enter a chat

Open Janitor AI, choose the character you want to test, and start or open a chat. Many Janitor AI integrations expose API Settings from inside the chat interface rather than as a separate developer page.

4. Open API Settings or Proxy Settings

Look for an option such as API Settings, Proxy Settings, Add Configuration, Custom API, or OpenAI-compatible API. The exact label may change as Janitor AI updates its interface, but the fields usually ask for a URL, API key, and model.

5. Choose Custom or OpenAI-compatible API

DeepSeek is compatible with OpenAI-style API usage, so choose the Janitor AI option that allows a custom OpenAI-compatible endpoint. DeepSeek’s documentation states that the API can be accessed through OpenAI-compatible software by modifying the configuration.

6. Enter the correct URL

Use the field label carefully.

For a Base URL field, enter:

https://api.deepseek.com

For a full proxy URL or chat-completions endpoint field, use the route documented by the provider. For direct DeepSeek, the current official chat-completions path is:

https://api.deepseek.com/chat/completions

Some OpenAI-compatible tools or older proxy examples may expect a /v1/chat/completions shape, but do not assume that format for direct DeepSeek unless the exact provider or UI you are using documents it. DeepSeek’s current API reference lists POST /chat/completions, and its quick-start example calls https://api.deepseek.com/chat/completions.

For OpenRouter, use:

https://openrouter.ai/api/v1/chat/completions

7. Enter the model name

For direct DeepSeek, start with one of these:

deepseek-v4-flash

or:

deepseek-v4-pro

Use deepseek-v4-flash for general chat, roleplay, and faster responses. Use deepseek-v4-pro for more complex writing, reasoning-heavy chats, or longer planning. DeepSeek’s current model list shows deepseek-v4-flash and deepseek-v4-pro as available model IDs.

Older names such as deepseek-chat and deepseek-reasoner still appear in many tutorials, but DeepSeek says those names are legacy compatibility names scheduled to be discontinued on July 24, 2026. For a new DeepSeek setup on Janitor AI, verify the live model list before copying an old model name.

8. Paste the API key

Paste your DeepSeek API key into the private API key field. Make sure you are not pasting an OpenRouter key into a DeepSeek route or a DeepSeek key into an OpenRouter route.

9. Save settings

Click Save Settings or the equivalent button. Do not change the temperature, memory, prompt style, context size, and model all at once. First, prove that the connection works.

10. Refresh the page

Hard refresh or reload the Janitor AI chat after saving. Some proxy guides specifically recommend saving the proxy configuration and refreshing Janitor AI before testing.

11. Send a simple test prompt

Use a boring test prompt first:

Reply in one sentence if the DeepSeek API is connected.

When that works, continue with your normal character chat or roleplay settings.
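If the test message fails inside Janitor AI, it can help to try the same route outside the browser. The sketch below is one way to do that with Python's standard library, assuming the direct DeepSeek endpoint and the OpenAI-compatible request and response shape described in this guide. The function names are invented for illustration, and `send` is defined but never called here, so nothing is sent until you call it yourself.

```python
import json
import urllib.request

# Direct DeepSeek chat-completions endpoint, as given in step 6.
DEEPSEEK_ENDPOINT = "https://api.deepseek.com/chat/completions"
TEST_PROMPT = "Reply in one sentence if the DeepSeek API is connected."


def build_test_request(api_key: str, model: str = "deepseek-v4-flash") -> urllib.request.Request:
    """Build (but do not send) a one-message chat-completions request."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": TEST_PROMPT}],
        "stream": False,
    }
    return urllib.request.Request(
        DEEPSEEK_ENDPOINT,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


def send(request: urllib.request.Request, timeout: float = 30.0) -> str:
    """Send the request and return the text of the first reply.

    Assumes an OpenAI-style response body with choices[0].message.content.
    """
    with urllib.request.urlopen(request, timeout=timeout) as response:
        payload = json.load(response)
    return payload["choices"][0]["message"]["content"]
```

Calling `send(build_test_request(key))` with a real key should return a short reply if the key, URL, and model match; an HTTP error raised at that point narrows the problem to the route rather than to Janitor AI.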

Base URL vs Proxy URL: Do Not Mix Them Up

A Base URL is the root API address. For DeepSeek, that is:

https://api.deepseek.com

A full proxy URL usually includes the full request path for chat completions. For direct DeepSeek, that is currently:

https://api.deepseek.com/chat/completions

For OpenRouter, it is:

https://openrouter.ai/api/v1/chat/completions

Do not put the model name inside the URL. This is wrong:

https://api.deepseek.com/chat/completions?model=deepseek-v4-flash

The model belongs in the Model field, not in the proxy URL. A clean configuration separates the URL, the key, and the model name.
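The difference between a base URL and a full endpoint is easy to see in a few lines of code. The following is a minimal sketch, not part of Janitor AI or DeepSeek: `full_endpoint` is a hypothetical helper that accepts either form, appends the chat-completions path when it is missing, and rejects URLs that carry a query string such as ?model=.

```python
from urllib.parse import urlsplit

CHAT_PATH = "/chat/completions"


def full_endpoint(url_field: str) -> str:
    """Turn a base URL or a full endpoint into a clean chat-completions URL."""
    parts = urlsplit(url_field.strip())
    if parts.query:
        # The model name belongs in the Model field, never in the URL.
        raise ValueError("remove the query string from the URL")
    path = parts.path.rstrip("/")
    if not path.endswith(CHAT_PATH):
        path += CHAT_PATH
    return f"{parts.scheme}://{parts.netloc}{path}"
```

Given https://api.deepseek.com it returns the full DeepSeek endpoint, and it leaves https://openrouter.ai/api/v1/chat/completions unchanged.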

Which DeepSeek Model Should You Use in Janitor AI?

deepseek-v4-flash

Use deepseek-v4-flash when you want faster replies, lower cost, and smooth character chat. It is a strong starting point for most roleplay and storytelling conversations.

deepseek-v4-pro

Use deepseek-v4-pro when you want more advanced reasoning, stronger planning, or higher-quality long-form responses. It may be better for complex characters, multi-step scenes, lore-heavy roleplay, and story arcs.

deepseek-chat

This name still appears in older tutorials. DeepSeek currently treats it as a legacy compatibility name, and it is scheduled to be discontinued on July 24, 2026. Do not make it your first choice for a new setup.

deepseek-reasoner

This also appears in older guides and compatibility examples. Use current DeepSeek model IDs instead unless your provider dashboard specifically tells you to use this name.
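As a rough illustration of the four names above, the sketch below classifies a direct DeepSeek model name. The model IDs and the July 24, 2026 date are taken from this guide; the `model_status` function and the "retired" label are assumptions made for the example, and the live model list remains the authority.

```python
from datetime import date

# Names and cutoff as stated in this guide; re-check them against the live model list.
CURRENT_MODELS = {"deepseek-v4-flash", "deepseek-v4-pro"}
LEGACY_MODELS = {"deepseek-chat", "deepseek-reasoner"}
LEGACY_CUTOFF = date(2026, 7, 24)


def model_status(name: str, today: date) -> str:
    """Classify a direct DeepSeek model name as current, legacy, retired, or unknown."""
    if name in CURRENT_MODELS:
        return "current"
    if name in LEGACY_MODELS:
        # "retired" assumes the announced schedule holds.
        return "legacy" if today < LEGACY_CUTOFF else "retired"
    return "unknown"
```

An "unknown" result is not necessarily an error; it may simply be a provider slug, which follows different naming rules.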

Provider slugs are different

OpenRouter and other providers may use model slugs that look different from direct DeepSeek model IDs. For example, a provider may add a prefix, suffix, or route label. Copy the exact slug from that provider’s model catalog rather than guessing.
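A quick way to spot a model name pasted into the wrong route is to look for a provider prefix. This sketch assumes provider slugs use a provider/model shape, which is common but not guaranteed; the slug in the usage note is a placeholder, not a real catalog entry, and both helper names are invented here.

```python
from typing import Optional, Tuple


def split_slug(model: str) -> Tuple[Optional[str], str]:
    """Split 'provider/model' into (provider, model); direct IDs return (None, model)."""
    provider, separator, name = model.partition("/")
    if not separator:
        return None, model
    return provider, name


def fits_route(model: str, route: str) -> bool:
    """Direct DeepSeek IDs carry no prefix; provider slugs usually do."""
    provider, _ = split_slug(model)
    if route == "deepseek":
        return provider is None
    return provider is not None
```

For example, `fits_route("example-provider/example-model", "deepseek")` returns False, which suggests the name was copied from a provider catalog rather than from DeepSeek's model list.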

Direct DeepSeek vs OpenRouter: Which Setup Is Better?

A direct DeepSeek setup is cleaner when your goal is to use DeepSeek’s own endpoint, DeepSeek’s own API key, and DeepSeek’s own billing rules. It has fewer middle layers and makes troubleshooting easier because you only need to check one provider.

OpenRouter or another provider proxy can be useful when you want one account for many models, fallback routing, or access to models through a single provider dashboard. OpenRouter lists multiple DeepSeek models through its unified API, including DeepSeek V4 Pro and DeepSeek V4 Flash.

Neither route is automatically “better” for every user. Direct DeepSeek is simpler. OpenRouter is more flexible. Free or promotional provider routes can change, run out of quota, or require credits, so do not build your entire setup around a free label without checking the provider page at the time of setup.

Best Janitor AI Settings for DeepSeek

Start with conservative settings, then adjust after the API connection works.

| Setting | Practical starting point | When to adjust |
|---|---|---|
| Temperature | 0.7–1.0 for creative roleplay | Lower it for more consistent factual replies |
| Max tokens | 1024–4096 depending on desired response length | Increase for longer scenes, reduce if errors occur |
| Context/memory | Moderate at first | Increase gradually for long roleplay |
| Streaming | Try on or off | Toggle it when replies hang or cut off |
| System/character prompt | Clear and specific | Improve character consistency before changing every API setting |

For roleplay, temperature around 0.7–0.9 is a reasonable starting range. Some Janitor AI integration guides recommend 0.7–0.9 for roleplay and explain that higher values create more variety but can reduce coherence.

DeepSeek models may also support thinking-related behavior depending on the model and route. Some reasoning or thinking modes may handle sampling settings differently, so do not assume every parameter behaves exactly like it does with another model.
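In an OpenAI-compatible request, these settings map to fields such as temperature, max_tokens, and stream. The sketch below keeps values inside the roleplay starting ranges from the table above; the function names and defaults are illustrative choices, not Janitor AI or DeepSeek defaults, and some models or thinking modes may treat these fields differently.

```python
def clamp(value: float, low: float, high: float) -> float:
    """Keep value inside the closed interval [low, high]."""
    return max(low, min(high, value))


def generation_settings(temperature: float = 0.8, max_tokens: int = 1024, stream: bool = True) -> dict:
    """Return request fields limited to the roleplay starting ranges from the table."""
    return {
        "temperature": round(clamp(temperature, 0.7, 1.0), 2),
        "max_tokens": int(clamp(max_tokens, 1024, 4096)),
        "stream": stream,
    }
```

The clamp is deliberately strict for a first test. For factual replies you would widen the lower bound, since the table suggests lowering temperature in that case.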

DeepSeek Not Working on Janitor AI: Error Fixes

| Error | Likely cause | Fix |
|---|---|---|
| 400 invalid format | Request body or URL shape is wrong | Check whether the field expects a base URL or full endpoint. Remove unsupported query parameters. |
| 401 authentication/API key error | Wrong, expired, or mismatched API key | Create a new key and make sure it belongs to the same provider as the endpoint. |
| 402 insufficient balance | No available DeepSeek balance or provider credits | Check your DeepSeek or provider account balance. |
| 404 model or endpoint not found | Wrong endpoint path or model name | Verify the exact model ID and endpoint for the route you selected. |
| 429 rate limit | Too many requests or provider quota limit | Wait, reduce retries, shorten responses, or check provider limits. |
| 500 server error | Provider-side issue | Retry later and avoid changing all settings at once. |
| 503 server overload | High traffic on the provider side | Wait and retry later. |
| Network error in Janitor AI | Browser session, proxy mode, or route mismatch | Save settings, refresh the page, reopen the chat, and test again. |
| Empty response | Model, streaming, or token issue | Toggle streaming, reduce context, and test with a short prompt. |
| Slow replies | Model overload, long context, or high max tokens | Lower max tokens, reduce memory/context, or try deepseek-v4-flash. |

DeepSeek’s official error-code page lists 400 for invalid request format, 401 for authentication failure, 402 for insufficient balance, 429 for rate limit, 500 for server error, and 503 for server overload.
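When testing the route outside Janitor AI, the status codes in the table can be turned into a lookup. This is a sketch based only on the codes listed in this section; the hint wording, the choice of which codes count as retryable, and the backoff numbers are assumptions for illustration rather than provider guidance.

```python
from typing import List

# Status codes from the error table above, with shortened fixes.
ERROR_HINTS = {
    400: "Check the URL shape and remove unsupported query parameters.",
    401: "Create a new key and confirm it belongs to the endpoint's provider.",
    402: "Check the DeepSeek or provider account balance.",
    404: "Verify the exact model ID and endpoint path for the route.",
    429: "Wait, reduce retries, or check provider limits.",
    500: "Provider-side issue; retry later.",
    503: "Provider overloaded; wait and retry later.",
}

# Assumption: only rate-limit and server-side errors are worth retrying unchanged.
RETRYABLE = {429, 500, 503}


def hint_for(status: int) -> str:
    """Return a short fix for a known status code."""
    return ERROR_HINTS.get(status, "Unlisted status; check the provider's documentation.")


def backoff_delays(attempts: int, base: float = 1.0, cap: float = 30.0) -> List[float]:
    """Exponential wait times in seconds, capped, for retryable errors."""
    return [min(cap, base * 2 ** attempt) for attempt in range(attempts)]
```

Codes such as 401 or 404 are left out of `RETRYABLE` on purpose: repeating the same request will not fix a wrong key, path, or model name.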

Common Mistakes to Avoid

Do not use deepseek-chat just because an old Reddit thread or video tutorial says so. Check the current model list first.

Do not use an OpenRouter model slug with the direct DeepSeek endpoint. Provider model names are not universal.

Do not use a DeepSeek API key with the OpenRouter URL. The key and endpoint must belong to the same provider.

Do not paste a base URL into a full proxy field unless the UI clearly asks for a base URL.

Do not add ?model=... to the URL. Put the model in the model field.

Do not forget to refresh Janitor AI after saving the proxy configuration.

Do not post screenshots that expose your API key.

Do not start troubleshooting character prompts until a simple one-sentence API test works.

Is DeepSeek Good for Janitor AI Roleplay?

DeepSeek can be a good fit for Janitor AI roleplay because it supports character dialogue, long-form interaction, and creative text generation. The best experience depends on the model, the route, the quality of the character card, the system prompt, the context length, and the generation settings.

For general roleplay, deepseek-v4-flash is a practical first choice because it is built for fast, efficient responses. For more complex scenes, detailed lore, or advanced reasoning, deepseek-v4-pro may be better. DeepSeek’s pricing page also lists both V4 models with a 1M context length, though pricing and discounts can change, so always check the latest provider page before assuming final cost.

The setup matters as much as the model. A strong character card will not fix a mismatched URL, API key, or model name.

Privacy and Safety Notes

Never share your API key publicly.

Never upload screenshots that show your key.

Use official dashboards whenever possible.

Be careful with third-party proxies because they may be able to process or log traffic depending on their policies.

Do not paste personal information, payment details, passwords, or private files into character chats.

Avoid jailbreak or safety-bypass prompts. For better roleplay quality, focus on character consistency, scene rules, tone examples, memory settings, and generation settings instead.
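For the key-handling notes in this section, two small habits help when you test outside Janitor AI: read the key from an environment variable instead of pasting it into a script, and mask it before it appears in logs or screenshots. The sketch below shows one way to do both; the variable name DEEPSEEK_API_KEY and the helper names are hypothetical, not something DeepSeek or Janitor AI requires.

```python
import os


def mask_key(key: str, visible: int = 4) -> str:
    """Hide all but the last few characters of a secret."""
    if len(key) <= visible:
        return "*" * len(key)
    return "*" * (len(key) - visible) + key[-visible:]


def load_key(env_var: str = "DEEPSEEK_API_KEY") -> str:
    """Read the key from an environment variable (name is an assumption)."""
    key = os.environ.get(env_var, "").strip()
    if not key:
        raise RuntimeError(f"{env_var} is not set")
    return key
```

Printing `mask_key(load_key())` shows only the last four characters, which is usually enough to confirm which key is in use.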

Final Checklist

Before testing DeepSeek on Janitor AI, confirm:

- The route is direct DeepSeek or a provider proxy, not both.

- The API key comes from the same provider as the URL.

- The model name belongs to the same provider as the URL.

- The URL field uses the correct base URL or full endpoint.

- The model is entered in the model field, not in the URL.

- The Janitor AI settings were saved.

- The chat was refreshed after saving.

- The first test prompt is short and simple.

FAQ

How do I set up DeepSeek on Janitor AI?

To set up DeepSeek on Janitor AI, create a DeepSeek API key, open Janitor AI’s API or Proxy Settings, choose a Custom/OpenAI-compatible API option, enter the DeepSeek URL, paste the API key, enter a current model such as deepseek-v4-flash or deepseek-v4-pro, save, refresh, and test with one short message.

What is the correct DeepSeek proxy URL for Janitor AI?

For a base URL field, use: https://api.deepseek.com

For a direct DeepSeek full chat-completions endpoint, use: https://api.deepseek.com/chat/completions

For OpenRouter, use: https://openrouter.ai/api/v1/chat/completions

The correct DeepSeek proxy URL for Janitor AI depends on whether the field asks for a base URL or a full endpoint.

What model name should I use for DeepSeek on Janitor AI?

For direct DeepSeek, use deepseek-v4-flash for general chat and roleplay, or deepseek-v4-pro for more advanced responses. Avoid starting new setups with old names such as deepseek-chat or deepseek-reasoner unless the live provider documentation tells you to use them.

Is DeepSeek free to use on Janitor AI?

DeepSeek on Janitor AI is not automatically free. Janitor AI may let you configure external providers, but DeepSeek or a proxy provider may charge for API usage. Check your DeepSeek or provider dashboard before long roleplay sessions.

Why is DeepSeek not working on Janitor AI?

DeepSeek usually fails on Janitor AI because the API key, URL, and model name do not belong to the same route. Other common causes include an expired key, insufficient balance, wrong endpoint shape, outdated model name, rate limits, or not refreshing Janitor AI after saving settings.

Can I use OpenRouter instead of the direct DeepSeek API?

Yes. Use OpenRouter when you want a provider proxy route and one key for many models. In that case, use the OpenRouter endpoint, OpenRouter API key, and OpenRouter’s exact model slug. Do not mix OpenRouter settings with direct DeepSeek settings.

Do I need coding skills to connect DeepSeek to Janitor AI?

No. You usually only need to copy and paste the URL, API key, and model name into Janitor AI’s API or Proxy Settings. Coding is only needed when testing the same API route outside Janitor AI.

Is it safe to use a third-party proxy with Janitor AI?

A third-party proxy can be convenient, but it adds another layer between Janitor AI and the model provider. Read the provider’s privacy and logging policies, avoid sending sensitive personal data, and never share your API key publicly.

What is the fastest way to set up DeepSeek on Janitor AI?

The fastest way is to pick one route first. For direct DeepSeek, use the DeepSeek base URL, a DeepSeek API key, and a DeepSeek model ID. For OpenRouter, use the OpenRouter endpoint, an OpenRouter key, and an OpenRouter model slug. Then save, refresh, and test with one short message.

Conclusion

Setting up DeepSeek on Janitor AI is simple once the route is consistent. The URL, API key, and model name must all come from the same provider. Direct DeepSeek uses DeepSeek’s endpoint, DeepSeek’s key, and DeepSeek’s model IDs. OpenRouter uses OpenRouter’s endpoint, OpenRouter’s key, and OpenRouter’s model slugs.

Start with one short test message before changing roleplay prompts, temperature, memory, or token settings. When the connection works, tune the character and generation settings for better storytelling.