You can use DeepSeek V4 in VS Code by getting a DeepSeek API key, installing a compatible VS Code or coding-agent integration, setting the base URL to https://api.deepseek.com, and selecting either deepseek-v4-pro or deepseek-v4-flash. For most developers, the easiest DeepSeek V4 VSCode setup is Copilot Chat integration; Continue, Cline, Kilo Code, and Deep Code are better for different workflows. DeepSeek’s official docs list both V4 model IDs and the unchanged OpenAI-format base URL.

Quick Answer

- Create a DeepSeek API key from the DeepSeek Platform.

- Install a compatible VS Code integration: Copilot Chat provider, Continue, Cline, Kilo Code, or Deep Code.

- Use the OpenAI-format base URL: `https://api.deepseek.com`.

- Choose `deepseek-v4-pro` for complex coding, large refactors, architecture, and agent tasks.

- Choose `deepseek-v4-flash` for everyday chat, quick edits, autocomplete, and cost-sensitive work.

- Test with a small read-only prompt before allowing any tool to edit files.

DeepSeek V4 Preview was released on April 24, 2026, with two API models: DeepSeek-V4-Pro and DeepSeek-V4-Flash. The official release notes say the API is available now, the base URL stays the same, and users should update the model parameter to deepseek-v4-pro or deepseek-v4-flash.

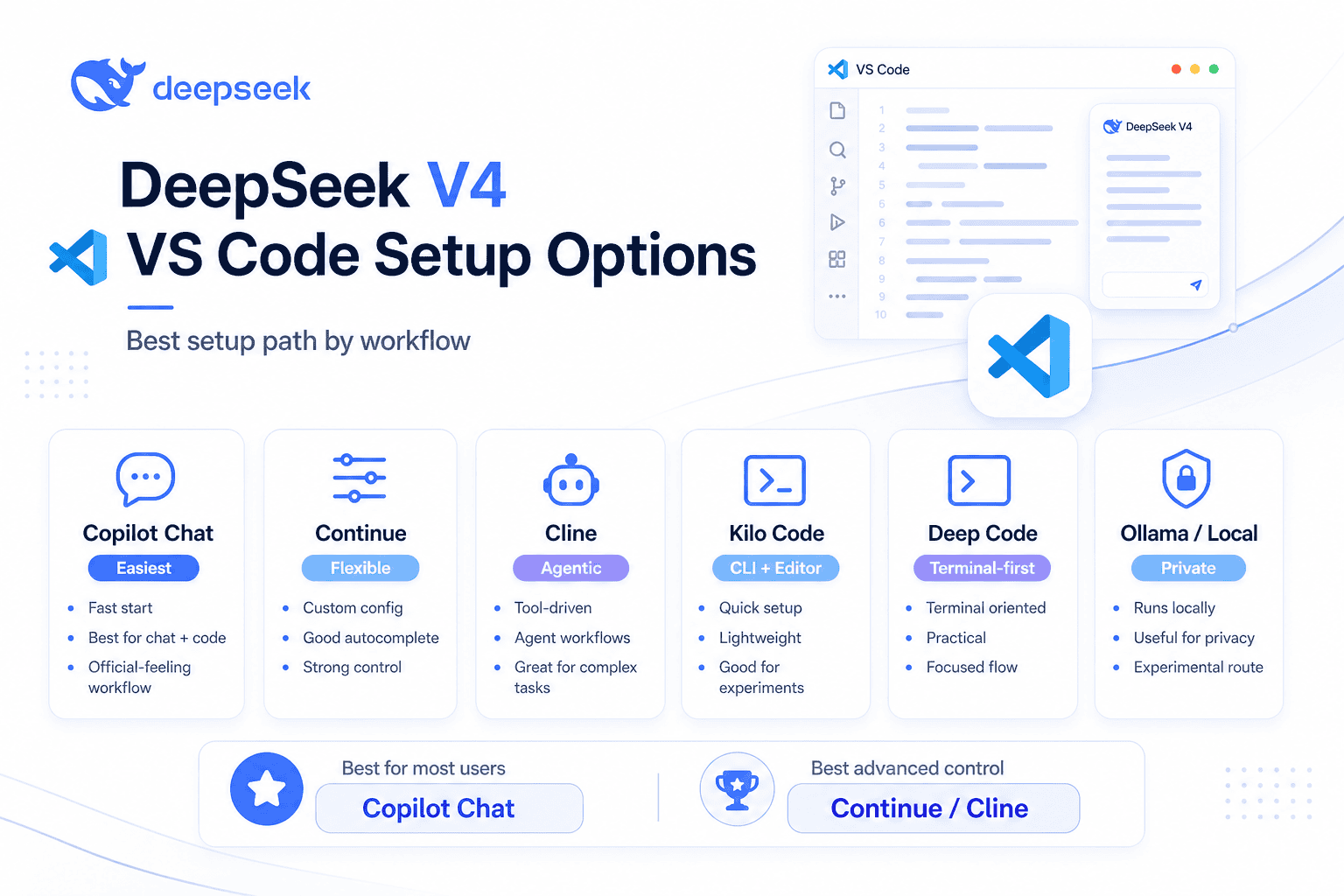

DeepSeek V4 VSCode Setup Options Compared

| Setup method | Best for | Requires Copilot? | Supports agent workflows? | Difficulty | Recommended model |
|---|---|---|---|---|---|
| DeepSeek V4 for Copilot Chat | Developers already using Copilot Chat | Yes | Yes | Easy | Flash for daily work, Pro for agents |
| Continue | Chat, edits, autocomplete, simple project help | No | Yes, if tools are configured | Medium | Flash for autocomplete, Pro for reviews |
| Cline | Multi-file autonomous coding in VS Code | No | Yes | Medium | Pro |
| Kilo Code | CLI/editor agent workflows | No | Yes | Easy-medium | Pro for agents, Flash for quick work |
| Deep Code CLI / VS Code extension | DeepSeek-first terminal agent | No | Yes | Medium | Pro |
| Local / Ollama-based DeepSeek alternatives | Privacy, offline-style workflows, experimentation | No | Depends on model/tool support | Medium-hard | Not identical to official API unless using verified V4 weights or cloud entries |

DeepSeek says both V4 Pro and V4 Flash support a 1M context length, thinking and non-thinking modes, JSON output, tool calls, and beta completion features; the pricing page also lists a maximum output of 384K tokens.

What Is DeepSeek V4 in VS Code?

DeepSeek V4 in VS Code means using DeepSeek’s latest V4 models as the AI backend for coding chat, code edits, inline help, or agentic development inside Visual Studio Code. The two main model IDs are:

- `deepseek-v4-flash`

- `deepseek-v4-pro`

DeepSeek V4 Flash is the practical default for everyday coding: quick explanations, summaries, small edits, and lower-cost iteration. DeepSeek V4 Pro is better for difficult reasoning, multi-file refactors, debugging, architecture planning, and agentic workflows where the model must inspect files, call tools, and revise its plan.

DeepSeek describes V4 Pro as the stronger agentic and reasoning model, while V4 Flash is positioned as smaller, faster, and more economical; both are part of the same V4 release family.

DeepSeek V4 also supports thinking mode and non-thinking mode. In the API, thinking can be set to enabled or disabled, and reasoning_effort currently supports high and max. DeepSeek’s API reference says thinking mode is enabled by default, while non-thinking mode can be used for faster, simpler responses.
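As a rough illustration of how these two settings fit into a request body, the sketch below builds a chat payload in Python. The `thinking` object shape follows the smoke test in Step 1 of this guide; treating `reasoning_effort` as a top-level field and leaving it out when thinking is disabled are assumptions, and `build_payload` is a hypothetical helper rather than part of any SDK.

```python
import json

ALLOWED_EFFORT = {"high", "max"}  # values this guide lists for reasoning_effort


def build_payload(model, prompt, thinking=True, effort="high"):
    """Build a chat-completions request body (hypothetical helper)."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "thinking": {"type": "enabled" if thinking else "disabled"},
        "stream": False,
    }
    if thinking:
        if effort not in ALLOWED_EFFORT:
            raise ValueError(f"unsupported reasoning_effort: {effort!r}")
        # Assumption: reasoning_effort sits at the top level, next to "thinking".
        payload["reasoning_effort"] = effort
    return payload


# Print the body that would be sent for a hard debugging question.
print(json.dumps(build_payload("deepseek-v4-pro", "Explain this stack trace.", effort="max"), indent=2))
```

Passing `thinking=False` yields a body suited to quick, non-thinking replies, which is usually the cheaper choice for routine prompts.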

One important limitation: DeepSeek V4 is text-only in the official Copilot Chat integration notes. The Copilot extension can proxy images through another installed Copilot model to describe an image before sending text to DeepSeek, but that is not native DeepSeek vision.

Prerequisites

Before you start, prepare the following:

- Updated VS Code.

- A DeepSeek account and API key.

- Billing balance or credits if your account requires paid API usage.

- A GitHub Copilot subscription if you want to use the Copilot Chat method.

- Node.js where required by CLI tools such as Kilo Code or Deep Code.

- A small test project so you can verify behavior safely.

For the official Copilot Chat method, DeepSeek’s docs specify VS Code 1.116 or later, a GitHub Copilot subscription, and the DeepSeek V4 for Copilot Chat extension. The same page says the Copilot free tier works for this integration.

Step 1 — Create and Secure Your DeepSeek API Key

Create your API key from the DeepSeek Platform, then store it securely. Do not paste it into a public GitHub issue, commit it to a repository, or save it in a shared settings.json file.

For terminal testing, store the key as an environment variable:

```bash
export DEEPSEEK_API_KEY="sk-your-key-here"
```

On Windows PowerShell:

```powershell
$env:DEEPSEEK_API_KEY="sk-your-key-here"
```

Then run a small smoke test:

```bash
curl https://api.deepseek.com/chat/completions \
  -H "Content-Type: application/json" \
  -H "Authorization: Bearer ${DEEPSEEK_API_KEY}" \
  -d '{
    "model": "deepseek-v4-flash",
    "messages": [
      {
        "role": "user",
        "content": "Reply with OK if the API works."
      }
    ],
    "thinking": {
      "type": "disabled"
    },
    "max_tokens": 20,
    "stream": false
  }'
```

The official DeepSeek quick start uses the OpenAI-compatible /chat/completions endpoint with Authorization: Bearer, model, messages, optional thinking, reasoning_effort, and stream.
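If you prefer to script the same smoke test, a minimal Python sketch using only the standard library might look like the following. The function names `build_smoke_request` and `run_smoke_test` are hypothetical, and the response parsing assumes the reply follows the OpenAI chat-completions shape (`choices[0].message.content`).

```python
import json
import os
import urllib.request

API_URL = "https://api.deepseek.com/chat/completions"


def build_smoke_request(api_key, model="deepseek-v4-flash"):
    """Build the same POST request as the curl smoke test, without sending it."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": "Reply with OK if the API works."}],
        "thinking": {"type": "disabled"},
        "max_tokens": 20,
        "stream": False,
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


def run_smoke_test():
    """Send the request; reads the key from the environment, never from source."""
    request = build_smoke_request(os.environ["DEEPSEEK_API_KEY"])
    with urllib.request.urlopen(request, timeout=30) as response:
        reply = json.load(response)
    # Assumption: OpenAI-style response layout.
    return reply["choices"][0]["message"]["content"]
```

Call `run_smoke_test()` from a Python shell after exporting `DEEPSEEK_API_KEY`; an HTTP error raised by `urlopen` carries the status code, which helps with the troubleshooting table later in this guide.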

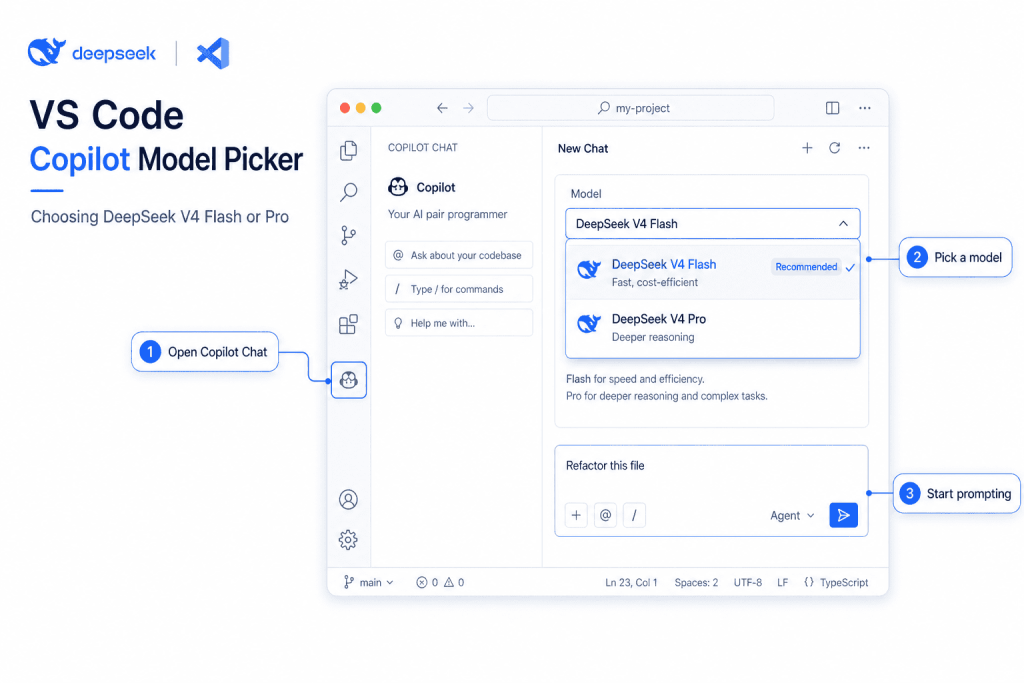

Method 1 — Use DeepSeek V4 in VS Code with Copilot Chat

This is the best default option if you already use Copilot Chat in VS Code.

DeepSeek’s official GitHub Copilot integration page says the DeepSeek V4 for Copilot Chat extension adds DeepSeek V4 Pro and DeepSeek V4 Flash directly into the GitHub Copilot Chat model picker while keeping Copilot agent mode, tool calling, skills, and MCP.

Setup Steps

- Update VS Code to version 1.116 or later.

- Make sure GitHub Copilot Chat is installed and active.

- Install DeepSeek V4 for Copilot Chat from the VS Code Marketplace.

- Open the Command Palette with `Cmd+Shift+P` or `Ctrl+Shift+P`.

- Run: `DeepSeek: Set API Key`

- Paste your DeepSeek API key.

- Open Copilot Chat with `Cmd+Shift+I` or `Ctrl+Shift+I`.

- Click the model picker.

- Choose DeepSeek V4 Flash or DeepSeek V4 Pro.

- Start with a safe prompt:

```
Read the open file and explain what it does. Do not edit anything.
```

The Marketplace listing for the Vizards extension says the API key is stored in VS Code SecretStorage / OS keychain, not in settings.json, and lists DeepSeek V4 Flash for fast everyday coding and DeepSeek V4 Pro for complex refactors, agent tasks, and deep reasoning.

Thinking Effort

In the Copilot model picker, DeepSeek’s docs describe three thinking effort options: None, High, and Max. Use None (or switch to the Flash model) for fast routine work, High for balanced coding, and Max for difficult debugging or larger refactors.

When to Use This Method

Use Copilot Chat integration when you want the most familiar VS Code workflow: chat panel, model picker, agent mode, tools, MCP, and project context inside Copilot.

The main limitation is dependency on Copilot and the extension’s compatibility with current VS Code/Copilot APIs. If the model picker does not show DeepSeek, update VS Code, update the extension, confirm Copilot is enabled, and re-run the API key command.

Method 2 — Use DeepSeek V4 with Continue in VS Code

Continue is a good choice if you want a flexible open-source coding assistant for chat, edit, apply, autocomplete, and agent-like workflows.

Continue’s DeepSeek provider documentation uses provider: deepseek, model, and apiKey in config.yaml. Continue’s config reference also supports model roles such as chat, autocomplete, edit, apply, and summarize.

Create or edit your Continue config.yaml:

```yaml
name: DeepSeek V4
version: 1.0.0
schema: v1
models:
  - name: DeepSeek V4 Flash
    provider: deepseek
    model: deepseek-v4-flash
    apiKey: <YOUR_DEEPSEEK_API_KEY>
    roles:
      - chat
      - edit
      - apply
      - autocomplete
  - name: DeepSeek V4 Pro
    provider: deepseek
    model: deepseek-v4-pro
    apiKey: <YOUR_DEEPSEEK_API_KEY>
    roles:
      - chat
      - edit
      - apply
    capabilities:
      - tool_use
```

Use Flash for autocomplete and routine chat. Use Pro for code review, complex debugging, and multi-file planning. If Continue does not recognize the latest V4 model capabilities, its docs say capabilities can be added manually for newer models or custom deployments; tool_use is the capability required for tool/function calling.

For local alternatives, Continue can also connect to Ollama and auto-detect installed models, but local Ollama setups depend on the exact model tag, local hardware, context configuration, and tool support.

Method 3 — Use DeepSeek V4 with Cline

Cline is best when you want an AI agent that can inspect a project, propose changes, edit files, run commands, and iterate. It is powerful, so start conservatively.

Cline’s documentation says the OpenAI Compatible provider requires three main settings: base URL, API key, and model ID.

Use these values:

```
API Provider: OpenAI Compatible
Base URL: https://api.deepseek.com
API Key: your DeepSeek API key
Model ID: deepseek-v4-pro
```

For cheaper routine tasks, switch the model ID to:

```
deepseek-v4-flash
```

Start with this read-only prompt:

```
Read the project structure and summarize what this repository does. Do not modify files.
```

Only allow edits after Cline has produced a plan you understand. For larger jobs, ask it to edit specific files instead of the entire repository:

```
Plan a fix for the failing auth tests. Do not edit files yet.
```

Then:

```
Apply the smallest safe change only in src/auth/session.ts and tests/auth/session.test.ts.
```

Use V4 Pro for Cline when the task involves multiple files, unclear bugs, refactoring, or test failures. Use Flash for explanation, quick cleanup, and low-risk changes.

Method 4 — Use DeepSeek V4 with Kilo Code or Deep Code

Kilo Code and Deep Code are better when you want a terminal-first or agent-first workflow rather than a simple VS Code chat panel.

Kilo Code

DeepSeek’s official Kilo Code integration says Kilo Code is available as a CLI and editor extension. The documented setup is to install the CLI, run it inside your project, connect the DeepSeek provider, and select DeepSeek V4 Flash or DeepSeek V4 Pro from the model selector.

Install:

```bash
npm install -g @kilocode/cli
kilo --version
```

Run it inside a project:

```bash
cd /path/to/my-project
kilo
```

Then use:

```
/connect
```

Search for DeepSeek, enter your API key, then use:

```
/models
```

Choose DeepSeek V4 Pro for agentic coding or DeepSeek V4 Flash for faster tasks.

Deep Code

DeepSeek’s Deep Code page describes Deep Code as an open-source terminal AI coding assistant for DeepSeek V4 with deep thinking, reasoning effort control, and Agent Skills. It requires Node.js 18+ and uses @vegamo/deepcode-cli.

Install:

```bash
npm install -g @vegamo/deepcode-cli
deepcode --version
```

Create:

```
~/.deepcode/settings.json
```

Add:

```json
{
  "env": {
    "MODEL": "deepseek-v4-pro",
    "BASE_URL": "https://api.deepseek.com",
    "API_KEY": "sk-your-key-here"
  },
  "thinkingEnabled": true,
  "reasoningEffort": "max"
}
```

Then run:

```bash
cd /path/to/my-project
deepcode
```

DeepSeek’s docs note that the same settings file is shared with the Deep Code VSCode extension, which makes this method useful if you switch between terminal and editor workflows.

Which DeepSeek V4 Model Should You Use in VS Code?

| Task | Recommended model | Why |
|---|---|---|
| Tab autocomplete | V4 Flash | Faster and cheaper for frequent small completions |
| Quick code explanation | V4 Flash | Usually enough for straightforward understanding |
| Unit test generation | V4 Flash or Pro | Flash for simple tests, Pro for complex cases |
| Small bug fix | V4 Flash | Good for narrow, low-risk changes |
| Large refactor | V4 Pro | Better fit for planning and multi-step reasoning |
| Architecture planning | V4 Pro | Stronger reasoning and broader context use |
| Multi-file agent task | V4 Pro | Better for tool loops and project-level tasks |
| Cost-sensitive daily use | V4 Flash | Lower token cost |
| High-risk production change | V4 Pro, with human review | Use stronger reasoning, but still review every diff |

A practical rule: default to Flash, escalate to Pro when the task becomes ambiguous, multi-file, expensive to get wrong, or agentic.
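The rule can be written down as a tiny decision function. This is only a sketch of the heuristic in the table above; the flag names are hypothetical and no integration exposes them in this form.

```python
FLASH = "deepseek-v4-flash"
PRO = "deepseek-v4-pro"


def pick_model(multi_file=False, ambiguous=False, high_risk=False, agentic=False):
    """Default to Flash; escalate to Pro when any escalation signal is set."""
    if multi_file or ambiguous or high_risk or agentic:
        return PRO
    return FLASH


print(pick_model())                 # deepseek-v4-flash
print(pick_model(multi_file=True))  # deepseek-v4-pro
```

Keeping the decision explicit like this makes it easier to notice when a task that started as a quick edit has quietly turned into a multi-file change.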

Pricing and Cost Control

The extension may be free, but DeepSeek API calls are token-billed. DeepSeek’s pricing page says billing is based on input and output tokens, and it lists prices per 1M tokens. At the time checked, V4 Flash was listed at $0.0028 per 1M cache-hit input tokens, $0.14 per 1M cache-miss input tokens, and $0.28 per 1M output tokens. V4 Pro was listed with a temporary 75% discount through 2026-05-31 15:59 UTC: $0.003625 cache-hit input, $0.435 cache-miss input, and $0.87 output per 1M tokens.
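To get a feel for what those rates mean per task, the sketch below turns token counts into an estimated dollar cost. The prices are simply the snapshot quoted above (including the temporary Pro discount) and will go stale, so treat them as assumptions and check the pricing page; `estimate_cost` is a hypothetical helper.

```python
# USD per 1M tokens, copied from the snapshot quoted in this guide.
# Assumption: these figures change over time; verify before relying on them.
PRICES = {
    "deepseek-v4-flash": {"cache_hit": 0.0028, "cache_miss": 0.14, "output": 0.28},
    "deepseek-v4-pro": {"cache_hit": 0.003625, "cache_miss": 0.435, "output": 0.87},
}


def estimate_cost(model, cache_hit_tokens, cache_miss_tokens, output_tokens):
    """Return the estimated cost in USD for one request or one whole task."""
    price = PRICES[model]
    total = (
        cache_hit_tokens * price["cache_hit"]
        + cache_miss_tokens * price["cache_miss"]
        + output_tokens * price["output"]
    )
    return total / 1_000_000


# Example: 200K uncached input tokens and 20K output tokens on each model.
for name in PRICES:
    print(name, round(estimate_cost(name, 0, 200_000, 20_000), 4))
```

Running the same token counts through both models gives a quick sense of how much an escalation from Flash to Pro costs for a given task.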

Cost-control tips:

- Use Flash for routine chat and autocomplete.

- Use Pro only when the task requires stronger reasoning.

- Avoid asking tools to read the whole repository unless necessary.

- Start agent tasks with “inspect and plan only.”

- Limit output tokens when your extension supports it.

- Ask for diffs, not full rewritten files, when possible.

- Review every file before accepting changes.

Agent loops can become expensive because the tool may repeatedly send file contents, command outputs, errors, and revised prompts. Output tokens are often the most expensive part of a long refactor, especially when thinking mode is enabled.
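A small model of this effect, assuming the client resends the entire conversation on every turn and the history grows by a fixed amount each time, is sketched below. Both assumptions are simplifications, real clients may trim or cache context, and `loop_input_tokens` is a hypothetical helper.

```python
def loop_input_tokens(base_context, growth_per_turn, turns):
    """Total input tokens when every turn resends the whole growing history."""
    return sum(base_context + turn * growth_per_turn for turn in range(turns))


single = loop_input_tokens(10_000, 2_000, 1)
ten_turns = loop_input_tokens(10_000, 2_000, 10)
print(single, ten_turns)  # 10000 190000
```

Ten turns cost 19 times the input of one turn here, not 10 times, because each later turn carries everything that came before it. Cache hits soften the bill but do not change the shape of the growth.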

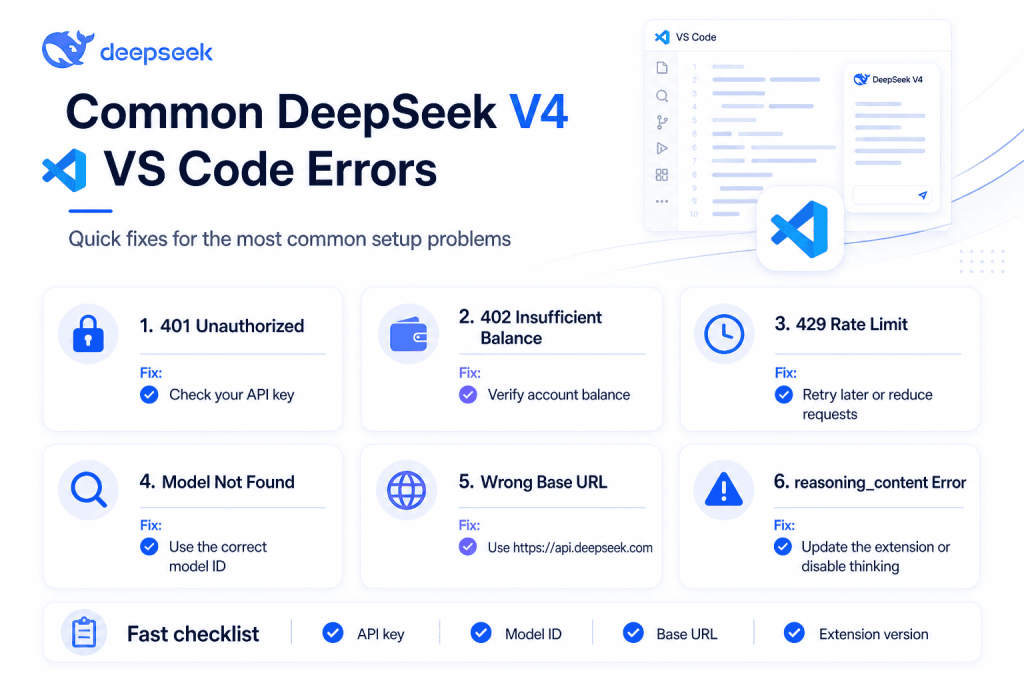

Common Errors and Fixes

| Error / Symptom | Likely cause | Fix |
|---|---|---|
| 401 Unauthorized / authentication failed | Wrong or missing API key | Recreate the key, paste it again, check DEEPSEEK_API_KEY |
| 402 or insufficient balance | No API balance | Top up or check account credits |
| 429 rate limit | Too many requests | Slow down, reduce autocomplete, retry later |
| Model not found | Wrong model ID | Use deepseek-v4-pro or deepseek-v4-flash |
| Wrong base URL | Used OpenAI URL or old proxy URL | Use https://api.deepseek.com for OpenAI format |
| Extension cannot find DeepSeek model | Extension/Copilot not updated | Update VS Code, Copilot Chat, and the provider extension |
| Autocomplete is expensive | Too many token calls | Use Flash, reduce context, disable autocomplete in large files |
| Context appears lower than 1M | Client-side cap | Adjust extension context settings if available |
| `reasoning_content must be passed back to the API` | Client drops DeepSeek thinking history | Update extension, use native DeepSeek V4 provider, disable thinking temporarily |
| Image upload does not work | DeepSeek V4 is text-only | Use Copilot vision proxy if your extension supports it |
| Copilot model picker does not show DeepSeek | Copilot/provider setup incomplete | Confirm Copilot is active and rerun the provider API key command |
| API key saved in settings.json | Unsafe manual setup | Rotate the key and move it to SecretStorage, environment variables, or keychain |

DeepSeek’s error-code page lists 401 for authentication failure, 402 for insufficient balance, 429 for rate limit, and 500/503 for server-side issues.
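For scripts that call the API directly, these codes can be mapped to next steps along the following lines. The status codes come from the list above; which of them to retry and the backoff schedule are assumptions, not DeepSeek recommendations, and all function names are hypothetical.

```python
# Assumption: rate limits and server-side issues are worth retrying; 401/402 are not.
RETRYABLE = {429, 500, 503}

ACTIONS = {
    401: "check or recreate the API key",
    402: "top up the account balance",
    429: "slow down and retry later",
    500: "server-side issue: retry after a short wait",
    503: "server-side issue: retry after a short wait",
}


def describe_status(status):
    """Return a human-readable next step for an HTTP status code."""
    return ACTIONS.get(status, "unexpected status: inspect the response body")


def should_retry(status):
    return status in RETRYABLE


def backoff_delays(attempts, base=1.0, cap=30.0):
    """Exponential backoff delays in seconds: base, 2*base, 4*base, ... up to cap."""
    return [min(cap, base * 2 ** i) for i in range(attempts)]


print(describe_status(402), should_retry(402))
print(backoff_delays(6))
```

Retrying a 401 or 402 only burns requests, which is why the sketch stops immediately on those and waits progressively longer on the others.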

The reasoning_content error is not usually an API-key problem. DeepSeek’s Copilot CLI docs say OpenAI-type integrations can trigger a 400 error when thinking-mode reasoning_content is not echoed back on later requests. A Marketplace DeepSeek V4 provider page describes the same issue with generic OpenAI-compatible bridges dropping reasoning_content in tools-enabled thinking conversations.
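If you maintain your own client code, the fix usually amounts to not discarding that field when you append the assistant reply to the message history. The sketch below shows the idea under the assumption that the assistant message arrives as a dict with optional `reasoning_content` and `tool_calls` keys; exactly when the API expects them back depends on DeepSeek's current thinking-mode rules, so confirm against the official docs. `append_assistant_turn` is a hypothetical helper.

```python
def append_assistant_turn(history, assistant_message):
    """Append an assistant reply to the history without dropping reasoning_content."""
    kept = {"role": "assistant", "content": assistant_message.get("content") or ""}
    # Assumption: these optional fields must be echoed back on the next request.
    for field in ("reasoning_content", "tool_calls"):
        if assistant_message.get(field):
            kept[field] = assistant_message[field]
    history.append(kept)
    return history


history = [{"role": "user", "content": "List the files in src/."}]
reply = {
    "role": "assistant",
    "content": None,
    "reasoning_content": "I should call the file listing tool.",
    "tool_calls": [{"id": "call_1", "type": "function",
                    "function": {"name": "list_files", "arguments": "{\"path\": \"src\"}"}}],
}
append_assistant_turn(history, reply)
print(sorted(history[-1].keys()))
```

A generic OpenAI-compatible bridge that copies only `role` and `content` would lose the other two fields at this step, which matches the failure described above.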

Security and Privacy Notes

Any API-based DeepSeek V4 setup sends your prompts, selected code context, and tool outputs to the configured model provider. Do not send private keys, production secrets, customer data, proprietary files, or regulated data unless your organization allows it.

Use .gitignore, secret scanning, environment variables, OS keychain storage, and extension secret storage. The Copilot Chat extension listing says its API key is stored in VS Code SecretStorage / OS keychain rather than settings.json; prefer that kind of storage whenever possible.
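As a lightweight complement to a real secret scanner, a pre-commit check along these lines can flag key-like strings before they reach a repository. The pattern is an assumption based on the `sk-` prefix used in this guide's placeholders; adjust it to the actual key format, and note that `find_key_like_strings` is a hypothetical helper.

```python
import re

# Assumption: keys look like "sk-" followed by a long alphanumeric run.
KEY_PATTERN = re.compile(r"sk-[A-Za-z0-9]{16,}")


def find_key_like_strings(text):
    """Return every substring that looks like an API key."""
    return KEY_PATTERN.findall(text)


sample = '{"env": {"API_KEY": "sk-' + "a1b2c3d4" * 4 + '"}}'
print(len(find_key_like_strings(sample)))                          # 1
print(find_key_like_strings('{"API_KEY": "sk-your-key-here"}'))    # []
```

Running it over files such as settings.json or `.env` before a commit catches the most common accident; it is not a substitute for rotating a key that has already leaked.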

If privacy is the top priority, consider local models. DeepSeek’s Hugging Face page says official DeepSeek V4 weights are available and includes local-running guidance, but local deployment is not the same experience as the hosted API unless you reproduce compatible serving, context, quantization, and hardware conditions.

Ollama also lists DeepSeek V4 Pro and Flash cloud entries, but the visible tags are deepseek-v4-pro:cloud and deepseek-v4-flash:cloud, so treat them as cloud-model routes rather than assuming an offline local model.

Best Workflow for Real Projects

Use this workflow for safer DeepSeek V4 VSCode development:

- Start with a read-only prompt.

- Ask for a plan before edits.

- Limit the scope to specific files.

- Let the agent edit only the files you name.

- Review the diff.

- Run tests locally.

- Ask the model to explain failures, not blindly retry.

- Commit manually.

- Use V4 Flash for daily work.

- Use V4 Pro for hard bugs, architecture, and multi-file changes.

A good first agent prompt is:

```
Inspect the project and create a plan to fix the failing tests. Do not edit files or run destructive commands.
```

A good second prompt is:

```
Apply only the smallest safe change. Edit only the files you named in the plan. After editing, summarize the diff.
```

FAQ

Can I use DeepSeek V4 in VS Code?

Yes. You can use DeepSeek V4 in VS Code through Copilot Chat integration, Continue, Cline, Kilo Code, Deep Code, or another OpenAI/Anthropic-compatible tool. The official model IDs are deepseek-v4-pro and deepseek-v4-flash.

What is the best way to use DeepSeek V4 in VSCode?

For most users, the best DeepSeek V4 VSCode method is the Copilot Chat integration because it adds DeepSeek V4 Pro and Flash into the Copilot Chat model picker while preserving agent mode and tool calling.

Is DeepSeek V4 free in VS Code?

The VS Code extension may be free, but DeepSeek API usage is generally token-billed. Always check the official pricing page before running large agent tasks.

What is the DeepSeek V4 API base URL?

For OpenAI-compatible tools, use https://api.deepseek.com. For Anthropic-compatible tools, DeepSeek documents https://api.deepseek.com/anthropic.

Should I use deepseek-v4-pro or deepseek-v4-flash?

Use deepseek-v4-flash for fast, lower-cost everyday work. Use deepseek-v4-pro for complex debugging, large refactors, architecture, and agentic coding.

Does DeepSeek V4 work with GitHub Copilot Chat?

Yes. DeepSeek’s official Copilot integration says the DeepSeek V4 for Copilot Chat extension adds V4 Pro and V4 Flash to the Copilot Chat model picker.

Can DeepSeek V4 edit files in VS Code?

Yes, when used through an agent-capable integration such as Copilot Chat agent mode, Cline, Continue Agent mode, Kilo Code, or Deep Code. Always review diffs before accepting changes.

Why am I getting the reasoning_content error?

This is usually a client compatibility issue with DeepSeek V4 thinking mode and multi-turn/tool-call history. Update the extension, use a native DeepSeek V4 provider if available, disable thinking temporarily, or switch to Flash/non-thinking for routine workflows.

Does DeepSeek V4 support images in VS Code?

DeepSeek V4 is text-only in the official Copilot integration notes. Some extensions can proxy images through another vision-capable Copilot model, but that is not native DeepSeek vision.

Are deepseek-chat and deepseek-reasoner still safe to use?

Use the new V4 IDs instead. DeepSeek says deepseek-chat and deepseek-reasoner are compatibility aliases and will be discontinued on 2026-07-24.

Conclusion

The best way to use DeepSeek V4 in VS Code depends on your workflow. Use DeepSeek V4 for Copilot Chat if you already work inside Copilot Chat and want the simplest setup. Use Continue for flexible chat, edit, and autocomplete. Use Cline, Kilo Code, or Deep Code when you want agentic workflows that inspect files, plan changes, and work across a project.

Default to deepseek-v4-flash for everyday coding and cost control. Switch to deepseek-v4-pro for complex reasoning, multi-file refactors, architecture planning, and high-risk changes. The core setup is simple: API key, compatible VS Code integration, base URL https://api.deepseek.com, and the right V4 model ID.