DeepSeek vs Claude is not a typical “which AI is better?” showdown. This is an unofficial technical comparison aimed at developers, technical founders, and AI engineers trying to decide which model fits their application architecture best.

We focus primarily on DeepSeek’s design philosophy and capabilities, using Claude as a contextual reference point. The goal is a structural, fact-based comparison that clarifies where DeepSeek may be a better fit, and where Claude might serve better — without hype or unsubstantiated claims.

In short, think of this as a guide for someone asking: “Should I integrate DeepSeek or Claude into my app, and why?” We’ll start by understanding what each model is designed for, then delve into key architectural differences, and finally outline scenarios favoring each.

(Note: chat-deep.ai is an independent community resource, not an official DeepSeek site.)

What DeepSeek Is Designed For

DeepSeek is an advanced open-weight large language model (LLM) family, purpose-built for transparency, strong reasoning, and developer flexibility. It originated as an open-source project and has rapidly evolved through versions (V3, R1, V3.1, V3.2), each pushing the envelope in reasoning capabilities and performance.

Open-Source and Self-Hostable

Perhaps the biggest distinguishing factor: DeepSeek’s model weights are openly available. The current flagship, DeepSeek V3.2 (released December 2025), is a 685-billion-parameter Mixture-of-Experts model with 37 billion active parameters per token, released under the MIT License.

This means developers can download and run DeepSeek on their own infrastructure with no reliance on a third-party service. Teams can deploy it on-premises or in their cloud, ensuring data stays within their environment for privacy or compliance needs.

DeepSeek is a strong choice if you require full control over deployment, need to work offline, or have sensitive data that cannot be sent to an external API, a common requirement under data protection regimes such as GDPR or HIPAA.

Reasoning-First Design with Transparent Chain-of-Thought

DeepSeek is engineered for complex reasoning tasks and provides a window into its thought process. The DeepSeek-R1 model family pioneered explicit chain-of-thought reasoning, and V3.2 has unified this capability into a single model.

In practice, developers can toggle reasoning on or off via a simple parameter. When enabled, the model generates step-by-step thoughts exposed through a dedicated reasoning_content field in the API response, alongside the final answer in the content field.

For example, when solving a math or coding problem, DeepSeek will explain how it got the answer rather than just outputting the result. This is invaluable for debugging complex queries, verifying accuracy, or building agent-like applications that require decision traces.
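As a minimal sketch of how an application might separate the two fields, the snippet below reads `reasoning_content` and `content` from a response shaped like an OpenAI-compatible chat completion. The helper name `split_reasoning` and the sample payload are illustrative assumptions (the payload is hand-written, not real model output), so check the current API reference for the exact response shape.

```python
from typing import Optional, Tuple


def split_reasoning(response: dict) -> Tuple[Optional[str], str]:
    """Return (reasoning, answer) from a chat-completion-style response dict."""
    message = response["choices"][0]["message"]
    # reasoning_content is expected to be missing (or None) when reasoning is off.
    reasoning = message.get("reasoning_content")
    answer = message.get("content", "")
    return reasoning, answer


# Hand-written sample payload for illustration only.
sample = {
    "choices": [
        {
            "message": {
                "role": "assistant",
                "reasoning_content": "17 * 3 = 51, then 51 + 4 = 55.",
                "content": "55",
            }
        }
    ]
}

reasoning, answer = split_reasoning(sample)
print("reasoning:", reasoning)
print("answer:", answer)
```

Keeping the two fields separate makes it easy to show only the answer to end users while storing the reasoning for debugging.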

DeepSeek V3.2 achieved gold-medal results on both the 2025 International Mathematical Olympiad (IMO) and International Olympiad in Informatics (IOI), demonstrating frontier-class reasoning capability.

Developer Flexibility and Customization

Being open-weight, DeepSeek offers a high degree of flexibility. You can integrate it via libraries like Hugging Face Transformers, deploy it behind an OpenAI-compatible API endpoint, or expose it through custom pipelines. Existing applications can call DeepSeek as if it were an OpenAI model, with minimal code changes. This means you can swap in DeepSeek for testing or integration with near-zero friction.
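To make the "minimal code changes" point concrete, here is a sketch that builds (but does not send) an OpenAI-style chat completion request using only the standard library. The helper `build_chat_request`, the local URL, the placeholder keys, and the model names are assumptions for illustration; the only thing that changes between targets is the base URL and model name.

```python
import json
from urllib import request


def build_chat_request(base_url: str, api_key: str, model: str, prompt: str) -> request.Request:
    """Build an OpenAI-style chat completion request without sending it."""
    body = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    return request.Request(
        url=base_url.rstrip("/") + "/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Assumed endpoints: a self-hosted server on localhost and a hosted API.
local = build_chat_request("http://localhost:8000/v1", "unused", "my-deepseek", "Hello")
hosted = build_chat_request("https://api.deepseek.com", "sk-placeholder", "deepseek-chat", "Hello")
print(local.full_url)
print(hosted.full_url)
```

Passing the resulting object to `urllib.request.urlopen` would send it; in practice most teams would use an existing OpenAI-compatible client and change only its base URL.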

Developers can fine-tune DeepSeek on domain-specific data since the model weights are accessible. If your application has specialized needs — medical vocabulary, legal terminology, a unique style or tone — you can adapt DeepSeek by training or prompt-tuning, leveraging the open ecosystem. This kind of customization is impossible with a fully closed model like Claude.

The open-source ecosystem is vibrant: community-created distilled versions (down to a few billion parameters), specialized variants for theorem proving and multimodal tasks, and optimized inference configurations all exist. Some teams have created domain-specific fine-tunes for industries like finance, healthcare, and law — sharing them on Hugging Face for the community. You are not just a user of a black-box API; you can become a co-creator by modifying how the model is used or even improving it.

Self-Hosting and Infrastructure Control

DeepSeek lets you own the whole stack. You choose the hardware, scale as needed, and optimize inference using GPU acceleration, quantization, or custom kernels. Tools like vLLM enable high-throughput serving, and one-click cloud deployment templates simplify setup.

By self-hosting, you avoid external rate limits or service outages and can integrate the model tightly with your architecture — bringing it physically closer to your data for lower latency. You can co-locate the model with your database, build custom caching layers for model responses, or deploy on edge devices for ultra-low-latency inference.
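A custom caching layer can be as simple as a dictionary keyed by a hash of the model name and prompt. The sketch below is one possible shape, not a recommended production design: `ResponseCache` and `fake_generate` are hypothetical names, and the stand-in generator would be replaced by a call to your own model server.

```python
import hashlib
import json
from typing import Callable, Dict


class ResponseCache:
    """In-memory cache keyed by a SHA-256 hash of (model, prompt)."""

    def __init__(self, generate: Callable[[str, str], str]):
        self._generate = generate
        self._store: Dict[str, str] = {}
        self.hits = 0
        self.misses = 0

    def _key(self, model: str, prompt: str) -> str:
        raw = json.dumps([model, prompt], ensure_ascii=False)
        return hashlib.sha256(raw.encode("utf-8")).hexdigest()

    def complete(self, model: str, prompt: str) -> str:
        key = self._key(model, prompt)
        if key in self._store:
            self.hits += 1
            return self._store[key]
        self.misses += 1
        result = self._generate(model, prompt)
        self._store[key] = result
        return result


def fake_generate(model: str, prompt: str) -> str:
    # Stand-in for a request to a self-hosted model server.
    return f"[{model}] echo: {prompt}"


cache = ResponseCache(fake_generate)
cache.complete("deepseek-local", "What is 2 + 2?")
cache.complete("deepseek-local", "What is 2 + 2?")
print(cache.hits, cache.misses)
```

Exact-match caching like this only pays off when identical prompts recur and sampling is configured so that a repeated answer is acceptable.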

Of course, with great power comes responsibility: running DeepSeek means you are responsible for maintaining uptime, applying updates (or choosing not to, if you prefer a stable version), and enforcing any usage policies or safety mitigations. But for many developers, that control is exactly the appeal.

The cost structure is different too: instead of paying per API call, you pay for compute infrastructure. DeepSeek’s official API is priced at $0.28 per million input tokens and $0.42 per million output tokens, with a 90% cache discount ($0.028/M for cached inputs). Self-hosting replaces these per-token fees with largely fixed infrastructure costs, which can be significantly more cost-efficient at scale. If you handle millions of requests, owning the model can save money in the long run, though it requires upfront investment and ML ops know-how. You can explore costs using the DeepSeek API Cost Calculator.
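The API prices quoted above can be turned into a rough monthly estimate. The following sketch uses those three prices as constants; the function name `api_cost_usd` and the example token volumes are assumptions chosen for illustration.

```python
# Prices quoted in the text, in USD per million tokens.
INPUT_PRICE = 0.28
CACHED_INPUT_PRICE = 0.028
OUTPUT_PRICE = 0.42


def api_cost_usd(input_tokens: int, output_tokens: int, cache_hit_ratio: float = 0.0) -> float:
    """Estimate API spend for a token volume and a fraction of cached input."""
    if not 0.0 <= cache_hit_ratio <= 1.0:
        raise ValueError("cache_hit_ratio must be between 0 and 1")
    cached = input_tokens * cache_hit_ratio
    uncached = input_tokens - cached
    total = (
        uncached * INPUT_PRICE
        + cached * CACHED_INPUT_PRICE
        + output_tokens * OUTPUT_PRICE
    )
    return total / 1_000_000


# Example volumes (assumed): 200M input tokens and 100M output tokens per month.
no_cache = api_cost_usd(200_000_000, 100_000_000)
half_cached = api_cost_usd(200_000_000, 100_000_000, cache_hit_ratio=0.5)
print(round(no_cache, 2), round(half_cached, 2))
```

With these example volumes the estimate is about $98 without caching and about $72.80 when half of the input is served from cache.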

In essence, DeepSeek is designed for openness, reasoning transparency, and flexible deployment. Whether you need to embed AI deeply into your platform, ensure every answer can be traced, or meet strict data-control requirements, DeepSeek was built with those scenarios in mind.

What Claude Is Designed For

Claude, developed by Anthropic, represents a different approach: a managed AI assistant platform built on proprietary large language models with a heavy emphasis on safety, alignment, and enterprise-grade tooling.

Claude Opus 4.6: The Current Flagship

Anthropic released Claude Opus 4.6 on February 5, 2026, followed by Claude Sonnet 4.6 on February 17, 2026. Opus 4.6 is Anthropic’s most advanced model, featuring:

- 1M token context window (in beta on the Claude Platform) — a 5x increase over previous models

- 128K max output tokens — enabling comprehensive, long-form responses

- Adaptive thinking — the model dynamically decides when and how much to reason based on query complexity

- Agent Teams — multiple Claude agents working together on complex tasks within Claude Code

- 65.4% on Terminal-Bench 2.0 — the highest score in the industry for agentic coding

- 90.2% on BigLaw Bench — leading legal reasoning performance

- 72.7% on OSWorld — best-in-class computer use capabilities

Opus 4.6 is priced at $5 per million input tokens and $25 per million output tokens, with up to 90% savings via prompt caching and 50% with batch processing. Sonnet 4.6 is the balanced option at $3/$15 per million tokens.

Managed Service, Not Self-Hosted

Claude is offered as a cloud-based AI service. Unlike DeepSeek, Claude models are fully closed-source and proprietary to Anthropic. You cannot download or run Claude on your own servers — the only way to use it is through API calls to Anthropic’s service or via applications that integrate that API.

The upside is convenience: no deployment needed, no model files to manage, no ML infrastructure to maintain. You get started by obtaining API access and can send prompts and receive completions immediately. Anthropic handles scaling, updates, and reliability behind the scenes.

This model fits well for teams who want quick integration or do not have the capacity to handle hosting a large model. It is beneficial for use cases where scaling up or down quickly is important — the provider handles scaling automatically. For startups in prototype or pilot phase, this convenience can be the difference between shipping in weeks versus months.

The trade-off is that you cede control over many aspects: you must abide by Anthropic’s usage policies, you are subject to their pricing and rate limits, and you rely on their uptime. If Anthropic has an outage or you hit a quota, your application is impacted. Claude is designed for those who prefer a fully-managed solution where it just works out of the box.

Alignment-First Approach

A hallmark of Claude’s design is Anthropic’s approach to AI safety. The company employs Constitutional AI, guiding the model with principles encoding helpfulness, honesty, and avoidance of harm. Anthropic has published Claude’s guiding constitution openly, which is unusual among AI providers and speaks to their transparency about the model’s intended behavior.

For enterprise users, this alignment focus is a major draw: Claude aims to be genuinely helpful, honest, and harmless in its responses, reducing the risk of problematic outputs. Claude’s character and behavior are carefully tuned through this alignment process — you can think of it as Anthropic having hardwired a certain philosophy and set of guardrails into the AI.

This is different from DeepSeek, which, while it has an RLHF-tuned chat model, does not impose a published moral framework. The alignment is baked into Claude and not directly adjustable by developers — which is a feature for risk-conscious organizations and a limitation for those wanting full behavioral control.

Enterprise Platform and Tooling

Claude has evolved beyond a simple chat API into a full enterprise platform. Key capabilities in the current ecosystem include:

- Claude Code — an agentic command-line tool for coding tasks, widely considered the leading AI coding assistant. In February 2026, 16 Claude Opus 4.6 agents wrote a complete C compiler in Rust from scratch.

- Claude for Excel — builds, updates, and analyzes spreadsheets directly inside Excel

- Claude for PowerPoint — creates and edits presentations (research preview)

- Cowork — a desktop tool where Claude can multitask autonomously on file and task management

- Computer Use — Claude can operate computers, navigate interfaces, complete forms, and move data across applications (72.7% on OSWorld)

- Data Residency Controls — US-only inference available at 1.1x pricing for sensitive workloads

Claude is available through Anthropic’s platform, Amazon Bedrock, Google Vertex AI, and Microsoft Foundry. Enterprise users benefit from SOC 2 and ISO certifications, SLA-backed reliability, and compliance documentation.

Reasoning: Adaptive Thinking

Claude Opus 4.6 introduced adaptive thinking, replacing the earlier extended thinking mode. The model dynamically decides when and how much to think based on contextual clues about query complexity. This represents a significant shift from the previous approach where developers had to manually set thinking budgets.

At the default effort level (high), Claude almost always thinks. At lower effort levels, it may skip thinking for simpler problems. A new “max” effort level provides the absolute highest capability. Developers can combine effort levels with adaptive thinking for optimal cost-quality tradeoffs — using lower effort for simple queries and higher effort for complex reasoning tasks.

Unlike DeepSeek’s transparent reasoning_content field, Claude’s thinking process is internal — developers see a “thinking” indicator but not the raw reasoning tokens. Claude may show an upfront plan of its thinking so you can adjust course mid-response, but the detailed chain-of-thought remains behind the scenes.

Multimodal Capabilities

Claude supports text and image input with text output. It can analyze and describe images, interpret charts and diagrams, understand screenshots, and process document images. However, it does not generate images and does not offer native audio or video processing. Claude’s computer use capability (72.7% on OSWorld) allows it to interact with software interfaces, navigate applications, complete forms, and move data between programs — a form of visual understanding applied to real-time interaction.

DeepSeek’s V3.2 is primarily text-focused, with separate research models (DeepSeek-VL2, Janus) targeting vision and image generation tasks. These operate alongside the main chat model rather than being integrated into a single multimodal pipeline. For most developer use cases, both are predominantly language-first systems — with Claude offering a more integrated vision-as-input path when image understanding is needed.

In summary, Claude is designed for ease-of-use, safety, enterprise tooling, and a managed experience. If DeepSeek is about giving developers the steering wheel, Claude is about providing a reliable AI chauffeur — you tell it where to go, and it handles the driving within Anthropic’s guardrails.

Architectural Differences

Let’s directly compare DeepSeek vs Claude across the key architectural dimensions that matter to developers.

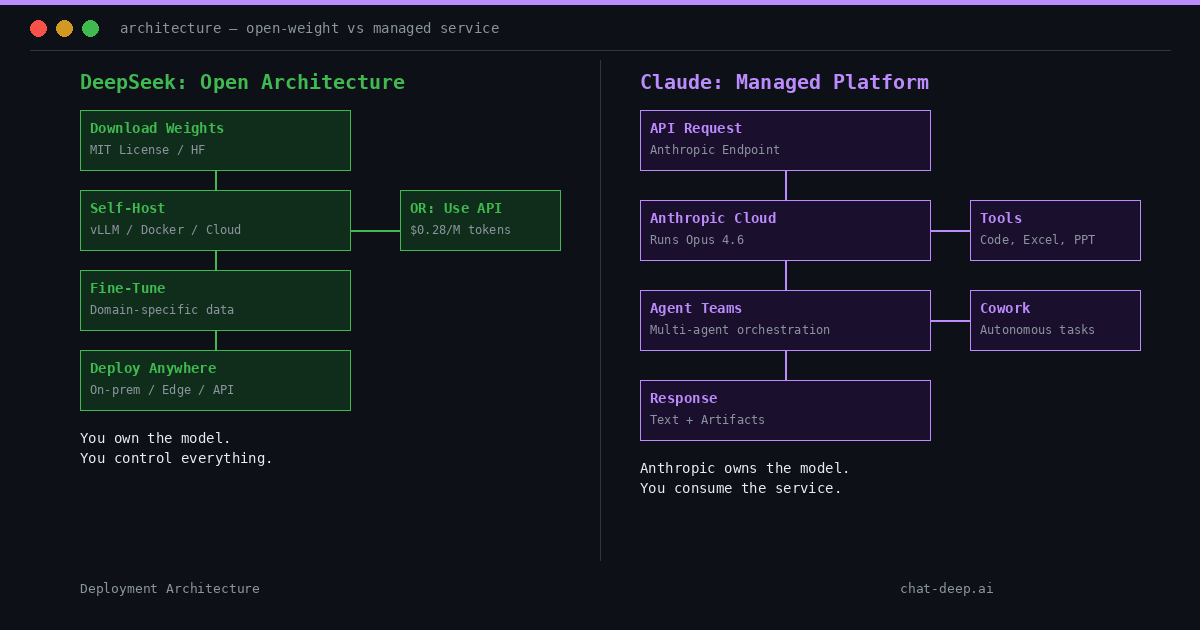

Deployment Model

DeepSeek: Self-hosted deployment. You obtain the model from Hugging Face and run it on your infrastructure — local servers, cloud instances, or specialized platforms. Deploy with Docker, vLLM, or custom pipelines. You manage scaling, availability, and the full lifecycle. Community guides and one-click deployment templates make setup increasingly accessible even for teams without deep ML ops experience.

Claude: Fully managed cloud service. Anthropic runs the model on their servers; you make API calls to their endpoint. No option to self-host. Deployment is tied to Anthropic’s cloud, with additional availability through Amazon Bedrock, Google Vertex AI, and Microsoft Foundry. Anthropic manages all infrastructure, scaling, model updates, and operational concerns on your behalf.

In short: DeepSeek = bring the model into your stack. Claude = call the model in Anthropic’s stack. If your application needs on-premises processing or a highly customized inference pipeline, Claude is not an option. If you want zero infrastructure overhead, DeepSeek’s self-hosted path requires more work.

API and Integration Structure

DeepSeek: Integration is very flexible. Self-hosted deployments can expose an OpenAI-compatible REST API, meaning you can swap out calls to OpenAI or Claude with calls to your DeepSeek service with minimal code changes. No external rate limits beyond what your hardware can handle. You can also use the official DeepSeek API for convenience, which follows a similar OpenAI-compatible format.

You are not limited by vendor-specific interfaces — you can integrate at the model level using libraries like Hugging Face Transformers, at the service level using community APIs, or through any custom pipeline you design. Self-hosting also means you set your own rate limits, token budgets, and access controls.

Claude: Accessed through Anthropic’s proprietary API. You must adhere to their format, rate limits, and token limits. Anthropic imposes per-plan quotas on requests per minute and maximum prompt sizes. Integration is straightforward (just call the cloud API), but you relinquish control — you cannot alter the API beyond what Anthropic allows.

You must also handle network latency and external service reliability. If Anthropic has an outage or you hit a quota, your application is impacted. Claude’s API does offer features like prompt caching and batch processing for cost optimization, but these operate within Anthropic’s framework.
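One common way to soften quota errors and brief outages with any hosted API is to retry with exponential backoff and jitter. The sketch below is generic: `TransientAPIError` is a hypothetical stand-in for whatever rate-limit or server error your client library raises, and the delays are arbitrary defaults.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class TransientAPIError(Exception):
    """Hypothetical stand-in for a rate-limit or temporary outage error."""


def call_with_backoff(
    fn: Callable[[], T],
    max_attempts: int = 5,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call fn, retrying on TransientAPIError with exponential backoff and jitter."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return fn()
        except TransientAPIError:
            if attempt == max_attempts:
                raise
            # Delay doubles each attempt; jitter spreads out concurrent retries.
            delay = base_delay * (2 ** (attempt - 1)) * (1 + random.random())
            sleep(delay)
    raise AssertionError("unreachable")


attempts = {"n": 0}


def flaky() -> str:
    # Fails twice, then succeeds, to simulate a short-lived quota error.
    attempts["n"] += 1
    if attempts["n"] < 3:
        raise TransientAPIError("simulated rate limit")
    return "ok"


result = call_with_backoff(flaky, sleep=lambda seconds: None)
print(result, attempts["n"])
```

The `sleep` parameter is injected so the demo runs instantly; production code would keep the default `time.sleep`.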

Developers can also point Claude Code at DeepSeek through DeepSeek’s Anthropic-compatible API layer, keeping the Claude Code workflow while benefiting from DeepSeek’s pricing and open deployment flexibility.

Reasoning Handling: Transparency vs Adaptive

DeepSeek: Explicitly exposes reasoning. In reasoning mode, the model outputs a chain-of-thought alongside the final answer via a structured reasoning_content field. Developers can see intermediate steps, verify logic, and build audit trails. The reasoning might include identifying the problem type, selecting an approach, working through intermediate calculations, catching and correcting errors, and arriving at the final answer. You choose whether to expose reasoning or suppress it.

Claude: Uses adaptive thinking (introduced with Opus 4.6). The model dynamically decides how much to reason based on query complexity. The thinking process is internal — you see a “thinking” indicator and can control the effort level (low, medium, high, max), but the raw reasoning tokens are not exposed in the same structured way as DeepSeek’s reasoning_content. Claude’s thinking can be extended significantly for hard tasks without timing out, and the model does a better job keeping track of what it has already done across long reasoning chains.

The bottom line: DeepSeek offers built-in reasoning transparency for audit and debugging. Claude prioritizes polished answers with configurable but opaque reasoning depth. If your architecture benefits from capturing the AI’s decision path — for audit trails, debugging, regulatory compliance, or feeding reasoning steps into another process — DeepSeek has the advantage. If you prefer the AI to reason quietly and deliver a refined answer, Claude’s adaptive approach suits that.

Context Window and Output Capacity

DeepSeek V3.2: 128K token context window with DeepSeek Sparse Attention (DSA) for efficient long-context handling. This is roughly equivalent to 80,000 words or hundreds of pages of text — sufficient for large codebases, lengthy conversations, and most document analysis tasks.

Claude Opus 4.6: 1M token context window (in beta on the Claude Platform), roughly 8x larger than DeepSeek’s. Plus 128K max output tokens — far more than most models offer. This enables processing of entire books, massive codebases, or very long multi-step workflows in a single request. Claude’s context pricing is flat at $5/M input tokens regardless of prompt length.

For most applications, DeepSeek’s 128K context is more than sufficient. Claude’s 1M context is decisive for niche use cases requiring extreme input length, such as legal e-discovery, full-repository code analysis, comprehensive document review, or maintaining very long conversation histories without truncation.
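A quick pre-flight check can tell you whether a document is likely to fit a given window. The sketch below is a rough heuristic only: the four-characters-per-token ratio is an assumption that varies by tokenizer and language, the dictionary keys are descriptive labels rather than API model IDs, and the limits are the round figures quoted above.

```python
from typing import List

# Context limits as quoted in the text; keys are labels, not API model IDs.
CONTEXT_LIMITS = {
    "deepseek-v3.2": 128_000,
    "claude-opus-4.6": 1_000_000,
}


def estimate_tokens(text: str, chars_per_token: float = 4.0) -> int:
    """Very rough token estimate; real counts depend on the tokenizer."""
    return int(len(text) / chars_per_token) + 1


def models_that_fit(text: str, reserved_output_tokens: int = 8_000) -> List[str]:
    """Return labels of models whose window covers the prompt plus reserved output."""
    needed = estimate_tokens(text) + reserved_output_tokens
    return [name for name, limit in CONTEXT_LIMITS.items() if needed <= limit]


short_doc = "word " * 1_000        # about 5,000 characters
long_doc = "x" * 1_000_000         # about 250,000 estimated tokens
print(models_that_fit(short_doc))
print(models_that_fit(long_doc))
```

For anything close to a limit, count tokens with the provider's actual tokenizer instead of relying on a character ratio.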

Model Availability and Versioning

DeepSeek: Open model availability. You choose which version to use, run multiple versions simultaneously, or modify them. Community distilled versions and specialized fine-tunes are available on Hugging Face. If the project ever ceased updates, the weights would remain available for the community to carry forward. You can inspect the model architecture, examine training details from published technical reports, and contribute to an open research community.

Claude: Model availability is entirely at Anthropic’s discretion. You select from available model IDs (e.g., claude-opus-4-6, claude-sonnet-4-6). Anthropic controls the full lifecycle — older models are deprecated and retired on Anthropic’s schedule. As of early 2026, Claude Sonnet 3.5, Sonnet 3.7, and Haiku 3.5 have been retired, with Haiku 3 retirement scheduled for April 2026. You cannot fork or maintain older versions yourself.

This difference affects long-term strategy. With DeepSeek, you have full autonomy over version management. With Claude, you are tied to Anthropic’s roadmap. Anthropic continuously enhances Claude, but you have less control over when and how changes affect your application. From a licensing perspective, DeepSeek uses the MIT License (no per-use fees), while Claude requires agreeing to terms of service with ongoing usage fees.

Governance and Control

DeepSeek: Control rests with you. You decide which prompts to allow, whether to filter outputs, and how to respond to misuse. You can integrate your own moderation system and control the update schedule. An open model will faithfully follow your prompts to the best of its ability, even if that means producing unfiltered content — unless you implement checks. The flexibility is high, but you shoulder full responsibility for the model’s outputs.

Claude: Anthropic enforces governance policies at the model and service level. Claude is trained to refuse outputs violating its ethical guidelines, and Anthropic’s acceptable use policy forbids certain uses. For enterprise users, this is reassuring: built-in guardrails reduce the risk of problematic outputs. Any improvements to safety — better filters, reduced hallucination, patched jailbreaking methods — are handled by Anthropic’s updates automatically.

The downside is a lack of customization in governance. You cannot override Claude’s refusals or fine-tune its policy. If Claude decides a query violates its guidelines and refuses, your recourse is limited to rephrasing the query or asking Anthropic for a policy change. With DeepSeek, you can choose a different checkpoint or apply your own prompt techniques to adjust behavior.

DeepSeek offers full freedom and demands responsibility. Claude offers a safety net and imposes constraints. It is worth noting that self-hosting an open model shifts the full burden of compliance and liability to you — you are accountable for ensuring the model is used legally and ethically. Using Claude does not remove that responsibility, but the provider has taken steps to mitigate obvious issues.
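To illustrate what "integrate your own moderation system" can mean at its simplest, the sketch below wraps a generation function with a pattern check on both the prompt and the output. Everything here is hypothetical: `ModeratedModel`, the refusal text, and the regex blocklist are placeholders, and a real deployment would likely use a dedicated classifier rather than keyword patterns.

```python
import re
from typing import Callable, Iterable

REFUSAL = "Request declined by local policy."


class ModeratedModel:
    """Wrap a generate function with prompt and output checks."""

    def __init__(self, generate: Callable[[str], str], blocked_patterns: Iterable[str]):
        self._generate = generate
        self._patterns = [re.compile(p, re.IGNORECASE) for p in blocked_patterns]

    def _violates(self, text: str) -> bool:
        return any(p.search(text) for p in self._patterns)

    def complete(self, prompt: str) -> str:
        if self._violates(prompt):
            return REFUSAL
        output = self._generate(prompt)
        # Check the output too, since a harmless prompt can yield blocked text.
        if self._violates(output):
            return REFUSAL
        return output


def fake_generate(prompt: str) -> str:
    # Stand-in for a call to a self-hosted model.
    return f"Echo: {prompt}"


model = ModeratedModel(fake_generate, blocked_patterns=[r"\bssn\b", r"\bpassword\b"])
print(model.complete("Summarize this meeting"))
print(model.complete("What is the admin password?"))
```

Because the policy lives in your own code, you decide what is blocked, logged, or escalated, which is the trade-off this section describes.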

Pricing Comparison

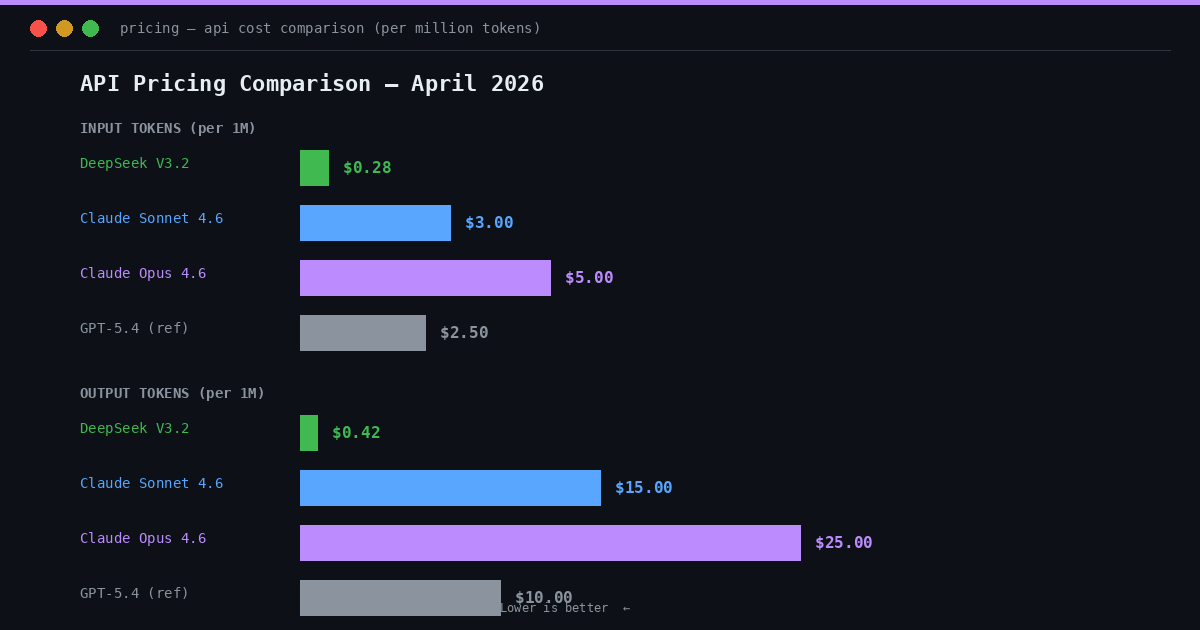

The pricing difference between DeepSeek and Claude is one of the most striking contrasts in this comparison:

| Model | Input (per M tokens) | Output (per M tokens) | Notes |
|---|---|---|---|
| DeepSeek V3.2 | $0.28 | $0.42 | Cached input: $0.028 (90% off). Self-hosting: no per-token fee (infrastructure costs apply). |
| Claude Sonnet 4.6 | $3.00 | $15.00 | Balanced speed/intelligence |
| Claude Opus 4.6 | $5.00 | $25.00 | Up to 90% off with caching, 50% with batch |

DeepSeek is 18x cheaper on input and 60x cheaper on output compared to Claude Opus 4.6. Even against the more affordable Sonnet 4.6, DeepSeek is 11x cheaper on input and 36x cheaper on output.
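The multiples quoted here follow directly from the list prices. As a quick check, the sketch below divides each Claude price by the corresponding DeepSeek price; the helper name `price_ratio` is an assumption, and the prices are the ones in the pricing table.

```python
from typing import Tuple

# (input, output) list prices in USD per million tokens, from the pricing table.
PRICES = {
    "DeepSeek V3.2": (0.28, 0.42),
    "Claude Sonnet 4.6": (3.00, 15.00),
    "Claude Opus 4.6": (5.00, 25.00),
}


def price_ratio(expensive: str, cheap: str = "DeepSeek V3.2") -> Tuple[float, float]:
    """Return (input_ratio, output_ratio) of one model's prices over another's."""
    e_in, e_out = PRICES[expensive]
    c_in, c_out = PRICES[cheap]
    return e_in / c_in, e_out / c_out


for name in ("Claude Sonnet 4.6", "Claude Opus 4.6"):
    r_in, r_out = price_ratio(name)
    print(f"{name}: {r_in:.1f}x input, {r_out:.1f}x output")
```

The unrounded ratios are roughly 10.7x and 35.7x for Sonnet 4.6 and 17.9x and 59.5x for Opus 4.6, which round to the figures in the text. These compare list prices only and ignore caching and batch discounts on either side.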

For high-volume applications, this difference is transformative. A workload that spends $25,000/month on Claude Opus output tokens (1 billion tokens at $25/M) would cost approximately $420/month on DeepSeek’s API at $0.42/M, and potentially less with self-hosting.

Claude does offer cost optimization features: prompt caching can reduce costs by up to 90%, and batch processing offers 50% savings. But even with these optimizations, DeepSeek’s base pricing remains substantially lower. The DeepSeek pricing page has full details, and you can model your specific workload using the API Cost Calculator.

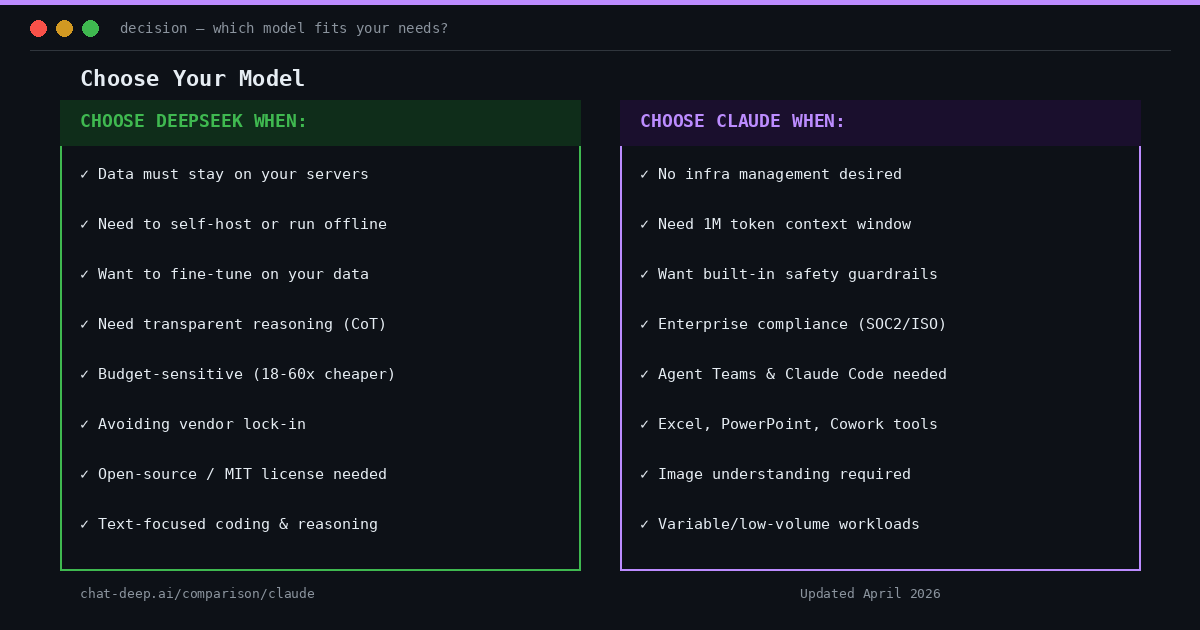

When DeepSeek Is Preferable

DeepSeek shines in several scenarios due to its open, flexible nature:

Self-Hosting or On-Premises Requirements: If your organization requires AI models to run in-house, DeepSeek is the clear choice. Working with confidential enterprise data — health records, financial data, proprietary code — where regulations prohibit sending data to external services? DeepSeek deploys within your secure environment. Data never leaves your servers. You can even run it on isolated networks with no internet access. This is crucial for industries like banking, defense, and healthcare that demand complete control over their IT stack. Claude, being an external service, simply cannot be used in these fully offline or on-prem situations.

Transparent Reasoning and Auditing: For applications where understanding how the AI arrived at an answer matters — medicine, law, scientific research, financial modeling — DeepSeek’s reasoning_content field is ideal. Developers can trace the model’s logic step by step, verify correctness, and build audit trails for compliance. Claude’s adaptive thinking does not expose reasoning at the same granularity.
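One way to turn exposed reasoning into an audit trail is to store each prompt, reasoning trace, and answer together with a content hash. The sketch below is an assumption about how such a record might look, with hypothetical function names and a made-up model label and example; it is not a compliance-grade design.

```python
import hashlib
import json
from datetime import datetime, timezone


def _digest(body: dict) -> str:
    payload = json.dumps(body, sort_keys=True, ensure_ascii=False)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def audit_record(prompt: str, reasoning: str, answer: str, model: str) -> dict:
    """Build an audit entry with a SHA-256 digest over its own fields."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model": model,
        "prompt": prompt,
        "reasoning": reasoning,
        "answer": answer,
    }
    record["sha256"] = _digest(record)
    return record


def verify(record: dict) -> bool:
    """Recompute the digest and compare it with the stored one."""
    body = {k: v for k, v in record.items() if k != "sha256"}
    return _digest(body) == record.get("sha256")


entry = audit_record(
    prompt="Is 91 prime?",
    reasoning="91 = 7 * 13, so it has divisors other than 1 and itself.",
    answer="No, 91 is not prime.",
    model="deepseek-local",  # hypothetical deployment label
)
print(json.dumps(entry, indent=2))
print(verify(entry))
```

A digest stored inside the record only detects accidental corruption; protecting against deliberate tampering would need signing or an append-only store.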

Full Control over Model Behavior: DeepSeek lets you fine-tune with your own data, apply custom moderation, adjust responses via prompt engineering, and control when to upgrade versions. Nothing changes unless you change it. You can stick with a known stable version of DeepSeek for as long as you wish, or test a new version before switching.

Claude’s updates are managed by Anthropic — you might not know when the model’s internals change beyond version announcements. Teams building long-term products might prefer DeepSeek to avoid surprises. Also, if your infrastructure requires tight integration — custom caching layers for model responses, co-locating the model on edge devices, or specialized inference pipelines — DeepSeek allows that flexibility. When you want the AI to be a component you fully own and can tweak, DeepSeek wins out.

Cost Efficiency at Scale: At 18-60x cheaper than Claude Opus, DeepSeek’s cost advantage is dramatic. Self-hosting eliminates per-token fees entirely after the infrastructure investment. For applications processing thousands of completions per minute, owning the model can save costs by orders of magnitude compared to per-request API billing.

Consider a concrete example: an application generating 100 million output tokens per month. On Claude Opus 4.6, that costs $2,500/month. On DeepSeek’s API, it costs $42/month. Self-hosted, it costs only the operational expense of your GPU infrastructure. For startups or projects operating at scale with tight budgets, DeepSeek offers a path to growth without proportional cost increase.

Open Ecosystem and No Vendor Lock-in: With DeepSeek’s MIT License, you own the model indefinitely. Changes in vendor pricing, service shutdowns, or policy changes do not affect your deployment. The vibrant open-source community provides distilled versions, specialized fine-tunes, and community tools that are not gated by a company’s decisions.

Some developers and organizations have a philosophical or strategic preference for open-source solutions. You can see discussion of model development, access independent evaluations, and know that you are not dependent on a single vendor. Choosing DeepSeek means joining an ecosystem of open AI development where community contributions, plugins, and integrations benefit everyone.

There is also the advantage of building internal expertise. Your team gets to learn how to work with the model intimately — understanding its strengths, weaknesses, and optimization opportunities — which gives you a competitive edge compared to treating the AI as a distant, opaque service.

Custom Integration Patterns: DeepSeek’s OpenAI-compatible API means you can swap it into existing pipelines with minimal code changes. You can co-locate the model on edge devices, build custom caching layers, or create highly specialized inference pipelines — flexibility that a closed API cannot offer.

In essence, choose DeepSeek when control, transparency, cost efficiency, and flexibility are your top priorities.

Once you’ve decided that DeepSeek’s open deployment model fits your stack better than Claude, the practical question becomes which DeepSeek family to deploy. Use our DeepSeek model comparison hub to choose between V3.2, R1, Coder, Math, VL, and other options based on reasoning depth, specialization, and deployment needs.

When Claude May Be Preferable

Claude, as a managed platform, comes with its own advantages:

No Infrastructure or ML Ops Overhead: For lean startups or small teams, spinning up a cluster for a model with hundreds of billions of parameters is a heavy lift. Claude abstracts all that away: you start in minutes by calling an API. Focus on building features while Anthropic handles model performance, scaling, and uptime.

For many applications, especially those in prototype or pilot phase, this convenience is key. You can iterate on your product without being slowed down by infrastructure tasks. As one guide on AI strategy noted, going with a cloud API is ideal when you have limited ML infrastructure expertise or need rapid iteration and experimentation. If your project needs to demonstrate value quickly — say, in an enterprise proof-of-concept — Claude gets you there without the DevOps overhead that DeepSeek would entail.

Cutting-Edge Model Quality and Features: Claude Opus 4.6 holds the highest score on Terminal-Bench 2.0 for agentic coding (65.4%) and leads on Humanity’s Last Exam for complex multidisciplinary reasoning. Its 1M token context window, agent teams, and enterprise tools (Excel, PowerPoint, Cowork) represent capabilities that would require significant engineering effort to replicate with any open model.

Anthropic’s full-time job is keeping Claude at the cutting edge. They continuously train and fine-tune the model on massive data and human feedback, meaning you benefit from improvements immediately through the API. If having the absolute best model performance from day one is crucial — in terms of natural language understanding, creativity, coding ability — Claude provides that edge without you lifting a finger.

Moreover, because Claude is delivered as a managed service, Anthropic can roll out model improvements and new versions without requiring you to deploy new weights or upgrade infrastructure. The transition from Opus 4.5 to Opus 4.6, for example, brought a 190-point Elo improvement on economically valuable knowledge work tasks — and API users gained access immediately.

Enterprise Compliance and Support: Enterprises need SLAs, support contracts, and compliance certifications. Anthropic offers SOC 2 and ISO certifications, documentation on data handling, data residency controls (US-only inference at 1.1x pricing), and enterprise support. If your organization requires a vendor partner with formal accountability, Claude meets those requirements.

If something goes wrong — the model outputs something harmful, or there is a service disruption — you have a vendor to contact for support. This level of accountability is appealing for large companies. By contrast, self-hosting DeepSeek means your team handles all operational issues without external vendor support. Claude’s alignment and guardrails also help with enterprise compliance out-of-the-box, reducing the risk of the AI producing unethical or defamatory responses.

Real-world enterprise adoption is substantial. Norway’s $2.2 trillion sovereign wealth fund began using Claude to screen its portfolio for ESG risks in February 2026. During a two-week scan, Claude found over 100 bugs in the Mozilla Firefox web browser, 14 of which were considered high severity. These examples demonstrate Claude’s capability for high-stakes enterprise work.

Built-in Safety and Content Moderation: If your application serves a broad user base, Claude’s Constitutional AI guardrails provide baseline content moderation without building your own pipeline. Claude will refuse or sanitize problematic responses out of the box — including extreme violence, hate speech, self-harm instructions, and other harmful content according to Anthropic’s policies.

For public-facing chatbots where safety matters, this accelerates development significantly. You are leveraging Anthropic’s extensive work on keeping outputs appropriate, rather than building and maintaining your own AI safety layer. With DeepSeek, you would need to implement your own moderation system to filter potentially problematic outputs — which requires additional engineering effort and ongoing maintenance.

Variable Workloads and Experimentation: Not every project has steady high-volume usage. Claude’s pay-per-use model is more cost-effective for low or spiky workloads than maintaining GPU servers 24/7. A small web app seeing a few hundred queries per day runs cheaply on Claude, whereas setting up even one GPU server for DeepSeek would be overkill.

Similarly, if your usage might spike unpredictably (say your app goes viral temporarily), Claude’s cloud can automatically handle that scale. With DeepSeek, you would have to anticipate and provision hardware for peak load. For startups in discovery phase or products with uncertain usage patterns, Claude reduces financial risk. You can test and iterate without sunk infrastructure costs — if the AI feature does not pan out, you have not invested in hardware.
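The trade-off can be estimated with simple arithmetic. The sketch below uses the Claude Opus 4.6 list prices quoted in this article ($5.00 input and $25.00 output per million tokens). The request size (1,000 input and 500 output tokens), the traffic level (300 requests per day), and the $2,000 monthly GPU server cost are all hypothetical assumptions; substitute your own figures.

```python
# Claude Opus 4.6 list prices quoted in this article (USD per million tokens).
INPUT_PRICE = 5.00
OUTPUT_PRICE = 25.00


def cost_per_request(input_tokens: int, output_tokens: int) -> float:
    """Pay-per-use cost of one request in USD."""
    return (input_tokens * INPUT_PRICE + output_tokens * OUTPUT_PRICE) / 1_000_000


def break_even_requests(fixed_monthly_cost: float, per_request_cost: float) -> float:
    """Monthly request volume at which a fixed-cost server matches API spend."""
    return fixed_monthly_cost / per_request_cost


# Assumed workload: 1,000 input + 500 output tokens, 300 requests/day, 30 days.
per_request = cost_per_request(1_000, 500)
api_monthly = 300 * 30 * per_request

# Assumed (hypothetical) fixed cost of one always-on GPU server: $2,000/month.
threshold = break_even_requests(2_000.0, per_request)

print(f"API cost at 300 requests/day: ${api_monthly:,.2f}/month")
print(f"Break-even volume: {threshold:,.0f} requests/month")
```

Under these assumptions the API bill is about $157 per month, and the fixed server only pays for itself above roughly 114,000 requests per month. The estimate ignores engineering time and redundancy, both of which would push the break-even point higher.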

Agentic Workflows and Claude Code: Claude Code is widely considered the leading AI coding assistant as of early 2026. It allows developers to delegate coding tasks directly from their terminal, with support for VS Code, JetBrains, and web interfaces. Agent teams allow multiple Claude instances to work in parallel on complex tasks.

In a notable demonstration, 16 Opus 4.6 agents wrote a complete C compiler in Rust from scratch at a cost of approximately $20,000, reportedly the first time a model has accomplished this. Opus 4.6 has also been demonstrated autonomously closing 13 GitHub issues and assigning 12 issues to the right team members in a single day, managing a ~50-person organization across 6 repositories.

If your workflow depends on sophisticated agent orchestration — multi-step coding tasks, repository-wide refactoring, automated code review — Claude’s ecosystem is significantly more mature than what is currently available with DeepSeek. DeepSeek’s function calling provides the building blocks, but the orchestration layer must be built by the developer.
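As a rough illustration of what "building the orchestration layer" involves, the sketch below shows a bare tool-dispatch loop. It assumes the model returns OpenAI-style chat messages, where a reply may carry a `tool_calls` list with JSON-encoded arguments. The `call_model` callable, the `TOOLS` registry, and the `add` tool are hypothetical names introduced for this example, not part of any DeepSeek SDK.

```python
import json
from typing import Any, Callable, Dict, List

Message = Dict[str, Any]

# Hypothetical tool registry: maps tool names to plain Python callables.
TOOLS: Dict[str, Callable[..., Any]] = {
    "add": lambda a, b: a + b,
}


def run_agent(
    call_model: Callable[[List[Message]], Message],
    messages: List[Message],
    max_steps: int = 5,
) -> str:
    """Alternate between model calls and tool execution until a final answer."""
    for _ in range(max_steps):
        reply = call_model(messages)
        messages.append(reply)
        tool_calls = reply.get("tool_calls") or []
        if not tool_calls:
            # No tool requested: treat the content as the final answer.
            return reply["content"]
        for call in tool_calls:
            function = call["function"]
            tool = TOOLS[function["name"]]
            arguments = json.loads(function["arguments"])
            result = tool(**arguments)
            # Feed the tool result back so the next model call can use it.
            messages.append(
                {
                    "role": "tool",
                    "tool_call_id": call["id"],
                    "content": json.dumps(result),
                }
            )
    raise RuntimeError("agent did not finish within max_steps")
```

In practice `call_model` would wrap a request to your DeepSeek endpoint. Retries, argument validation, parallel agents, and human approval steps are the parts that managed agent products bundle and that you would add yourself here.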

In essence, choose Claude when you value managed convenience, enterprise-grade tooling, cutting-edge agentic capabilities, and built-in safety — and are willing to trade control and pay premium pricing for those benefits.

Comparison Summary

| Aspect | DeepSeek (V3.2) | Claude (Opus 4.6) |
|---|---|---|
| Deployment | Self-hosted or cloud API | Managed cloud service only |
| Model Weights | Open-weight (MIT License) | Proprietary (closed) |
| Parameters | 685B total / 37B active (MoE) | Not publicly disclosed |
| Context Window | 128K tokens | 1M tokens (beta) |
| Max Output | 8K tokens | 128K tokens |
| Reasoning | Transparent CoT via reasoning_content | Adaptive thinking (internal, configurable effort) |
| Agent Capabilities | Function calling (developer-mediated) | Agent Teams, Claude Code, Cowork, Computer Use |
| Enterprise Tools | None (build your own) | Excel, PowerPoint, Cowork, Chrome extension |
| Multimodal | Text-focused (separate VL models) | Text + image input, text output |
| Input Price (per M tokens) | $0.28 | $5.00 |
| Output Price (per M tokens) | $0.42 | $25.00 |
| Fine-Tuning | Full fine-tuning supported | Not available |
| Safety Guardrails | User-implemented | Built-in Constitutional AI |
| Vendor Lock-in | None | High (proprietary, cloud-dependent) |

Conclusion

Both DeepSeek and Claude are powerful AI models, but they cater to fundamentally different philosophies and needs.

DeepSeek, with its open-source roots, gives developers the keys to the kingdom. You get transparency, control, and the ability to shape the model’s deployment and behavior to your liking. V3.2’s unified reasoning, gold-medal competition results, and pricing at a fraction of competitors’ rates make it compelling for teams that value autonomy. In return, you take on more responsibility for operating the model.

Claude, offered as a managed platform by Anthropic, provides a ready-to-use intelligent assistant with enterprise-grade tooling. Opus 4.6’s 1M context, agent teams, adaptive thinking, and industry-leading agentic coding performance represent the cutting edge of managed AI services. It abstracts away technical complexity and emphasizes safety and consistency — at the cost of control and significantly higher pricing.

This comparison is not about declaring an absolute “winner.” Many organizations find uses for both approaches in different contexts. You might use Claude for agentic coding workflows where its agent teams and Claude Code excel, while using DeepSeek on-prem for privacy-sensitive data processing where cost and control matter most.

The decision ultimately comes down to your specific requirements: Do you prioritize independence, flexibility, and cost efficiency (DeepSeek)? Or convenience, enterprise tooling, and cutting-edge managed capabilities (Claude)?

From an architectural standpoint, DeepSeek aligns with scenarios of infrastructure control, reasoning transparency, and open innovation. Claude aligns with managed delivery, strong alignment, and enterprise-grade tooling. Neither model is superior in every respect; each is simply the better fit for different use cases.

As the AI landscape evolves rapidly in 2026, both platforms continue advancing. DeepSeek’s upcoming models may narrow Claude’s advantages in context length and multimodal capabilities, while Claude’s agentic features and enterprise integrations continue to expand. Developers who understand the structural differences between these approaches will be best positioned to choose — and adapt — as these platforms evolve.

For more on DeepSeek’s capabilities, explore our API documentation, chat interface, and model guides.

Frequently Asked Questions

Is DeepSeek better than Claude?

Neither is universally “better.” DeepSeek excels in cost efficiency (roughly 18x to 60x cheaper per token than Claude Opus 4.6 at API list prices), self-hosting capability, reasoning transparency, and full customization. Claude excels in managed convenience, enterprise tooling (agent teams, Excel, PowerPoint), safety guardrails, and agentic coding performance. The best choice depends on your specific requirements.

Can I self-host Claude?

No. Claude is a proprietary, closed-source model available only through Anthropic’s cloud API and partner platforms (Amazon Bedrock, Google Vertex AI, Microsoft Foundry). DeepSeek is the self-hostable option in this comparison, with full model weights available under the MIT License.

How much cheaper is DeepSeek than Claude?

DeepSeek V3.2 is approximately 18x cheaper on input tokens ($0.28 vs $5.00 per million) and 60x cheaper on output tokens ($0.42 vs $25.00 per million) compared to Claude Opus 4.6. Against Claude Sonnet 4.6, DeepSeek is 11x cheaper on input and 36x cheaper on output. Self-hosting DeepSeek removes per-token API fees, but you then pay for GPU hardware and operations instead.
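For a quick sanity check on these ratios, the sketch below recomputes them from the list prices quoted in this article and estimates the bill for a sample workload. The workload of 100 million input and 20 million output tokens per month is an assumed figure for illustration, and the dictionary keys are labels for this example rather than official API model identifiers.

```python
# List prices quoted in this article (USD per million tokens).
# The keys are illustrative labels, not official API model identifiers.
PRICES = {
    "deepseek-v3.2": {"input": 0.28, "output": 0.42},
    "claude-opus-4.6": {"input": 5.00, "output": 25.00},
}


def workload_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """API cost in USD for a given token volume."""
    price = PRICES[model]
    total = input_tokens * price["input"] + output_tokens * price["output"]
    return total / 1_000_000


def price_ratio(kind: str) -> float:
    """How many times more Claude Opus 4.6 costs than DeepSeek V3.2."""
    return PRICES["claude-opus-4.6"][kind] / PRICES["deepseek-v3.2"][kind]


# Assumed monthly workload: 100M input tokens and 20M output tokens.
for model in PRICES:
    print(f"{model}: ${workload_cost(model, 100_000_000, 20_000_000):,.2f}")

print(f"input ratio:  {price_ratio('input'):.1f}x")
print(f"output ratio: {price_ratio('output'):.1f}x")
```

Because the input and output ratios differ so much, the effective saving for a real application depends on its mix of input and output tokens and falls somewhere between the two figures.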

Which has a larger context window?

Claude Opus 4.6 offers a 1M token context window (in beta), while DeepSeek V3.2 supports 128K tokens. Claude’s context is roughly 8x larger. However, 128K tokens covers hundreds of pages of text and is sufficient for most practical applications.

Which is better for coding?

Claude Opus 4.6 leads on agentic coding benchmarks (65.4% on Terminal-Bench 2.0) and offers Claude Code with agent teams. DeepSeek V3.2 achieved gold on the 2025 IOI and offers transparent reasoning for debugging. Choose Claude for sophisticated agent-driven coding workflows; choose DeepSeek for cost-efficient coding assistance with full reasoning visibility.

What is Claude Code?

Claude Code is Anthropic’s agentic command-line coding tool, released in February 2025 and widely considered the leading AI coding assistant as of early 2026. With Opus 4.6, it supports agent teams where multiple Claude instances work in parallel. It is available as a CLI tool, web version, VS Code extension, and JetBrains extension.