DeepSeek is an open-weight, developer-oriented AI platform known for its transparency and flexibility. In this comparison, we evaluate DeepSeek relative to Grok, a frontier model from xAI (now a SpaceX subsidiary), focusing on their architectures, deployment models, and features.

All observations are based on documented capabilities, avoiding speculation or marketing claims. The goal is to help developers understand how Grok differs from DeepSeek’s design without favoring either, using DeepSeek as the primary reference point.

(Note: chat-deep.ai is an independent community resource, not an official DeepSeek site.)

Documentation Notice:

This analysis is based on publicly documented capabilities as of March 2026. Model specifications, pricing, context limits, and tool availability may change over time. Developers should consult official documentation from DeepSeek and xAI before making production decisions.

What DeepSeek Is Designed For (Current State)

DeepSeek is built to be an open and adaptable large language model platform for developers. It provides open-weight models, meaning the actual model weights are openly released under permissive licenses.

This allows anyone to download and run DeepSeek models locally or on their own servers, enabling complete deployment autonomy. At the same time, DeepSeek offers an official cloud API, giving developers the choice between self-hosting or using a managed service.

This dual approach emphasizes deployment flexibility. You can integrate DeepSeek via API for convenience or deploy it on-premises for privacy and control. Few frontier-class models offer this choice between managed and self-hosted deployment.

DeepSeek V3.2: The Current Flagship

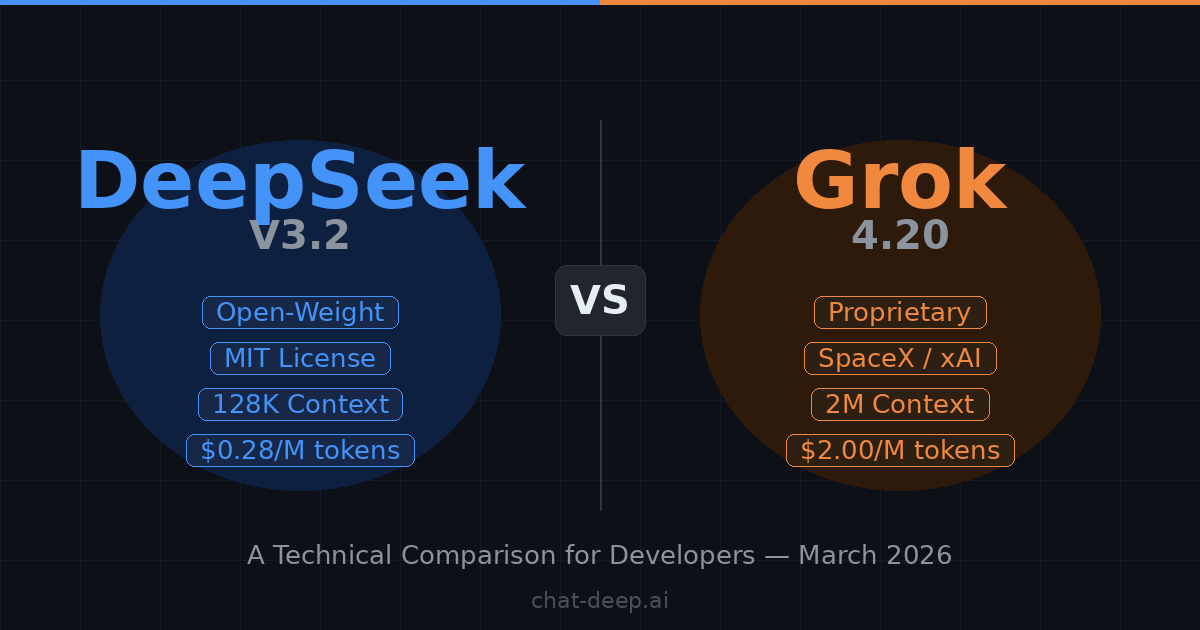

The current flagship model is DeepSeek V3.2, released in December 2025. It represents a major leap over its predecessors in both architecture and capability. Here are the key specifications:

- 685 billion total parameters with only 37 billion active per token (Mixture-of-Experts architecture)

- 128K token context window for lengthy conversations, code files, and documents

- DeepSeek Sparse Attention (DSA) — a fine-grained sparse attention mechanism that reduces training and inference cost while preserving quality in long-context scenarios

- Gold-medal results on both the 2025 International Mathematical Olympiad (IMO) and the International Olympiad in Informatics (IOI)

- MIT License permitting unrestricted commercial use, modification, and redistribution

The MoE architecture is what keeps V3.2 practical despite its large parameter count. Rather than activating all 685B parameters on every token, MoE routes each token to a small subset of specialized “expert” sub-networks, so only about 37B parameters (roughly 5% of the total) do work per token. This keeps inference cost closer to that of a much smaller dense model, although the full set of weights must still be held in memory.

The DeepSeek Sparse Attention (DSA) mechanism is the other key innovation. Traditional transformer attention scales quadratically with sequence length, making long contexts expensive. DSA reduces this by selectively attending to relevant portions of the input, cutting both training and inference costs while preserving output quality. This matters most for the 128K context window, where full attention is far more expensive.
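To make the routing idea concrete, here is a minimal top-k gating sketch in plain Python. It is a toy under stated assumptions, not DeepSeek's actual router: the eight scalar "experts", the gate scores, and the function names are all hypothetical, and real MoE layers operate on tensors with learned gates.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def route_top_k(gate_scores, k):
    """Return [(expert_index, weight)] for the k highest-scoring experts.

    Weights are renormalized so the selected experts sum to 1.
    """
    probs = softmax(gate_scores)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)
    return [(i, probs[i] / norm) for i in top]

def moe_forward(x, experts, gate_scores, k=2):
    """Weighted sum of the outputs of only the k selected experts."""
    return sum(w * experts[i](x) for i, w in route_top_k(gate_scores, k))

# Toy setup (hypothetical): 8 scalar experts, only 2 of them run per input.
experts = [lambda x, a=a: a * x for a in range(1, 9)]
gate_scores = [0.1, 2.0, -1.0, 0.3, 1.5, 0.0, -0.5, 0.2]

selected = route_top_k(gate_scores, k=2)
output = moe_forward(3.0, experts, gate_scores, k=2)

# Share of parameters active per token, using the figures quoted in this article.
active_fraction = 37 / 685
```

The point of the sketch is that the six unselected experts are never called, which is where the compute saving in a sparse model comes from.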

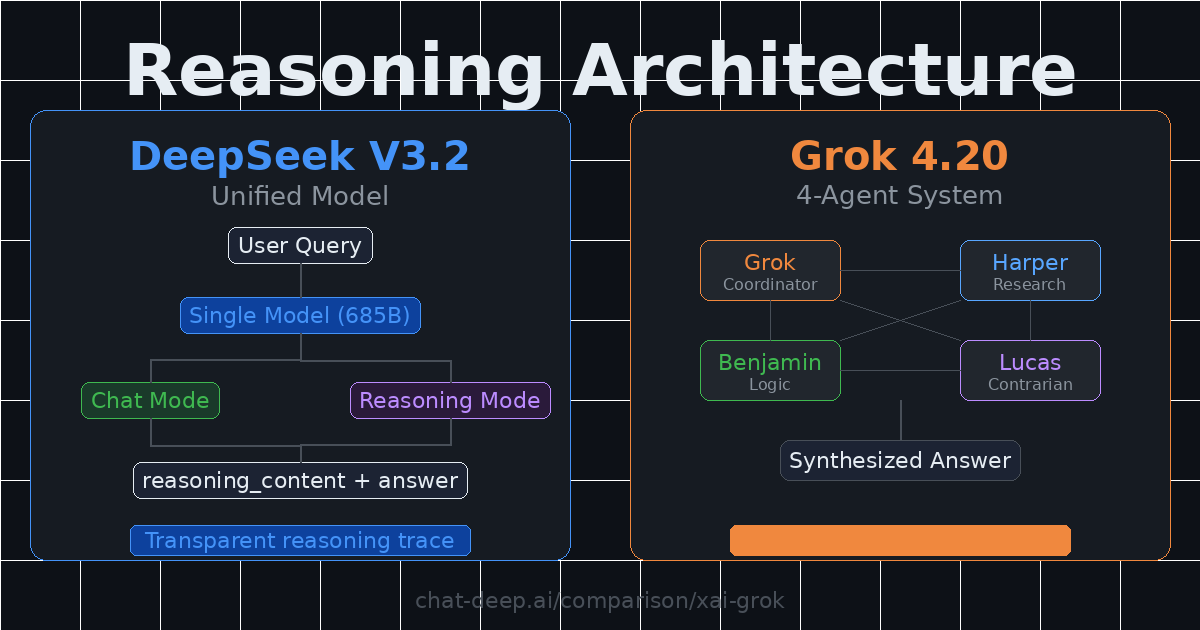

Unified Chat and Reasoning

One of V3.2’s most significant advances is the unification of chat and reasoning into a single model. Previous releases required developers to choose between separate model identifiers: deepseek-chat for direct answers and deepseek-reasoner for chain-of-thought reasoning.

V3.2 merges both capabilities. Developers can toggle reasoning on or off via a simple parameter. When enabled, the model generates step-by-step thoughts exposed through a dedicated reasoning_content field in the API response, alongside the final answer in the content field.

This transparency is invaluable for debugging, auditing, and building trust in high-stakes applications. For example, when solving a complex coding problem, the reasoning trace shows the model’s approach, intermediate steps, and self-corrections — enabling developers to verify correctness before trusting the output.
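As a rough illustration of how an application might separate the trace from the answer, the sketch below parses a response body offline. The reasoning_content and content field names come from the description above; the surrounding choices/message layout assumes an OpenAI-style chat completion, and the sample payload is invented for the example.

```python
import json

# Hypothetical response body, shaped like an OpenAI-style chat completion.
sample_response = json.loads("""
{
  "choices": [
    {
      "message": {
        "role": "assistant",
        "reasoning_content": "17 is odd. Trial division by 2, 3 and 4 fails, and 5 squared exceeds 17.",
        "content": "Yes, 17 is prime."
      }
    }
  ]
}
""")

def split_reasoning(response):
    """Return (reasoning, answer); reasoning is None when no trace is present."""
    message = response["choices"][0]["message"]
    return message.get("reasoning_content"), message["content"]

reasoning, answer = split_reasoning(sample_response)

# A response without a trace still parses; the first element is simply None.
plain = {"choices": [{"message": {"role": "assistant", "content": "Hi"}}]}
no_trace, plain_answer = split_reasoning(plain)
```

Keeping the trace and the answer in separate variables makes it straightforward to log the reasoning for audit while showing only the answer to end users.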

Pricing and Function Calling

DeepSeek’s API pricing is among the most competitive in the industry: $0.28 per million input tokens and $0.42 per million output tokens. Cached input tokens cost just $0.028/M — a 90% discount for workloads with shared prefixes like system instructions or document templates.

This means a production application with well-structured prompts can see effective input costs below $0.05 per million tokens, provided more than about 91% of its input tokens are served from cache. For budget-conscious projects or applications with massive token throughput, this pricing is hard to match among frontier models.
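The effective input price depends on what share of input tokens hit the cache. The short calculation below uses the two list prices quoted above; the function names are hypothetical, and real bills also depend on how the provider counts cache hits.

```python
INPUT_PRICE = 0.28    # USD per million uncached input tokens (from the text)
CACHED_PRICE = 0.028  # USD per million cached input tokens (from the text)

def blended_input_price(cache_hit_rate):
    """Average input price per million tokens for a given cache hit rate."""
    if not 0.0 <= cache_hit_rate <= 1.0:
        raise ValueError("cache_hit_rate must be between 0 and 1")
    return cache_hit_rate * CACHED_PRICE + (1.0 - cache_hit_rate) * INPUT_PRICE

def hit_rate_for_target(target_price):
    """Cache hit rate needed to reach a target blended input price."""
    return (INPUT_PRICE - target_price) / (INPUT_PRICE - CACHED_PRICE)

# Hit rate needed for a blended price of $0.05 per million tokens (about 0.913).
threshold = hit_rate_for_target(0.05)

# A workload where half of the input tokens are cached.
half_cached = blended_input_price(0.5)
```

In other words, the sub-$0.05 figure applies to prompts dominated by a shared prefix; a workload with a 50% hit rate pays about $0.154 per million input tokens.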

The API also supports function calling with JSON schemas, similar to OpenAI’s interface. Developers define custom tools, and the model can output structured JSON to invoke them. Both chat and reasoning modes support this, providing the building blocks for agent-like behavior while leaving tool implementations up to the developer.
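A tool definition in this style might look like the following sketch. It assumes the OpenAI-style tools layout that the text says DeepSeek mirrors; the lookupWeather tool and its parameters are hypothetical, and the request is only built, not sent.

```python
import json

# Hypothetical tool described with a JSON schema (OpenAI-style layout assumed).
weather_tool = {
    "type": "function",
    "function": {
        "name": "lookupWeather",
        "description": "Return the current weather for a city.",
        "parameters": {
            "type": "object",
            "properties": {
                "city": {"type": "string", "description": "City name, e.g. London"},
            },
            "required": ["city"],
        },
    },
}

# Request body that exposes the tool to the model.
request_body = {
    "model": "deepseek-chat",
    "messages": [{"role": "user", "content": "What is the weather in London?"}],
    "tools": [weather_tool],
}

payload = json.dumps(request_body, indent=2)
```

The schema only declares what the tool accepts. Running it, and deciding whether to run it at all, stays in the developer's code.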

What Grok Is Designed For (Current State)

Grok, developed by xAI, is a proprietary large language model positioned as a cutting-edge reasoning AI system and chatbot. Unlike DeepSeek, Grok is not open-weight. It is available only through a managed API and consumer-facing products, with no publicly released model weights.

Grok is designed to be a cloud-based AI assistant with an emphasis on autonomous tool use, real-time information access, and multi-agent reasoning. It is essentially xAI’s answer to models like OpenAI’s GPT series, focusing on high-end capabilities delivered as a service.

SpaceX Acquisition

In February 2026, SpaceX announced its acquisition of xAI, merging the AI company into Elon Musk’s aerospace enterprise. This gives xAI access to SpaceX’s substantial infrastructure resources and signals a long-term commitment to Grok’s development.

The acquisition also means Grok’s future is now tied to a larger corporate entity’s strategic decisions — a consideration for developers evaluating long-term platform dependencies.

Grok 4.20: The Current Flagship

The current flagship model is Grok 4.20, launched as a public beta on February 17, 2026. Elon Musk announced it on X, specifying that users must manually select “Grok 4.2” from the model menu to activate it. It introduces two major architectural innovations.

4-Agent Collaboration System: Four specialized AI agents work in parallel. Grok acts as the coordinator, Harper handles research, Benjamin manages logic and mathematics, and Lucas provides contrarian analysis. These agents think independently, cross-verify outputs, and synthesize conclusions into a single high-quality response. This delivers a significant boost on complex reasoning tasks compared to single-model approaches.

Rapid Learning Architecture: Grok 4.20 incorporates user feedback and improves capabilities on a weekly cadence, with release notes accompanying every update. Unlike previous Grok versions, which were static after release, 4.20 updates through continuous feedback integration. This makes it the first model in the Grok series to iterate on a weekly cycle after launch.

Built-in Agent Tools

Grok has native support for web browsing, searching X (Twitter) posts, running Python code, and other tools via xAI’s Agent Tools API.

The model autonomously decides when to invoke these tools when answering a query. Developers do not need to implement them; they simply enable them via API options and Grok handles the rest.

Grok 4.20 also introduced medical document analysis via photo upload. Users can photograph physical medical documents and receive AI-generated interpretations, though xAI has not published formal clinical validation for this feature.

On the API side, the Grok 4 Fast models now ship as two variants: grok-4-fast-reasoning and grok-4-fast-non-reasoning, each with a 2M token context window. This allows developers to tune the amount of test-time compute applied to their use cases. Previously, reasoning was always on with no way to disable it, which consumed extra tokens and added latency for simple queries.

On the consumer side, Grok is accessible for free on grok.com with usage limits. SuperGrok costs $30/month for higher limits, and the new SuperGrok Heavy tier at $300/month provides access to the most powerful Grok 4 Heavy variant with much higher rate limits for enterprise and research workloads.

Context, Multimodal, and Reach

The Grok 4 Fast models offer a 2-million-token context window, one of the largest documented context windows in production. This enables workflows like reviewing very large document collections within a single session or supporting multi-step agent planning that spans thousands of intermediate tokens.

Grok also supports multimodal interactions. It accepts image inputs alongside text for visual analysis, offers voice capabilities integrated into Tesla vehicles and mobile apps, and provides image and video generation APIs.

xAI’s reach is substantial: approximately 600 million monthly active users across the X and Grok apps. The company raised $20 billion in its Series E funding round and continues expanding its Colossus supercomputer infrastructure toward over one million GPU equivalents.

Looking ahead, Grok 5 is reportedly expected in Q2 2026 with 6 trillion parameters, double the rumored 3 trillion in Grok 4, along with native video understanding capabilities. These parameter counts are rumored rather than officially documented. Musk has claimed a “10% and rising” probability of Grok 5 achieving artificial general intelligence.

Technical Differences (DeepSeek vs Grok)

Below, we compare DeepSeek and Grok across several key dimensions. In each aspect, DeepSeek’s approach is described first, followed by how Grok differs.

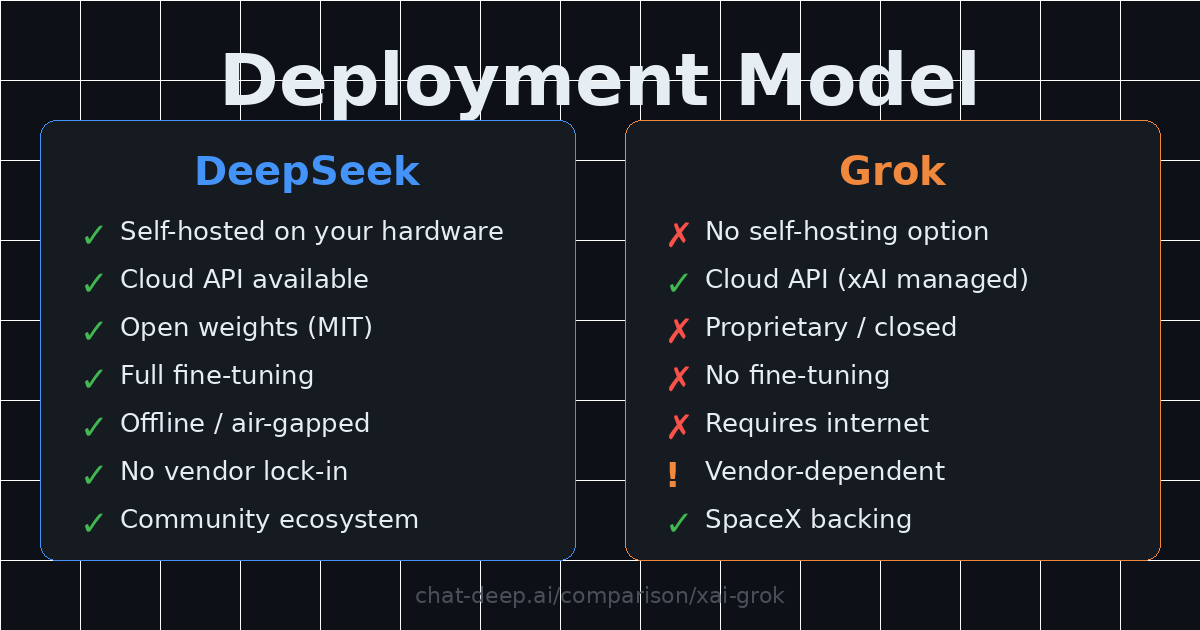

Deployment Model

DeepSeek: Offers maximum deployment flexibility. You can run DeepSeek models through the official API service or by self-hosting the open-weight models on your own hardware or cloud.

Organizations can deploy DeepSeek in private environments for complete data control. The MIT License ensures you can always take the model in-house. Fine-tuning is fully supported, and community-created distilled versions (down to a few billion parameters) already demonstrate the ecosystem’s vitality.

Self-hosting tools and community guides make deployment increasingly accessible. You can spin up DeepSeek with an OpenAI-compatible API endpoint using frameworks like vLLM, so existing applications can call DeepSeek as if it were an OpenAI model with minimal code changes.
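The sketch below shows why the switch is usually small: the same request builder can target a self-hosted server or a hosted API by changing only the base URL and model name. The localhost address assumes a vLLM-style OpenAI-compatible server on its default port, the hosted base URL and both model names are assumptions for illustration, and nothing is sent over the network.

```python
import json
import urllib.request

def build_chat_request(base_url, model, prompt, api_key="not-needed"):
    """Build (but do not send) an OpenAI-style chat completion request."""
    body = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )

# Self-hosted target (assumed local server address and hypothetical model name).
local_req = build_chat_request("http://localhost:8000/v1", "my-deepseek-model", "Hello")

# Hosted target (assumed base URL; replace the key placeholder with a real key).
hosted_req = build_chat_request(
    "https://api.deepseek.com/v1", "deepseek-chat", "Hello", api_key="YOUR_KEY"
)

# To actually send a request, pass it to urllib.request.urlopen(...)
# once a server is running at the chosen address.
```

Because only two strings differ between the targets, they are natural candidates for environment variables or a config file.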

Grok: Provided as a managed API service by xAI, with no public open-weight download. Developers access Grok through xAI’s cloud endpoints, and some third-party routing platforms like OpenRouter also offer access.

Because inference runs on xAI’s infrastructure, Grok is not designed for local or on-prem deployment. This introduces vendor dependency and may require additional review for sensitive or regulated data. Customization is primarily done via prompting and API configuration, with no generally available public fine-tuning workflow.

API and Tool Execution Model

DeepSeek: Uses a standard chat completion API with developer-defined function calling. Developers specify a list of tools with JSON schemas, and the model may output a structured JSON call for the developer’s application to execute.

This approach is modular and transparent. The model explicitly outputs its intended function call (e.g. {"function": "lookupWeather", "arguments": {"city": "London"}}), making it clear what it wants to do. You decide which tools to expose, and you handle execution and any external effects.

DeepSeek does not spontaneously browse the web or run code — it acts only through the function-calling mechanism you set up. This is ideal for custom tool integration and security-sensitive environments where you need to sandbox tool execution. However, it requires additional engineering on your side to implement functions and manage the call loop.
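A developer-mediated call step can be sketched as follows. It uses the simplified call shape from the example above rather than the exact wire format of any API, and the lookup_weather stand-in and the allow-list are hypothetical.

```python
import json

def lookup_weather(city):
    """Stand-in tool; a real implementation would query a weather service."""
    return {"city": city, "forecast": "cloudy", "temp_c": 14}

# Allow-list: the model can only trigger functions registered here.
TOOLS = {"lookupWeather": lookup_weather}

def execute_tool_call(raw_call):
    """Parse a model-emitted call and run it only if the tool is allowed."""
    call = json.loads(raw_call)
    name = call["function"]
    if name not in TOOLS:
        return {"error": f"unknown tool: {name}"}
    try:
        return TOOLS[name](**call["arguments"])
    except TypeError as exc:
        # Raised when the arguments do not match the function signature.
        return {"error": f"bad arguments: {exc}"}

model_output = '{"function": "lookupWeather", "arguments": {"city": "London"}}'
result = execute_tool_call(model_output)

# A call to anything outside the allow-list is refused rather than executed.
rejected = execute_tool_call('{"function": "deleteFiles", "arguments": {}}')
```

In a full loop, the result would be appended to the conversation as a tool message and the model called again until it returns a final answer.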

It is worth noting that DeepSeek’s function calling works with an OpenAI-compatible API format. This means if you have existing tooling built for OpenAI’s function calling, migrating to DeepSeek is often a matter of changing the API endpoint and model name, with minimal code changes required.

Grok: Implements an autonomous tool execution model built into the service. You enable or disable pre-built tools (web_search, x_search, code_execution, etc.) in your API call, and Grok’s agent decides when to invoke them internally on xAI’s servers.

With the 4-agent system in Grok 4.20, tool usage becomes more sophisticated. Multiple agents can invoke different tools in parallel, cross-verify findings, and synthesize results. Grok also supports structured outputs and Collections search for querying uploaded knowledge bases.

Unlike DeepSeek’s function calling where the model asks the caller to run a function, Grok just does it and gives you the outcome. This is convenient but also a closed loop: you entrust xAI’s system to execute code or retrieve information on your behalf, with limited visibility into the process.

In summary, DeepSeek’s API model is tool-aware but developer-mediated, offering flexibility and oversight. Grok’s API model is agent-like and automated, reducing developer effort for certain tasks. The right choice depends on whether you need granular control over tool execution (DeepSeek) or prefer a fully managed agent that handles everything autonomously (Grok).

Reasoning Transparency

DeepSeek: Prioritizes transparency in the reasoning process. With V3.2’s unified model, developers toggle reasoning via a simple parameter. When enabled, a dedicated reasoning_content field in the response JSON contains the model’s step-by-step thoughts.

These might include logic for solving a math problem, steps considered in a coding task, or evaluations when weighing multiple options. This feature stems from DeepSeek’s training focus on explicit reasoning, originally pioneered in the R1 model.

If you prefer not to see reasoning, you can disable it and receive only the final answer in the content field. DeepSeek effectively gives developers a window into the “black box” when desired, which is valuable for research, compliance, and debugging.

Grok: Has evolved its approach to reasoning transparency. The original Grok 4 treated reasoning as always-on and hidden, with no user-configurable toggle. The newer Grok 4 Fast models now ship as two variants: grok-4-fast-reasoning and grok-4-fast-non-reasoning, allowing developers to enable or disable reasoning via API.

When reasoning is enabled, Grok generates internal thinking tokens. On OpenRouter, these are tracked separately from completion tokens. However, the full chain-of-thought trace is not as directly accessible or structured as DeepSeek’s reasoning_content field.

On the consumer side, Grok 4.20 on grok.com uses automatic routing: simple queries get instant responses while complex ones trigger extended reasoning. The 4-agent system handles this behind the scenes, without exposing the routing decision to the user.

Context Window

DeepSeek: Supports a 128K token context window in V3.2. This is roughly equivalent to 80,000 words or hundreds of pages of text. DeepSeek achieved this through specialized training and the DSA sparse attention mechanism.

In practical terms, 128K allows ingestion of long documents, entire codebases, or very lengthy conversations without truncation. The upcoming V4 is expected to expand this to 1 million tokens via the Engram conditional memory architecture.

Grok: Offers a 2-million-token context window on the Grok 4 Fast models, currently one of the largest documented context windows in production.

This enables workflows like reviewing massive document collections within a single session, maintaining extensive conversational state across multi-hour interactions, or supporting multi-step agent planning that accumulates thousands of intermediate tokens.

However, practical considerations apply to both models: larger contexts increase latency, memory usage, and cost. Effective long-context usage often requires structured prompting or segmentation strategies to maintain answer quality. DeepSeek’s self-hosted deployment allows teams to pair the model with custom retrieval or optimization strategies, while Grok handles everything server-side.
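One simple segmentation strategy is to split a long document into overlapping chunks and process them separately. The sketch below counts words as a stand-in for tokens, which is an assumption; a production version would count tokens with the model's own tokenizer. The function name and parameters are hypothetical.

```python
def chunk_words(text, max_words, overlap=0):
    """Split text into chunks of at most max_words words.

    Consecutive chunks share `overlap` words so that context is not
    lost at chunk boundaries.
    """
    if max_words <= 0:
        raise ValueError("max_words must be positive")
    if not 0 <= overlap < max_words:
        raise ValueError("overlap must satisfy 0 <= overlap < max_words")

    words = text.split()
    step = max_words - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + max_words]))
        if start + max_words >= len(words):
            break  # the last chunk already reaches the end of the text
    return chunks

# Tiny demonstration: ten words, chunks of four, one word of overlap.
document = " ".join(f"w{i}" for i in range(10))
chunks = chunk_words(document, max_words=4, overlap=1)
```

The overlap trades a little extra token cost for continuity. Whether it helps depends on how self-contained each section of the source material is.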

Governance and Customization

DeepSeek: Being open-weight, DeepSeek gives users a high degree of governance over model behavior. You can fine-tune on your own datasets, create domain-specific variants, or implement custom content moderation. Community-created distilled versions and specialized fine-tunes already exist across the Hugging Face ecosystem.

DeepSeek also publishes documentation on its training approach, aiding transparency and trust. Customization extends to embedding DeepSeek in offline applications, edge devices, or highly customized pipelines. Because you run the model, you control content moderation, update schedules, and version management.

Grok: As a closed service, Grok’s behavior is governed by xAI. Users cannot fine-tune or alter the model’s training. Customization is limited to prompt engineering, tool selection, and using the Files API or Collections retrieval system for custom knowledge.

Governance is centralized: xAI sets content moderation rules and has historically adjusted guardrails in response to incidents. Developers have limited ability to override refusals, maintain older model versions, or adjust the model’s fundamental behavior. For organizations needing strict, customizable control, this is a disadvantage.

Pricing Comparison

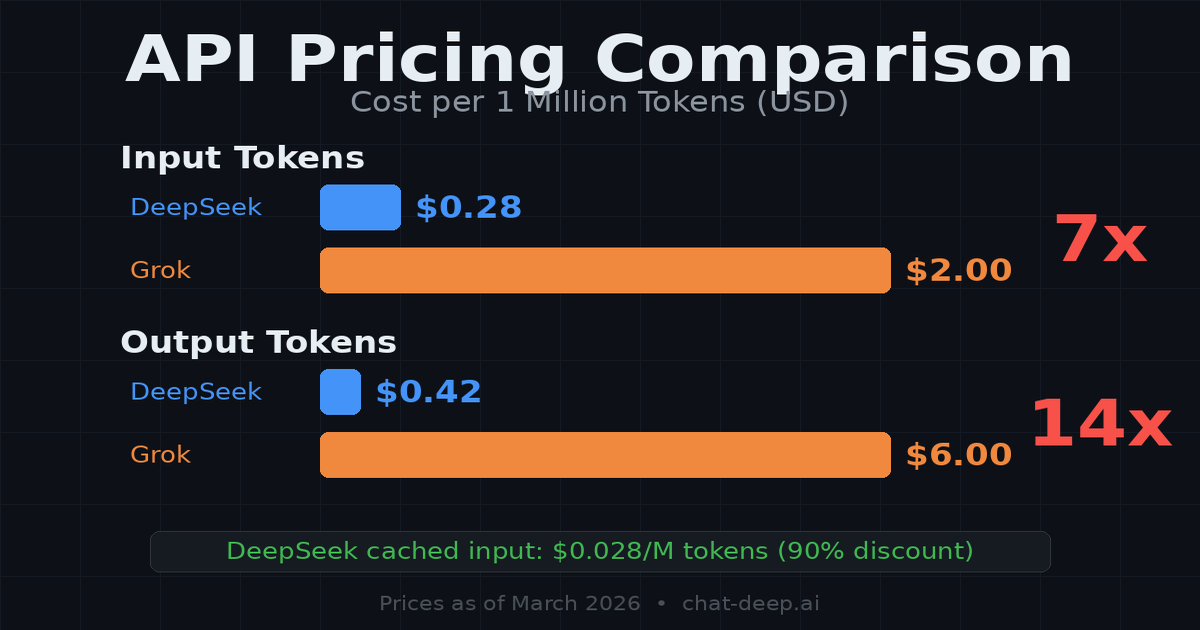

The cost difference between DeepSeek and Grok is substantial and can be a deciding factor for many projects:

| Cost Factor | DeepSeek V3.2 | Grok 4.20 |
|---|---|---|
| Input (per M tokens) | $0.28 | $2.00 |
| Output (per M tokens) | $0.42 | $6.00 |
| Cached Input (per M tokens) | $0.028 (90% discount) | Not documented |
| Web Search | N/A (developer-implemented) | $5 per 1K calls |
| Self-Hosting | Yes (fixed infrastructure cost) | Not available |
| Free Tier | 5M tokens on signup | Free on grok.com (limited) |
| Consumer Plans | Free web chat | SuperGrok $30/mo, Heavy $300/mo |

DeepSeek is roughly 7x cheaper on input and 14x cheaper on output compared to Grok. For high-volume applications, this difference adds up quickly: at 2 billion input and 500 million output tokens per month, list prices come to about $770 for DeepSeek versus $7,000 for Grok. You can estimate your specific costs using the DeepSeek API Cost Calculator.
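The ratios can be checked directly from the table. The snippet below also prices one hypothetical workload (2,000 million input and 500 million output tokens per month); the workload size is an assumption chosen for illustration, and cache discounts and tool charges are ignored.

```python
# List prices in USD per million tokens, taken from the table above.
PRICES = {
    "deepseek": {"input": 0.28, "output": 0.42},
    "grok": {"input": 2.00, "output": 6.00},
}

def monthly_cost(provider, input_millions, output_millions):
    """API cost in USD for a month, given token volumes in millions."""
    price = PRICES[provider]
    return input_millions * price["input"] + output_millions * price["output"]

# Price ratios: roughly 7.1x on input and 14.3x on output.
input_ratio = PRICES["grok"]["input"] / PRICES["deepseek"]["input"]
output_ratio = PRICES["grok"]["output"] / PRICES["deepseek"]["output"]

# Hypothetical workload: 2,000M input tokens and 500M output tokens per month.
deepseek_bill = monthly_cost("deepseek", 2000, 500)  # about 770 USD
grok_bill = monthly_cost("grok", 2000, 500)          # about 7,000 USD
savings = grok_bill - deepseek_bill
```

Because output tokens carry the larger ratio, output-heavy workloads see a bigger relative difference than input-heavy ones.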

Beyond API pricing, self-hosting DeepSeek eliminates per-token fees entirely. After the fixed infrastructure investment, marginal cost per request can be extremely low in high-volume setups — though you still pay for compute, operations, maintenance, and capacity planning.

Licensing and Control

DeepSeek: Released under the MIT License, among the most permissive open-source licenses available. Companies can deploy commercially, derive new models, or redistribute modifications without licensing fees. There is no vendor lock-in: even if DeepSeek as a company changes direction, the community can continue using and improving the already-released models.

Grok: Proprietary and subject to xAI’s terms of service. No transfer of model weights to the user. Prompts sent to Grok go to xAI’s servers under their data processing addendum. You cannot redistribute Grok or integrate it offline.

The SpaceX acquisition adds infrastructure stability but also ties Grok’s future to a larger corporate entity’s strategic decisions. For organizations with strict regulatory requirements or desire for solution longevity, DeepSeek’s open licensing represents a more controllable investment.

When DeepSeek Is More Suitable

Certain scenarios naturally favor DeepSeek’s open, flexible approach:

Data Privacy and Sovereignty: If you work with sensitive or regulated data that cannot leave your environment, DeepSeek is the clear choice. Deploy it locally so all prompts and completions stay on your own servers, meeting compliance for healthcare, finance, or government sectors.

Self-Hosting and Offline Use: For applications that need to run offline or in isolated environments (private networks, air-gapped systems, or edge devices running the smaller distilled models), DeepSeek’s open-weight models are among the few high-end options available. You have no dependency on an internet service once the model is running. Tools and guides already exist for running DeepSeek with optimized inference engines such as vLLM, one-click cloud deployment templates, and even consumer-grade GPU configurations for the distilled smaller models.

Customization and Fine-Tuning: When you need a model adapted to domain-specific knowledge, terminology, or style, DeepSeek allows full fine-tuning, custom system prompts, architecture modifications, or distilled versions optimized for specific hardware. This is essential for research or specialized industry use cases that generic models do not handle well out of the box.

Transparent Reasoning and Auditing: DeepSeek’s structured reasoning_content field lets developers and auditors inspect exactly why the model gave a certain answer. This is crucial for legal analysis, medical research, financial modeling, or any field where accountability and explainability matter.

Cost Efficiency at Scale: At $0.28/$0.42 per million tokens — 7-14x cheaper than Grok — DeepSeek dramatically reduces API costs. Self-hosting eliminates per-token fees entirely after the fixed infrastructure investment. Budget-conscious projects or applications processing millions of requests daily will find DeepSeek significantly more economical.

No Vendor Lock-in: DeepSeek ensures you won’t be stranded if a service changes pricing, policies, or shuts down. You have full control and could continue improving the model independently. You can also route development tools like Claude Code through DeepSeek, leveraging its Anthropic-compatible API layer while benefiting from DeepSeek’s pricing and open deployment flexibility.

In essence, DeepSeek is more suitable when control, transparency, and flexibility are top priorities — for example, an enterprise deploying an internal chatbot that must run on-premises, or a developer building a custom AI tool that needs tuning and full model access.

If DeepSeek wins this trade-off for privacy, cost, or customization, don’t default to a single checkpoint blindly. The DeepSeek Models Hub helps you compare V3.2 with R1, Coder, Math, VL, and experimental branches so you can pick the model family that actually matches your workload.

When Grok May Be Suitable

There are scenarios where Grok’s managed service aligns better with a project’s needs:

Turnkey Tool Integration: If your priority is to quickly build an application leveraging web browsing, X search, code execution, or real-time data without implementing those yourself, Grok offers a ready-made solution. The 4-agent system in Grok 4.20 coordinates multiple specialized agents that cross-verify information, reducing hallucination risk for complex queries.

Extremely Long Context Tasks: With a 2-million-token context window, Grok currently offers the largest production context in this comparison. This is decisive for reviewing massive document sets in a single session or supporting multi-step agent planning that spans thousands of intermediate tokens. DeepSeek’s V4 is expected to close this gap with 1M tokens, but Grok holds the advantage today.

Multimodal and Specialized Features: Grok provides image understanding, voice integration (including Tesla vehicles), image/video generation, and medical document analysis under one API umbrella. DeepSeek V3.2 is primarily text-focused, with multimodal capabilities expected in V4.

Minimal Infrastructure Management: Teams that do not want to manage servers, GPUs, or model updates will appreciate Grok’s managed approach. With the rapid learning architecture, the model improves weekly based on user feedback, meaning your application benefits from continuous enhancement with zero effort on your part.

Real-Time Data and Social Media: Grok’s native integration with X (Twitter) gives it a unique advantage for applications requiring real-time social media analysis, trend monitoring, or content referencing current public discourse. Replicating this with DeepSeek would require significant custom engineering, including building your own X API integration and search pipeline.

In short, Grok may be suitable if convenience, cutting-edge features as a service, and massive context outweigh the need for control — provided you are comfortable with the closed, cloud-based nature of the solution.

Developer Considerations

For a developer or team evaluating DeepSeek vs Grok, several practical trade-offs come into play.

Data Governance

With DeepSeek, you keep full governance of data. Queries and responses can remain in your domain, especially when self-hosted. This is crucial for confidentiality and regulatory compliance.

Grok requires sending data to xAI’s cloud. While they provide a data processing addendum and security documentation, this is a consideration for highly sensitive data. Evaluate whether your project can tolerate external data processing or requires on-prem control.

Integration Effort

DeepSeek may involve more initial work, especially if self-hosting: setting up inference servers, connecting tools via function calls, and managing infrastructure requires engineering resources.

Grok provides out-of-the-box functionality with built-in search, code execution, and the 4-agent system, reducing backend complexity. If rapid development with minimal overhead is a goal, Grok reduces time-to-market. DeepSeek gives you more freedom to customize every aspect of the integration.

Performance and Context Needs

Consider the typical context length your application truly requires. DeepSeek’s 128K context is sufficient for most applications, covering hundreds of pages of text. Grok’s 2M-token context is relevant for niche cases like legal e-discovery across millions of tokens or extremely long multi-step agent sessions.
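A quick way to sanity-check context needs is to estimate token counts from word counts. The sketch below assumes about 0.75 English words per token, a common rule of thumb that varies by tokenizer and language, and treats 128K as 128,000 tokens. The output reserve and function names are hypothetical.

```python
import math

# Context windows in tokens, as quoted in this article (128K taken as 128,000).
CONTEXT_WINDOWS = {"DeepSeek V3.2": 128_000, "Grok 4 Fast": 2_000_000}

def estimate_tokens(word_count, words_per_token=0.75):
    """Rough token estimate from a word count (rule-of-thumb ratio)."""
    return math.ceil(word_count / words_per_token)

def models_that_fit(word_count, reserve_for_output=4_000, words_per_token=0.75):
    """Names of models whose window holds the input plus an output reserve."""
    needed = estimate_tokens(word_count, words_per_token) + reserve_for_output
    return [name for name, window in CONTEXT_WINDOWS.items() if needed <= window]

# A 60,000-word contract needs roughly 84,000 tokens including the reserve.
contract = models_that_fit(60_000)

# A 900,000-word archive needs roughly 1.2 million tokens.
archive = models_that_fit(900_000)
```

Estimates like this are only a first filter. Measuring real prompts with the provider's tokenizer is the reliable check before committing to a design.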

Both are top-tier in reasoning and coding, but their architectures differ. DeepSeek excels at structured, step-by-step problem solving with transparent reasoning. Grok’s multi-agent approach may handle broad research queries more effectively. Prototyping with both on your specific workload is worthwhile, since benchmarks do not always predict real-world fit.

Cost Projection

At 7-14x lower pricing, DeepSeek’s cost advantage compounds rapidly at scale. Self-hosting eliminates per-token fees entirely after the fixed infrastructure investment.

Grok’s pricing includes the value of integrated tools and managed infrastructure, which may justify the premium for teams that would otherwise spend engineering resources building equivalent capabilities. For budget planning, project your expected monthly token usage and compare: DeepSeek API, DeepSeek self-hosted (infrastructure cost), and Grok API (including tool usage charges).
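The three-way comparison suggested above can be laid out as a small projection. Token prices and the $5 per 1K web search charge come from the pricing table; the workload, the number of searches, and the $12,000 monthly self-hosting figure are hypothetical placeholders to be replaced with your own estimates.

```python
def api_cost(input_millions, output_millions, input_price, output_price):
    """Token cost in USD for volumes given in millions of tokens."""
    return input_millions * input_price + output_millions * output_price

def project_monthly(input_millions, output_millions, web_searches, self_host_monthly):
    """Monthly cost in USD for each of the three deployment options."""
    return {
        "deepseek_api": api_cost(input_millions, output_millions, 0.28, 0.42),
        # Self-hosting has no per-token fee; the cost is the infrastructure itself.
        "deepseek_self_hosted": self_host_monthly,
        # Grok token cost plus web search at 5 USD per 1,000 calls.
        "grok_api": api_cost(input_millions, output_millions, 2.00, 6.00)
        + web_searches / 1000 * 5.00,
    }

# Hypothetical month: 2,000M input tokens, 500M output tokens, 100,000 searches,
# and an assumed 12,000 USD of GPUs and operations for self-hosting.
projection = project_monthly(2000, 500, 100_000, 12_000)
cheapest = min(projection, key=projection.get)
```

With these placeholder numbers the hosted DeepSeek API is cheapest, and self-hosting only pays off at much higher volumes or when data control is the deciding factor rather than price.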

Ecosystem and Support

DeepSeek benefits from a growing open-source community with Hugging Face integrations, community fine-tunes, and transparent model documentation. Troubleshooting is easier with open models where many developers contribute to the ecosystem.

Grok benefits from xAI’s substantial backing (SpaceX acquisition, $20B funding, 600M MAU across X), direct vendor support, and tight X platform integration. Your choice depends on whether you value community independence (DeepSeek) or corporate backing and platform integration (Grok).

It is also worth considering the broader trajectory. DeepSeek’s open-source approach means the model will persist regardless of corporate changes. Grok’s proprietary nature means you are dependent on xAI’s continued operation and strategic alignment. The SpaceX acquisition reduces the risk of xAI shutting down, but it also means Grok’s direction could shift based on SpaceX’s broader priorities rather than pure AI development goals.

Comparison Summary

The following table summarizes the key structural differences as of March 2026:

| Feature | DeepSeek (V3.2) | Grok (4.20 / 4 Fast) |
|---|---|---|
| Deployment | Self-hosted or cloud API | Cloud API only (managed by xAI) |
| Model Weights | Open-weight (MIT License) | Proprietary (closed) |
| Parameters | 685B total / 37B active (MoE) | Not publicly disclosed |
| Context Window | 128K tokens (V4: ~1M expected) | 2M tokens (Grok 4 Fast) |
| Reasoning Toggle | Yes, with exposed chain-of-thought | Yes (4 Fast), limited trace visibility |
| Tool Execution | Developer-mediated (function calling) | Autonomous (built-in agent tools) |
| Multi-Agent | No (single model) | Yes (4-agent system in 4.20) |
| Multimodal | Text-focused (V4: text+image+video) | Text, image, voice, video |
| Input Price (per M tokens) | $0.28 | $2.00 |
| Output Price (per M tokens) | $0.42 | $6.00 |
| Fine-Tuning | Full fine-tuning supported | Not available |
| Vendor Lock-in | None | High (proprietary, cloud-dependent) |

Conclusion

Both DeepSeek and Grok represent powerful advancements in AI, but they cater to fundamentally different priorities.

DeepSeek reinforces the open AI ecosystem. It gives developers the ability to deploy a state-of-the-art model on their own terms, inspect its workings, and build custom solutions with fine-grained control. Its 128K context, transparent reasoning, unified architecture, gold-medal benchmarks, and pricing at a fraction of competitors make it compelling for developers who value autonomy and cost efficiency.

Grok delivers a turn-key experience with one of the largest documented context windows in production, a novel 4-agent collaboration system, integrated tools from web search to code execution, and the backing of SpaceX’s infrastructure. It appeals to teams that prefer a unified, managed service and are willing to trade control for convenience and cutting-edge features.

The decision is not about which model is “better” universally. It is about which is better suited for your specific needs. Many teams may even use both in complementary roles: DeepSeek for cost-efficient, privacy-sensitive, or custom workloads, and Grok for tasks that benefit from its integrated tool ecosystem and massive context.

As the AI landscape evolves rapidly in 2026, both platforms continue advancing. DeepSeek’s upcoming V4 with 1M context and multimodal capabilities could narrow several of Grok’s current advantages, while Grok 5’s rumored 6-trillion-parameter architecture may push reasoning capabilities further. Developers who evaluate both models now and understand their structural differences will be best positioned to adapt as these platforms evolve.

For a deeper technical breakdown of DeepSeek’s architecture, reasoning configuration, and deployment options, explore our API documentation, chat interface, and model guides.

Frequently Asked Questions

Is DeepSeek free to use?

DeepSeek’s open-weight models can be downloaded and self-hosted at no licensing cost under the MIT License. The official API offers a free tier with 5 million tokens upon signup, after which pricing starts at $0.28 per million input tokens. The web chat interface is also free with usage limits.

Is Grok free to use?

Grok is available for free on grok.com and within the X app with usage limits. SuperGrok costs $30/month for higher limits and advanced features. SuperGrok Heavy at $300/month provides access to the most powerful Grok 4 Heavy variant. API access is priced at $2/$6 per million input/output tokens.

Can I self-host Grok?

No. Grok is a proprietary, closed-source model available only through xAI’s cloud API and consumer products. There are no publicly available model weights for download. DeepSeek is the self-hostable option in this comparison.

Which model has a larger context window?

As of March 2026, Grok 4 Fast offers a 2-million-token context window, while DeepSeek V3.2 supports 128K tokens. DeepSeek’s upcoming V4 is expected to support 1 million tokens. For most practical applications, DeepSeek’s 128K context is more than sufficient.

Which is better for coding tasks?

Both are strong for coding. DeepSeek V3.2 achieved gold-medal results on the 2025 IOI and supports transparent reasoning that helps developers trace and debug the model’s coding logic. Grok 4.20’s multi-agent system coordinates research and logic agents for complex engineering problems. The choice depends on whether you value reasoning transparency and cost efficiency (DeepSeek) or integrated tool execution and massive context (Grok).

What happened to xAI?

In February 2026, SpaceX acquired xAI, merging it into Elon Musk’s aerospace enterprise. Grok continues to operate as a product under this new structure, with xAI’s team and Colossus supercomputer infrastructure now part of SpaceX.