DeepSeek API Token Usage Explained

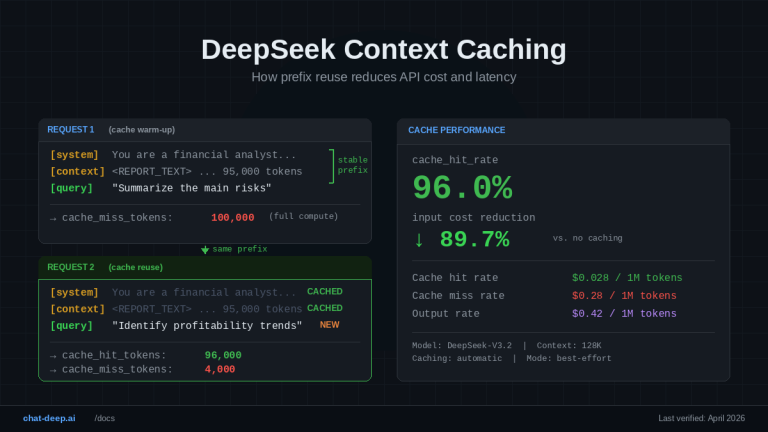

DeepSeek token usage refers to the actual input and output tokens recorded by the API for each request, and these values are the only source of truth for billing. Unlike rough character-based estimates, real cost is calculated directly from the…
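As a minimal sketch of reading those recorded values, the snippet below pulls the token counts out of a response body and turns them into a cost figure. It assumes the response carries a `usage` object with `prompt_tokens`, `completion_tokens`, and `total_tokens`, as in the OpenAI-compatible chat completion format. The helper names `read_usage` and `estimate_cost`, the sample response, and the per-million-token prices are all hypothetical placeholders, not real DeepSeek rates; substitute the prices from the current pricing page.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Usage:
    """Token counts as reported by the API for a single request."""
    prompt_tokens: int
    completion_tokens: int
    total_tokens: int


def read_usage(response: dict) -> Usage:
    """Extract the usage block from a parsed JSON response body.

    Assumes an OpenAI-compatible layout: response["usage"] holds the counts.
    """
    usage = response["usage"]
    return Usage(
        prompt_tokens=usage["prompt_tokens"],
        completion_tokens=usage["completion_tokens"],
        total_tokens=usage["total_tokens"],
    )


def estimate_cost(
    usage: Usage,
    input_price_per_million: float,
    output_price_per_million: float,
) -> float:
    """Compute cost from recorded tokens and per-million-token prices."""
    return (
        usage.prompt_tokens * input_price_per_million
        + usage.completion_tokens * output_price_per_million
    ) / 1_000_000


# Hypothetical response body; a real one comes back from the API call.
sample_response = {
    "usage": {
        "prompt_tokens": 1200,
        "completion_tokens": 300,
        "total_tokens": 1500,
    }
}

usage = read_usage(sample_response)

# Placeholder prices for illustration only, NOT actual DeepSeek pricing.
cost = estimate_cost(
    usage,
    input_price_per_million=1.0,
    output_price_per_million=2.0,
)
```

Working from the reported counts rather than from character length keeps the figure aligned with what is actually billed. Logging the `Usage` value per request also makes it straightforward to aggregate spend later.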