DeepSeek is no longer just a story about one Chinese chatbot that briefly shocked the market in early 2025. It is now a fast-moving model family and platform with a documented history that runs from DeepSeek-V2 in May 2024 to DeepSeek-V3.2 in December 2025, alongside a public API, mobile and web apps, open-weight releases, and a more complicated privacy and licensing picture than many older explainers suggest. Reuters identifies DeepSeek as a Hangzhou-based startup created in 2023 under the leadership of Liang Wenfeng, who also co-founded the quantitative hedge fund High-Flyer.

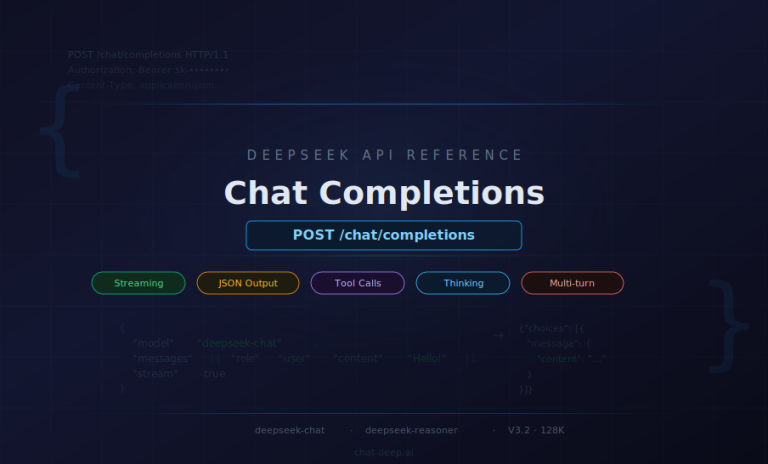

As of 5 April 2026, the public DeepSeek API documentation lists DeepSeek-V3.2, released on 1 December 2025, as the latest flagship general API model in the official release stream. Reuters reported on 3 April 2026 that a V4 launch was being prepared, but that report described an upcoming model rather than a released one, and it was not yet reflected in DeepSeek’s public API news or change log at the time of review.

That matters because many older articles about DeepSeek are not completely wrong, but they are frozen in time. They often stop at V3 and R1, or they treat the API aliases deepseek-chat and deepseek-reasoner as if they always refer to the same underlying models. Today, DeepSeek’s own documentation says those aliases map to DeepSeek-V3.2 in non-thinking and thinking modes, and that the API version differs from the App/Web version.

Quick take

- DeepSeek-V2 launched on 7 May 2024, not in “late 2024.” The old chat-deep.ai explainer misdates that milestone, while the V2 paper and model card place it in May 2024.

- V2.5 was the first real consolidation step. It merged the V2 chat line and the coder line into one model, and V2.5-1210 later refined math, coding, file upload, and webpage summarization.

- V3 and R1 were important, but they were not the end of the story. DeepSeek later shipped V3-0324, R1-0528, V3.1, V3.1-Terminus, V3.2-Exp, and V3.2.

- In the API today, deepseek-chat and deepseek-reasoner both point to DeepSeek-V3.2: deepseek-chat is the non-thinking mode, deepseek-reasoner is the thinking mode, and the documented context limit is 128K.

- DeepSeek does not use one license across the whole family. Earlier releases such as V2/V2.5/V3 use separate model-license terms, while R1, V3.1, and V3.2 list the repository and weights under MIT.

- Privacy is a first-order issue, not a footnote. DeepSeek’s current policy says personal data is collected, processed, and stored in the People’s Republic of China, and Reuters has documented regulatory scrutiny and restrictions in countries including South Korea, Australia, Taiwan, and Italy.

The model timeline, rebuilt from primary sources

DeepSeek-V2 and V2-0517: the efficiency turn

The first milestone that still matters today is DeepSeek-V2, introduced on 7 May 2024. The paper and official model card describe it as a Mixture-of-Experts (MoE) model with 236B total parameters, 21B activated per token, and 128K context. Just as important, V2 introduced Multi-head Latent Attention (MLA) and DeepSeekMoE, a combination designed to reduce KV-cache cost and improve inference efficiency. The V2 paper says it reduced KV cache by 93.3% and increased maximum generation throughput by 5.76x relative to the older 67B line.
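The V2 figures above can be turned into two rough ratios. These use only the numbers quoted above. The first shows what share of the network each token activates. The second shows how much KV cache remains after the reported 93.3% reduction against the 67B baseline. Both are back-of-the-envelope readings of published figures, not measurements of speed or cost.

$$
\frac{21\ \text{B active}}{236\ \text{B total}} \approx 0.089 \;(\approx 8.9\%\ \text{of parameters per token}), \qquad 1 - 0.933 = 0.067 \approx \tfrac{1}{15}\ \text{of the 67B model's KV cache}
$$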

The API alias deepseek-chat then moved to DeepSeek-V2-0517 on 17 May 2024. DeepSeek’s change log says this update substantially improved instruction following and JSON output, which matters because older explainers often discuss V2 only as a research milestone, not as the beginning of DeepSeek’s API-era usability improvements.

V2.5 and V2.5-1210: from split chat/code lines to one general model

DeepSeek formally launched DeepSeek-V2.5 on 5 September 2024. The official release note says V2.5 combined DeepSeek-V2-0628 and DeepSeek-Coder-V2-0724. It preserved the general conversational ability of the chat line and carried over the stronger coding performance of the coder line. It also remained backward compatible, so users could still reach it through either the deepseek-coder or the deepseek-chat model name. That made V2.5 more than a minor refresh: it was DeepSeek’s first clear all-in-one model for general use and programming.

On 10 December 2024, the API alias deepseek-chat moved again, this time to DeepSeek-V2.5-1210. DeepSeek’s change log and release note describe better math, better coding, and a more polished experience for file upload and webpage summarization. That detail is easy to miss, but it helps explain why DeepSeek’s app and web product later leaned so hard into document and search workflows.

V3 and R1: scale first, reasoning second

DeepSeek then upgraded deepseek-chat to DeepSeek-V3 on 26 December 2024. The official release note and model card describe V3 as a 671B-parameter MoE model with 37B activated per token, trained on 14.8T tokens, while keeping API usage unchanged. In other words, V3 was not a new product surface so much as a much larger base under the same surface.
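A quick ratio check against the V2 figures (21B active of 236B total) shows how V3 scaled. Total parameters grew about 2.8x, while activated parameters grew only about 1.8x. As a result, each token touches a smaller share of a much larger network. These are ratios of published parameter counts only. They say nothing on their own about measured latency or serving cost.

$$
\frac{37}{671} \approx 0.055 \;(\approx 5.5\%\ \text{active per token}), \qquad \frac{671}{236} \approx 2.8, \qquad \frac{37}{21} \approx 1.8
$$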

Less than a month later, on 20 January 2025, DeepSeek introduced DeepSeek-R1 via the deepseek-reasoner alias. The R1 release note presented it as a reasoning-first model; the Hugging Face model card says DeepSeek open-sourced R1-Zero, R1, and six distilled models, and licensed the repository and weights under MIT, while also explicitly allowing commercial use and distillation. This is the point where DeepSeek split clearly into a general chat branch and a reasoning branch.

V3-0324, R1-0528, V3.1, Terminus, V3.2-Exp, and V3.2: the agent turn

From March 2025 onward, DeepSeek’s releases increasingly focused on tool use, agents, and thinking modes rather than just raw model scale. On 24 March 2025, deepseek-chat moved to DeepSeek-V3-0324, with the change log highlighting better reasoning, stronger front-end code generation, improved Chinese writing, and more accurate function calling. The release note also said the weights were now under MIT, aligning V3-0324 more closely with R1’s licensing posture than with the original V3 release.

On 28 May 2025, deepseek-reasoner moved to DeepSeek-R1-0528. DeepSeek’s release note and change log say it improved benchmark performance, reduced hallucinations, and added stronger JSON output and function calling support. That is a practical turning point: reasoning was no longer only about math or chain-of-thought, but about structured outputs and tool-driven workflows.
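To make "function calling" concrete, here is a minimal sketch of the client side of one tool round trip. It assumes DeepSeek follows the OpenAI-compatible tools format: a JSON Schema tool definition, tool_calls on the assistant message with arguments as a JSON string, and a role="tool" reply carrying tool_call_id. The count_words tool, the REGISTRY dict and run_tool_calls are hypothetical names chosen for illustration, not part of any DeepSeek SDK.

```python
import json


# Hypothetical local tool, used only to illustrate the round trip.
def count_words(text: str) -> dict:
    return {"words": len(text.split())}


# Tool schema in the OpenAI-compatible "tools" format (JSON Schema parameters).
TOOLS = [
    {
        "type": "function",
        "function": {
            "name": "count_words",
            "description": "Count whitespace-separated words in a text.",
            "parameters": {
                "type": "object",
                "properties": {"text": {"type": "string"}},
                "required": ["text"],
            },
        },
    }
]

# Maps tool names the model may emit to local Python callables.
REGISTRY = {"count_words": count_words}


def run_tool_calls(assistant_message: dict) -> list:
    """Run every tool call in an assistant message; return role="tool" messages."""
    replies = []
    for call in assistant_message.get("tool_calls") or []:
        name = call["function"]["name"]
        fn = REGISTRY.get(name)
        if fn is None:
            # Report unknown tools back to the model instead of crashing.
            result = {"error": f"unknown tool: {name}"}
        else:
            # Arguments arrive as a JSON-encoded string, not a dict.
            result = fn(**json.loads(call["function"]["arguments"]))
        replies.append(
            {"role": "tool", "tool_call_id": call["id"], "content": json.dumps(result)}
        )
    return replies


# A hand-written assistant message shaped like an API tool-call reply.
example = {
    "role": "assistant",
    "content": "",
    "tool_calls": [
        {
            "id": "call_0",
            "type": "function",
            "function": {"name": "count_words", "arguments": '{"text": "one two three"}'},
        }
    ],
}
```

In a real loop, TOOLS would be sent with the request. The messages returned by run_tool_calls would then be appended to the history before the next call, so the model can use the results.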

The next large shift came on 21 August 2025 with DeepSeek-V3.1. DeepSeek described it as “our first step toward the agent era,” and the change log says it introduced a hybrid reasoning architecture in which a single model supports both thinking and non-thinking modes. That is the conceptual ancestor of the current API setup. On 22 September 2025, V3.1-Terminus followed with cleaner language consistency and stronger Code Agent and Search Agent behavior. On 29 September 2025, V3.2-Exp introduced DeepSeek Sparse Attention (DSA) for faster, cheaper long-context processing. Finally, on 1 December 2025, DeepSeek-V3.2 became the official successor to V3.2-Exp and, in DeepSeek’s own words, its first model to integrate thinking directly into tool use.

At the time of this review, the official public API docs did not list a newer flagship general API model than V3.2, even though Reuters reported on 3 April 2026 that a V4 launch could happen in the following weeks. That distinction matters: rumor or pre-release reporting is not the same as an official released model.

Accurate timeline

- 7 May 2024: DeepSeek-V2 paper and model card published.

- 17 May 2024: deepseek-chat upgraded to DeepSeek-V2-0517.

- 5 September 2024: DeepSeek-V2.5 launched; deepseek-chat and deepseek-coder became backward-compatible access points.

- 10 December 2024: DeepSeek-V2.5-1210 released.

- 26 December 2024: deepseek-chat upgraded to DeepSeek-V3.

- 20 January 2025: deepseek-reasoner introduced as DeepSeek-R1.

- 24 March 2025: deepseek-chat upgraded to DeepSeek-V3-0324.

- 28 May 2025: deepseek-reasoner upgraded to DeepSeek-R1-0528.

- 21 August 2025: both aliases moved to DeepSeek-V3.1.

- 22 September 2025: both aliases moved to DeepSeek-V3.1-Terminus.

- 29 September 2025: both aliases moved to DeepSeek-V3.2-Exp.

- 1 December 2025: both aliases moved to DeepSeek-V3.2.
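Because the aliases are moving targets, a log or benchmark that records only "deepseek-chat" or "deepseek-reasoner" is ambiguous without a date. The small lookup below uses the switch-over dates from the timeline above to recover which model an alias most likely referred to on a given day. It is a sketch under simplifying assumptions. Each change-log date is treated as effective for the whole day, and time zones and rollout lag are ignored. It covers only the API aliases, not the App/Web product, whose mapping differs. The names ALIAS_HISTORY and resolve_alias are hypothetical.

```python
from bisect import bisect_right
from datetime import date
from typing import Optional

# (switch-over date, model) pairs transcribed from the timeline above.
# Each entry applies from its date until the next entry's date.
ALIAS_HISTORY = {
    "deepseek-chat": [
        (date(2024, 5, 17), "DeepSeek-V2-0517"),
        (date(2024, 9, 5), "DeepSeek-V2.5"),
        (date(2024, 12, 10), "DeepSeek-V2.5-1210"),
        (date(2024, 12, 26), "DeepSeek-V3"),
        (date(2025, 3, 24), "DeepSeek-V3-0324"),
        (date(2025, 8, 21), "DeepSeek-V3.1"),
        (date(2025, 9, 22), "DeepSeek-V3.1-Terminus"),
        (date(2025, 9, 29), "DeepSeek-V3.2-Exp"),
        (date(2025, 12, 1), "DeepSeek-V3.2"),
    ],
    "deepseek-reasoner": [
        (date(2025, 1, 20), "DeepSeek-R1"),
        (date(2025, 5, 28), "DeepSeek-R1-0528"),
        (date(2025, 8, 21), "DeepSeek-V3.1"),
        (date(2025, 9, 22), "DeepSeek-V3.1-Terminus"),
        (date(2025, 9, 29), "DeepSeek-V3.2-Exp"),
        (date(2025, 12, 1), "DeepSeek-V3.2"),
    ],
}


def resolve_alias(alias: str, on: date) -> Optional[str]:
    """Return the model an API alias pointed to on a given day, per the table above.

    Returns None for dates before the first recorded switch-over, which this
    table does not cover. The switch-over day itself counts as the new model.
    """
    history = ALIAS_HISTORY[alias]
    switch_dates = [d for d, _ in history]
    # bisect_right finds the last switch-over on or before the query date.
    i = bisect_right(switch_dates, on) - 1
    return history[i][1] if i >= 0 else None
```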

A short comparison table

| Release | Date | What actually changed | License posture |
|---|---|---|---|
| V2 / V2-0517 | May 2024 | MoE + MLA, 236B/21B, 128K, better instruction following in API | Separate model-license terms for weights/use |
| V2.5 / V2.5-1210 | Sep–Dec 2024 | Merged chat + coder, improved writing/coding, better file/webpage handling | Separate model-license terms for weights/use |
| V3 | Dec 2024 | 671B/37B, 14.8T tokens, API unchanged | Separate model-license terms for weights/use |
| R1 / R1-0528 | Jan–May 2025 | Reasoning-first line, then better structured outputs and lower hallucination rates | MIT for repository and weights |
| V3.1 / Terminus | Aug–Sep 2025 | One model with thinking and non-thinking modes; stronger agent behavior | MIT for repository and weights |
| V3.2 / V3.2-Exp | Sep–Dec 2025 | DSA for long context; thinking integrated into tool use | MIT for repository and weights |

The dates, descriptions, and license posture in this table are drawn from DeepSeek’s official change log, release notes, and Hugging Face repositories.

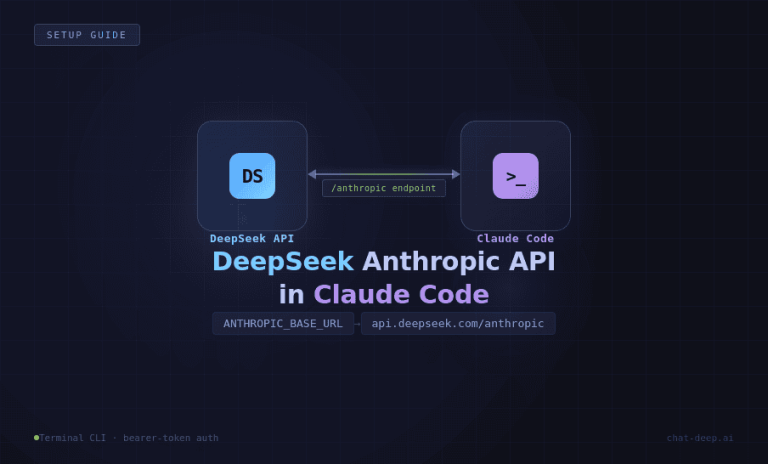

The current API situation

DeepSeek’s current API documentation is unusually explicit about version mapping. The OpenAI-compatible API uses https://api.deepseek.com, and the public docs say deepseek-chat and deepseek-reasoner currently correspond to DeepSeek-V3.2, with deepseek-chat as non-thinking mode and deepseek-reasoner as thinking mode. The same page notes that this mapping differs from the App/Web version, and the pricing page lists a 128K context limit, plus tool calls and JSON output for both models.
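Because the API is OpenAI-compatible, a request is an ordinary HTTPS POST with a JSON body. The sketch below builds such a request with only the Python standard library but does not send it. It assumes the chat endpoint is the /chat/completions path under the base URL above and that authentication uses a Bearer token. The DEEPSEEK_API_KEY environment variable name is a convention, not a requirement, and build_chat_request is a hypothetical helper. Check these details against the current API docs before relying on them.

```python
import json
import os
import urllib.request

BASE_URL = "https://api.deepseek.com"


def build_chat_request(messages, model="deepseek-chat", api_key=None):
    """Build (but do not send) an OpenAI-compatible /chat/completions request."""
    # ASSUMPTION: key read from DEEPSEEK_API_KEY when not passed explicitly.
    key = api_key or os.environ.get("DEEPSEEK_API_KEY", "")
    body = {"model": model, "messages": messages, "stream": False}
    return urllib.request.Request(
        url=f"{BASE_URL}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {key}",
        },
        method="POST",
    )


# Example: a non-thinking request through the deepseek-chat alias.
request = build_chat_request(
    [{"role": "system", "content": "You are a helpful assistant."},
     {"role": "user", "content": "Hello"}],
    api_key="test-key",
)
```

Passing the returned object to urllib.request.urlopen would actually send it. Changing the model argument to "deepseek-reasoner" targets thinking mode through the alias instead.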

Thinking can be enabled in two documented ways: by setting model="deepseek-reasoner" or by enabling the thinking parameter. The official thinking guide also shows that reasoning text is exposed through reasoning_content, and that the same thinking mode now supports tool calls. This is one of the biggest changes from older DeepSeek explainers, which often frame reasoning as a standalone model behavior rather than part of a broader tool-using workflow.
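The two routes into thinking mode, and the split between reasoning text and final answer, can be sketched as plain request and response dicts. The alias route follows the text above directly. The shape of the thinking parameter ({"type": "enabled"}) is an assumption in this sketch and should be confirmed in the official Thinking Mode guide. The helper names are hypothetical.

```python
def thinking_request_body(messages: list, via_alias: bool = True) -> dict:
    """Build a request body that asks for thinking mode by either documented route."""
    if via_alias:
        # Route 1: select the thinking-mode alias directly.
        return {"model": "deepseek-reasoner", "messages": messages}
    # Route 2: keep the non-thinking alias and enable the thinking parameter.
    # ASSUMPTION: the parameter's shape below; confirm it in the Thinking Mode guide.
    return {
        "model": "deepseek-chat",
        "messages": messages,
        "thinking": {"type": "enabled"},
    }


def split_reply(message: dict) -> tuple:
    """Return (reasoning, answer) from an assistant message; reasoning may be absent."""
    reasoning = message.get("reasoning_content") or ""
    answer = message.get("content") or ""
    return reasoning, answer
```

Keeping the reasoning and the answer apart makes it easy to log or display the trace separately. It also avoids feeding the trace back into later turns by accident.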

Developers should also note two practical implementation rules. First, DeepSeek’s /chat/completions API is documented as stateless, so conversation history must be passed on every request. Second, DeepSeek’s thinking-mode guide says that during a tool-using reasoning turn, reasoning_content must be handled correctly and cleared between user turns; otherwise the API can return a 400 error.
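Both rules are enforced on the client side. Below is a minimal, hypothetical history manager; the Conversation class and its method names are illustrative, not part of any DeepSeek SDK. It resends the full message list on every call and removes reasoning_content from earlier assistant messages whenever a new user turn starts. It also assumes that reasoning_content produced inside a tool-using turn is kept until that turn ends, which is how the rule is summarized above. Verify the exact behavior against the current thinking-mode guide.

```python
import copy


class Conversation:
    """Client-side history for a stateless /chat/completions API (hypothetical helper)."""

    def __init__(self, system_prompt=None):
        self.messages = []
        if system_prompt:
            self.messages.append({"role": "system", "content": system_prompt})

    def start_user_turn(self, text):
        # New user turn: clear reasoning_content left on earlier assistant messages.
        for m in self.messages:
            if m.get("role") == "assistant":
                m.pop("reasoning_content", None)
        self.messages.append({"role": "user", "content": text})

    def add_assistant(self, message):
        # Within a turn (e.g. between tool calls), keep the message as returned.
        # ASSUMPTION: reasoning_content is passed back until the turn ends.
        self.messages.append(dict(message))

    def add_tool_result(self, tool_call_id, content):
        self.messages.append(
            {"role": "tool", "tool_call_id": tool_call_id, "content": content}
        )

    def payload(self):
        # The API keeps no state, so the whole history goes out on every request.
        return copy.deepcopy(self.messages)
```

Copying in add_assistant and payload keeps the caller's dicts and the outgoing request independent of the stored history. That makes it safe to edit one without the other.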

Official access methods today

DeepSeek currently offers three official access paths: Web, App, and API. The official app announcement says the app is available through the App Store, Google Play, and major Android markets. It lists email, Google, and Apple ID sign-in, plus cross-platform history sync, web search, Deep-Think, file upload, and text extraction. DeepSeek’s chat site itself describes the web product as an assistant for coding, content creation, file reading, and long-context document work.

The access caveat is just as important as the access options. DeepSeek’s own app announcement warns users to download only from official channels, and the API docs separately warn that the API’s model mapping is not identical to the App/Web version. So any guide that treats “DeepSeek” as one uniform experience across web chat, mobile, hosted API, and downloadable weights is oversimplifying the product.

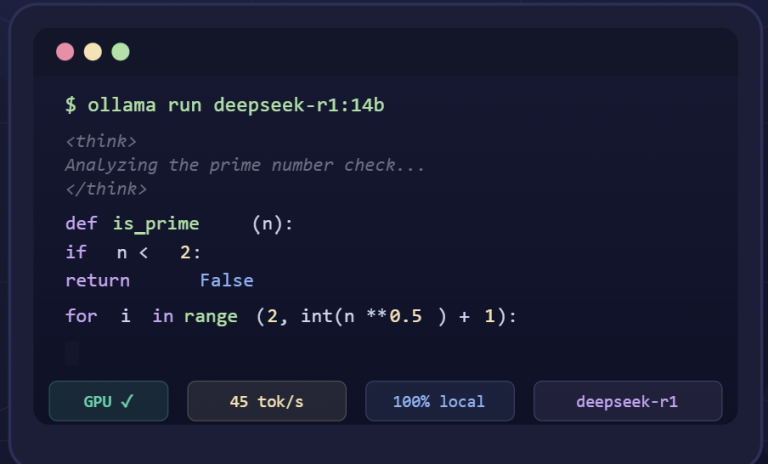

Open weights, code, and licensing

DeepSeek’s licensing history is one of the most misunderstood parts of the ecosystem. The official Hugging Face repositories for DeepSeek-V2.5-1210 and DeepSeek-V3 say the code repository is under MIT, but the actual model use is subject to a separate Model License; both pages still say the model line supports commercial use. In practical terms, that means older DeepSeek releases were open enough for broad use, but not all of them were clean MIT-style releases of both code and weights.

By contrast, DeepSeek’s R1, V3.1, and V3.2 repositories say the repository and model weights are under MIT. The R1 model card goes even further, explicitly saying the series supports commercial use and derivative works, including distillation, while also clarifying that some distilled variants inherit license obligations from the upstream Qwen or Llama bases they were built on.

So the most accurate short description is this: DeepSeek’s newer releases use very permissive MIT licensing, but the family as a whole does not share one uniform license. Inferring from the official repositories, V2, V2.5, and the original V3 are better described as custom-licensed open-weight releases. R1, V3-0324, V3.1, and V3.2 are much cleaner MIT releases.

Practical uses today

In real-world use, DeepSeek looks strongest in four areas: coding, instruction-following and writing, app-level web search and file handling, and agent-style tool workflows. That is not just marketing language; it is the pattern that emerges across the official V2.5, R1-0528, V3.1, V3.1-Terminus, and V3.2 release notes, which repeatedly emphasize code quality, search, structured outputs, agent tasks, and tool use.

The limits are real, too. DeepSeek’s own change log says R1-0528 reduced hallucinations, which also implies hallucinations were still a live problem worth addressing. The same log notes that complex reasoning tasks may consume more tokens than earlier R1. And while DeepSeek supports JSON output and function calling, those features improve workflow structure; they do not turn the model into a reliable source of truth by themselves.
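One practical way to respect that limit is to treat a JSON-mode reply as untrusted input. Parse it, check that the expected keys and types are present, and fail loudly if anything is off. The function below is a hypothetical sketch of that check, and the required-fields mapping is an illustrative convention. Passing it proves only that the reply has the right shape, not that its contents are correct.

```python
import json


def parse_json_reply(content, required):
    """Parse a JSON-mode reply and check required keys/types. Checks shape, not truth."""
    if not content or not content.strip():
        # Treat an empty reply as a failure rather than as an empty result.
        raise ValueError("empty reply")
    data = json.loads(content)  # json.JSONDecodeError is a subclass of ValueError
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    for key, expected_type in required.items():
        if key not in data:
            raise ValueError(f"missing key: {key}")
        if not isinstance(data[key], expected_type):
            raise ValueError(f"wrong type for {key}: {type(data[key]).__name__}")
    return data


# Example: a reply that must contain a string title and an integer year.
schema = {"title": str, "year": int}
```

A failed check is a signal to retry or fall back. A passed check still leaves fact-checking the values to the application.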

Privacy and what users should know

DeepSeek’s current privacy policy says it collects personal data in three broad ways: information users provide directly, information collected automatically from device and network activity, and information received from other sources such as Apple or Google login services and publicly available online sources. It explicitly says this can include prompts, uploaded files, chat history, device identifiers, IP-based location, and other service logs.

The same policy says DeepSeek uses this data to operate and improve the service, including training and improving its technology, and that it may share data with service providers, search-service integrations, analytics providers, safety-monitoring providers, and entities inside its own corporate group for functions including storage, research and development, and foundation model training and optimization. It also says personal data is collected, processed, and stored in the People’s Republic of China.

Retention is not framed as zero-retention by default. Instead, the policy says retention periods depend on purpose and legal requirements, and gives the example that account, input, and payment data are kept for as long as DeepSeek is processing them to provide the service. For ordinary users and organizations, the practical reading is straightforward: do not treat DeepSeek as a zero-data-risk environment unless you have a separate contractual or technical basis for doing so.

This policy context is reinforced by external scrutiny. Reuters reported that South Korea’s data protection authority said DeepSeek transferred user information and prompts without permission during its initial South Korean rollout; Australia banned DeepSeek on government devices over security concerns; Taiwan banned government departments from using it; and Italy’s privacy regulator blocked the app after saying DeepSeek’s responses to privacy questions were insufficient. These actions do not make DeepSeek unusable for everyone, but they do make privacy and jurisdiction material parts of any deployment decision.

A balanced comparison with competitors

DeepSeek’s strongest argument today is not that it is universally “the best.” It is that it combines several things that many teams want at once: an OpenAI-compatible API, current support for thinking and non-thinking modes, explicit tool-use support in reasoning flows, and newer open-weight releases under MIT. For developers building agents, coding assistants, or local deployments around public weights, that is a meaningful combination.

The counterargument is equally concrete. DeepSeek’s public model aliases move over time, the API differs from the App/Web version, licensing changes across generations, and the privacy posture is much less straightforward than a generic “open model” label might suggest. So compared with competitors, DeepSeek looks strongest where openness, tool use, and integration flexibility matter most, and weaker where governance, residency, and enterprise compliance simplicity matter most. That is an inference from the official docs and Reuters reporting, not a marketing slogan.

Can old articles about DeepSeek still be trusted?

Older DeepSeek articles are best treated as historical snapshots, not current buying guides. The legacy explainer at chat-deep.ai, for instance, says DeepSeek-V2 launched in late 2024, while DeepSeek’s own V2 paper and model card put the release in May 2024. That is not a trivial date error. It would place V2 after its own successor, V2.5 (September 2024), and compress the more than seven-month gap between V2 and V3 into a few months at most.

Older explainers also tend to stop at V3 and R1, ignoring V3-0324, R1-0528, V3.1, V3.1-Terminus, V3.2-Exp, and V3.2. They also rarely explain that deepseek-chat and deepseek-reasoner are now aliases for DeepSeek-V3.2 modes rather than static references to older generations. That omission matters more in DeepSeek than in many AI products, because the current identity of an alias is part of how the platform actually works.

A simple rule works well here: if a DeepSeek explainer does not tell you what deepseek-chat and deepseek-reasoner map to today, how thinking mode works, and how the license changes across generations, it is no longer a reliable current guide. It may still be useful as background reading, but not as an up-to-date reference.

Conclusion

DeepSeek in April 2026 is not just “the company behind V3 and R1.” It is a moving platform whose current public API centers on DeepSeek-V3.2, whose newer releases are more agent-oriented than many people realize, whose licensing history is mixed rather than uniform, and whose privacy posture deserves serious attention before enterprise or sensitive use. If you want the current picture, you need the release notes, the current API docs, the model repositories, and the privacy policy together—not a frozen explainer from early 2025.

This article explains how DeepSeek evolved from V2 to V3.2, but it is not the best first stop for every reader. For a simpler current overview of the official website, app, API, models, pricing, and status pages, start with our current DeepSeek AI guide, then use this page when you need the historical and release-level detail.

References

- DeepSeek API Docs — Change Log.

- DeepSeek API Docs — Your First API Call.

- DeepSeek API Docs — Models & Pricing.

- DeepSeek API Docs — Thinking Mode and Multi-round Conversation.

- DeepSeek API release notes for V2.5, V2.5-1210, V3, R1-0528, V3.1, V3.1-Terminus, V3.2-Exp, and V3.2.

- DeepSeek Privacy Policy.

- DeepSeek-V2 paper and official Hugging Face model pages for V2, V3, R1, V3.1, and V3.2.