Quick answer: Use the official DeepSeek API if you want the easiest hosted developer path, current DeepSeek-V4 API models, OpenAI-compatible requests, Anthropic-compatible requests, documented JSON Output, Tool Calls, thinking mode, FIM, Chat Prefix Completion, token billing, and no GPU operations. Run DeepSeek locally if you need offline use, tighter data-control boundaries, local experimentation, checkpoint control, or self-hosted deployment, and you can manage model files, hardware, runtimes, security, logs, monitoring, and performance tradeoffs.

The key point is that DeepSeek local and DeepSeek API are not the same thing. The current official DeepSeek API is a hosted developer service using deepseek-v4-flash and deepseek-v4-pro. Running DeepSeek locally usually means downloading open-weight model checkpoints and serving them through a local or self-hosted runtime such as Ollama, LM Studio, vLLM, SGLang, or another inference stack.

Important V4 update: Do not describe deepseek-chat and deepseek-reasoner as the primary current API model IDs. They are now legacy compatibility aliases. For new API integrations, use deepseek-v4-flash or deepseek-v4-pro directly.

Want the easiest way to try prompts first? Use the Chat-Deep.ai browser chat for quick prompts without managing GPUs, local runtimes, or API setup. It is an independent browser experience. For official API keys, billing, production developer access, official app access, or account support, use the official DeepSeek platform and documentation.

Independent guide: Chat-Deep.ai is an independent DeepSeek guide and browser access site. This article is not affiliated with DeepSeek, DeepSeek.com, the official DeepSeek app, the official DeepSeek developer platform, Ollama, LM Studio, vLLM, SGLang, Hugging Face, ModelScope, OpenAI, Anthropic, or any model/runtime provider.

Last verified: April 24, 2026.

Current DeepSeek API snapshot

- Current API model IDs: deepseek-v4-flash and deepseek-v4-pro

- Current API generation: DeepSeek-V4 Preview

- Base URL for OpenAI-compatible requests: https://api.deepseek.com

- Base URL for Anthropic-compatible requests: https://api.deepseek.com/anthropic

- Context length: 1M tokens

- Maximum output: 384K tokens

- Thinking mode: supported on both current V4 API models

- Non-thinking mode: supported on both current V4 API models

- JSON Output and Tool Calls: supported on both current V4 API models

- FIM Completion: supported in non-thinking mode only

- Legacy aliases: deepseek-chat and deepseek-reasoner currently route to deepseek-v4-flash non-thinking and thinking modes

- Legacy alias retirement: DeepSeek says deepseek-chat and deepseek-reasoner will be retired after July 24, 2026, 15:59 UTC

This guide is updated to stay consistent with the current Chat-Deep.ai homepage, DeepSeek API guide, DeepSeek API pricing guide, DeepSeek Models hub, DeepSeek for Coding guide, Token Usage guide, Thinking Mode guide, Tool Calls guide, and JSON Output guide.

Table of contents

- Critical update: V4 API vs old V3.2 wording

- Simple recommendation

- Quick decision: DeepSeek Local vs API

- What “DeepSeek API” means today

- What “running DeepSeek locally” means

- Hosted API models vs local model families

- Can you run DeepSeek-V4 locally?

- DeepSeek Local vs API comparison table

- Cost: V4 API tokens vs local infrastructure

- Privacy and data control

- Quality and model behavior

- Performance and latency

- Reliability and operations

- Features: JSON, Tool Calls, thinking mode, local servers

- When local DeepSeek is probably the wrong choice

- When the official API may be the wrong choice

- Security checklist before choosing local or API

- Recommended choices by use case

- Migration notes for old V3.2 API wording

- Common mistakes to avoid

- Related guides

- FAQ

- Official sources

Critical update: V4 API vs old V3.2 wording

The most important update for this page is that DeepSeek’s hosted API has moved to V4 model IDs. Older content that says the current hosted API is centered on deepseek-chat, deepseek-reasoner, DeepSeek-V3.2, 128K context, and old V3.2 pricing is outdated for new API guidance.

| Old wording | Current status | Correct wording now |
|---|---|---|
| deepseek-chat as the current default API model | Legacy compatibility alias | Use deepseek-v4-flash for fast and economical hosted API workflows |
| deepseek-reasoner as the current reasoning API model | Legacy compatibility alias | Use deepseek-v4-pro for harder reasoning, coding, long-context, and agentic tasks |
| DeepSeek-V3.2 as the current hosted API generation | Historical / previous hosted API generation | Use DeepSeek-V4 Preview as the current API generation |
| 128K as the current hosted API context | Outdated for current V4 API docs | Use 1M context for current V4 API models |
| Old V3.2 pricing | Outdated | Use V4-Flash and V4-Pro pricing from the current pricing table |

V3.2, R1, R1-Distill, DeepSeek-Coder, and V4 open weights still matter for local and open-weight discussions. The mistake is treating those local or historical model families as if they were the current hosted API model IDs.

Simple recommendation

- Just want to try prompts? Use the Chat-Deep.ai browser chat or the official DeepSeek chat.

- Building an app or developer workflow? Start with the official DeepSeek API and our DeepSeek API guide.

- Need the fastest current hosted model? Start with deepseek-v4-flash.

- Need stronger reasoning or coding quality? Use deepseek-v4-pro.

- Need offline or private local experiments? Try a smaller local open-weight model through Ollama or LM Studio.

- Need scalable self-hosted inference? Evaluate vLLM, SGLang, or another production serving stack with serious infrastructure planning.

- Need both speed and control? Use a hybrid workflow with clear data-routing rules.
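A hybrid workflow only helps if the routing rules are explicit enough to review. The sketch below is one hypothetical way to write them down; the `Prompt` fields, the route names, and the rule order are assumptions made for illustration and are not part of any DeepSeek API.

```python
from dataclasses import dataclass


@dataclass
class Prompt:
    text: str
    # Assumed to be set by your own policy check or classifier.
    contains_sensitive_data: bool


def choose_route(prompt: Prompt, network_available: bool = True) -> str:
    """Return "local" or "api" according to simple, auditable rules."""
    # Rule 1: sensitive data never leaves the machine or private network.
    if prompt.contains_sensitive_data:
        return "local"
    # Rule 2: without connectivity, the hosted API is not an option.
    if not network_available:
        return "local"
    # Rule 3: everything else goes to the hosted API.
    return "api"
```

Keeping the sensitivity check first means a connectivity change can never cause a sensitive prompt to be sent to the hosted service.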

Quick decision: DeepSeek Local vs API

If you only need the practical answer, use this table as a starting point.

| Need | Choose the official DeepSeek API if… | Choose local DeepSeek if… | Choose a hybrid workflow if… |
|---|---|---|---|
| Fastest setup | You want to connect an app quickly with an API key and OpenAI-compatible requests. | You are comfortable installing local runtimes and downloading model files. | You want to prototype with the API while testing local models in parallel. |
| Production apps | You want hosted infrastructure, current V4 API model IDs, documented API features, and fewer operations tasks. | You already have GPU infrastructure and can operate a model server reliably. | You want API-first production with local fallback or local-only internal routes. |
| Privacy-sensitive experiments | Your data policy allows sending prompts and outputs to a hosted provider. | You need prompts, files, and outputs to remain inside your machine or private infrastructure. | You can route sensitive prompts locally and non-sensitive prompts to the API. |
| Offline use | You do not need offline operation. | You need the model to work without internet access after setup. | You use local models offline and the API when connectivity is available. |
| Low operational burden | You do not want to manage GPUs, drivers, storage, monitoring, scaling, or model serving. | You accept the operational work in exchange for more control. | You keep current hosted API features for production and local models for selected tasks. |
| Current V4 feature support | You need documented JSON Output, Tool Calls, thinking mode, FIM, Chat Prefix Completion, token usage fields, and official pricing behavior. | You can tolerate runtime-specific behavior and test feature support yourself. | You use the API for feature-sensitive tasks and local models for simpler private tasks. |
| Model control | You are comfortable using the current hosted V4 model IDs exposed by the official API. | You want to choose specific checkpoints, quantizations, templates, or runtimes. | You want API consistency for production and local control for experimentation. |
| Learning and experimentation | You want to learn API integration and product development. | You want to learn local AI, model files, quantization, and serving tradeoffs. | You want to understand both hosted and local AI workflows. |

What “DeepSeek API” means today

In this guide, DeepSeek API means the official hosted developer API from DeepSeek. It is different from running a DeepSeek model on your laptop, and it is also different from the official DeepSeek web or app experience.

The official DeepSeek API accepts both OpenAI-compatible and Anthropic-compatible request formats. The OpenAI-compatible base URL is https://api.deepseek.com. The Anthropic-compatible base URL is https://api.deepseek.com/anthropic. DeepSeek also documents https://api.deepseek.com/v1 as an OpenAI compatibility path in some contexts, but the /v1 in that URL is not a model version.

For new API work, use these current hosted API model IDs:

- deepseek-v4-flash: fast and economical V4 model for everyday apps, summaries, extraction, classification, routine coding, and high-volume workflows.

- deepseek-v4-pro: stronger V4 model for harder reasoning, complex coding, agentic workflows, long-context analysis, and high-value production tasks.

The old names deepseek-chat and deepseek-reasoner are compatibility aliases during the V4 migration period. For new docs, code examples, pricing explanations, and model-selection tables, use the V4 names directly.
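To make the OpenAI-compatible format concrete, here is a minimal sketch that builds a chat request using only the Python standard library. It does not send anything. The `/chat/completions` path follows the usual OpenAI-style convention and is an assumption here, as are the helper name `build_chat_request` and the placeholder key; check the official API reference before relying on any of them.

```python
import json
import urllib.request

# OpenAI-compatible base URL as listed in this guide.
BASE_URL = "https://api.deepseek.com"


def build_chat_request(api_key: str, model: str, user_text: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    body = {
        "model": model,  # for example "deepseek-v4-flash" or "deepseek-v4-pro"
        "messages": [{"role": "user", "content": user_text}],
    }
    return urllib.request.Request(
        url=f"{BASE_URL}/chat/completions",  # assumed OpenAI-style path
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )
```

To actually send the request you could pass the result to `urllib.request.urlopen(request, timeout=60)` and decode the JSON response. Most teams will prefer an OpenAI-compatible SDK pointed at the same base URL.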

What “running DeepSeek locally” means

Running DeepSeek locally means running an open-weight DeepSeek checkpoint on your own computer, workstation, server, or private cloud environment. The model is served by a local or self-hosted runtime instead of DeepSeek’s hosted API.

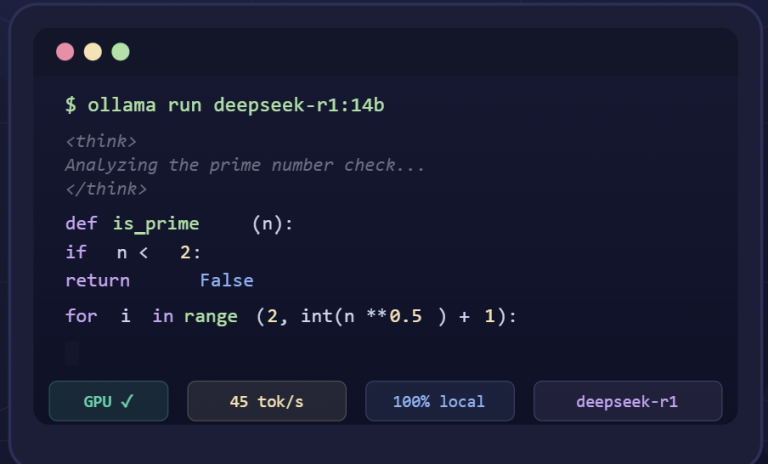

Common local paths include:

- Ollama: usually the easiest beginner path for running smaller local models. See our DeepSeek local install with Ollama guide.

- LM Studio: useful for a desktop interface and a local OpenAI-compatible server. See our DeepSeek in LM Studio guide.

- vLLM or SGLang: better suited for advanced self-hosted serving, GPUs, batching, OpenAI-compatible endpoints, and infrastructure workflows. For deployment-specific details, read our DeepSeek with vLLM guide.

- Custom internal serving: useful for teams with ML infrastructure, security requirements, internal tools, and enough capacity to monitor and operate inference reliably.

Local model names are not the same as official hosted API model IDs. A local runtime tag such as deepseek-r1:8b, a Hugging Face repository such as deepseek-ai/DeepSeek-R1-Distill-Qwen-32B, or a self-hosted alias chosen by your team is not the same thing as deepseek-v4-flash or deepseek-v4-pro on the official hosted API.
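One way to keep the two naming schemes apart is to store each backend's base URL and model name together and validate the pair at startup. This is an illustrative sketch only: the `Backend` structure and `check_backend` helper are hypothetical, and the local URLs assume the default ports that Ollama and vLLM commonly use for their OpenAI-compatible servers, which may differ in your setup.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Backend:
    base_url: str
    model: str
    hosted: bool  # True only for the official hosted API


BACKENDS = {
    "official": Backend("https://api.deepseek.com", "deepseek-v4-flash", hosted=True),
    # Assumed default local ports; adjust to your own configuration.
    "ollama": Backend("http://localhost:11434/v1", "deepseek-r1:8b", hosted=False),
    "vllm": Backend("http://localhost:8000/v1", "deepseek-ai/DeepSeek-R1-Distill-Qwen-32B", hosted=False),
}

# Current hosted model IDs named in this guide. Legacy aliases are left out
# on purpose so that new configurations do not use them.
HOSTED_MODEL_IDS = {"deepseek-v4-flash", "deepseek-v4-pro"}


def check_backend(backend: Backend) -> None:
    """Reject configs that pair a hosted model ID with a local server, or the reverse."""
    uses_hosted_id = backend.model in HOSTED_MODEL_IDS
    if backend.hosted != uses_hosted_id:
        raise ValueError(f"model {backend.model!r} does not belong on {backend.base_url}")
```

If your team deliberately gives a self-hosted deployment the same alias as a hosted model ID, this check is too strict and would need an explicit exception.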

Hosted API models vs local model families

DeepSeek model names can be confusing because the same ecosystem includes hosted API model IDs, compatibility aliases, open-weight model families, local runtime tags, distilled checkpoints, and historical coding models. Keep them separate.

| Model family or name | What it is | Use it for | Do not confuse it with |
|---|---|---|---|
| deepseek-v4-flash | Current hosted API model ID | Fast and economical official API workflows | Local runtime tags or old deepseek-chat wording |
| deepseek-v4-pro | Current hosted API model ID | Hard reasoning, complex coding, long-context, and agentic official API workflows | Local R1 tags or old deepseek-reasoner wording |
| deepseek-chat | Legacy hosted API compatibility alias | Migration notes only | A primary current model ID for new integrations |
| deepseek-reasoner | Legacy hosted API compatibility alias | Migration notes only | DeepSeek-R1 local models or the primary current reasoning API model |
| DeepSeek-V4 open weights | Open-weight V4 model family | Advanced local/self-hosted research and infrastructure projects | The easier hosted V4 API service |
| DeepSeek-R1 / R1-Zero | Open-weight reasoning model family | Reasoning research and advanced local/self-hosted experiments | The legacy deepseek-reasoner alias |
| R1-Distill models | Smaller distilled checkpoints based on Qwen and Llama families | More practical local reasoning experiments than full R1 | Full hosted DeepSeek API behavior |
| DeepSeek-V3.2 | Open-weight/historical model family and previous hosted API generation | Local/open-weight research, historical context, and model comparison | The current hosted API state |
| DeepSeek-Coder / Coder-V2 | Historical/open-weight coding model family | Local coding experiments and coding-model history | The current hosted V4 API models |

Can you run DeepSeek-V4 locally?

DeepSeek-V4 has open weights, but “open weights” does not automatically mean “easy to run on a normal laptop.” DeepSeek-V4-Pro is listed as a 1.6T total-parameter / 49B activated-parameter model, and DeepSeek-V4-Flash is listed as a 284B total-parameter / 13B activated-parameter model. Both are serious infrastructure targets, especially at long context.

For local learning, smaller distilled models such as R1-Distill variants are usually more practical. For advanced self-hosting, V4, V3.2, R1, and large distilled models require careful planning around GPUs, memory, precision, tensor parallelism, context length, serving engine, chat template, monitoring, and security.

A safe way to describe this is:

- Use the official hosted API when you want current V4 behavior without operating GPUs.

- Use smaller local open-weight models when you want offline experiments or local learning.

- Use self-hosted large DeepSeek models only when your team can operate the infrastructure reliably.
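A rough back-of-the-envelope calculation shows why the full V4 models are infrastructure targets. The sketch below estimates memory for the weights alone from the total parameter counts quoted above. It assumes every parameter is stored at the same precision and that all weights stay resident, which is typical for mixture-of-experts serving even though only a fraction of parameters is activated per token. It ignores KV cache, activations, and runtime overhead, so real requirements are higher. The precisions shown are plain arithmetic, not a claim that such builds are published.

```python
def weight_memory_gb(total_params_billions: float, bits_per_param: int) -> float:
    """Approximate memory for weights only, in decimal gigabytes (1 GB = 1e9 bytes)."""
    bytes_total = total_params_billions * 1e9 * bits_per_param / 8
    return bytes_total / 1e9


# Total parameter counts as quoted in this guide (in billions).
for name, params_b in [("DeepSeek-V4-Pro", 1600), ("DeepSeek-V4-Flash", 284)]:
    for bits in (16, 8, 4):
        print(f"{name} at {bits}-bit: ~{weight_memory_gb(params_b, bits):,.0f} GB")
```

Under these assumptions, 284B parameters at 4 bits per parameter still need about 142 GB for weights, and 1.6T parameters at 16 bits need about 3,200 GB, before any context is processed.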

DeepSeek Local vs API comparison table

| Factor | Official DeepSeek API | Local DeepSeek with Ollama or LM Studio | Self-hosted DeepSeek with vLLM or SGLang |
|---|---|---|---|
| Best for | Hosted apps, fast prototypes, production integrations, current V4 API features, and OpenAI-compatible / Anthropic-compatible workflows. | Personal local use, learning, offline drafts, smaller local experiments, and privacy-sensitive tests. | Private infrastructure, scalable serving, batching, internal endpoints, high-volume workloads, and advanced deployment. |
| Setup time | Usually fastest: create an API key, set the base URL, choose deepseek-v4-flash or deepseek-v4-pro, and send requests. | Moderate: install a runtime, download a model, and test prompts locally. | Highest: configure servers, drivers, GPUs, model serving, monitoring, and scaling. |
| Infrastructure owner | DeepSeek operates the hosted API infrastructure. | You operate your local machine and runtime. | You operate the full serving stack. |
| Model names | Current official model IDs: deepseek-v4-flash and deepseek-v4-pro. | Runtime-specific tags or local checkpoint names, such as R1, R1-Distill, V3.2, or V4 variants. | Model repository names or deployment-specific aliases configured by your team. |
| Model quality consistency | Most consistent for current hosted DeepSeek API behavior. | Depends on checkpoint, quantization, runtime, prompt template, context settings, and sampling. | Depends on checkpoint, serving engine, chat template, parser, sampling, context length, and deployment configuration. |
| Offline use | No. It requires network access to DeepSeek’s API. | Yes, after model download and local setup, if the whole workflow is local. | Possible inside private infrastructure or air-gapped environments if fully configured. |
| Data-control boundary | Prompts and outputs are sent to DeepSeek’s hosted service. | Can remain local if the runtime, UI, logs, plugins, telemetry, and network setup are local/private. | Can remain inside your infrastructure if logging, monitoring, access, and storage are controlled. |
| Cost model | Pay-per-token API billing. | Local hardware, electricity, storage, maintenance, and time. | GPU infrastructure, cloud compute, storage, monitoring, engineering time, and operations. |
| Latency | Depends on provider infrastructure, traffic, network path, prompt length, output length, and selected model. | Depends on local CPU/GPU, memory, quantization, model size, runtime, and context length. | Depends on GPU stack, batching, parallelism, model size, context length, and concurrency. |
| Scaling | Easier for most teams because hosting is handled externally. | Limited by your local machine. | Can scale, but only with serious infrastructure work. |
| Reliability | Depends on external API availability, account balance, network access, provider load, and official platform status. | Depends on your machine, local runtime, model files, and storage. | Depends on your deployment, monitoring, capacity, failover, and maintenance. |
| JSON Output / Tool Calls | Use documented official V4 API behavior. | Feature support varies by runtime, model, parser, and prompt template. | Feature support varies by serving engine, parser, chat template, and model. |
| Thinking mode behavior | Official V4 API behavior uses documented thinking / non-thinking modes. | Some local reasoning models may expose visible thinking traces depending on runtime and formatting. | May expose reasoning fields or parsed outputs depending on serving engine and parser configuration. |
| Maintenance burden | Lowest for most teams. | Moderate. | Highest. |

Cost: V4 API tokens vs local infrastructure

The official DeepSeek API is pay-per-token. Current V4 public pricing is listed per 1 million tokens and differs by model:

| Model | Input cache hit | Input cache miss | Output | Best use |
|---|---|---|---|---|
| deepseek-v4-flash | $0.028 / 1M tokens | $0.14 / 1M tokens | $0.28 / 1M tokens | Fast, economical, high-volume hosted API workflows. |
| deepseek-v4-pro | $0.145 / 1M tokens | $1.74 / 1M tokens | $3.48 / 1M tokens | Hard reasoning, complex coding, agentic, and long-context workflows. |

DeepSeek says product prices may change, so do not hardcode these numbers permanently into product pages, calculators, customer-facing quotes, or dashboards without re-checking the official pricing page. For current rates and billing explanation, use our DeepSeek API pricing guide and the official pricing page.

The API also has context caching enabled by default. Repeated prefixes across requests may count as cache hits, which can reduce input-token cost for workflows that reuse the same long system prompt, document prefix, few-shot examples, or conversation prefix. However, only repeated prefix portions can trigger cache-hit pricing, so you should not assume every request gets the cheaper rate.
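The effect of cache hits on a single request can be estimated with simple arithmetic. The sketch below uses the per-million-token prices from the table above, which may already be out of date by the time you read this. The function name and the way tokens are split into cached and uncached input are assumptions for illustration; real billing follows the token usage fields the API returns.

```python
from decimal import Decimal

# USD per 1M tokens, copied from the pricing table in this guide.
# Re-check the official pricing page before relying on these values.
PRICES = {
    "deepseek-v4-flash": {"hit": Decimal("0.028"), "miss": Decimal("0.14"), "out": Decimal("0.28")},
    "deepseek-v4-pro": {"hit": Decimal("0.145"), "miss": Decimal("1.74"), "out": Decimal("3.48")},
}
MILLION = Decimal(1_000_000)


def estimate_cost_usd(model: str, cached_input: int, uncached_input: int, output: int) -> Decimal:
    """Estimate the cost of one request from its three token counts."""
    price = PRICES[model]
    total = cached_input * price["hit"] + uncached_input * price["miss"] + output * price["out"]
    return total / MILLION
```

For example, a deepseek-v4-flash request with 8,000 input tokens and 500 output tokens comes to $0.00126 with no cache hits, and $0.000588 if 6,000 of the input tokens are a cached prefix. Using `Decimal` avoids floating-point rounding in the totals.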

Local DeepSeek does not mean “free.” Local deployment can reduce or remove per-token API billing, but it adds other costs:

- Hardware purchase or cloud GPU rental.

- Electricity, cooling, storage, and hardware replacement.

- Engineering time for installation, tuning, updates, and monitoring.

- Security review, logging, access control, and backups.

- Scaling, queueing, and failover if the model serves real users.

- Model download bandwidth and storage for large checkpoints.

- Runtime maintenance, driver updates, CUDA or ROCm compatibility, and dependency updates.

There is no universal break-even point. The API is often better for low to medium traffic, early product development, teams without GPU operations experience, and workflows that require official API features. Local or self-hosted deployment may make sense when volume is high, privacy or offline needs are strong, or your team already has the infrastructure and skills to operate models reliably.
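Although there is no universal break-even point, you can compute one for your own numbers. The sketch below is deliberately simplified: it treats local cost as a fixed monthly amount, uses a single blended API price per million tokens, and ignores differences in quality, features, and engineering risk. Both inputs are values you supply; nothing here comes from DeepSeek.

```python
def break_even_million_tokens(monthly_local_cost_usd: float, api_usd_per_million_tokens: float) -> float:
    """Monthly volume, in millions of tokens, at which API spend equals local spend."""
    if api_usd_per_million_tokens <= 0:
        raise ValueError("API price must be positive")
    return monthly_local_cost_usd / api_usd_per_million_tokens
```

As a purely hypothetical example, a local setup costing $2,000 per month compared against a blended API price of $0.28 per million tokens breaks even at roughly 7,143 million tokens per month. Below that volume, the API is cheaper under these assumptions.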

Privacy and data control

Privacy is one of the strongest reasons to compare DeepSeek local vs API carefully. When you use the official DeepSeek API, prompts and outputs are sent to DeepSeek’s hosted service. Before using the API with personal, sensitive, regulated, or customer data, review DeepSeek’s official privacy policy, open platform terms, and your own organization’s data-handling rules.

Local deployment can improve data-control boundaries, but only if the entire stack is actually local or private. “Local” is not automatically private. A desktop app, plugin, telemetry service, remote model downloader, proxy, analytics script, crash reporter, update mechanism, or logging system can still send data outside your machine or organization.

For privacy-sensitive use, check:

- Whether prompts, files, embeddings, retrieved context, tool arguments, or outputs are logged.

- Whether the runtime has telemetry or external integrations enabled.

- Whether plugins or tools can send data to third-party services.

- Whether model files come from trusted repositories.

- Whether internal users can access stored prompts or outputs.

- Whether local chat UIs store histories on disk.

- Whether vector databases or retrieval layers store sensitive chunks.

- Whether your organization has data retention, consent, and regional compliance requirements.

This article is not legal advice. For regulated, enterprise, medical, legal, financial, or customer-data workflows, involve your legal, security, and compliance teams before sending data to a hosted API or deploying local models internally.

Quality and model behavior

The official API gives you the current hosted DeepSeek V4 API behavior. That is valuable if your app depends on predictable hosted model IDs, documented features, official token usage fields, current pricing, current context limits, and supported API parameters.

Local model quality depends on many factors:

- The exact checkpoint you choose.

- Model size and whether it is distilled, quantized, base, instruct, or full-weight.

- The runtime, prompt template, and chat formatting.

- Context length settings and memory limits.

- Sampling parameters such as temperature and top-p.

- Whether your runtime correctly handles reasoning, tool calls, JSON Output, or structured output.

- Whether the model was designed for general chat, reasoning, code, or local inference.

DeepSeek-R1 and DeepSeek-R1-Zero are open-weight reasoning models with 671B total parameters and 37B activated parameters. The R1-Distill family includes smaller 1.5B, 7B, 8B, 14B, 32B, and 70B checkpoints based on Qwen and Llama families. Those distilled models are often more practical locally, but a small distill model should not be described as equivalent to the current hosted V4 API.

DeepSeek-V3.2 is open-weight and MIT-licensed on Hugging Face, but it is the previous hosted API generation, not the current one. Its model card notes that V3.2-Speciale is designed exclusively for deep reasoning tasks and does not support tool-calling functionality. If your application depends on Tool Calls, do not assume every open-weight DeepSeek variant behaves the same way as the hosted V4 API.

Performance and latency

API latency depends on provider infrastructure, traffic, network distance, inference scheduling, prompt length, selected model, thinking mode, tool loops, and output length. DeepSeek’s rate-limit documentation says the API does not constrain users with a fixed rate limit, but under high traffic requests may wait while the HTTP connection remains open, and if inference does not start after a long waiting period the server may close the connection.

Implementation note: Even when there is no fixed published user rate limit, the official error-code docs still list 429 Rate Limit Reached. Production clients should implement timeouts, retry handling, exponential backoff, request pacing, queueing, and graceful fallback.
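The retry and backoff advice can be sketched as a small wrapper. This is a generic pattern, not DeepSeek client code: `RetryableError` and `call_with_backoff` are hypothetical names, and deciding which failures count as retryable (for example HTTP 429 or server overload) is left to the `send` callable you provide. Per-request timeouts should be set inside `send` itself.

```python
import random
import time


class RetryableError(Exception):
    """Raise this from `send` for failures worth retrying, such as HTTP 429 or 5xx."""


def call_with_backoff(send, max_attempts=5, base_delay=1.0, max_delay=30.0, sleep=time.sleep):
    """Call send() until it succeeds, waiting exponentially longer after each failure."""
    for attempt in range(max_attempts):
        try:
            return send()
        except RetryableError:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the error to the caller
            # The delay ceiling doubles each attempt, capped at max_delay.
            ceiling = min(max_delay, base_delay * (2 ** attempt))
            # Random jitter spreads retries out so clients do not retry in lockstep.
            sleep(random.uniform(0, ceiling))
```

The `sleep` parameter exists so tests can replace real waiting with a stub. Non-retryable errors, such as authentication failures or insufficient balance, should be raised as other exception types so they fail immediately.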

Local latency depends on your hardware and runtime. A smaller quantized model may feel fast on capable local hardware, while a large model with long context can be slow or fail due to memory limits. For self-hosted servers, concurrency, batching, context length, GPU utilization, tensor parallelism, cold starts, and queue depth matter.

Measure your own workload instead of relying on generic benchmark claims. Useful metrics include:

- TTFT: time to first token.

- Tokens per second: generation speed under realistic prompts.

- p95 latency: user-facing latency under load.

- Error rate: failed requests, timeouts, overload behavior, malformed responses, and parser errors.

- Cost per request: API token cost or infrastructure cost allocation.

- Throughput: successful requests per minute under realistic concurrency.

- Context sensitivity: speed and memory behavior as prompt length grows.
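Two of these metrics are easy to compute from your own request logs. The sketch below uses the nearest-rank definition of a percentile, which is one common choice among several, and measures generation speed from the first token onward so that TTFT is reported separately. The function names and the timestamp inputs are assumptions about how you record measurements.

```python
import math


def percentile_nearest_rank(values, pct):
    """Nearest-rank percentile: the smallest sample with at least pct% of samples at or below it."""
    if not values:
        raise ValueError("no samples")
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def tokens_per_second(output_tokens, first_token_time, last_token_time):
    """Generation speed measured from the first token to the last, in tokens per second."""
    elapsed = last_token_time - first_token_time
    if elapsed <= 0:
        raise ValueError("last token must arrive after the first token")
    return output_tokens / elapsed
```

With ten latency samples, the nearest-rank p95 is simply the slowest one, which is a reminder to collect enough requests under realistic concurrency before drawing conclusions.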

For many teams, the API is easier to scale. For teams with infrastructure expertise, local or self-hosted serving can be optimized, but it requires ongoing measurement and operations work.

Reliability and operations

The official DeepSeek API reduces infrastructure work, but it still depends on external availability, account balance, network access, provider behavior, and possible overload. DeepSeek’s error-code documentation lists common categories such as invalid request format, authentication failure, insufficient balance, invalid parameters, rate limit, server error, and server overload.

Local deployment avoids dependency on the hosted API, but it adds your own operational risks:

- Machine or GPU failure.

- Model loading errors.

- Memory limits and context-length failures.

- Driver, CUDA, ROCm, or runtime compatibility issues.

- Storage and model-file corruption.

- Model-download and checksum issues.

- Security patches and dependency updates.

- Backups, monitoring, access control, and abuse prevention.

- Prompt logs, output logs, and private document retention.

- Capacity planning, autoscaling, and failover.
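Model-file corruption and download problems are among the easier risks to catch automatically. A minimal sketch, assuming the source you download from publishes a SHA-256 hash for each file: compute the hash of the local file in chunks and compare it before loading the model. The helper names are hypothetical.

```python
import hashlib


def sha256_of_file(path, chunk_size=1024 * 1024):
    """Hash a file in chunks so large checkpoints do not need to fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_published_hash(path, expected_hex):
    """Compare the file's SHA-256 with a published value, ignoring case and whitespace."""
    return sha256_of_file(path) == expected_hex.strip().lower()
```

A matching hash shows the file is intact and identical to what was published. It does not by itself prove that the publisher is trustworthy.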

If your application is production-facing, you need a reliability plan either way. For API workflows, monitor errors, balances, latency, usage, and provider status. For local workflows, monitor hardware, server health, logs, queue depth, memory pressure, and capacity. You can also check our DeepSeek status guide when investigating DeepSeek availability or API behavior.

Features: JSON, Tool Calls, thinking mode, and local servers

The official DeepSeek V4 API documents features such as JSON Output, Tool Calls, Chat Prefix Completion, FIM for non-thinking mode, context caching, token usage fields, OpenAI-compatible requests, Anthropic-compatible requests, and thinking / non-thinking modes.

Local runtimes may expose OpenAI-compatible endpoints, but compatibility does not guarantee feature parity. LM Studio can expose a local OpenAI-compatible endpoint. vLLM and SGLang can also serve models through OpenAI-compatible APIs for supported models. However, local runtime behavior can vary for JSON reliability, tool-calling schemas, reasoning fields, chat templates, unsupported parameters, streaming behavior, prompt encoding, and no-tool-call edge cases.

OpenAI-compatible does not mean DeepSeek-compatible in every detail. A local server may accept an OpenAI-style request, but JSON reliability, tool-call behavior, reasoning fields, chat templates, unsupported parameters, sampling defaults, and streaming behavior can still differ from the official DeepSeek API.

Some local reasoning models may show visible thinking traces depending on the model and runtime. Do not treat that as identical to the official API’s structured thinking-mode behavior. Also avoid exposing internal reasoning traces to users unless your product policy, safety review, and user experience justify it.
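If a local runtime returns reasoning inline, you can separate it from the answer before showing anything to users. The sketch below assumes the trace is wrapped in `<think>...</think>` tags, as R1-style checkpoints commonly emit; some runtimes instead return reasoning in a separate field, in which case this step is unnecessary. The function name is hypothetical.

```python
import re

THINK_BLOCK = re.compile(r"<think>(.*?)</think>", re.DOTALL)


def split_reasoning(raw_reply: str):
    """Return (visible_answer, reasoning_traces) from a raw local model reply."""
    # Collect the text inside each think block for optional internal logging.
    traces = [trace.strip() for trace in THINK_BLOCK.findall(raw_reply)]
    # Remove the blocks entirely to get the user-facing answer.
    answer = THINK_BLOCK.sub("", raw_reply).strip()
    return answer, traces
```

Whether to store the extracted traces at all is a policy decision, since they can contain the same sensitive content as the prompt.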

| Feature | Official DeepSeek V4 API | Local/self-hosted DeepSeek |
|---|---|---|
| JSON Output | Documented on current V4 API models | May work through prompting or runtime support, but must be tested |
| Tool Calls | Documented on current V4 API models | Depends on model, serving engine, parser, and chat template |
| Thinking mode | Documented through V4 thinking / non-thinking modes | May appear as visible tags or runtime-specific fields |
| FIM Completion | Documented as non-thinking mode only | Depends on checkpoint and runtime support |
| Chat Prefix Completion | Documented as beta | Depends on runtime and prompt handling |
| Context caching | Enabled by default in the official API | Depends on serving system and cache implementation |
| Token usage fields | Returned by the official API where applicable | Runtime-specific; may not match official fields |
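Because JSON behavior on local runtimes must be tested rather than assumed, it helps to validate every reply before your application uses it. The sketch below is a defensive parser, not a DeepSeek feature: it unwraps a Markdown code fence if the model added one, parses the text, and checks for keys you require. The function name and the `required_keys` parameter are hypothetical.

```python
import json

# Markdown code-fence marker, built indirectly so it does not break this listing.
FENCE = "`" * 3


def parse_json_reply(reply_text, required_keys=()):
    """Parse a model reply as a JSON object, or return None if it is unusable."""
    text = reply_text.strip()
    # Some models wrap JSON in a Markdown code fence; unwrap it if present.
    if text.startswith(FENCE):
        lines = text.splitlines()
        if lines[-1].strip() == FENCE:
            lines = lines[:-1]
        text = "\n".join(lines[1:]).strip()
    try:
        data = json.loads(text)
    except json.JSONDecodeError:
        return None
    if not isinstance(data, dict):
        return None
    if any(key not in data for key in required_keys):
        return None
    return data
```

Returning `None` instead of raising lets the caller decide whether to retry, fall back to another backend, or report an error. Counting these failures per backend gives you the parser error rate for your own workload.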

When local DeepSeek is probably the wrong choice

- You need the fastest production launch and do not have GPU or server experience.

- You need predictable official API features such as JSON Output, Tool Calls, FIM, Chat Prefix Completion, context caching, and thinking mode.

- You cannot maintain drivers, runtimes, security patches, monitoring, storage, and logs.

- You are assuming local is automatically free or private.

- You need exact behavior matching the official DeepSeek API.

- You do not have a plan for access control, network exposure, prompt logging, and abuse prevention.

- You want to run full DeepSeek-V4, full DeepSeek-R1, or full DeepSeek-V3.2 on a normal consumer laptop.

- Your application needs managed uptime, billing, official status tracking, and simple integration more than infrastructure control.

When the official API may be the wrong choice

- You need offline operation after setup.

- Your policy forbids sending prompts, files, outputs, or code to a hosted provider.

- You need full control over checkpoint, quantization, prompt templates, and runtime behavior.

- You already operate GPU infrastructure and can serve models reliably.

- You have very high volume and self-hosting economics are clearly better after measuring real workloads.

- You need an air-gapped or private-network deployment.

- You want to study open-weight model behavior rather than build on hosted API behavior.

Security checklist before choosing local or API

Before choosing DeepSeek local or API, answer these questions:

- What data will be sent to the model?

- Is offline use required?

- Who can access prompts, outputs, logs, embeddings, retrieved context, and uploaded files?

- Are prompts or outputs stored by your app, runtime, proxy, analytics tool, or observability system?

- Are local model files downloaded from trusted sources?

- Is the local runtime fully local, or does it include external services, plugins, telemetry, or update calls?

- Are API keys stored securely in environment variables or a secrets manager?

- Do you have monitoring for errors, latency, usage, costs, and abnormal traffic?

- Do you have a fallback if the API or local server fails?

- Have you reviewed model licenses, provider terms, privacy policies, and data-retention rules?

- Are tool calls or local agents allowed to read files, run commands, access databases, or call internal APIs?

- Do sensitive actions require human approval?
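For the API-key item in particular, a small startup check avoids hardcoded keys and accidental logging. This is a minimal sketch: the environment variable name `DEEPSEEK_API_KEY` is a common convention assumed here rather than something this guide's sources mandate, and both helper names are hypothetical.

```python
import os


def load_api_key(env_var="DEEPSEEK_API_KEY", environ=None):
    """Read the API key from the environment and fail loudly if it is absent."""
    environ = os.environ if environ is None else environ
    key = environ.get(env_var, "").strip()
    if not key:
        raise RuntimeError(f"{env_var} is not set; refusing to start without a key")
    return key


def redact(secret, visible=4):
    """Return a log-safe form of a secret that shows only its last few characters."""
    if len(secret) <= visible:
        return "*" * len(secret)
    return "*" * (len(secret) - visible) + secret[-visible:]
```

Log `redact(key)` rather than the key itself when you need to confirm which credential a service loaded.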

Recommended choices by use case

| Use case | Recommended path | Why |
|---|---|---|
| Personal experimentation | Local or API | Use local if you want to learn model running; use the API if you want faster access to current hosted V4 behavior. |
| Learning local AI | Local | Ollama or LM Studio is better for understanding local models, quantization, prompt behavior, and runtime limits. |
| Privacy-sensitive drafting | Local or hybrid | Local can keep drafts inside your environment if the entire stack is private. |
| Production SaaS feature | API | The hosted API is usually simpler for launch, scaling, current features, and model updates. |
| High-volume internal workflow | Hybrid or self-hosted | Use the API first to measure demand, then evaluate self-hosting if volume and infrastructure justify it. |
| Offline workstation | Local | The API requires network access; local models can work offline after setup. |
| Regulated or enterprise workflow | Depends on review | Choose only after security, legal, compliance, license, data-retention, and vendor review. |
| AI agent with Tool Calls | API or carefully tested self-hosted | Official API behavior is clearer; local tool-call support varies by runtime and model. |
| Long-context document analysis | API or advanced self-hosted | Current V4 API supports 1M context; local long context can be expensive and operationally complex. |
| Developer prototype | API first, local optional | The API is usually faster to integrate; local testing can come later if privacy, cost, or control requires it. |
| Codebase assistant | API, local, or hybrid | Use API for easiest V4 behavior; use local for sensitive code only if the whole stack is private. |
| Research on model weights | Local or self-hosted | Use open-weight checkpoints when the goal is model research rather than hosted product behavior. |

Migration notes for old V3.2 API wording

If you have old code, internal docs, or screenshots that still use deepseek-chat or deepseek-reasoner, update them carefully.

| Old item | Current compatibility behavior | Recommended replacement |
|---|---|---|
| deepseek-chat | Routes to deepseek-v4-flash non-thinking mode during the compatibility period | deepseek-v4-flash with thinking disabled |
| deepseek-reasoner | Routes to deepseek-v4-flash thinking mode during the compatibility period | deepseek-v4-pro for hard reasoning, or deepseek-v4-flash for lower-cost reasoning |
| “Current API is DeepSeek-V3.2” | Outdated for current hosted API | “Current hosted API generation is DeepSeek-V4 Preview” |
| “Current context is 128K” | Outdated for current V4 API docs | “Current V4 API models list 1M context” |
| Old single pricing table | Outdated | Use separate V4-Flash and V4-Pro pricing |

Old local guides can still mention R1, R1-Distill, V3.2, DeepSeek-Coder, or Coder-V2 if the context is local/open-weight. The important rule is to avoid calling them the current hosted API model IDs.
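When auditing old code, the alias rows of the migration table can be expressed as a small lookup. The sketch below is illustrative only: `ModelChoice`, `replacement_for`, and the `prefer_pro` flag are hypothetical names, and the `thinking` field is an application-level note rather than an API parameter, since this article does not specify how thinking mode is toggled in a request.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelChoice:
    model: str
    thinking: bool  # application-level flag, not an API field name


# Legacy hosted aliases covered by the migration table.
LEGACY_ALIASES = {"deepseek-chat", "deepseek-reasoner"}


def replacement_for(model_id, prefer_pro=False):
    """Return the suggested replacement for a legacy alias, else None.

    None means the ID is not a legacy hosted alias (for example a
    current V4 ID or a local runtime tag) and is left untouched.
    """
    if model_id not in LEGACY_ALIASES:
        return None
    if model_id == "deepseek-chat":
        return ModelChoice("deepseek-v4-flash", thinking=False)
    # deepseek-reasoner: Pro for hard reasoning, Flash for lower cost.
    model = "deepseek-v4-pro" if prefer_pro else "deepseek-v4-flash"
    return ModelChoice(model, thinking=True)
```

Returning `None` for anything that is not a legacy alias keeps local tags such as deepseek-r1:8b out of the hosted mapping, which matches the rule that local checkpoint names are not hosted API model IDs.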

Common mistakes to avoid

- Assuming deepseek-r1:8b equals deepseek-reasoner. It does not. One is a local runtime tag or checkpoint variant; the other is a legacy hosted API alias.

- Assuming deepseek-chat is still the main current model ID. For new API integrations, use deepseek-v4-flash or deepseek-v4-pro.

- Assuming local is always free. Local removes per-token billing, but you still pay for hardware, electricity, storage, maintenance, and engineering time.

- Assuming the API is always cheaper. High-volume or privacy-driven workflows may justify local or self-hosted infrastructure.

- Assuming full DeepSeek models run on a normal laptop. Full V4, full R1, and full V3.2 are serious infrastructure targets.

- Assuming local is automatically private. Check runtime telemetry, plugins, logs, analytics, proxies, and remote services.

- Assuming 1M context is practical locally. Context length affects memory and latency. Local runtime limits may differ from API limits.

- Copying old model mappings. API IDs, aliases, model versions, and local runtime tags can change.

- Hardcoding pricing into app UI. Prices can change, so use a “last checked” note and source link.

- Ignoring model card and license terms. Always review the license for the checkpoint and any base model dependencies.

- Ignoring logs and telemetry. Privacy depends on the whole system, not only where the model weights run.

- Assuming OpenAI-compatible local servers match the DeepSeek API exactly. Test JSON Output, Tool Calls, streaming, reasoning fields, and token usage behavior.
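The last point in the list can be turned into a small smoke test. The sketch below checks an already-parsed response dictionary against the response shape commonly used by OpenAI-compatible chat endpoints (`choices`, `message`, `tool_calls`, `usage`). It assumes that shape and does not call any server; `check_chat_response` is a hypothetical helper, and the exact fields your runtime returns should be confirmed against its own documentation.

```python
import json


def check_chat_response(response, expect_json=False):
    """Return a list of problems found in an OpenAI-style chat response dict."""
    problems = []
    choices = response.get("choices") or []
    if not choices:
        return ["no choices returned"]
    message = choices[0].get("message") or {}
    content = message.get("content")
    tool_calls = message.get("tool_calls") or []
    if content is None and not tool_calls:
        problems.append("message has neither content nor tool_calls")
    if expect_json and content is not None:
        try:
            json.loads(content)
        except (TypeError, ValueError):
            problems.append("content is not valid JSON")
    for call in tool_calls:
        # Tool-call arguments are expected to arrive as a JSON string.
        arguments = (call.get("function") or {}).get("arguments", "")
        try:
            json.loads(arguments)
        except (TypeError, ValueError):
            problems.append("tool call arguments are not valid JSON")
    usage = response.get("usage") or {}
    for field in ("prompt_tokens", "completion_tokens", "total_tokens"):
        if not isinstance(usage.get(field), int):
            problems.append(f"usage.{field} missing or not an integer")
    return problems
```

Running the same check against the hosted API and against a local server with identical prompts is one way to surface differences before they reach production.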

Related guides

- DeepSeek API Guide — current V4 model IDs, base URL, examples, and migration notes

- DeepSeek API Pricing — current V4 rates, cache-hit pricing, and billing explanation

- DeepSeek Token Usage — usage object, cache-hit tokens, cache-miss tokens, output tokens, and cost formulas

- DeepSeek Models Hub — V4, R1, V3.2, Coder, open weights, and model naming

- DeepSeek V4 Preview — current V4 overview and API model names

- DeepSeek R1 Guide — R1, R1-Zero, and R1-Distill context

- DeepSeek V3.2 Guide — historical and open-weight context

- DeepSeek-Coder Guide — coding-model history and local context

- How to Install DeepSeek Locally — beginner local setup path

- Run DeepSeek in LM Studio — desktop local model workflow

- DeepSeek with vLLM — advanced self-hosted serving

- DeepSeek for Coding — coding prompts, API, FIM, agents, and safety checks

- DeepSeek Tool Calls — function calling and tool-loop behavior

- DeepSeek JSON Output — structured JSON responses

- DeepSeek Thinking Mode — thinking and non-thinking behavior

- DeepSeek Status Guide — availability and troubleshooting links

FAQ

What is the difference between DeepSeek local and the DeepSeek API?

The DeepSeek API is a hosted developer service operated by DeepSeek. Running DeepSeek locally means downloading open-weight model checkpoints and serving them on your own machine or infrastructure. The API is usually easier to use, while local deployment gives more control but adds hardware, runtime, security, and maintenance work.

What are the current DeepSeek API model IDs?

The current official API model IDs are deepseek-v4-flash and deepseek-v4-pro. Use V4-Flash for fast and economical workflows, and V4-Pro for harder reasoning, coding, long-context analysis, and agentic workflows.

Should I still use deepseek-chat or deepseek-reasoner?

Not for new API integrations. They are legacy compatibility aliases during the V4 transition. deepseek-chat currently routes to deepseek-v4-flash non-thinking mode, and deepseek-reasoner currently routes to deepseek-v4-flash thinking mode.

Is a local DeepSeek-R1 model the same as deepseek-reasoner?

No. DeepSeek-R1 and R1-Distill are open-weight/local model families. deepseek-reasoner is a legacy hosted API compatibility alias. Do not treat local R1 runtime tags as the same thing as the hosted API alias.

Can I run DeepSeek-V4 locally?

DeepSeek-V4 has open weights, but full V4 models are large infrastructure targets. V4-Pro is listed as 1.6T total parameters and V4-Flash as 284B total parameters. Smaller distilled models are usually more practical for beginner local experiments.

Should I use the API or local DeepSeek for coding?

Use the API when you want current V4 hosted behavior, JSON Output, Tool Calls, FIM, Chat Prefix Completion, and lower operations burden. Use local models when code privacy, offline operation, or checkpoint control matters more and you can operate the runtime safely.

Is local DeepSeek automatically private?

No. Local model weights help, but privacy depends on the whole stack: runtime, UI, plugins, logs, telemetry, analytics, proxies, update mechanisms, and storage. Check all of them before treating a workflow as private.

Is the DeepSeek API safe for sensitive data?

That depends on your data policy and risk requirements. Prompts and outputs sent to the hosted API are processed by DeepSeek’s service. Review the official DeepSeek privacy policy, terms of service, and platform documentation before using the API with sensitive, regulated, customer, or proprietary data.

Is running DeepSeek locally free?

Not exactly. Local use may avoid per-token API billing, but you still pay through hardware, electricity, storage, setup time, maintenance, monitoring, security work, and infrastructure operations.

How much does the current DeepSeek API cost?

Current V4 pricing is per 1M tokens and differs by model. deepseek-v4-flash is priced at $0.028 cache-hit input, $0.14 cache-miss input, and $0.28 output. deepseek-v4-pro is priced at $0.145 cache-hit input, $1.74 cache-miss input, and $3.48 output. Always verify the official pricing page before production use.
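As a worked example of the arithmetic, the sketch below estimates the cost of one workload from cache-hit input, cache-miss input, and output token counts. The rates are copied from the figures quoted in this answer and may be out of date; the table name, the `PRICES_LAST_CHECKED` note, and `estimate_cost_usd` are hypothetical names chosen for illustration.

```python
from decimal import Decimal

# USD per 1M tokens, copied from the figures quoted in this article.
# Treat these as a snapshot and verify the official pricing page.
PRICES_LAST_CHECKED = "2026-04-24"
PRICES_PER_MILLION = {
    "deepseek-v4-flash": {
        "cache_hit": Decimal("0.028"),
        "cache_miss": Decimal("0.14"),
        "output": Decimal("0.28"),
    },
    "deepseek-v4-pro": {
        "cache_hit": Decimal("0.145"),
        "cache_miss": Decimal("1.74"),
        "output": Decimal("3.48"),
    },
}


def estimate_cost_usd(model, cache_hit_tokens, cache_miss_tokens, output_tokens):
    """Estimate cost in USD from token counts and per-1M-token rates."""
    price = PRICES_PER_MILLION[model]
    total = (
        cache_hit_tokens * price["cache_hit"]
        + cache_miss_tokens * price["cache_miss"]
        + output_tokens * price["output"]
    )
    return total / Decimal(1_000_000)
```

At the quoted rates, 200,000 cache-hit input tokens, 800,000 cache-miss input tokens, and 100,000 output tokens come to $0.1456 on deepseek-v4-flash and $1.769 on deepseek-v4-pro. `Decimal` is used instead of floats so that small per-token amounts do not pick up rounding noise.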

Do local DeepSeek models support JSON Output and Tool Calls?

It depends on the model, runtime, parser, chat template, and serving engine. The official hosted V4 API documents JSON Output and Tool Calls. Local OpenAI-compatible servers may not match the official API behavior exactly, so test your exact runtime before relying on those features.

What is a hybrid DeepSeek workflow?

A hybrid workflow routes some tasks to the hosted API and others to local models. For example, a product might send non-sensitive high-quality reasoning tasks to deepseek-v4-pro, while routing private drafts or offline tasks to a local model.
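A routing rule like the one described here can be sketched as a single function. This is one possible policy under simple assumptions, not a recommended production design: the `Task` fields, the `"local"` label, and `route` are hypothetical names, and real routing would also need to consider cost, latency, and compliance review.

```python
from dataclasses import dataclass

LOCAL_TARGET = "local"  # hypothetical label for your own local runtime


@dataclass(frozen=True)
class Task:
    sensitive: bool = False       # private drafts, proprietary code, and so on
    offline: bool = False         # no network access available
    hard_reasoning: bool = False  # worth the higher-cost hosted model


def route(task):
    """Pick a target: local for private or offline work, hosted otherwise."""
    if task.sensitive or task.offline:
        return LOCAL_TARGET
    if task.hard_reasoning:
        return "deepseek-v4-pro"
    return "deepseek-v4-flash"
```

Checking sensitivity and offline status first means a task flagged as private never reaches the hosted API, even if it would also benefit from stronger reasoning.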

Is Chat-Deep.ai the official DeepSeek website?

No. Chat-Deep.ai is an independent DeepSeek guide and browser access site. It is not affiliated with DeepSeek, DeepSeek.com, the official DeepSeek app, or the official DeepSeek developer platform.

Conclusion

For most developers, the official DeepSeek API is the best first choice because it gives current V4 model IDs, hosted infrastructure, documented API features, token usage fields, current pricing, and much lower operational burden. Start with deepseek-v4-flash for everyday work and use deepseek-v4-pro when stronger reasoning, coding, long-context analysis, or agentic behavior is worth the extra cost.

Local DeepSeek is the better choice when offline use, data-control boundaries, checkpoint control, or self-hosted infrastructure is the main priority. But local is not automatically private, free, or equivalent to the hosted API. You still need to verify the whole runtime, logs, telemetry, model license, prompt template, parser behavior, performance, and maintenance plan.

The safest rule is simple: use the hosted V4 API for current official behavior, use local models for controlled experiments or private/offline workflows, and keep old deepseek-chat / deepseek-reasoner wording only as legacy migration context.

Official sources and last verified

Last verified: April 24, 2026. DeepSeek model IDs, pricing, local model cards, feature support, context limits, compatibility aliases, and deprecation dates can change. Use the official sources below before shipping production code or publishing customer-facing docs.

- DeepSeek-V4 Preview Release

- DeepSeek API Quick Start

- DeepSeek Models & Pricing

- DeepSeek Thinking Mode

- DeepSeek JSON Output

- DeepSeek Tool Calls

- DeepSeek Context Caching

- DeepSeek Token & Token Usage

- DeepSeek Error Codes

- DeepSeek Rate Limit

- DeepSeek-V4 open-weight collection on Hugging Face

- DeepSeek-V4-Pro model card

- DeepSeek-R1 model card

- DeepSeek-V3.2 model card

- DeepSeek Privacy Policy

- DeepSeek Open Platform Terms of Service