Quick answer: DeepSeek can be useful for coding tasks such as debugging, refactoring, code explanation, test generation, pull request review, JSON reports, tool-connected developer workflows, FIM code infilling, and coding-agent setups. For current hosted API coding workflows, start with deepseek-v4-flash for fast and economical coding help, and use deepseek-v4-pro for harder reasoning, complex debugging, multi-file analysis, agentic coding, architecture tradeoffs, and long-context code review.

The older API names deepseek-chat and deepseek-reasoner are now legacy compatibility aliases. They currently route to deepseek-v4-flash non-thinking and thinking modes, and should appear only in migration notes, not as the primary model names for new coding examples.

Independent guide: Chat-Deep.ai is an independent DeepSeek guide and browser chat experience. This article is not affiliated with DeepSeek, DeepSeek.com, the official DeepSeek app, the official DeepSeek developer platform, Claude Code, Anthropic, OpenCode, Ollama, LM Studio, vLLM, Hugging Face, GitHub, or any model/runtime provider.

Last verified: April 24, 2026.

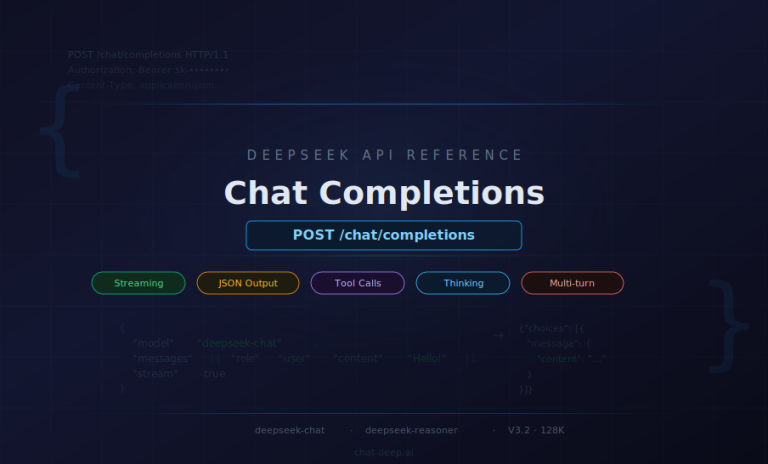

Current DeepSeek API snapshot for coding

- Current API model IDs: deepseek-v4-flash and deepseek-v4-pro

- Current API generation: DeepSeek-V4 Preview

- Base URL for OpenAI-compatible requests: https://api.deepseek.com

- Base URL for Anthropic-compatible requests: https://api.deepseek.com/anthropic

- Context length: 1M tokens

- Maximum output: 384K tokens

- Thinking mode and non-thinking mode: supported on both current V4 API models

- JSON Output and Tool Calls: supported on both current V4 API models

- FIM Completion: supported in non-thinking mode only

- Chat Prefix Completion: supported as a beta feature

- Legacy aliases: deepseek-chat and deepseek-reasoner currently route to deepseek-v4-flash non-thinking and thinking modes

- Legacy alias retirement: DeepSeek says deepseek-chat and deepseek-reasoner will be retired after July 24, 2026, 15:59 UTC

This guide is updated to stay consistent with the current Chat-Deep.ai homepage, DeepSeek API guide, DeepSeek API pricing guide, DeepSeek Models hub, Python SDK guide, Node.js TypeScript guide, Thinking Mode guide, Tool Calls guide, JSON Output guide, and Token Usage guide.

Want to test coding prompts first? Try quick coding prompts in the Chat-Deep.ai browser chat. It is an independent DeepSeek-style browser experience. For official API keys, billing, production developer access, or account support, use the official DeepSeek platform.

Table of contents

- Critical update: V4 replaces the old V3.2 coding guidance

- Quick answer: is DeepSeek good for coding?

- Who this guide is for

- What “DeepSeek for coding” means today

- Which DeepSeek model should developers use for coding?

- Recommended API settings for coding

- DeepSeek-Coder vs current hosted DeepSeek for coding

- Practical coding tests to run before trusting the output

- Common coding tasks DeepSeek can help with

- Prompt patterns for better coding results

- Using DeepSeek FIM Completion for code infilling

- Using Chat Prefix Completion for code-shaped output

- Using DeepSeek in Claude Code and OpenCode

- JSON Output and Tool Calls for coding agents

- When to use thinking mode for coding

- API vs local DeepSeek for coding

- Cost and context tips for coding workflows

- Safety checklist for AI-generated code

- Best-practice workflows

- Next step: choose the right DeepSeek path

- Common mistakes to avoid

- FAQ

- Official sources and last verified

Critical update: V4 replaces the old V3.2 coding guidance

Older coding guides may still say to start with deepseek-chat and use deepseek-reasoner for hard coding tasks. That wording is now outdated for new API content. The current official DeepSeek API model IDs are deepseek-v4-flash and deepseek-v4-pro.

| Old wording | Current status | Recommended coding wording now |
|---|---|---|
| deepseek-chat as the default coding API model | Legacy compatibility alias | Use deepseek-v4-flash for most fast, economical coding help |
| deepseek-reasoner as the current reasoning model | Legacy compatibility alias | Use deepseek-v4-pro for harder coding, reasoning, architecture, and agentic workflows |
| DeepSeek‑V3.2 as the current hosted API family | Historical / previous hosted API generation | Use DeepSeek‑V4 Preview as the current API generation |
| 128K context as the current hosted API limit | Outdated for current V4 API docs | Use 1M context and 384K maximum output for current V4 API models |
| Old V3.2 pricing | Outdated | Use separate V4‑Flash and V4‑Pro pricing |

Keep the old names only when helping developers migrate existing code. Do not use them as the main model recommendation in new coding tutorials, prompt examples, pricing sections, or API snippets.
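During a migration, you can capture the alias routing described above in a small lookup table. The sketch below is a minimal example under stated assumptions. The function name migrate_model_name is hypothetical. The mapping only reflects what this guide states: deepseek-chat routes to deepseek-v4-flash in non-thinking mode, and deepseek-reasoner routes to deepseek-v4-flash in thinking mode. Re-check the official docs before relying on it.

```python
# Hypothetical migration helper. The mapping mirrors the alias routing this
# guide describes and may change; re-check the official docs before use.
LEGACY_ALIASES = {
    "deepseek-chat": ("deepseek-v4-flash", "disabled"),  # non-thinking mode
    "deepseek-reasoner": ("deepseek-v4-flash", "enabled"),  # thinking mode
}
CURRENT_MODELS = {"deepseek-v4-flash", "deepseek-v4-pro"}


def migrate_model_name(name: str) -> dict:
    """Translate a model name into current request fields (model + thinking)."""
    if name in LEGACY_ALIASES:
        model, thinking = LEGACY_ALIASES[name]
        return {"model": model, "extra_body": {"thinking": {"type": thinking}}}
    if name in CURRENT_MODELS:
        # Current IDs pass through; the caller chooses thinking mode explicitly.
        return {"model": name}
    raise ValueError(f"unknown or unsupported model name: {name!r}")


print(migrate_model_name("deepseek-reasoner"))
# {'model': 'deepseek-v4-flash', 'extra_body': {'thinking': {'type': 'enabled'}}}
```

The returned dict can be unpacked into client.chat.completions.create(messages=messages, **fields). This helper preserves current behavior literally. Moving hard tasks from deepseek-reasoner to deepseek-v4-pro is a separate, deliberate decision.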

Quick answer: is DeepSeek good for coding?

Yes, DeepSeek can be useful for many developer tasks: code explanation, bug diagnosis, refactoring, test generation, pull request review, shell commands, SQL, regex, documentation, API schema design, structured code-review reports, and coding-agent workflows.

DeepSeek performs best when you provide enough context: programming language, framework version, expected behavior, actual behavior, stack trace, relevant code snippet, failing input, expected output, constraints, and the tests you already ran. A vague prompt such as “fix this code” usually performs worse than a prompt that includes exact failure details and clear success criteria.

Use extra caution with security-critical code, authentication logic, payment flows, database migrations, production infrastructure, dependency upgrades, licensing-sensitive code, and compliance-sensitive projects. DeepSeek can suggest code, but it cannot guarantee that generated code is secure, correct, licensed appropriately, or production-ready. Always run tests, linting, type checks, security review, dependency review, and human review before merging AI-generated code.

Who this guide is for

Use this guide if you want to understand how to use DeepSeek for developer work: coding prompts, model selection, hosted API workflows, FIM completion, Chat Prefix Completion, JSON Output, Tool Calls, Claude Code compatibility, local model tradeoffs, token costs, and generated-code safety.

If you only want to test a coding prompt without managing API keys or GPUs, start with the Chat-Deep.ai browser chat. If you want production API access, start with the DeepSeek API guide. If you want local experiments, use the DeepSeek local install guide, the LM Studio guide, or the DeepSeek with vLLM guide.

What “DeepSeek for coding” means today

“DeepSeek for coding” can mean several related workflows. They are not the same thing, and choosing the wrong one can create unnecessary cost, complexity, or privacy risk.

- Independent browser chat coding help: useful for quick explanations, debugging ideas, learning, and one-off snippets. You can test prompts in the Chat-Deep.ai browser chat.

- Official DeepSeek Chat: useful when you want to use DeepSeek through the official web/app experience. Use official DeepSeek properties for official account access.

- Official DeepSeek API: useful for coding tools, bots, developer assistants, code review systems, CI workflows, repository analysis, and production integrations.

- Anthropic-compatible workflow: useful when a coding tool expects an Anthropic-style API. Claude Code is one example, and DeepSeek documents an integration path for it.

- FIM Completion Beta: useful for fill-in-the-middle code completion when you already have a prefix and suffix.

- Chat Prefix Completion Beta: useful when you want the model response to start with a fixed prefix, such as a Python fenced code block.

- JSON Output: useful for structured code review reports, test plans, lint summaries, migration plans, and CI annotations.

- Tool Calls: useful when a coding agent needs to request repository search, file reads, test runs, CI checks, package metadata, or safe internal tools.

- Local models: useful for offline or private coding experiments, especially with R1-Distill, DeepSeek-Coder, or DeepSeek-Coder-V2 checkpoints.

- Advanced self-hosted serving: useful for teams building private coding assistants with vLLM, SGLang, or similar serving stacks.

If you are building an API-based coding product, start with the current official hosted API and current V4 model IDs. If you are learning local AI or experimenting with private code workflows, local models can be useful, but their behavior may differ from the hosted API.

Which DeepSeek model should developers use for coding?

The best model depends on the coding task. Do not use the strongest or most expensive reasoning path for every small syntax fix. Match the model to the task, and re-check official docs before locking product documentation or pricing assumptions.

| Option | Best for | Current hosted API status | Recommended coding use |
|---|---|---|---|
| deepseek-v4-flash | Everyday coding help, refactoring, explanations, test generation, JSON reports, summaries, classification, quick debugging, and cost-sensitive coding assistants. | Current official V4 API model. | Start here for most coding workflows. |
| deepseek-v4-pro | Hard debugging, algorithmic reasoning, architecture analysis, multi-file codebase reasoning, difficult code review, agentic coding, and long-context synthesis. | Current official V4 API model. | Use when the task needs stronger reasoning or higher-quality planning. |
| deepseek-v4-flash with thinking enabled | Lower-cost reasoning, tool planning, and agentic workflows where cost matters. | Current V4 thinking-mode path. | Use when you need reasoning but want to stay closer to Flash cost and speed. |
| deepseek-v4-pro with thinking enabled | Hard code reasoning, complex architecture tradeoffs, coding agents, long analysis, and multi-step tool workflows. | Current V4 thinking-mode path. | Best hosted API choice for difficult coding workflows. |
| deepseek-chat | Existing code migration only. | Legacy alias currently routing to deepseek-v4-flash non-thinking mode. | Replace with deepseek-v4-flash in new examples. |
| deepseek-reasoner | Existing code migration only. | Legacy alias currently routing to deepseek-v4-flash thinking mode. | Replace with deepseek-v4-pro for hard coding tasks, or deepseek-v4-flash for lower-cost reasoning. |
| DeepSeek-R1 / R1-Distill local models | Local reasoning experiments, offline debugging help, and private coding tests. | Local/open-weight family, not the current hosted API default. | Good for local experiments; test carefully before production use. |
| DeepSeek-Coder / DeepSeek-Coder-V2 | Historical and open-weight coding model use cases, including local completion and infilling experiments. | Not the current hosted API default. | Use as a historical/open-weight coding family, not as the assumption for current hosted API examples. |

For a full model-family overview, use the DeepSeek models hub. For historical model context, use the DeepSeek-Coder historical overview, the DeepSeek R1 guide, and the DeepSeek V3.2 guide.
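You can encode the routing advice from the table in a small function, so that an application picks a model and thinking mode per task instead of hard-coding one path. This is a sketch under assumptions. The task labels and the names Route, choose_model, and request_fields are hypothetical. The mapping simply mirrors the table above.

```python
from typing import NamedTuple


class Route(NamedTuple):
    model: str
    thinking: str  # "enabled" or "disabled"


# Hypothetical task labels; the mapping mirrors this guide's model table.
ROUTES = {
    "format": Route("deepseek-v4-flash", "disabled"),
    "explain": Route("deepseek-v4-flash", "disabled"),
    "refactor": Route("deepseek-v4-flash", "disabled"),
    "tests": Route("deepseek-v4-flash", "disabled"),
    "json_report": Route("deepseek-v4-flash", "disabled"),
    "cheap_reasoning": Route("deepseek-v4-flash", "enabled"),
    "hard_debug": Route("deepseek-v4-pro", "enabled"),
    "architecture": Route("deepseek-v4-pro", "enabled"),
    "agent": Route("deepseek-v4-pro", "enabled"),
}


def choose_model(task: str) -> Route:
    # Unknown tasks fall back to the cheapest path; escalate deliberately.
    return ROUTES.get(task, Route("deepseek-v4-flash", "disabled"))


def request_fields(task: str) -> dict:
    """Model and thinking fields to unpack into chat.completions.create()."""
    route = choose_model(task)
    return {"model": route.model, "extra_body": {"thinking": {"type": route.thinking}}}
```

Usage would look like client.chat.completions.create(messages=messages, **request_fields("hard_debug")). Defaulting unknown tasks to Flash without thinking is a design choice that keeps accidental escalation to the more expensive path rare.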

Recommended API settings for coding

For consistent, near-deterministic coding help in non-thinking mode, use a lower temperature. DeepSeek’s official temperature guide recommends temperature=0.0 for coding and math. Use higher temperature only when brainstorming architecture options, naming ideas, or alternative implementations.

```python
import os

from openai import OpenAI

client = OpenAI(
    api_key=os.environ["DEEPSEEK_API_KEY"],
    base_url="https://api.deepseek.com",
)

response = client.chat.completions.create(
    model="deepseek-v4-flash",
    messages=[
        {
            "role": "user",
            "content": "Refactor this function without changing behavior: ...",
        }
    ],
    temperature=0.0,  # recommended for coding tasks in non-thinking mode
    max_tokens=1200,
    # Non-thinking mode: faster, cheaper output for routine coding help
    extra_body={"thinking": {"type": "disabled"}},
)

print(response.choices[0].message.content)
```

For thinking-mode coding requests, route the task deliberately. Use deepseek-v4-pro and reasoning_effort="high" or reasoning_effort="max" when the task is genuinely difficult. Keep reasoning_content separate from final content, and follow the DeepSeek Thinking Mode guide for tool-call loops.

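```python
# Reuses the client created in the previous example.
response = client.chat.completions.create(
    model="deepseek-v4-pro",
    messages=[
        {
            "role": "user",
            "content": "Analyze this multi-file bug and propose the smallest safe fix: ...",
        }
    ],
    reasoning_effort="high",
    max_tokens=4000,
    extra_body={"thinking": {"type": "enabled"}},
)

message = response.choices[0].message
# Keep the reasoning trace separate from the final answer shown to users.
reasoning = getattr(message, "reasoning_content", None)
print(message.content)
```

DeepSeek-Coder vs current hosted DeepSeek for coding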

DeepSeek-Coder and DeepSeek-Coder-V2 are historically important coding model families. They matter for open-weight coding history, local experiments, and some self-hosted workflows.

However, the current hosted API decision should not start from old deepseek-coder assumptions or old V3.2 aliases. For hosted coding workflows today, start with deepseek-v4-flash for everyday coding help and deepseek-v4-pro for harder reasoning, complex coding, and agentic coding workflows.

Treat DeepSeek-Coder, R1-Distill, and V3.2 checkpoints as local/open-weight/historical context unless you deliberately choose those checkpoints in a local runtime or a specific third-party integration.

Practical coding tests to run before trusting the output

Use small, repeatable tests before trusting an AI-assisted coding workflow. The goal is not to prove that a model is always right; the goal is to learn where it helps, where it fails, and how much verification your project needs.

1. Debugging a TypeScript error

Use: start with deepseek-v4-flash; switch to deepseek-v4-pro when the root cause is ambiguous or the bug spans multiple files.

```text
Find the root cause of this TypeScript error.
Framework: Next.js
Expected behavior: ...
Actual behavior: ...
Stack trace: ...
Relevant code: ...
Recent changes: ...
Return the smallest safe fix and list tests to run.
```

Verify: run the failing test, run type checks, confirm the fix does not change public API behavior, and check whether the model invented dependencies or files.
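Because this test is meant to be repeatable, a small template helper can keep the prompt identical between runs. It can also refuse to send the prompt when key context is missing. This is a minimal sketch. DebugContext and build_debug_prompt are hypothetical names, and the fields mirror the prompt above.

```python
from dataclasses import dataclass


@dataclass
class DebugContext:
    # Hypothetical container for the fields the debugging prompt asks for.
    framework: str
    expected: str
    actual: str
    stack_trace: str
    relevant_code: str
    recent_changes: str = ""


def build_debug_prompt(ctx: DebugContext) -> str:
    """Assemble the debugging prompt; raise if essential context is empty."""
    required = {
        "expected behavior": ctx.expected,
        "actual behavior": ctx.actual,
        "stack trace": ctx.stack_trace,
        "relevant code": ctx.relevant_code,
    }
    missing = [name for name, value in required.items() if not value.strip()]
    if missing:
        raise ValueError("missing context: " + ", ".join(missing))
    return "\n".join([
        "Find the root cause of this TypeScript error.",
        f"Framework: {ctx.framework}",
        f"Expected behavior: {ctx.expected}",
        f"Actual behavior: {ctx.actual}",
        f"Stack trace: {ctx.stack_trace}",
        f"Relevant code: {ctx.relevant_code}",
        f"Recent changes: {ctx.recent_changes or 'none reported'}",
        "Return the smallest safe fix and list tests to run.",
    ])
```

Failing fast on empty fields enforces the advice above: a prompt with exact failure details usually performs better than a vague one.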

2. Refactoring a Python function

Use: deepseek-v4-flash with a behavior-preserving prompt and low temperature.

```text
Refactor this Python function for readability.
Do not change behavior.
Do not add dependencies.
Keep public function names and return types unchanged.
After the refactor, list unit tests that should pass before and after.
```

Verify: run unit tests, compare before/after outputs on edge cases, and review exception handling.
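You can partly automate the before/after comparison. The sketch below runs the original and refactored functions on the same inputs and reports every case where the return value or the raised exception type differs. The helper names and the example functions are hypothetical. This check complements unit tests rather than replacing them.

```python
from typing import Any, Callable, Iterable, List, Tuple


def outcome(fn: Callable[..., Any], args: tuple) -> Tuple[str, Any]:
    """Capture either the return value or the exception type of one call."""
    try:
        return ("ok", fn(*args))
    except Exception as exc:  # compare exception types, not messages
        return ("raised", type(exc).__name__)


def behavior_diffs(old_fn: Callable, new_fn: Callable, cases: Iterable[tuple]) -> List[tuple]:
    """Return every input where the refactored function behaves differently."""
    diffs = []
    for args in cases:
        before, after = outcome(old_fn, args), outcome(new_fn, args)
        if before != after:
            diffs.append((args, before, after))
    return diffs


# Hypothetical example: an original function and a model-suggested refactor
# that silently drops the empty-input guard.
def first_word_old(text):
    parts = text.split()
    if not parts:
        return ""
    return parts[0]


def first_word_new(text):
    return text.split()[0]


cases = [("hello world",), ("  spaced  out ",), ("",)]
print(behavior_diffs(first_word_old, first_word_new, cases))
# [(('',), ('ok', ''), ('raised', 'IndexError'))]
```

The refactor looks equivalent on typical input and only diverges on the empty-string edge case. That is exactly the kind of difference this check is meant to surface.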

3. Generating Jest or pytest tests

Use: deepseek-v4-flash for routine tests; use deepseek-v4-pro when expected behavior is complex or hidden across files.

```text
Generate tests for this function.
Test framework: Jest
Include happy path, edge cases, invalid input, and regression cases.
Do not assume behavior not visible in the code.
If behavior is unclear, ask clarifying questions before writing tests.
```

Verify: generated tests should fail for the right reason before the fix and pass after the fix. Remove tests that merely assert implementation details or encode wrong assumptions.

Common coding tasks DeepSeek can help with

Explain unfamiliar code

DeepSeek can summarize what a function, class, SQL query, shell script, or configuration file does. A good prompt includes the language, framework, and the level of detail you want.

```text
Explain this Python function for a mid-level backend developer.
Focus on inputs, outputs, side effects, edge cases, and hidden assumptions.
```

Debug a stack trace

For debugging, include the stack trace, relevant code, expected behavior, actual behavior, and recent changes. Use deepseek-v4-pro when the failure involves multi-step reasoning or ambiguous causes.

```text
Find the likely root cause of this error.
Language: TypeScript
Framework: Next.js
Expected behavior: ...
Actual behavior: ...
Stack trace: ...
Relevant code: ...
Return the smallest safe fix.
```

Refactor a function

DeepSeek is useful for refactoring when you clearly state that behavior must not change. Ask for a short explanation plus tests.

```text
Refactor this function for readability without changing behavior.
Preserve public API names.
List any assumptions.
Then suggest unit tests that should pass before and after.
```

Generate tests

Give DeepSeek the function, framework, test runner, and edge cases. Generated tests should still be reviewed because an AI model can misunderstand intended behavior.

```text
Generate pytest tests for this function.
Cover normal cases, edge cases, invalid inputs, and regression cases.
Do not mock behavior unless necessary.
```

Review a pull request

DeepSeek can help with PR review summaries, risk lists, and suggested tests. For a code review bot, use concise, actionable output rather than raw reasoning traces.

```text
Review this diff as a senior engineer.
Return:
1. Bugs or correctness risks
2. Security concerns
3. Performance concerns
4. Missing tests
5. Suggested changes
```

Convert code between languages

DeepSeek can translate logic from one language to another, but you should specify library choices, language versions, and behavior constraints.

```text
Convert this Python function to TypeScript.
Target Node.js 20.
Avoid external dependencies.
Keep behavior identical and include tests.
```

Write SQL, regex, and shell scripts

These are strong everyday use cases, but they can be risky. Ask DeepSeek to explain the query or command and include safe alternatives before running it.

```text
Write a PostgreSQL query for this report.
Include indexes that may help.
Explain the query plan risks.
Do not modify data.
```

Document code

DeepSeek can generate docstrings, README sections, API docs, and onboarding notes. Provide the intended audience and keep documentation close to verified code behavior.

Design API schemas

DeepSeek can propose request/response shapes, OpenAPI snippets, JSON schemas, and validation rules. Use JSON Output when you need structured results.

Generate structured lint or review reports

For automated coding tools, ask DeepSeek to return structured JSON with severity, file, line, issue, and suggested fix. Validate the JSON before using it in CI or UI.
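Before a review report reaches CI or a UI, it helps to check more than whether it parses. This sketch validates the fields listed above (severity, file, line, issue, suggested_fix) using only the standard library. The function name and the exact rules are assumptions that mirror the JSON schema used in this guide, for example treating line as an integer or null.

```python
import json

ALLOWED_SEVERITIES = {"critical", "major", "minor"}
REQUIRED_ISSUE_KEYS = {"severity", "file", "line", "issue", "suggested_fix"}


def validate_review_report(raw: str) -> dict:
    """Parse a model-generated review report and check its shape.

    Raises ValueError on any problem so the caller can retry or fall back.
    """
    try:
        report = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"not valid JSON: {exc}") from exc
    if not isinstance(report, dict) or not isinstance(report.get("issues"), list):
        raise ValueError("expected an object with an 'issues' list")
    for i, issue in enumerate(report["issues"]):
        if not isinstance(issue, dict):
            raise ValueError(f"issue {i} is not an object")
        missing = REQUIRED_ISSUE_KEYS - issue.keys()
        if missing:
            raise ValueError(f"issue {i} is missing keys: {sorted(missing)}")
        if issue["severity"] not in ALLOWED_SEVERITIES:
            raise ValueError(f"issue {i} has unknown severity {issue['severity']!r}")
        line = issue["line"]
        # bool is a subclass of int in Python, so reject it explicitly.
        if line is not None and (not isinstance(line, int) or isinstance(line, bool)):
            raise ValueError(f"issue {i} has a non-integer line: {line!r}")
    return report
```

Raising a single exception type keeps the calling code simple: one except ValueError branch can trigger a retry or a safe fallback.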

Prompt patterns for better coding results

DeepSeek performs better when you provide constraints. Use these prompt patterns as starting points.

Explain this code

```text
Explain this code in plain English.
Audience: junior developer.
Include: purpose, inputs, outputs, side effects, edge cases, and possible bugs.
```

Find the bug

```text
Find the bug in this code.
Expected behavior: ...
Actual behavior: ...
Failing input: ...
Error message: ...
Relevant code: ...
Return the smallest safe fix and explain why it works.
```

Refactor without changing behavior

```text
Refactor this code without changing behavior.
Constraints:
- Keep public function names
- Do not add dependencies
- Preserve error handling
- Include before/after test cases
```

Generate tests

```text
Generate tests for this function.
Test framework: Jest
Include happy path, edge cases, invalid input, and regression cases.
Do not assume behavior not visible in the code.
```

Review this diff

```text
Review this diff as a senior reviewer.
Be concise.
Return only actionable issues.
Group by severity: critical, major, minor.
```

Return JSON only

```text
Return valid json only.
Schema:
{
  "summary": "string",
  "issues": [
    {
      "severity": "critical|major|minor",
      "file": "string",
      "line": "number|null",
      "issue": "string",
      "suggested_fix": "string"
    }
  ],
  "tests_to_add": ["string"]
}
```

Compare two implementation options

```text
Compare these two implementations.
Focus on correctness, performance, maintainability, security, and testability.
End with a recommendation and risks.
```

Write a migration plan

```text
Create a safe migration plan.
System: ...
Current behavior: ...
Target behavior: ...
Constraints: no downtime, rollback required, database migration involved.
Return phases, tests, monitoring, and rollback steps.
```

Act as a senior reviewer

```text
Act as a senior backend reviewer.
Do not rewrite the whole file unless necessary.
Point out only issues that could affect correctness, security, performance, or maintainability.
```

List assumptions and risks

```text
Before suggesting code, list your assumptions.
Then list risks.
Then provide the minimal change needed.
```

Never paste secrets, private keys, API tokens, database credentials, private certificates, proprietary code, or customer data unless your organization’s data policy explicitly allows it. If you use the hosted API, prompts and outputs are sent to the provider. If you use local models, verify that the entire runtime, UI, plugins, logs, telemetry, and network setup are actually local or private.
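A lightweight pre-send check can catch the most obvious secrets before a prompt leaves your machine. This sketch uses a few illustrative regular expressions. The pattern list and the function names are assumptions, and this is not a complete scanner: a dedicated secret-scanning tool covers far more credential formats.

```python
import re

# Illustrative patterns only; a dedicated scanner covers many more formats.
SECRET_PATTERNS = {
    "private key block": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
    "AWS access key ID": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "credential assignment": re.compile(
        r"(?i)(api[_-]?key|secret|password|token)\s*[:=]\s*['\"][^'\"]{8,}['\"]"
    ),
}


def find_secrets(text: str) -> list:
    """Return the names of the patterns that match text (empty if none)."""
    return [name for name, pattern in SECRET_PATTERNS.items() if pattern.search(text)]


def check_prompt(text: str) -> str:
    """Raise instead of returning a prompt that looks like it contains secrets."""
    found = find_secrets(text)
    if found:
        raise ValueError("possible secrets in prompt: " + ", ".join(found))
    return text
```

A check like this belongs in the code path that builds the request, so that it runs on every prompt rather than depending on each developer remembering the policy.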

Using DeepSeek FIM Completion for code infilling

FIM means Fill-in-the-Middle. It is useful when you already have the beginning and end of a code block and you want the model to fill the missing middle. In coding tools, this is closer to code completion than a normal chat prompt.

DeepSeek’s official FIM Completion Beta uses the completions endpoint and requires base_url="https://api.deepseek.com/beta". The official FIM docs say the maximum tokens for FIM Completion are currently 4K, so use it for focused code infilling rather than very large file generation. Current V4 model documentation lists FIM as available in non-thinking mode only.

```python
import os

from openai import OpenAI

# FIM Completion is a beta feature, so it uses the /beta base URL.
client = OpenAI(
    api_key=os.environ["DEEPSEEK_API_KEY"],
    base_url="https://api.deepseek.com/beta",
)

# FIM uses the completions endpoint (not chat): the model generates the code
# that belongs between `prompt` (the prefix) and `suffix`.
response = client.completions.create(
    model="deepseek-v4-pro",
    prompt="def normalize_email(email):\n    ",
    suffix="\n    return email",
    max_tokens=128,
)

print(response.choices[0].text)
```

Use FIM when you need the model to complete code inside an existing file. Use normal chat when you need explanation, review, reasoning, or multi-turn collaboration. For full API request details, see the DeepSeek Chat Completions guide and the official FIM documentation linked at the end of this article.
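In an editor integration, the prefix and suffix usually come from splitting the current file at the cursor. This sketch shows one way to do that. The "<CURSOR>" marker and the split_for_fim helper are hypothetical. Trimming context to a few lines on each side is an optional assumption, meant to keep requests small within the FIM token limit.

```python
from typing import Optional, Tuple

CURSOR = "<CURSOR>"  # hypothetical marker inserted by your editor integration


def split_for_fim(
    source: str, marker: str = CURSOR, context_lines: Optional[int] = None
) -> Tuple[str, str]:
    """Split source at the cursor marker into (prefix, suffix) for FIM.

    If context_lines is set, keep only that many lines on each side of the
    cursor so the request stays small and focused.
    """
    if source.count(marker) != 1:
        raise ValueError("expected exactly one cursor marker")
    if context_lines is not None and context_lines < 1:
        raise ValueError("context_lines must be at least 1")
    prefix, suffix = source.split(marker)
    if context_lines is not None:
        prefix = "\n".join(prefix.split("\n")[-context_lines:])
        suffix = "\n".join(suffix.split("\n")[:context_lines])
    return prefix, suffix


code = "import re\n\ndef normalize_email(email):\n    <CURSOR>\n    return email\n"
prefix, suffix = split_for_fim(code, context_lines=2)
print(repr(prefix))  # 'def normalize_email(email):\n    '
print(repr(suffix))  # '\n    return email'
```

The two strings map onto the prompt and suffix arguments of client.completions.create in the FIM example above.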

Using Chat Prefix Completion for code-shaped output

Chat Prefix Completion Beta is useful when you want the assistant response to start with a specific prefix, such as a Python code block or a JSON object. This can reduce extra prose when you want code-only output.

DeepSeek’s official Chat Prefix Completion docs say this beta feature requires base_url="https://api.deepseek.com/beta", and the last message in the messages list must have role assistant with prefix=True.

```python
import os

from openai import OpenAI

# Chat Prefix Completion is a beta feature, so it uses the /beta base URL.
client = OpenAI(
    api_key=os.environ["DEEPSEEK_API_KEY"],
    base_url="https://api.deepseek.com/beta",
)

messages = [
    {"role": "user", "content": "Write a small Python function that validates an email."},
    # The last message is the assistant prefix that the reply must continue.
    {"role": "assistant", "content": "```python\n", "prefix": True},
]

response = client.chat.completions.create(
    model="deepseek-v4-flash",
    messages=messages,
    stop=["```"],  # stop at the closing fence so only code is returned
    extra_body={"thinking": {"type": "disabled"}},
)

print(response.choices[0].message.content)
```

Even when you force code-shaped output, still run tests and review the code. Prefix completion controls the output shape; it does not guarantee correctness.

Using DeepSeek in Claude Code and OpenCode

DeepSeek’s official documentation includes coding-agent integration paths, including Claude Code and OpenCode. This does not mean DeepSeek owns Claude Code, OpenCode, or Anthropic. It means DeepSeek exposes compatibility paths and documented setup instructions that some coding tools can use.

Claude Code setup

A current official Claude Code setup uses the Anthropic-compatible DeepSeek API endpoint and V4 model names:

```bash
export ANTHROPIC_BASE_URL=https://api.deepseek.com/anthropic
export ANTHROPIC_AUTH_TOKEN=${DEEPSEEK_API_KEY}
# Quote this value: unquoted [1m] is a glob pattern in bash and zsh.
export ANTHROPIC_MODEL="deepseek-v4-pro[1m]"
export ANTHROPIC_DEFAULT_OPUS_MODEL=deepseek-v4-pro
export ANTHROPIC_DEFAULT_SONNET_MODEL=deepseek-v4-pro
export ANTHROPIC_DEFAULT_HAIKU_MODEL=deepseek-v4-flash
export CLAUDE_CODE_SUBAGENT_MODEL=deepseek-v4-pro
export CLAUDE_CODE_DISABLE_NONESSENTIAL_TRAFFIC=1
export CLAUDE_CODE_DISABLE_NONSTREAMING_FALLBACK=1
export CLAUDE_CODE_EFFORT_LEVEL=max
```

When using Anthropic-compatible requests, use DeepSeek model names, not Anthropic model names. DeepSeek’s Anthropic API documentation also notes that unsupported model names may be mapped automatically to deepseek-v4-flash, so verify which model is actually used in your workflow.

OpenCode setup

DeepSeek’s official coding-agent guide also documents an OpenCode path. It recommends using a recent OpenCode version and selecting the DeepSeek-V4-Pro model during provider configuration. For agentic coding, V4-Pro is usually the better hosted API choice because the task often requires tool planning, codebase reasoning, and multi-step debugging.

JSON Output and Tool Calls for coding agents

JSON Output is useful for structured developer tools: code review reports, lint summaries, TODO extraction, vulnerability triage, migration plans, test suggestions, and CI annotations. DeepSeek’s JSON Output guide says to set response_format={"type":"json_object"}, include the word “json” in the prompt, provide an example JSON shape, and set max_tokens reasonably to reduce the risk of truncated JSON.

```text
Return valid json only.
Analyze this diff and return:
{
  "summary": "string",
  "issues": [
    {
      "severity": "critical|major|minor",
      "file": "string",
      "line": "number|null",
      "issue": "string",
      "suggested_fix": "string"
    }
  ],
  "tests_to_add": ["string"]
}
```

DeepSeek’s JSON Output documentation also notes that the API may occasionally return empty content when using JSON Output. For production coding tools, add retry logic, validate parsed JSON, and show a safe fallback instead of assuming every response will be parseable.
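The retry-and-fallback advice can be wrapped around any function that performs the request. In this sketch, call_model is a hypothetical zero-argument callable that returns message.content, so the wrapper can be tested without network access. The default fallback shape is an assumption that follows the review schema above.

```python
import json
from typing import Callable, Optional


def request_json_report(
    call_model: Callable[[], Optional[str]],
    max_attempts: int = 3,
    fallback: Optional[dict] = None,
) -> dict:
    """Call the model until it returns a parseable JSON object, else fall back.

    With an OpenAI SDK client configured for DeepSeek, call_model could be:
        lambda: client.chat.completions.create(
            model="deepseek-v4-flash",
            messages=messages,
            response_format={"type": "json_object"},
            max_tokens=2000,
        ).choices[0].message.content
    """
    for _ in range(max_attempts):
        content = call_model()
        if not content or not content.strip():
            continue  # empty content: retry
        try:
            parsed = json.loads(content)
        except json.JSONDecodeError:
            continue  # truncated or malformed JSON: retry
        if isinstance(parsed, dict):
            return parsed
    # Safe fallback so a CI job or UI degrades instead of crashing.
    if fallback is not None:
        return fallback
    return {"summary": "Review unavailable", "issues": [], "tests_to_add": []}
```

In production you would typically add a short backoff between attempts. You would also pass the parsed result through a schema check before acting on it.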

Tool Calls are useful when a coding agent needs real repository context. For example, the model can request a tool call to search files, read a file, run a test command, inspect package metadata, or check CI status. The important caveat is that the model does not execute tools by itself. Your application must execute the requested function, validate inputs, control permissions, and return the result to the model.

Strict tool mode is beta. DeepSeek’s Tool Calls documentation says strict mode requires base_url="https://api.deepseek.com/beta" and strict:true inside function definitions. Use strict mode when you need tighter schema adherence, but still validate all arguments before executing tools.

When to use thinking mode for coding

Use thinking mode when the coding task truly benefits from deeper reasoning. Good examples include:

- Hard debugging where the stack trace is ambiguous.

- Algorithmic reasoning and edge-case analysis.

- Multi-file codebase reasoning.

- Architecture tradeoff review.

- Test failure triage.

- Complex refactors with hidden dependencies.

- Comparing multiple implementation strategies.

- Agentic coding workflows that need tool planning and verification.

Do not use thinking mode for every small coding task. It can add latency and output tokens, and simple tasks such as formatting, short snippets, small syntax fixes, or cheap autocomplete usually do not need deep reasoning.

In the current official DeepSeek API, thinking mode is controlled with the thinking object. With the OpenAI SDK pattern, pass the thinking parameter through extra_body:

```python
# Reuses a DeepSeek client and a `messages` list defined earlier.
response = client.chat.completions.create(
    model="deepseek-v4-pro",
    messages=messages,
    reasoning_effort="high",
    extra_body={"thinking": {"type": "enabled"}},
)
```

In thinking mode, the response message returns the model's reasoning in reasoning_content and the final answer in content. For normal user-facing coding products, the final output should usually be concise, actionable, and verifiable rather than exposing raw reasoning traces. In thinking-mode tool loops, follow the official DeepSeek tool-calling pattern because the API may require the current turn’s reasoning_content to continue the tool loop correctly.

For a deeper implementation walkthrough, use the DeepSeek Thinking Mode guide.
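One way to manage history in a thinking-mode tool loop is sketched below. It keeps reasoning_content on assistant messages from the current turn, meaning everything after the latest user message. It drops reasoning_content from earlier turns. This is an assumption about how to apply the official guidance, not a restatement of it: confirm the exact rules in the DeepSeek Thinking Mode guide. The helper name is hypothetical, and it operates on plain dicts, so SDK message objects need to be converted first.

```python
def prepare_history(messages: list) -> list:
    """Drop reasoning_content from assistant messages in earlier turns.

    Assumption: reasoning_content from the current turn (assistant messages
    after the latest user message, e.g. inside a tool-call loop) is passed
    back, while reasoning from earlier turns is omitted.
    """
    last_user = max(
        (i for i, m in enumerate(messages) if m.get("role") == "user"), default=-1
    )
    prepared = []
    for i, message in enumerate(messages):
        message = dict(message)  # copy so the caller's history is untouched
        if i < last_user and message.get("role") == "assistant":
            message.pop("reasoning_content", None)
        prepared.append(message)
    return prepared
```

Dropping old reasoning also keeps long coding sessions from resending reasoning tokens that no longer affect the next answer.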

API vs local DeepSeek for coding

The official API is usually easier for production coding tools because it gives you hosted behavior, current V4 model IDs, OpenAI-compatible integration, Anthropic-compatible integration, JSON Output, Tool Calls, thinking mode, and beta features such as FIM or Chat Prefix Completion.

Local models can be useful for offline or private coding experiments, especially when you do not want code snippets to leave your own machine or infrastructure. However, local privacy only holds if the runtime, UI, plugins, logging, telemetry, and network setup are also local or private.

For local coding, R1-Distill, DeepSeek-Coder, and DeepSeek-Coder-V2 may be useful depending on your runtime and hardware. Full-size open-weight models are not realistic targets for a typical beginner's laptop. If you need an agent workflow with tools, do not assume every local DeepSeek variant supports the same features as the hosted API.

For the full decision framework, read DeepSeek Local vs API. For beginner local setup, use DeepSeek local install with Ollama or DeepSeek in LM Studio. For advanced serving, use DeepSeek with vLLM.

Cost and context tips for coding workflows

Coding tasks can consume many tokens because developers often paste long files, stack traces, diffs, dependency files, schemas, logs, test output, and repository context. The easiest way to control cost and latency is to send only the relevant context.

- Send selected snippets instead of entire repositories.

- Include file paths and function names so the model understands context.

- Use retrieval or repository search before calling the model.

- Summarize large logs before asking for root-cause analysis.

- Ask for concise patches rather than full file rewrites.

- Use deepseek-v4-flash for routine tasks and reserve deepseek-v4-pro for harder analysis.

- Use non-thinking mode for simple formatting, summaries, and JSON reports.

- Use thinking mode only when the task benefits from multi-step reasoning.

- Monitor cache-hit and cache-miss input tokens when using repeated prompts or repeated repository context.

Current official V4 pricing is listed per 1M tokens and differs by model:

| Model | Input cache hit | Input cache miss | Output | Best coding use |
|---|---|---|---|---|
| deepseek-v4-flash | $0.028 / 1M tokens | $0.14 / 1M tokens | $0.28 / 1M tokens | Routine coding help, extraction, summaries, JSON reports, quick debugging, and high-volume tools. |
| deepseek-v4-pro | $0.145 / 1M tokens | $1.74 / 1M tokens | $3.48 / 1M tokens | Hard debugging, codebase reasoning, complex coding, long-context review, and coding agents. |

DeepSeek context caching can reduce cost when repeated prefixes are reused, such as the same system prompt, repository instructions, or long context prefix. Only repeated prefix portions can trigger cache hits, so do not assume every coding request gets the cheaper cache-hit rate.

For coding tools, monitor input tokens, output tokens, cache-hit tokens, cache-miss tokens, reasoning tokens when available, request latency, failed requests, tool-call retries, JSON parse errors, and user-visible error rates. If you are investigating availability, use the DeepSeek status checker.
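To turn token monitoring into a cost estimate, multiply each token bucket by its per-1M rate. This sketch copies the rates from the pricing table above, so re-check them before use. It assumes DeepSeek-style usage fields: prompt_cache_hit_tokens, prompt_cache_miss_tokens, and completion_tokens. It also assumes that completion_tokens already includes any reasoning tokens. The usage numbers in the example are hypothetical.

```python
# USD per 1M tokens, copied from the pricing table above; re-check before use.
PRICES = {
    "deepseek-v4-flash": {"hit": 0.028, "miss": 0.14, "output": 0.28},
    "deepseek-v4-pro": {"hit": 0.145, "miss": 1.74, "output": 3.48},
}


def estimate_cost(model: str, usage: dict) -> float:
    """Estimate one request's cost in USD from a usage dict."""
    p = PRICES[model]
    return (
        usage.get("prompt_cache_hit_tokens", 0) * p["hit"]
        + usage.get("prompt_cache_miss_tokens", 0) * p["miss"]
        + usage.get("completion_tokens", 0) * p["output"]
    ) / 1_000_000


# Hypothetical request: 150K cached prefix + 50K new input + 8K output tokens.
usage = {
    "prompt_cache_hit_tokens": 150_000,
    "prompt_cache_miss_tokens": 50_000,
    "completion_tokens": 8_000,
}
for model in PRICES:
    print(model, round(estimate_cost(model, usage), 4))
```

With these numbers, the Flash request costs about $0.0134 and the same request on Pro about $0.1366. This is a quick way to see why routing routine work to Flash, and keeping repeated prefixes stable so they hit the cache, matters at volume.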

Safety checklist for AI-generated code

Before using AI-generated code in production, apply this checklist:

- Run unit tests, integration tests, and regression tests.

- Run linting, formatting, and type checks.

- Review security risks, especially injection, auth, access control, deserialization, file handling, dependency risks, and supply-chain issues.

- Verify package names, versions, and licenses.

- Do not paste secrets, tokens, keys, private certificates, or production credentials into prompts.

- Validate JSON output before parsing it into automation.

- Validate tool-call arguments before executing repository, shell, database, or network tools.

- Never let a coding agent run shell commands, database writes, payment actions, or deletion actions without strict controls.

- Test database migrations on staging before production.

- Use human review for production changes.

- Log model outputs carefully and avoid storing sensitive code or customer data unnecessarily.
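The "do not paste secrets" item can be partly automated with a redaction pass before any text reaches a prompt. The following Python sketch uses a few illustrative regular expressions for PEM private keys, "sk-" style keys, and NAME = value assignments whose names mention key, secret, token, or password; the patterns and the 16-character minimum are assumptions, deliberately aggressive, and no substitute for a dedicated secret scanner.

```python
import re

# Illustrative patterns only. They over-redact on purpose (a variable named
# "tokenizer" is also redacted) and will miss many real credential formats.
_PEM_BLOCK = re.compile(
    r"-----BEGIN [A-Z ]*PRIVATE KEY-----.*?-----END [A-Z ]*PRIVATE KEY-----",
    re.DOTALL,
)
# NAME = value or NAME: value, where NAME mentions key/secret/token/password.
_ASSIGNMENT = re.compile(
    r"(?i)\b(\w*(?:key|secret|token|password)\w*)(\s*[:=]\s*)(['\"]?)[^\s'\"]+\3"
)
# Bare "sk-..." style API keys (hypothetical minimum length of 16 characters).
_SK_KEY = re.compile(r"\bsk-[A-Za-z0-9_-]{16,}")


def redact_secrets(text: str) -> str:
    """Replace likely credentials with placeholders before building a prompt."""
    text = _PEM_BLOCK.sub("[REDACTED PRIVATE KEY]", text)
    # Assignments run before the bare-key pass so "API_KEY=sk-..." becomes
    # "API_KEY=[REDACTED]" instead of a half-replaced string.
    text = _ASSIGNMENT.sub(r"\1\2[REDACTED]", text)
    text = _SK_KEY.sub("[REDACTED API KEY]", text)
    return text
```

For example, `redact_secrets('DEEPSEEK_API_KEY=sk-abcdefghijklmnopqrstuv')` keeps the variable name but replaces its value with `[REDACTED]`, so the model still sees the structure of the config without the credential.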

Best-practice workflows

| Use case | Recommended DeepSeek path | Suggested model / mode | Why | Key caveat |
|---|---|---|---|---|
| Beginner learning a new language | Browser chat or API | deepseek-v4-flash | Good for explanations, examples, and learning prompts. | Ask for version-specific examples and verify with official docs. |
| Debugging a stack trace | API or browser chat | deepseek-v4-flash first; deepseek-v4-pro if difficult | Simple bugs often do not need stronger reasoning; complex failures may benefit from it. | Include expected behavior, actual behavior, and full error context. |
| Refactoring a legacy function | API or browser chat | deepseek-v4-flash | Good for readability and maintainability suggestions. | Require tests and behavior-preserving constraints. |
| Generating tests | API or browser chat | deepseek-v4-flash | Good for covering normal, edge, and regression cases. | Generated tests can encode wrong assumptions. |
| Reviewing a pull request | API | deepseek-v4-flash with JSON Output; deepseek-v4-pro for hard reviews | Useful for severity, file, line, issue, and suggested fix fields. | Validate output and avoid noisy automated comments. |
| Building a coding agent | API with Tool Calls | deepseek-v4-pro for hard agent tasks; deepseek-v4-flash for simple tools | Tool Calls can connect the model to search, file reading, tests, and CI. | The application executes tools, not the model. |
| Using Claude Code with DeepSeek | Anthropic-compatible API | deepseek-v4-pro for main coding-agent model | Official docs provide a Claude Code setup path. | Unsupported model names may map to deepseek-v4-flash; verify actual model behavior. |
| Filling a missing code block | FIM Completion Beta | Current V4 model, non-thinking mode only | Designed for prefix/suffix code infilling. | Requires beta base URL and has a 4K FIM max-token limit. |
| Private/offline code experiments | Local runtime | R1-Distill, DeepSeek-Coder, or Coder-V2 depending on runtime | Can keep code within your local/private setup if configured correctly. | Local behavior may not match the hosted API. |
| Production SaaS coding assistant | Official API first, with optional local evaluation later | deepseek-v4-flash for most tasks; deepseek-v4-pro for hard reasoning | Hosted API reduces infrastructure work and supports documented API features. | Monitor cost, latency, failures, privacy requirements, and generated-code safety. |
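The "flash first, pro if difficult" pattern in the table can be encoded as a small routing function. This Python sketch is hypothetical: the task labels, the three-file threshold, and the escalate-after-failure rule are illustrative choices, not DeepSeek recommendations, so tune them against your own evaluation data.

```python
from dataclasses import dataclass

# Hypothetical task labels that go straight to the stronger model.
HARD_TASKS = {
    "multi_file_debugging",
    "architecture_tradeoff",
    "coding_agent",
    "long_context_review",
}


@dataclass
class CodingTask:
    kind: str
    files_touched: int = 1
    previous_attempt_failed: bool = False


def pick_model(task: CodingTask) -> str:
    """Default to deepseek-v4-flash; escalate to deepseek-v4-pro for hard work."""
    if task.kind in HARD_TASKS:
        return "deepseek-v4-pro"
    # Illustrative escalation rules: a flash attempt already failed,
    # or the change spans more than three files.
    if task.previous_attempt_failed or task.files_touched > 3:
        return "deepseek-v4-pro"
    return "deepseek-v4-flash"
```

Keeping the routing rule in one function makes it easy to log which model handled each request and to compare cost and success rates per route later.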

Next step: choose the right DeepSeek path

| If you want to… | Use this page |
|---|---|
| Try a coding prompt quickly | Chat-Deep.ai browser chat |
| Build a coding tool with API access | DeepSeek API guide |
| Use Python with DeepSeek | DeepSeek Python SDK guide |
| Use Node.js or TypeScript with DeepSeek | DeepSeek Node.js TypeScript guide |
| Use OpenAI SDK patterns with DeepSeek | OpenAI SDK with DeepSeek |
| Compare current DeepSeek models | DeepSeek models hub |
| Check official API pricing | DeepSeek API pricing |
| Track real token usage | DeepSeek Token Usage guide |
| Use JSON Output for code reports | DeepSeek JSON Output guide |
| Use tools and function calling | DeepSeek Tool Calls guide |
| Handle thinking-mode behavior | DeepSeek Thinking Mode guide |
| Check outages or API availability | DeepSeek status checker |
| Run local experiments | DeepSeek local install guide |
| Use a local desktop UI | DeepSeek in LM Studio |
| Serve models with vLLM | DeepSeek with vLLM guide |

Common mistakes to avoid

- Treating deepseek-chat and deepseek-reasoner as the current primary model IDs. They are legacy compatibility aliases. Use deepseek-v4-flash and deepseek-v4-pro in new code.

- Treating DeepSeek-Coder as the current hosted API model. It is historically important, but current hosted API usage should follow V4 model IDs.

- Assuming deepseek-reasoner equals the original R1. It is now a legacy compatibility alias that currently routes to deepseek-v4-flash thinking mode, not the current primary reasoning model ID.

- Using thinking mode for every small task. It can increase latency and token usage.

- Pasting whole repositories. Send relevant snippets, diffs, logs, and tests instead.

- Pasting secrets or private keys. Redact credentials and follow your data policy.

- Trusting generated code without tests. Always test and review.

- Ignoring truncated or empty JSON responses. Set a reasonable max_tokens value, add retry logic, and validate parsed JSON.

- Assuming local output matches hosted API output. Checkpoint, runtime, prompt template, quantization, and sampling can all change behavior.

- Assuming Tool Calls execute automatically. Your app must execute tools and validate arguments.

- Copying outdated API alias mappings. Re-check official docs before publishing product docs or code examples.

- Reusing outdated screenshots. Remove screenshots that still show V3.2, a 128K context limit, old pricing, or deepseek-chat or deepseek-reasoner as the current model.
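The JSON truncation mistake above can be handled with a retry wrapper that distinguishes "cut off at max_tokens" from "empty" and "malformed". In this Python sketch, call_model is a hypothetical function you supply around your API client that takes a max_tokens budget and returns the message content plus finish_reason; the doubling strategy and the 8,000-token cap are assumptions to adjust for your workload.

```python
import json
import time
from typing import Callable


def call_with_json_retry(
    call_model: Callable[[int], tuple[str, str]],
    max_tokens: int = 2000,
    attempts: int = 3,
    backoff_s: float = 1.0,
    max_tokens_cap: int = 8000,  # hypothetical ceiling for the retry budget
) -> dict:
    """Retry when a JSON Output reply is truncated, empty, or unparseable.

    call_model(max_tokens) -> (content, finish_reason) is a hypothetical
    wrapper around your own API client.
    """
    last_error = "no attempts made"
    for attempt in range(attempts):
        content, finish_reason = call_model(max_tokens)
        if finish_reason == "length":
            # The reply hit the token budget, so the JSON is probably cut off.
            last_error = "output truncated at max_tokens"
            max_tokens = min(max_tokens * 2, max_tokens_cap)
        elif not content or not content.strip():
            last_error = "empty content"
        else:
            try:
                parsed = json.loads(content)
            except json.JSONDecodeError as exc:
                last_error = f"invalid JSON: {exc}"
            else:
                if isinstance(parsed, dict):
                    return parsed
                last_error = "top-level JSON value is not an object"
        if attempt < attempts - 1:
            time.sleep(backoff_s * 2 ** attempt)  # exponential backoff
    raise ValueError(f"no valid JSON after {attempts} attempts: {last_error}")
```

Raising after the last attempt, instead of returning a partial object, keeps broken reports out of downstream automation.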

FAQ

Is DeepSeek good for coding?

Yes. DeepSeek can help with code explanation, debugging, refactoring, tests, code review, JSON reports, Tool Calls, FIM code infilling, and coding-agent workflows. Always verify generated code with tests and human review.

Which DeepSeek model should I use for coding now?

Use deepseek-v4-flash for most coding help, summaries, refactoring, tests, and high-volume workflows. Use deepseek-v4-pro for hard debugging, multi-file reasoning, architecture tradeoffs, and agentic coding workflows.

Should I still use deepseek-chat or deepseek-reasoner for coding?

Not for new code examples. They are legacy compatibility aliases. deepseek-chat currently routes to deepseek-v4-flash non-thinking mode, and deepseek-reasoner currently routes to deepseek-v4-flash thinking mode.
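If existing configuration files still contain the legacy names, a small lookup can migrate them to explicit V4 model IDs plus a thinking-mode flag. This Python sketch encodes only the routing stated in this guide (last verified April 24, 2026); how you actually switch thinking mode on or off in a request is left to the Thinking Mode docs and is not shown here.

```python
from typing import NamedTuple


class ModelChoice(NamedTuple):
    model: str
    thinking: bool  # whether to request thinking mode (see the Thinking Mode docs)


# Current alias routing as described in this guide. DeepSeek says both
# aliases will be retired after July 24, 2026, 15:59 UTC.
LEGACY_ALIASES = {
    "deepseek-chat": ModelChoice("deepseek-v4-flash", thinking=False),
    "deepseek-reasoner": ModelChoice("deepseek-v4-flash", thinking=True),
}
CURRENT_MODELS = {"deepseek-v4-flash", "deepseek-v4-pro"}


def migrate_model_name(name: str, thinking: bool = False) -> ModelChoice:
    """Map a configured model name onto a current V4 model ID and thinking flag.

    Legacy aliases keep the mode they already implied; the thinking argument
    only applies to names that are already current V4 IDs.
    """
    if name in LEGACY_ALIASES:
        return LEGACY_ALIASES[name]
    if name in CURRENT_MODELS:
        return ModelChoice(name, thinking)
    raise ValueError(f"unknown DeepSeek model name {name!r}; check the models page")
```

Failing loudly on unknown names is intentional: a silently passed-through typo is harder to spot than a startup error.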

Should I use deepseek-v4-flash or deepseek-v4-pro for coding?

Use deepseek-v4-flash when speed and cost matter most. Use deepseek-v4-pro when the coding task needs deeper reasoning, complex debugging, long-context analysis, tool planning, or better agentic behavior.

Does DeepSeek support FIM code completion?

Yes. DeepSeek documents FIM Completion as a beta feature. It requires https://api.deepseek.com/beta, uses the completions endpoint, and has a 4K maximum-token limit for FIM completion. Current V4 docs list FIM as non-thinking-mode-only.
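A FIM request sends the code before the gap as prompt and the code after it as suffix to the beta completions endpoint. The Python sketch below uses only the standard library so the request body is visible; it assumes the model runs in non-thinking mode by default, interprets the documented "4K" limit as 4096 tokens, and reads the API key from a DEEPSEEK_API_KEY environment variable, all of which you should confirm against the FIM Completion docs.

```python
import json
import os
import urllib.request

FIM_URL = "https://api.deepseek.com/beta/completions"  # beta base URL + completions endpoint
FIM_MAX_TOKENS = 4096  # assumed value for the documented "4K" FIM limit


def build_fim_request(
    prefix: str,
    suffix: str,
    api_key: str,
    max_tokens: int = 256,
    model: str = "deepseek-v4-flash",
) -> urllib.request.Request:
    """Build a FIM request: the model writes the code between prefix and suffix."""
    if not 0 < max_tokens <= FIM_MAX_TOKENS:
        raise ValueError(f"max_tokens must be between 1 and {FIM_MAX_TOKENS} for FIM")
    body = {
        "model": model,
        "prompt": prefix,  # code before the gap
        "suffix": suffix,  # code after the gap
        "max_tokens": max_tokens,
    }
    return urllib.request.Request(
        FIM_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json", "Authorization": f"Bearer {api_key}"},
        method="POST",
    )


def fill_in_middle(prefix: str, suffix: str) -> str:
    """Send the request and return the generated middle section."""
    request = build_fim_request(prefix, suffix, os.environ["DEEPSEEK_API_KEY"])
    with urllib.request.urlopen(request, timeout=60) as response:
        payload = json.load(response)
    return payload["choices"][0]["text"]
```

Separating request construction from sending makes the body easy to unit-test without network access or an API key.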

What is Chat Prefix Completion for coding?

Chat Prefix Completion is a beta feature where the last message is an assistant prefix, such as ```python, and the model completes from that prefix. It requires the beta base URL and prefix=True on the final assistant message.
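A Chat Prefix request ends with an assistant message marked as a prefix, so the model continues from an opening code fence. This standard-library Python sketch builds that request against the beta chat completions endpoint; the stop sequence on the closing fence, the DEEPSEEK_API_KEY variable name, and the default model are assumptions to check against the Chat Prefix Completion docs.

```python
import json
import os
import urllib.request

PREFIX_URL = "https://api.deepseek.com/beta/chat/completions"  # beta base URL required
CODE_FENCE = "`" * 3  # built this way so the string cannot close a Markdown fence


def build_prefix_request(
    task: str, api_key: str, model: str = "deepseek-v4-flash"
) -> urllib.request.Request:
    """Build a chat request whose final assistant message is a prefix to continue."""
    body = {
        "model": model,
        "messages": [
            {"role": "user", "content": task},
            # prefix=True marks this assistant text as the start of the reply.
            {"role": "assistant", "content": CODE_FENCE + "python\n", "prefix": True},
        ],
        # Stop at the closing fence so the reply is just the code body.
        "stop": [CODE_FENCE],
    }
    return urllib.request.Request(
        PREFIX_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json", "Authorization": f"Bearer {api_key}"},
        method="POST",
    )


def complete_code(task: str) -> str:
    """Send the request and return the generated continuation."""
    request = build_prefix_request(task, os.environ["DEEPSEEK_API_KEY"])
    with urllib.request.urlopen(request, timeout=120) as response:
        payload = json.load(response)
    return payload["choices"][0]["message"]["content"]
```

Starting the reply inside a Python fence removes conversational preamble, which is useful when the output is written straight into a file or editor buffer.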

When should I use thinking mode for coding?

Use thinking mode for hard debugging, algorithmic reasoning, complex code review, architecture tradeoffs, multi-file analysis, and agentic tool workflows. Avoid it for small formatting, syntax, or short autocomplete tasks.

Can DeepSeek return structured JSON for code review reports?

Yes. Use JSON Output with response_format={"type":"json_object"}, explicitly ask for JSON in the prompt, provide a schema example, set enough max_tokens, and validate parsed output before using it in automation.
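Those steps fit in two small functions: one that builds a JSON Output request body with a schema example in the prompt, and one that validates the parsed report before automation touches it. In this Python sketch the severity scale, the 4,000-token budget, and the field types are hypothetical choices based on the review fields named in the workflow table (severity, file, line, issue, suggested fix).

```python
import json

# Hypothetical severity scale and field types for a pull request review report.
SEVERITIES = {"low", "medium", "high", "critical"}
REQUIRED_FIELDS = {"severity": str, "file": str, "line": int, "issue": str, "suggested_fix": str}

# JSON Output expects the prompt itself to ask for JSON and show the shape.
SYSTEM_PROMPT = (
    "You review pull request diffs. Reply with JSON only, in this shape:\n"
    '{"findings": [{"severity": "high", "file": "app/db.py", "line": 42, '
    '"issue": "SQL built with string formatting", '
    '"suggested_fix": "Use a parameterized query"}]}'
)


def build_review_body(diff: str, model: str = "deepseek-v4-flash") -> dict:
    """Request body for a chat completion with JSON Output enabled."""
    return {
        "model": model,
        "messages": [
            {"role": "system", "content": SYSTEM_PROMPT},
            {"role": "user", "content": diff},
        ],
        "response_format": {"type": "json_object"},
        "max_tokens": 4000,  # hypothetical budget; too small a value truncates the JSON
    }


def parse_review_report(content: str) -> list[dict]:
    """Parse and validate the model's reply before any automation uses it."""
    if not content or not content.strip():
        raise ValueError("empty JSON response")
    data = json.loads(content)  # json.JSONDecodeError is a ValueError subclass
    if not isinstance(data, dict) or not isinstance(data.get("findings"), list):
        raise ValueError("expected an object with a 'findings' list")
    for i, finding in enumerate(data["findings"]):
        if not isinstance(finding, dict):
            raise ValueError(f"finding {i} is not an object")
        for name, expected in REQUIRED_FIELDS.items():
            value = finding.get(name)
            # bool is a subclass of int, so reject it explicitly.
            if not isinstance(value, expected) or isinstance(value, bool):
                raise ValueError(f"finding {i}: {name!r} missing or not {expected.__name__}")
        if finding["severity"] not in SEVERITIES:
            raise ValueError(f"finding {i}: unknown severity {finding['severity']!r}")
    return data["findings"]
```

Rejecting the whole report on the first invalid finding is a conservative choice; a softer variant could drop bad findings and log them instead.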

Can DeepSeek coding agents use tools?

Yes. Current V4 models support Tool Calls. The model proposes tool calls, but your application executes them. Always validate tool arguments before reading files, running tests, calling APIs, or changing data.
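Because the application, not the model, executes each tool, every proposed call should pass an allowlist and argument check first. This Python sketch validates one OpenAI-format tool call (a function name plus a JSON-encoded arguments string); the read_file and run_tests tools, the allowed test targets, and the path rules are hypothetical examples of the kind of checks your own tools need.

```python
import json
from pathlib import PurePosixPath

# Hypothetical tools this application exposes to the model.
ALLOWED_TOOLS = {"read_file", "run_tests"}
ALLOWED_TEST_TARGETS = {"unit", "integration"}


def validate_tool_call(tool_call: dict) -> tuple[str, dict]:
    """Validate one OpenAI-format tool call before the application runs it.

    Returns (tool_name, cleaned_arguments) or raises if the call is unsafe.
    """
    function = tool_call.get("function") or {}
    name = function.get("name")
    if not isinstance(name, str) or name not in ALLOWED_TOOLS:
        raise PermissionError(f"tool {name!r} is not on the allowlist")

    # Arguments arrive as a model-generated JSON string; never trust them.
    try:
        args = json.loads(function.get("arguments") or "{}")
    except json.JSONDecodeError as exc:
        raise ValueError(f"tool arguments are not valid JSON: {exc}") from exc
    if not isinstance(args, dict):
        raise ValueError("tool arguments must be a JSON object")

    if name == "read_file":
        raw_path = args.get("path")
        if not isinstance(raw_path, str) or not raw_path:
            raise ValueError("read_file needs a non-empty 'path' string")
        path = PurePosixPath(raw_path)
        # Only relative paths that cannot climb out of the repository root.
        if path.is_absolute() or ".." in path.parts:
            raise PermissionError(f"path {raw_path!r} escapes the repository")
        return name, {"path": str(path)}

    # name == "run_tests"
    target = args.get("target")
    if not isinstance(target, str) or target not in ALLOWED_TEST_TARGETS:
        raise ValueError(f"unknown test target {target!r}")
    return name, {"target": target}
```

Returning cleaned arguments, rather than passing the raw model output through, means the code that actually reads files or runs tests only ever sees values that passed these checks.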

Can I use DeepSeek with Claude Code?

DeepSeek documents a Claude Code integration path through its Anthropic-compatible API. The current setup uses V4 model names such as deepseek-v4-pro and deepseek-v4-flash. Verify actual model routing before relying on custom model names.

Is local DeepSeek better than the API for coding?

Local DeepSeek models can help with private or offline experiments, but setup, hardware, runtime, quantization, and model choice matter. The hosted API is usually easier for production coding tools, while local models are useful when privacy or offline control is the priority.

Can I trust DeepSeek-generated code?

Do not trust generated code blindly. Run tests, type checks, linting, security review, dependency review, and human review before using generated code in production.

Is Chat-Deep.ai the official DeepSeek website?

No. Chat-Deep.ai is an independent DeepSeek guide and browser access site. It is not affiliated with DeepSeek, DeepSeek.com, the official DeepSeek app, or the official DeepSeek developer platform.

Final recommendation

For current hosted coding workflows, use deepseek-v4-flash as the default model for ordinary developer tasks and deepseek-v4-pro for hard reasoning, multi-file debugging, architecture decisions, coding agents, and long-context analysis. Keep deepseek-chat and deepseek-reasoner only as migration aliases.

For code completion inside existing files, use FIM Completion Beta. For code-shaped answers, use Chat Prefix Completion Beta. For structured reports, use JSON Output. For coding agents, use Tool Calls with strict validation and clear permission controls.

Most importantly: treat AI-generated code as a draft. DeepSeek can speed up thinking and implementation, but tests, security review, and human judgment remain mandatory for production software.

Official sources and last verified

Last verified: April 24, 2026. DeepSeek model names, pricing, coding-agent support, FIM behavior, Chat Prefix behavior, Tool Calls, JSON Output, context limits, and alias deprecation dates can change. Use the official sources below before shipping production code or publishing customer-facing API documentation.

- DeepSeek-V4 Preview Release

- DeepSeek API Quick Start

- DeepSeek Models & Pricing

- DeepSeek Create Chat Completion API Reference

- DeepSeek Temperature Parameter Guide

- DeepSeek FIM Completion Beta

- DeepSeek Chat Prefix Completion Beta

- DeepSeek Integrate with Coding Agents

- DeepSeek Anthropic API Compatibility

- DeepSeek JSON Output

- DeepSeek Tool Calls

- DeepSeek Thinking Mode

- DeepSeek Context Caching

- DeepSeek Token & Token Usage

- DeepSeek Error Codes